Dario Amodei’s High‑Wire Act: Pentagon Clash, White House Talks, and the Rise of ‘Mythos’

Under siege and in demand: How Anthropic CEO Dario Amodei is reshaping AI policy, product strategy, and geopolitics in 2026.

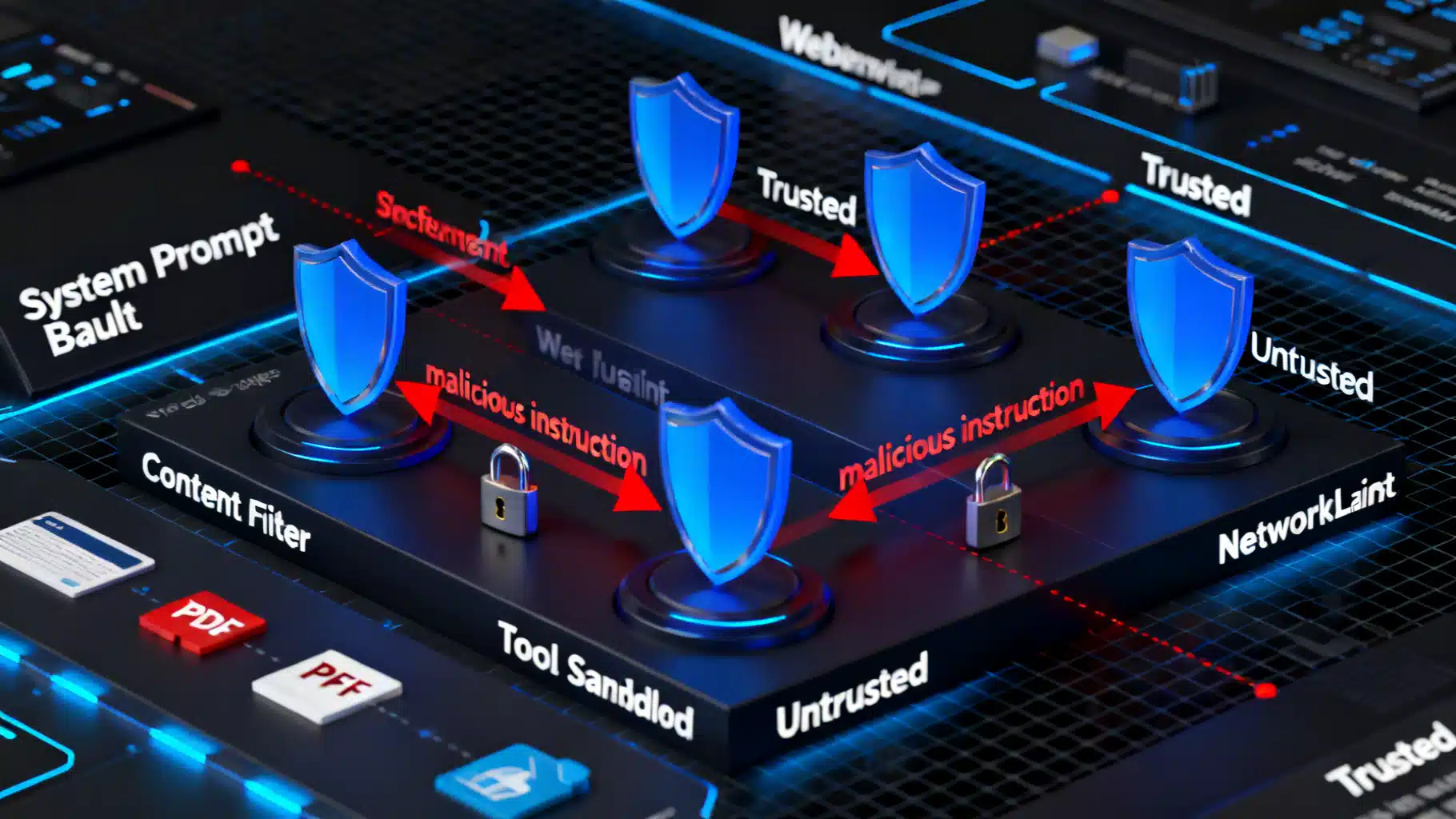

A practical blueprint for preventing prompt injection in LLM apps: threat models, mitigations, code patterns, testing, and operations.

A practical tutorial on reasoning models and chain-of-thought: safe prompting, self-consistency, tree-of-thought, tooling, and evaluation patterns.

A Fortune-confirmed leak reveals Anthropic testing “Claude Mythos,” a new tier above Opus with major cyber gains—and risks—now under tight early access.

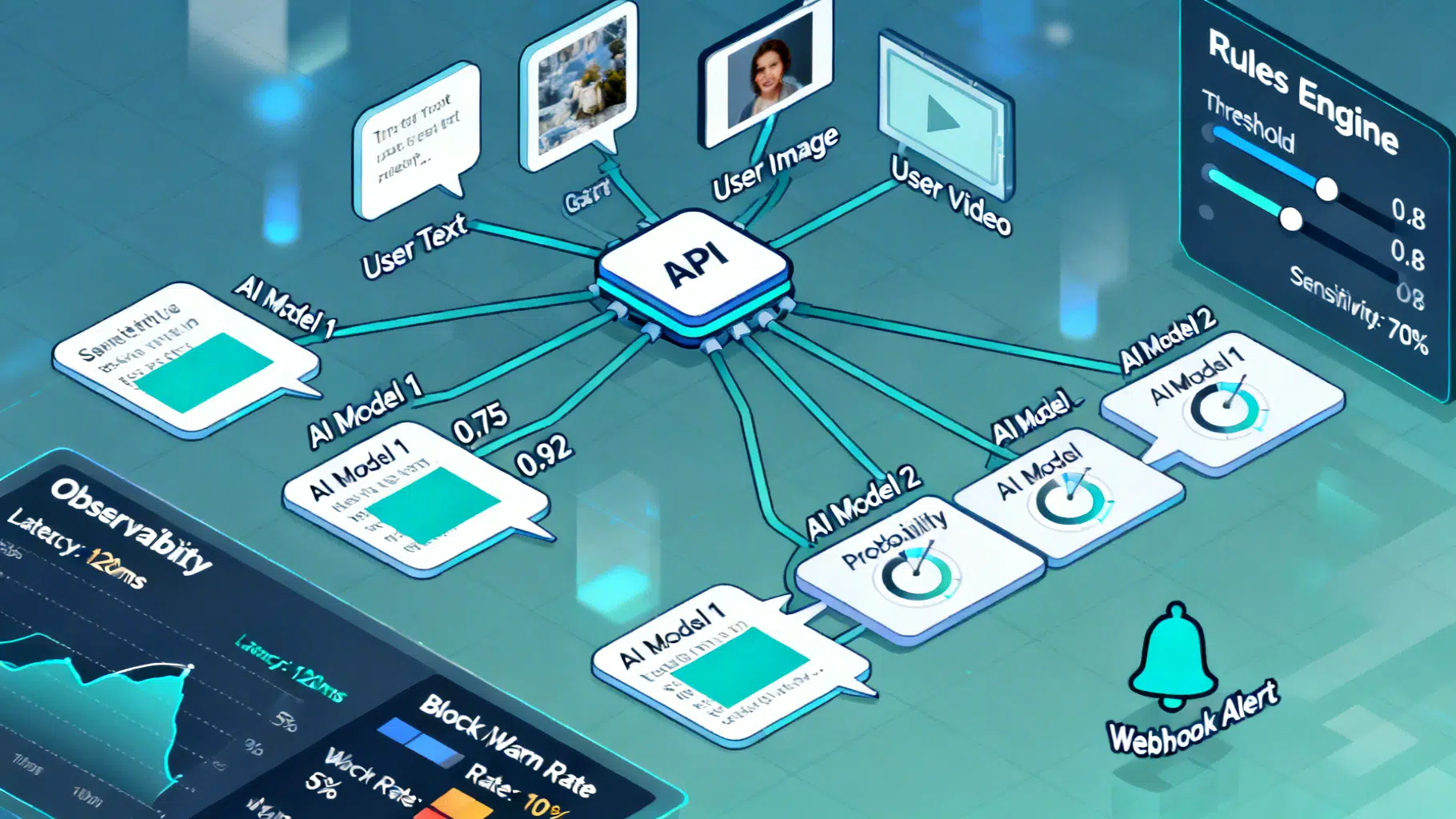

Design and implement a reliable AI content moderation API: taxonomy, architecture, code, policy config, thresholds, privacy, and evaluation best practices.

A practical, end-to-end guide to reducing AI hallucinations with data, training, retrieval, decoding, and verification techniques.