LLM Context Window Optimization: Strategies for Speed, Cost, and Accuracy

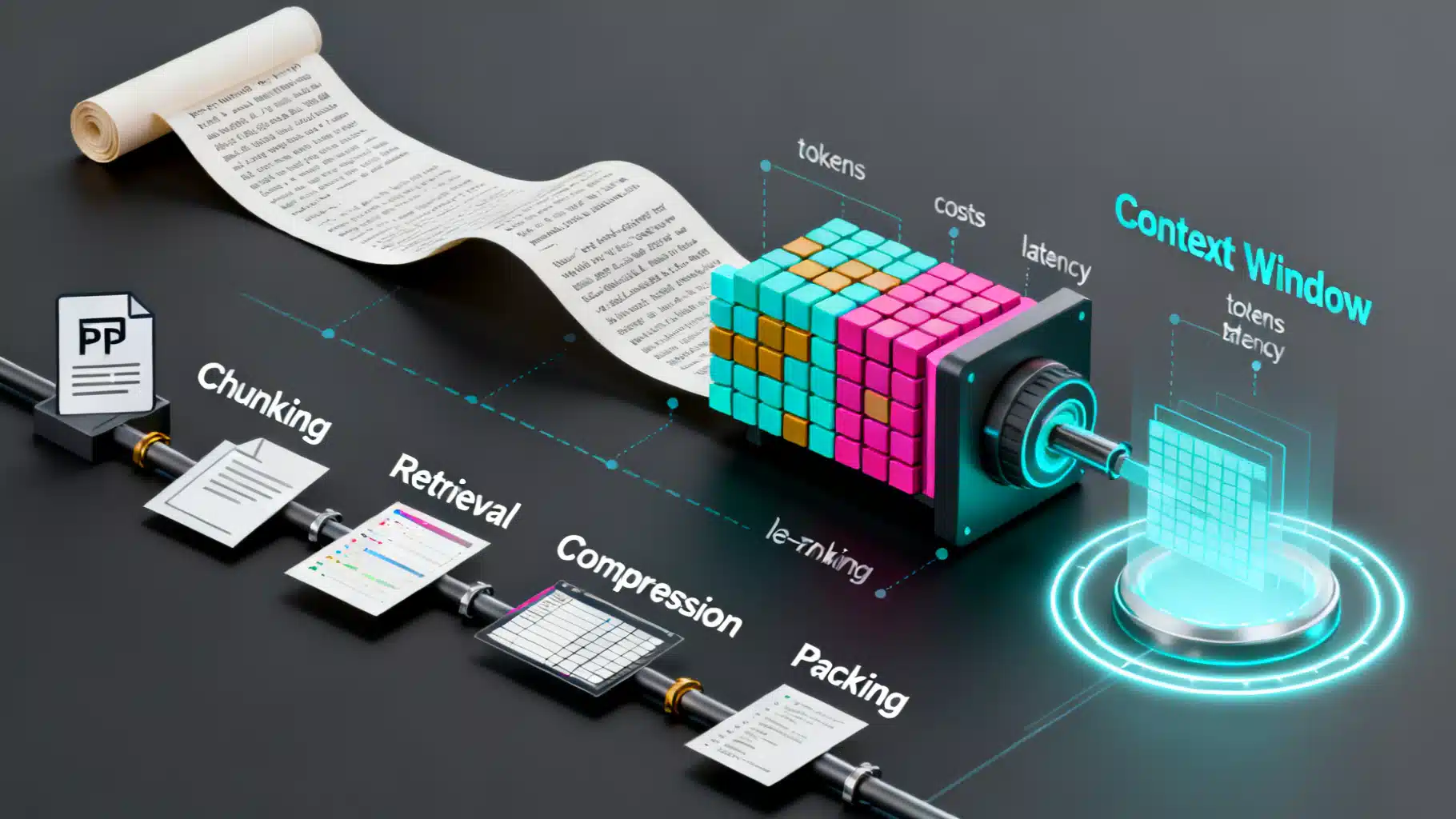

Practical strategies for optimizing LLM context windows: reduce cost and latency while preserving accuracy with retrieval-augmented generation (RAG), chunking, compression, caching, and evaluation.

ASOasis


8 min read