LLM Context Window Optimization: Strategies for Speed, Cost, and Accuracy

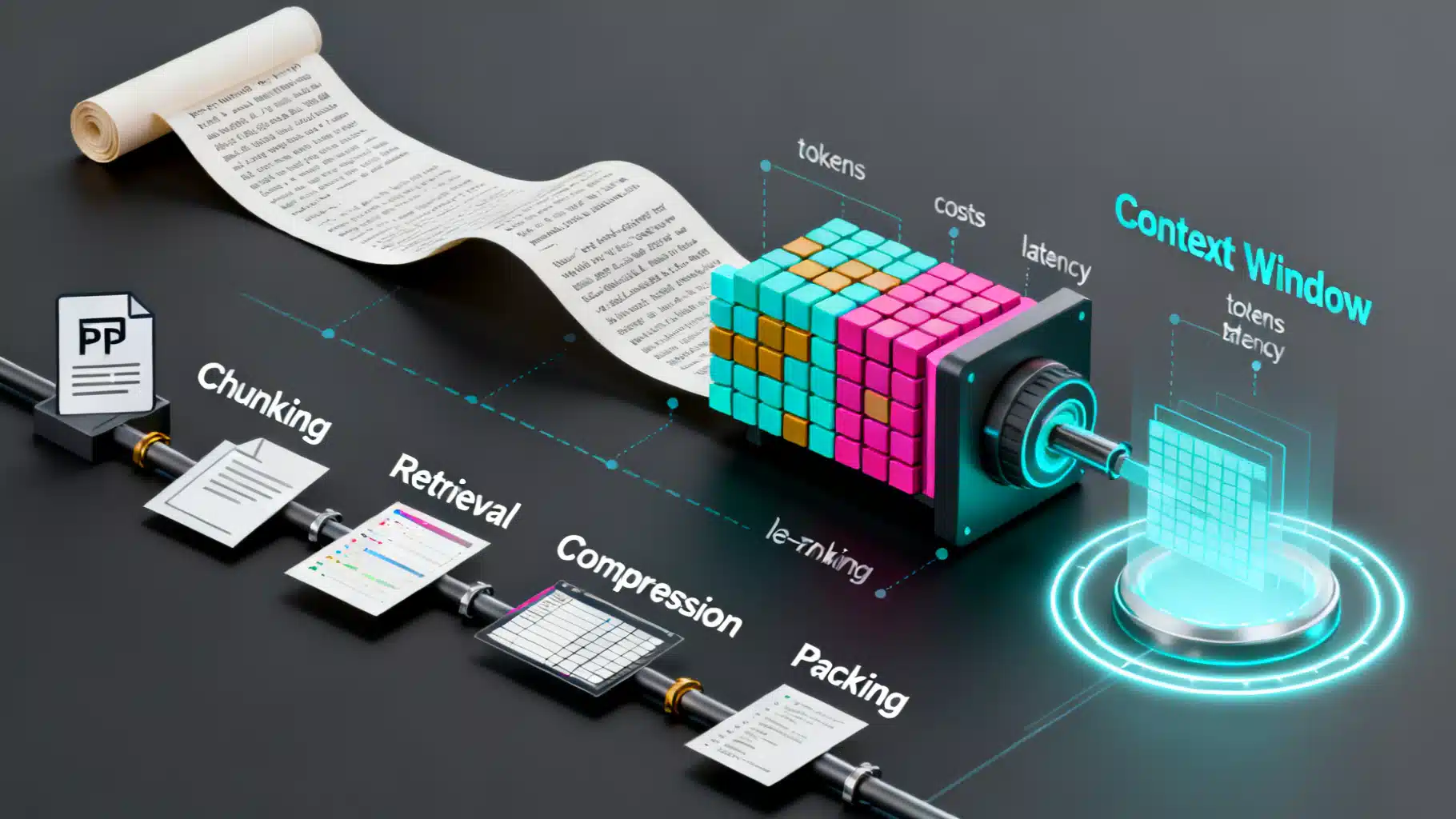

Practical strategies to optimize LLM context windows—reduce cost and latency while preserving accuracy with RAG, chunking, compression, caching, and evaluation.


Overview

Large Language Models (LLMs) thrive on context—the tokens you send as instructions, data, and conversation history. But context comes at a price: larger prompts mean higher latency, higher cost, and a higher risk that the model “misses” key facts (especially in the middle of very long inputs). Context window optimization is the practice of delivering only the most useful tokens to the model—structured and ordered to maximize answer quality per token.

This article distills proven strategies for reducing token footprint while preserving or improving accuracy. You’ll learn how to budget tokens, chunk and retrieve data, compress and order content, and evaluate your gains with simple, repeatable tests.

What a Context Window Really Is

- A context window is the maximum number of tokens an LLM can attend to at once (input plus output).

- Tokens are subword units. English prose often averages roughly 1.3 tokens per word (about 0.75 words per token); code and some languages can be more token-dense.

- The model’s attention budget is finite: feeding more tokens dilutes attention and increases compute. Bigger windows are not a substitute for good selection and structure.

Implications:

- Cost typically scales with input and output tokens. Optimize both.

- Latency increases with prompt length; long contexts slow time-to-first-token and overall generation.

- Accuracy can degrade if key evidence is buried or if irrelevant text distracts the model.

The Token Budget Mindset

Treat tokens as a hard budget you plan, monitor, and enforce.

- Define success and constraints

  - Quality: exact answer correctness, citation faithfulness, code tests passing, etc.

  - Limits: maximum input tokens, maximum output tokens, latency SLOs, cost ceiling per request.

- Track token usage

  - Count tokens for: system instructions, tools/schema, conversation history, retrieved docs, and the user’s query.

  - Add a safety margin (e.g., 10–20%) for the model’s completion.

- Automate enforcement

  - If projected tokens exceed limits, trigger compression, drop low-utility docs, or ask for user clarification before proceeding.
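The accounting step above can be sketched in a few lines of Python. This is a minimal illustration, not a definitive implementation: `approx_tokens`, `BudgetReport`, and `project_budget` are hypothetical names, and the word-based counter is only a rough stand-in for your model's real tokenizer.

```python
from dataclasses import dataclass
from typing import Callable, Dict


def approx_tokens(text: str) -> int:
    """Rough estimate (assumption): about 1.3 tokens per whitespace-separated word.

    Swap in the tokenizer that matches your model for real accounting.
    """
    words = len(text.split())
    return (words * 13 + 9) // 10  # integer ceiling of 1.3 * words


@dataclass
class BudgetReport:
    per_component: Dict[str, int]  # token count for each prompt component
    input_tokens: int              # projected total input tokens
    input_budget: int              # window size minus the completion reserve

    @property
    def over_by(self) -> int:
        """Tokens that must be cut before the request is safe to send."""
        return max(0, self.input_tokens - self.input_budget)


def project_budget(
    components: Dict[str, str],
    context_window: int,
    reserved_completion: int,
    count: Callable[[str], int] = approx_tokens,
) -> BudgetReport:
    """Count every component and compare the total against the input budget."""
    per_component = {name: count(text) for name, text in components.items()}
    return BudgetReport(
        per_component=per_component,
        input_tokens=sum(per_component.values()),
        input_budget=context_window - reserved_completion,
    )
```

When `over_by` is greater than zero, the caller can trigger compression, drop low-utility documents, or ask the user to narrow the request. The per-component counts are also a natural payload for logging.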

Retrieval: Selecting Only What Matters

Retrieval-augmented generation (RAG) replaces “dump everything” with “fetch only what’s relevant.” Strong retrieval beats giant prompts.

Key patterns:

- Chunking: Split sources into semantically coherent chunks. Typical starting ranges: 200–400 tokens for prose; shorter for code/API references.

- Overlap: 10–20% token overlap reduces boundary misses without blowing up index size.

- Embedding-based recall: Use vector search to shortlist candidates; calibrate K to your budget.

- Re-ranking: Use a cross-encoder or lightweight LLM pass to re-rank top-K chunks before packing.

- Deduplication: Drop near-duplicate chunks to save tokens.
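As a rough sketch of the chunking and overlap patterns, the function below slides a fixed-size window over an already tokenized document. The name `chunk_with_overlap` and the defaults (300 tokens, 45 tokens of overlap, about 15%) are illustrative choices taken from the ranges above, not recommendations from any particular library.

```python
from typing import List, Sequence


def chunk_with_overlap(tokens: Sequence, size: int = 300, overlap: int = 45) -> List[Sequence]:
    """Split a token sequence into windows of `size` that share `overlap` tokens."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    step = size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):
            break  # this window already reached the end of the document
    return chunks
```

This version ignores semantic boundaries; in practice you would likely split on headings or paragraphs first and fall back to a sliding window only for oversized sections.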

Pseudocode:

    # Pseudocode: retrieval + packing pipeline
    MAX_CONTEXT = 6000          # total window: input plus completion
    RESERVED_COMPLETION = 800   # tokens held back for the model's answer
    BUDGET = MAX_CONTEXT - RESERVED_COMPLETION

    query = user_input()
    q_tokens = count_tokens(query)

    candidates = vector_search(query, top_k=40)
    ranked = cross_rerank(query, candidates)  # better precision

    context = []
    used = count_tokens(system_prompt) + count_tokens(schema) + q_tokens

    for chunk in ranked:
        t = count_tokens(chunk.text)
        if used + t > BUDGET:
            continue  # does not fit; a later, smaller chunk still might
        if is_duplicate(chunk, context):
            continue
        context.append(chunk.text)
        used += t

    prompt = assemble(system_prompt, schema, query, context)
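The pseudocode leaves `is_duplicate` undefined. One possible way to fill it in, assuming plain-text chunks, is Jaccard similarity over word shingles. The 0.8 threshold and the three-word shingle size are illustrative assumptions you would tune on your own data, and this version takes the candidate's text rather than a chunk object.

```python
from typing import Iterable, Set, Tuple


def shingles(text: str, n: int = 3) -> Set[Tuple[str, ...]]:
    """Return the set of n-word shingles in lowercased text."""
    words = text.lower().split()
    if len(words) < n:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a: Set, b: Set) -> float:
    """Size of the intersection divided by size of the union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def is_duplicate(candidate: str, kept_texts: Iterable[str], threshold: float = 0.8) -> bool:
    """True if the candidate is a near-copy of any text already packed."""
    cand = shingles(candidate)
    return any(jaccard(cand, shingles(kept)) >= threshold for kept in kept_texts)
```

Shingle overlap catches copy-paste duplicates and light edits. Paraphrased duplicates would need an embedding-similarity check instead.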

Compression: Keep Signal, Drop Noise

When the right chunks are still too big, compress them. Aim for selective, loss-aware compression—retain citations, numbers, code semantics, and entity names.

Compression strategies:

- Extractive: Keep only sentences that directly answer the query; use keyword/entity filters.

- Abstractive: Summarize paragraphs into bullet points that preserve facts and units.

- Structured: Normalize to JSON/YAML with fields like claim, evidence, source_id.

- Keyphrase maps: Title → key points → supporting quotes with character spans.

- Code-aware: Collapse comments, keep function signatures and critical logic; preserve line numbers for traceability.
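To make the extractive strategy concrete, here is a small sketch that keeps only the sentences sharing terms with the query. The function name, the stopword list, and the regex-based sentence splitter are all simplifying assumptions; a production system would more likely use a proper sentence segmenter and a relevance model.

```python
import re

# Tiny illustrative stopword list (assumption); extend it for real use.
STOPWORDS = {"a", "an", "and", "are", "for", "how", "in", "is", "of", "on",
             "the", "to", "what", "which"}


def extractive_compress(text: str, query: str, max_sentences: int = 3) -> str:
    """Keep up to `max_sentences` sentences with the most query-term overlap."""
    query_terms = {w for w in re.findall(r"[a-z0-9]+", query.lower())
                   if w not in STOPWORDS}
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    scored = []
    for idx, sentence in enumerate(sentences):
        terms = set(re.findall(r"[a-z0-9]+", sentence.lower()))
        overlap = len(terms & query_terms)
        if overlap:
            scored.append((overlap, idx, sentence))
    # Pick the best-scoring sentences, then restore their original order.
    top = sorted(scored, key=lambda item: (-item[0], item[1]))[:max_sentences]
    return " ".join(s for _, _, s in sorted(top, key=lambda item: item[1]))
```

Because sentences are copied verbatim, numbers, units, and identifiers survive untouched, which is the main advantage of extractive over abstractive compression.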

Prompt pattern for compression:

You are a compression assistant. Goal: minimize tokens while preserving facts relevant to the query.

- Keep identifiers, numbers, units, citations.

- Remove greetings, verbose examples, and unrelated sections.

- Output JSON with fields: claim, evidence_snippets (≤3), source_id.

Ordering and the “Lost in the Middle” Effect

Long prompts can dilute attention to middle tokens. Practical mitigations:

- Priority ordering: Place the single most relevant chunk first and the second-most relevant last; fill the positions in between with the remaining chunks in descending order of relevance.

- Signposting: Use clear section headers and numbered lists. Models track structure better than walls of text.

- Beacons: Repeat ultra-critical facts succinctly at the top (and optionally at the end) to reinforce recall.
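One reading of the priority-ordering rule, sketched in Python. The input is assumed to be a list already sorted from most to least relevant; `order_for_context` is a hypothetical helper name.

```python
from typing import List, Sequence


def order_for_context(ranked: Sequence) -> List:
    """Put the best chunk first, the second-best last, and the rest in between."""
    if len(ranked) < 3:
        return list(ranked)  # with one or two chunks the order is already right
    return [ranked[0], *ranked[2:], ranked[1]]
```

For example, chunks ranked `a, b, c, d` are packed as `a, c, d, b`, so the two strongest pieces of evidence sit at the edges of the context.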

Prompt Design for Density

Well-structured prompts pack more meaning per token.

- Role and goal first: a one-sentence task statement beats a long essay.

- Constraints before references: specify output schema and evaluation criteria upfront.

- Minimal scaffolding: use compact bullets, numbered steps, and consistent delimiters.

- Schema-first outputs: ask for JSON/YAML with short keys to reduce output tokens and make parsing deterministic.

Example skeleton:

SYSTEM: You are a precise analyst. Obey the schema and cite sources.

Task: Answer the user’s query using only the provided context.

Constraints:

- If unsure, say "insufficient evidence".

- Output JSON: {"answer": str, "sources": [id], "confidence": 0–1}

Context:

[DOC id=17]

- Key point A …

[DOC id=22]

- Key point B …

User query: <query>
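A schema-first prompt pays off only if the response is checked against the schema. The sketch below validates the JSON shape used in the skeleton; `parse_answer` and its `known_ids` parameter are hypothetical, and the exact rules (for instance, whether an empty source list is acceptable) are assumptions to adapt.

```python
import json
from typing import Any, Dict, Iterable


def parse_answer(raw: str, known_ids: Iterable[Any]) -> Dict[str, Any]:
    """Parse the model's reply and enforce the answer/sources/confidence schema."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")
    missing = {"answer", "sources", "confidence"} - data.keys()
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    if not isinstance(data["answer"], str):
        raise ValueError("answer must be a string")
    if not isinstance(data["sources"], list):
        raise ValueError("sources must be a list of document ids")
    known = list(known_ids)
    unknown = [s for s in data["sources"] if s not in known]
    if unknown:
        # A cited id that was never in the context is a likely hallucination.
        raise ValueError(f"unknown source ids: {unknown}")
    conf = data["confidence"]
    if isinstance(conf, bool) or not isinstance(conf, (int, float)) or not 0 <= conf <= 1:
        raise ValueError("confidence must be a number between 0 and 1")
    return data
```

A `ValueError` here is a natural trigger for a retry with tighter context or for returning "insufficient evidence" to the caller.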

Caching and Reuse

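- Prefix/prompt caching: If your system prompt, tool specs, or policies rarely change, keep them byte-identical at the very start of every prompt so that provider-side prefix caching (or, for self-hosted models, reuse of the cached attention state) can skip recomputing them. This reduces latency, and often cost, for repeated prefixes.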

- Summarized memory: In multi-turn sessions, periodically condense history into a 150–400 token summary and keep detailed turns in a retriever store.

- Deterministic boilerplate: Replace verbose instructions with a stable, short tag (e.g., “POLICY_V3”) whose full text the system injects server-side once.
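Prefix caching generally depends on the start of the prompt being identical across requests, so it helps to keep static material first and everything request-specific after it. The sketch below shows that layout plus a fingerprint you can log to notice accidental prefix changes. The names and the prefix text are illustrative, and whether a given provider actually caches the prefix depends on that provider.

```python
import hashlib
from typing import List

# Static material only: no timestamps, user names, or per-request values.
STATIC_PREFIX = (
    "SYSTEM: You are a precise analyst. Obey the schema and cite sources.\n"
    "Constraints:\n"
    '- If unsure, say "insufficient evidence".'
)


def build_prompt(static_prefix: str, context_blocks: List[str], query: str) -> str:
    """Place the stable prefix first and all dynamic content after it."""
    dynamic = "\n\n".join(context_blocks)
    return f"{static_prefix}\n\nContext:\n{dynamic}\n\nUser query: {query}"


def prefix_fingerprint(static_prefix: str) -> str:
    """Short hash of the prefix; a changed value means cached prefixes are stale."""
    return hashlib.sha256(static_prefix.encode("utf-8")).hexdigest()[:12]
```

Logging the fingerprint with each request makes it easy to correlate a drop in cache hits with an unintended edit to the system prompt.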

Guardrails: Truncation and Fallbacks

- Hard caps: Refuse to exceed token limits; ask for narrower scope or run a two-pass flow (retrieve → clarify → answer).

- Smart truncation: Drop lowest-utility context first (low rank, duplicates, off-topic).

- Retry with compression: If the first attempt risks overflow, compress top chunks and rebuild the prompt.
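Smart truncation can be as simple as sorting by utility and dropping from the bottom until the context fits. In this sketch each chunk is a `(score, text)` pair and `count` is whatever token counter you use; both shapes are assumptions made for illustration.

```python
from typing import Callable, List, Tuple

Chunk = Tuple[float, str]  # (utility score, text)


def truncate_to_budget(
    chunks: List[Chunk], budget: int, count: Callable[[str], int]
) -> Tuple[List[Chunk], List[str]]:
    """Drop the lowest-scored chunks until the total token count fits the budget.

    Returns the kept chunks (highest score first) and the dropped texts.
    """
    kept = sorted(chunks, key=lambda c: c[0], reverse=True)
    total = sum(count(text) for _, text in kept)
    dropped = []
    while kept and total > budget:
        _, text = kept.pop()  # the last element has the lowest score
        total -= count(text)
        dropped.append(text)
    return kept, dropped
```

Returning the dropped texts lets the caller decide whether to compress them and retry instead of discarding them outright.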

Evaluation: Prove It with Small, Focused Tests

Quantify the win, not just the intuition.

Suggested tests:

- Retrieval quality: For a labeled set of questions and supporting passages, measure recall@K and MRR (mean reciprocal rank) before/after re-ranking and compression.

- Needle-in-haystack: Plant atomic facts in long distractor text; confirm the model cites and uses them.

- Answer quality: Use exact match/F1 for QA, unit tests for code, or contract checks for structured outputs.

- Cost/latency: Track tokens in/out and end-to-end latency; require improvements without quality regressions.
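For reference, the two retrieval metrics named above are short enough to write by hand. The sketch assumes each query has a ranked list of retrieved document IDs and a set of relevant IDs; the function names are illustrative.

```python
from typing import Hashable, Iterable, Sequence, Set, Tuple


def recall_at_k(retrieved: Sequence[Hashable], relevant: Set[Hashable], k: int) -> float:
    """Fraction of the relevant documents that appear in the top k results."""
    if not relevant:
        return 0.0
    hits = len(set(retrieved[:k]) & set(relevant))
    return hits / len(set(relevant))


def reciprocal_rank(retrieved: Sequence[Hashable], relevant: Set[Hashable]) -> float:
    """1 / rank of the first relevant result, or 0.0 if none was retrieved."""
    relevant = set(relevant)
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


def mean_reciprocal_rank(runs: Iterable[Tuple[Sequence[Hashable], Set[Hashable]]]) -> float:
    """Average reciprocal rank over (retrieved, relevant) pairs, one per query."""
    runs = list(runs)
    if not runs:
        return 0.0
    return sum(reciprocal_rank(ret, rel) for ret, rel in runs) / len(runs)
```

Running both metrics before and after a pipeline change (re-ranking, a new chunk size) shows whether the change actually moved relevant passages into the budgeted top K.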

A simple harness:

    def evaluate(queries, ground_truth):
        results = []
        for q in queries:
            prompt, stats = build_prompt(q)  # returns prompt + token counts
            answer = call_llm(prompt)
            score = grade(answer, ground_truth[q.id])
            results.append({"score": score, **stats})
        return aggregate(results)
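The harness leaves `grade` open. For short-answer QA, one common choice is exact match plus token-level F1; the version below is a simplified sketch (ASCII-only normalization, no article stripping), so treat the normalization rules as assumptions.

```python
import re
from collections import Counter
from typing import List


def normalize(text: str) -> List[str]:
    """Lowercase, replace punctuation with spaces, and split into tokens."""
    return re.sub(r"[^a-z0-9\s]", " ", text.lower()).split()


def exact_match(prediction: str, gold: str) -> bool:
    """True if the answers are identical after normalization."""
    return normalize(prediction) == normalize(gold)


def token_f1(prediction: str, gold: str) -> float:
    """Harmonic mean of token precision and recall against the gold answer."""
    pred, ref = normalize(prediction), normalize(gold)
    if not pred or not ref:
        return float(pred == ref)  # both empty counts as a match
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)
```

Exact match is strict and easy to interpret; F1 gives partial credit when the model wraps the right answer in extra words, which is common when output is not schema-constrained.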

Practical Chunking Guidelines

- Split by semantic boundaries: headings, paragraphs, function/class scopes.

- Keep chunks self-contained: a title + a few bullet points often compress better than raw paragraphs.

- Maintain identifiers: keep document IDs and stable anchors for citation and re-retrieval.

- Domain-specific tuning: legal and scientific text benefit from slightly larger chunks; code and API docs often do better with tighter chunks to avoid irrelevant boilerplate.
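A minimal sketch of boundary-aware chunking: it treats blank-line-separated paragraphs as the semantic unit and merges neighbors until a size limit is reached. The function name, the 300-token default, and the whitespace word counter are assumptions for illustration.

```python
from typing import Callable, List


def split_semantic(
    text: str,
    max_tokens: int = 300,
    count: Callable[[str], int] = lambda s: len(s.split()),
) -> List[str]:
    """Group whole paragraphs into chunks of at most `max_tokens` where possible.

    Paragraphs are never cut, so a single oversized paragraph becomes its own
    chunk and may exceed the limit.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks, current, current_len = [], [], 0
    for para in paragraphs:
        n = count(para)
        if current and current_len + n > max_tokens:
            chunks.append("\n\n".join(current))  # close the chunk at a boundary
            current, current_len = [], 0
        current.append(para)
        current_len += n
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

The same structure works for code if you replace the paragraph split with function or class boundaries.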

Context Packing Heuristics That Work

- Top-1 first, top-2 last: combats middle dilution.

- Group by source: pack multiple short snippets from the same source under one header to minimize metadata overhead.

- Token-aware merge: concatenate very short related chunks into a single block to reduce delimiter bloat.

- Negative context (sparingly): include short “do-not-use” notes if common confusions appear in retrieval.
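The group-by-source heuristic is straightforward to sketch. The function below takes `(source_id, text)` pairs in rank order and emits one header per source, reusing the `[DOC id=…]` delimiter from the prompt skeleton earlier in the article; `pack_grouped` is a hypothetical name.

```python
from typing import Hashable, Iterable, Tuple


def pack_grouped(snippets: Iterable[Tuple[Hashable, str]]) -> str:
    """Emit one header per source, with that source's snippets as bullets."""
    groups = {}
    for source_id, text in snippets:
        # dict preserves insertion order, so sources appear by best-ranked snippet
        groups.setdefault(source_id, []).append(text)
    blocks = []
    for source_id, texts in groups.items():
        lines = [f"[DOC id={source_id}]"] + [f"- {t}" for t in texts]
        blocks.append("\n".join(lines))
    return "\n\n".join(blocks)
```

Three snippets from two sources then cost two headers instead of three, and the saving grows with the number of snippets per source.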

Structured Compression Example

    # Pseudocode: compress then pack
    def compress_chunk(chunk, query):
        # Call a small/cheap model to shrink the chunk
        prompt = f"Summarize only facts relevant to: {query}. Keep numbers and citations. JSON keys: k, v, src."
        return call_small_model(prompt, chunk)

    ranked = cross_rerank(query, vector_search(query, 50))
    compressed = [compress_chunk(c, query) for c in ranked[:12]]
    context = pack_until_budget(compressed, budget=BUDGET)

Multi‑Turn Memory Without Bloat

- Rolling summaries: after N turns, summarize the conversation into 150–400 tokens, preserving decisions, definitions, and open TODOs.

- Long-term store: index earlier full turns; retrieve when the user references past topics.

- Session schemas: store memory as typed records: entity, attribute, value, last_updated, source.
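The three ideas above fit together in a small class. This is a sketch under stated assumptions: `SessionMemory` and `MemoryRecord` are hypothetical names, `summarize` is a callable you supply (typically a call to a small model), and the archive is a plain list standing in for a real retriever index.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class MemoryRecord:
    """Typed memory entry with the fields listed above."""
    entity: str
    attribute: str
    value: str
    last_updated: int  # turn index at which the value was last set
    source: str


class SessionMemory:
    def __init__(self, summarize: Callable[[str, List[str]], str], summarize_every: int = 6):
        self.summarize = summarize          # (previous summary, turns) -> new summary
        self.summarize_every = summarize_every
        self.summary = ""
        self.recent: List[str] = []         # turns not yet folded into the summary
        self.archive: List[str] = []        # full turns kept for later retrieval

    def add_turn(self, turn: str) -> None:
        self.recent.append(turn)
        if len(self.recent) >= self.summarize_every:
            # Fold the recent turns into the rolling summary, then archive them.
            self.summary = self.summarize(self.summary, self.recent)
            self.archive.extend(self.recent)
            self.recent = []

    def context(self) -> str:
        """Text to place in the prompt: the summary plus unsummarized turns."""
        parts = [f"Summary so far: {self.summary}"] if self.summary else []
        return "\n".join(parts + self.recent)
```

The prompt only ever carries the summary and the last few turns, while the archive keeps the full history available for retrieval when the user refers back to an earlier topic.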

Failure Modes to Watch

- Hallucinated citations when context is thin or conflicting. Mitigation: require source IDs, allow “insufficient evidence.”

- Over-compression that drops units, dates, or negations. Mitigation: compression checklists and unit tests.

- Drift in instructions across turns. Mitigation: keep a canonical, short system prompt and restate key constraints in each turn.
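A compression checklist can be partly automated. The sketch below flags numbers and negation words that appear in the original chunk but not in its compressed form. It is deliberately coarse: the word list and regexes are assumptions, contractions such as "isn't" are not detected, and since query-focused compression may drop a number legitimately, a non-empty result is a prompt for review rather than proof of an error.

```python
import re
from typing import Dict, List

NEGATIONS = {"not", "no", "never", "without", "except"}  # illustrative list
NUMBER = r"\d+(?:\.\d+)?%?"  # integers, decimals, optional percent sign


def lost_facts(original: str, compressed: str) -> Dict[str, List[str]]:
    """Report numbers and negation words present in the original but missing after compression."""
    orig_nums = set(re.findall(NUMBER, original))
    comp_nums = set(re.findall(NUMBER, compressed))
    orig_neg = NEGATIONS & set(re.findall(r"[a-z']+", original.lower()))
    comp_neg = NEGATIONS & set(re.findall(r"[a-z']+", compressed.lower()))
    return {
        "numbers": sorted(orig_nums - comp_nums),
        "negations": sorted(orig_neg - comp_neg),
    }
```

Running this over a sample of compressed chunks in CI gives an early warning when a prompt or model change starts stripping units, dates, or negations.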

Observability and Cost Control

- Log token counts per component (system, tools, query, context, completion).

- Track acceptance criteria per call (e.g., must cite ≥1 source) and trigger retries with tighter context.

- Attribute costs to features (which retriever, which re-ranker) to prioritize optimizations with the best ROI.

A Minimal End‑to‑End Recipe

- Index preparation

  - Chunk at 200–400 tokens with 10–20% overlap; store title and source_id.

  - Build embeddings and an ANN index; keep a small keyword index for exact matches.

- Query-time pipeline

  - Retrieve top-40 by vector similarity; optionally mix in keyword hits.

  - Re-rank to top-12; deduplicate near-matches.

  - Compress to structured bullets preserving numbers and citations.

  - Pack top items by descending utility until token budget is met.

  - Place most relevant first, second-most last; add a short beacon summary at the top.

  - Ask for JSON output with short keys.

- Evaluation and iteration

  - Compare quality, latency, and cost against a naive “dump everything” baseline.

  - Tune chunk size, K, compression ratio, and ordering.

Checklist: Ship This

- Token budgets and enforcement are in code, not tribal knowledge.

- Retrieval + re-ranking + deduplication are in place.

- Compression preserves facts, numbers, and citations.

- Prompt is concise, schema-first, and consistently delimited.

- Ordering mitigates middle-token forgetting.

- Caching/prefix reuse is leveraged where possible.

- Automated tests show equal or better quality at lower cost/latency.

Conclusion

Optimizing the LLM context window is not about squeezing more text into the model—it’s about feeding the right text, in the right shape, at the right time. With disciplined retrieval, thoughtful compression, strategic ordering, and rigorous evaluation, you can cut tokens dramatically while improving answer quality and reliability. Treat tokens like a budget, measure everything, and iterate with data—not guesswork.

Related Posts

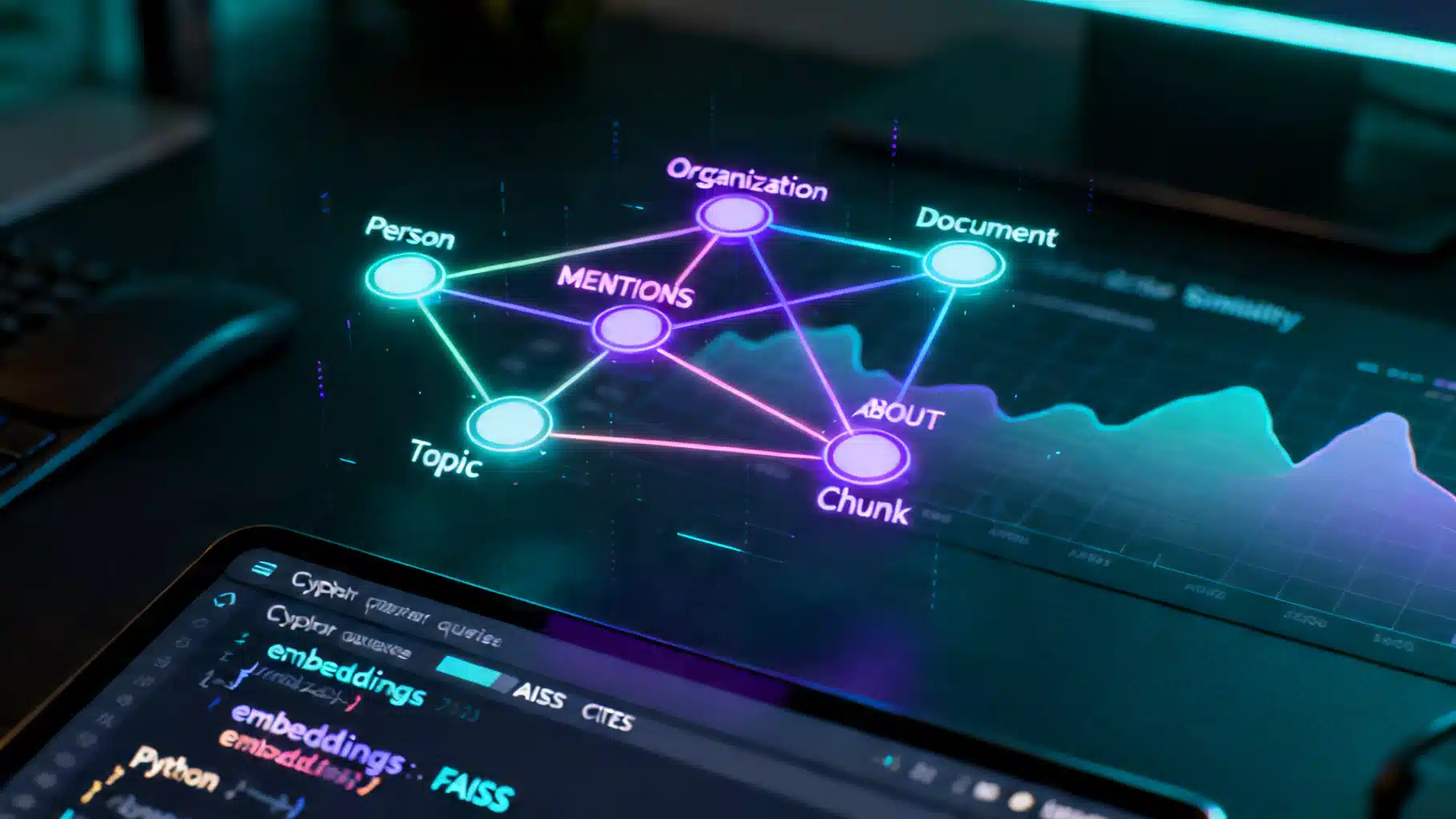

A Practical Tutorial on Knowledge Graph–Enhanced AI Retrieval (GraphRAG)

Build a production-ready tutorial for knowledge graph–enhanced AI retrieval: schema, ingestion, Cypher, hybrid search, and evaluation.

Integrating an AI Writing Assistant via API: Architecture, Code, and Best Practices

A practical guide to integrating an AI writing assistant via API—architecture, prompt design, code samples, safety, evaluation, and performance optimization.

AI Summarization APIs for News: Architecture, Quality, and Compliance

Design a reliable AI summarization API for news: architecture, schema, grounding, evaluation, safety, compliance, and cost strategies.