RAG vs. Fine‑Tuning: How to Choose the Right Approach

A practical guide to choosing RAG vs fine-tuning, with a clear decision framework, patterns, code sketches, and pitfalls.

ASOasis



TL;DR

- Use RAG when your knowledge changes frequently, must be source‑grounded, or needs tenant isolation without retraining.

- Use fine‑tuning when you need behavior changes (style, tone, step‑by‑step formats, tool use), consistent structured outputs, or very low latency with smaller models.

- Most production systems benefit from a hybrid: fine‑tune for formatting and reasoning patterns; RAG for fresh, attributable facts.

Definitions at a glance

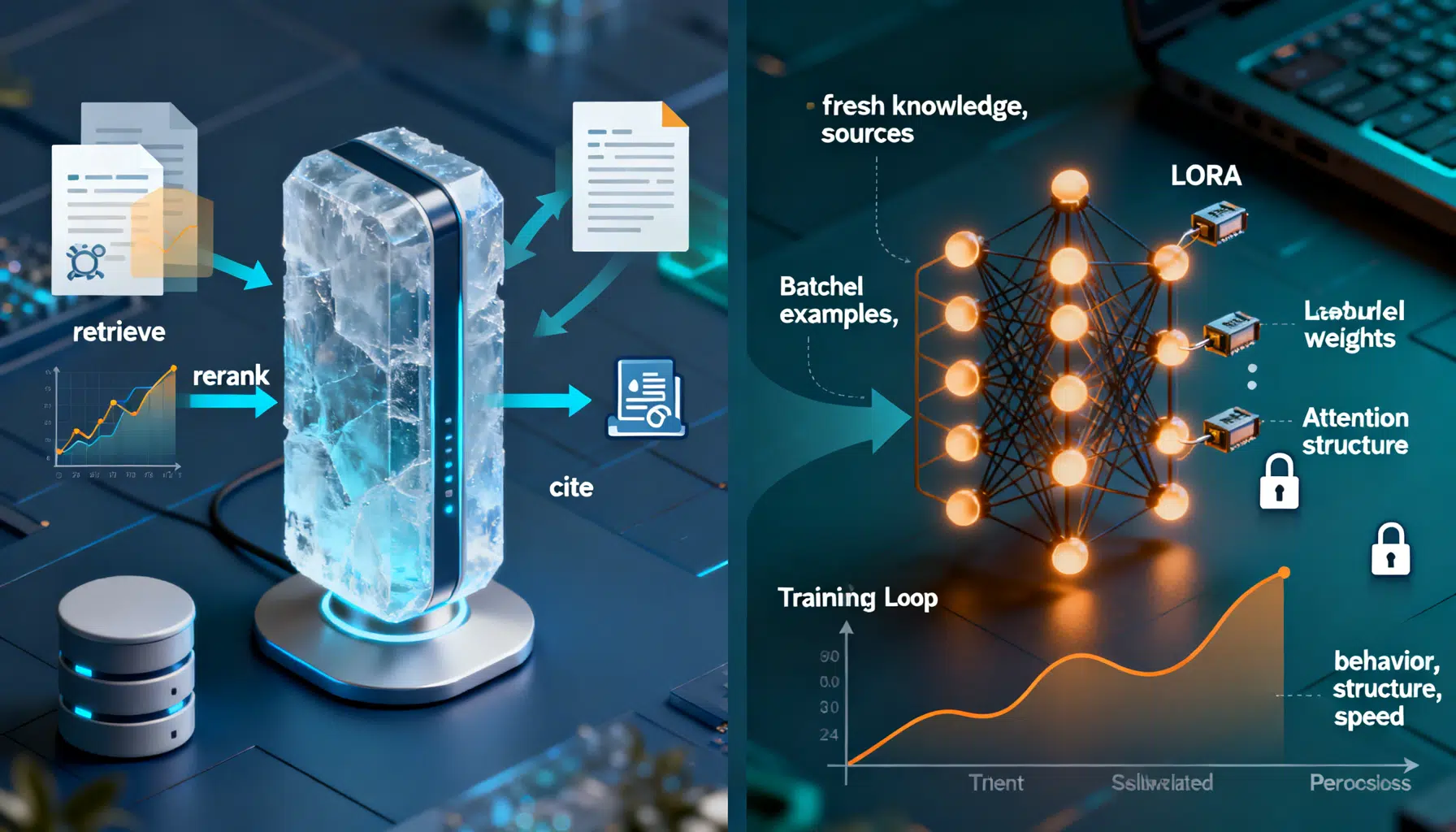

- Retrieval‑Augmented Generation (RAG): The model stays frozen. You retrieve relevant context (documents, tables, APIs) at query time and feed it to the model via the prompt. Quality hinges on retrieval and prompt engineering.

- Fine‑tuning: You update model weights (fully, or with parameter‑efficient methods such as LoRA/QLoRA) using curated examples so the model internalizes behaviors or domain patterns. Quality hinges on data quality and the training regimen.

What problem does each solve?

- RAG solves knowledge freshness and attribution.
  - Injects external facts at inference time.
  - Enables citations and auditability.
  - Supports per‑tenant knowledge without retraining; isolated per‑tenant indexes limit the risk of cross‑tenant data leakage.

- Fine‑tuning solves behavior and formatting.
  - Teaches the model to follow domain‑specific instructions, schemas, and voice.
  - Reduces prompt complexity and the tokens spent “steering” outputs.
  - Improves reliability of function/tool calling and JSON compliance.

Decision framework (use this mental checklist)

Ask these questions and pick the dominant pattern:

- How volatile is the knowledge?
  - High volatility (hours–weeks): prefer RAG.
  - Low volatility (months–years): fine‑tuning is viable.

- Do you need citations or audit trails?
  - Yes: RAG (with retrieved sources) or hybrid.

- Is tenant isolation a must?
  - Yes: RAG with per‑tenant indexes; fine‑tuning per tenant is costly and risky.

- Is the main gap “facts” or “behavior”?
  - Facts: RAG. Behavior/format: fine‑tuning.

- What are your latency and cost targets?
  - Ultra‑low latency or edge deployment: fine‑tune a smaller model, optionally via distillation. RAG adds retrieval hops.

- Are you constrained by context length?
  - Very long documents or multi‑file analysis: RAG with chunking and rerankers; consider long‑context models. Fine‑tuning does not expand the context window.

- How strict is the output structure?
  - Strict JSON or schema: fine‑tuning (plus constrained decoding) shines.

- What are your data privacy/regulatory needs?
  - Must raw documents stay out of prompts? Prefer fine‑tuning, at the cost of freshness. Need traceability? Prefer RAG.
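The checklist can be condensed into a small helper. This is a minimal sketch, not a definitive rule engine. The `Requirements` fields and the tie‑breaking are assumptions chosen for illustration: any RAG signal plus any fine‑tuning signal yields "hybrid", and no strong signal defaults to RAG, following the quick checklist's "when in doubt" advice.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    knowledge_changes_often: bool    # hours-weeks volatility
    needs_citations: bool
    needs_tenant_isolation: bool
    main_gap_is_behavior: bool       # style, format, tool use rather than facts
    needs_strict_schema: bool
    ultra_low_latency_or_edge: bool


def recommend(r: Requirements) -> str:
    """Map checklist answers to 'RAG', 'fine-tune', or 'hybrid' (illustrative heuristic)."""
    wants_rag = r.knowledge_changes_often or r.needs_citations or r.needs_tenant_isolation
    wants_ft = r.main_gap_is_behavior or r.needs_strict_schema or r.ultra_low_latency_or_edge
    if wants_rag and wants_ft:
        return "hybrid"
    if wants_ft:
        return "fine-tune"
    return "RAG"  # default: start with RAG, measure, then fine-tune for the gaps


# Volatile policies that must be cited, plus a strict JSON output format
print(recommend(Requirements(True, True, False, False, True, False)))  # hybrid
```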

Architecture patterns

- RAG pipeline
  - Ingest: chunking, embeddings, metadata, access‑control tags.
  - Index: vector store; optional BM25 for hybrid (lexical + semantic) search; cross‑encoder reranker for precision.
  - Orchestration: retrieve → construct prompt with sources → generate → cite.
  - Enhancements: query rewriting, HyDE (hypothetical document embeddings), multi‑vector indexes, caching, retrieval guardrails.

- Fine‑tuning pipeline
  - Data: (instruction, response) pairs, tool‑use traces, or preference data.
  - Training: SFT → optional preference optimization (e.g., DPO) → evaluation → safety review.
  - Deployment: versioned checkpoints, A/B tests, rollback; monitor drift and format adherence.
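The optional hybrid‑search step needs a way to merge BM25 and vector results. One common choice is reciprocal rank fusion (RRF). It is swapped in here for illustration, since the pipeline above does not prescribe a fusion method. Each document scores the sum of 1/(k + rank) across the ranked lists it appears in. The constant k = 60 is a conventional default, not a tuned value.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of doc IDs (e.g., BM25 and vector search).

    Each doc scores sum(1 / (k + rank)) over the lists it appears in; rank starts at 1.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by doc ID so output is deterministic
    return sorted(scores, key=lambda d: (-scores[d], d))


bm25_hits = ["d3", "d1", "d7"]
vector_hits = ["d1", "d4", "d3"]
print(reciprocal_rank_fusion([bm25_hits, vector_hits]))  # ['d1', 'd3', 'd4', 'd7']
```

RRF uses only ranks, so it sidesteps the problem that BM25 scores and cosine similarities live on different scales. The fused list would then go to the cross‑encoder reranker.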

Cost, latency, and scale

- RAG
  - Cost drivers: embedding generation (once per document, repeated when documents or the embedding model change), index storage, query‑time embedding and retrieval, and larger prompts from retrieved context tokens.
  - Latency: extra hops for retrieval and reranking; cache hot paths to mitigate.
  - Scaling: shard indexes by tenant/domain; precompute embeddings offline.

- Fine‑tuning
  - Cost drivers: data curation and training; inference can be cheaper if a smaller, specialized model is used.
  - Latency: can be the fastest at runtime, especially with small models and no retrieval.
  - Scaling: more models/checkpoints to manage; retrain for new knowledge or behaviors.
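A back‑of‑envelope estimate shows why larger prompts dominate RAG's cost drivers. The sketch assumes 8 retrieved chunks of ~800 tokens (matching the quick‑start RAG sketch), 10,000 queries per day, and a hypothetical price of $0.50 per million input tokens. Substitute your provider's actual pricing.

```python
def rag_prompt_overhead(chunks_in_prompt: int, tokens_per_chunk: int,
                        queries_per_day: int, usd_per_million_input_tokens: float) -> tuple[int, float]:
    """Return (extra prompt tokens per query, extra USD per day) caused by retrieved context."""
    extra_tokens = chunks_in_prompt * tokens_per_chunk
    daily_cost = extra_tokens * queries_per_day * usd_per_million_input_tokens / 1_000_000
    return extra_tokens, daily_cost


# 8 chunks x ~800 tokens, 10k queries/day, at an assumed $0.50 per 1M input tokens
tokens, cost = rag_prompt_overhead(8, 800, 10_000, 0.50)
print(tokens, cost)  # 6400 32.0
```

Cutting the context to 4 chunks halves both numbers. This is one reason a reranker that keeps only the best passages pays for itself.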

Evaluation and quality signals

- RAG‑specific
  - Retrieval hit rate@k, MRR (mean reciprocal rank), reranker precision.
  - Groundedness/factuality: proportion of claims supported by retrieved sources.
  - Context utilization: how often the retrieved context is actually used (and cited) in the output.

- Fine‑tuning‑specific
  - Instruction‑following accuracy; schema/JSON validity rate.
  - Tool‑use success and function‑calling accuracy.
  - Consistency: variance across seeds and prompts.

- Shared
  - Task metrics (Exact Match, F1, ROUGE for summarization, BLEU for translation‑like tasks).
  - Human ratings for helpfulness, harmlessness, and honesty.
  - Latency p50/p95; cost per successful task.

Security and governance considerations

- RAG
  - Pros: source attribution, easier redaction/updates, tenant isolation via separate indexes and ACLs.
  - Cons: prompts may contain sensitive snippets; apply PII scrubbing, encryption at rest and in transit, and access policies.

- Fine‑tuning
  - Pros: no document snippets in prompts at inference; smaller payloads.
  - Cons: risk of memorizing sensitive training data; apply strict data minimization, consider differential privacy, and red‑team the model.
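One mitigation for the RAG risk above is to scrub obvious PII from snippets before they enter the prompt. The regex patterns here are illustrative assumptions and will miss many formats (names, addresses, national IDs). Production systems usually rely on a dedicated PII detection service or library.

```python
import re

# Illustrative patterns only; real deployments typically use a dedicated PII detector.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def scrub(text: str) -> str:
    """Replace matches with typed placeholders before a snippet is placed in a prompt."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


print(scrub("Contact jane.doe@example.com or +1 (555) 123-4567 for access."))
# Contact [EMAIL] or [PHONE] for access.
```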

Real‑world scenarios (recommendations)

- Customer support on ever‑changing policies and product catalogs: RAG first; optionally fine‑tune for agent style and reply templates.

- Contract clause extraction with strict JSON schema: Fine‑tune for structured output; add RAG if you must cite the source clause.

- Internal knowledge assistant across teams with different permissions: RAG with per‑tenant/per‑team indexes; optional lightweight fine‑tune for tone.

- Code‑aware assistant for a living codebase: RAG over repos + reranker; small fine‑tune for chain‑of‑thought style or tool calling.

- On‑device assistant with tight latency and no network: Fine‑tune or distill a small model; periodically refresh via OTA updates.
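The cross‑team assistant scenario depends on retrieval that never crosses tenant boundaries and respects permissions. Below is a minimal in‑memory sketch, assuming each chunk carries a set of allowed groups. `Chunk` and `TenantIndex` are hypothetical names. A real deployment would enforce the same filter through its vector store's metadata filtering.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Chunk:
    text: str
    vec: list[float]
    allowed_groups: set[str] = field(default_factory=set)


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class TenantIndex:
    """One isolated store per tenant; ACL groups are checked before any ranking happens."""

    def __init__(self) -> None:
        self._stores: dict[str, list[Chunk]] = {}

    def add(self, tenant: str, chunk: Chunk) -> None:
        self._stores.setdefault(tenant, []).append(chunk)

    def search(self, tenant: str, user_groups: set[str], q_vec: list[float], top_k: int = 5) -> list[Chunk]:
        # Only this tenant's store is read, and only chunks the user may see are scored
        visible = [c for c in self._stores.get(tenant, []) if c.allowed_groups & user_groups]
        return sorted(visible, key=lambda c: cosine(q_vec, c.vec), reverse=True)[:top_k]


idx = TenantIndex()
idx.add("acme", Chunk("Acme HR policy", [1.0, 0.0], {"hr"}))
idx.add("acme", Chunk("Acme eng runbook", [0.9, 0.1], {"eng"}))
idx.add("globex", Chunk("Globex HR policy", [1.0, 0.0], {"hr"}))
print([c.text for c in idx.search("acme", {"hr"}, [1.0, 0.0])])  # ['Acme HR policy']
```

Filtering before scoring, rather than after, means a forbidden chunk can never be ranked into the prompt, even when it is the closest match.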

Implementation quick‑start

- Minimal RAG sketch (Python‑like pseudocode)

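```python
# Pseudocode-level sketch: load_docs, chunk_docs, EmbeddingModel, VectorStore,
# CrossEncoder, LLM, and user_input are placeholders for your own components
# or your chosen libraries' equivalents.

# 1) Ingest and index (offline)
chunks = chunk_docs(load_docs("/docs"), tokens=800, overlap=120)
emb = EmbeddingModel("mini-embed")
vecs = [emb.encode(c.text) for c in chunks]
index = VectorStore.from_embeddings(vecs, metadatas=[c.meta for c in chunks])
reranker = CrossEncoder("rerank-large")
llm = LLM("gpt-like")

# 2) Query → retrieve → rerank → prompt → generate
def answer_question(q: str) -> str:
    q_vec = emb.encode(q)
    candidates = index.search(q_vec, top_k=40)   # broad, cheap recall
    reranked = reranker.rank(q, candidates)[:8]  # precise, costlier scoring of the shortlist
    context = "\n\n".join(f"[Doc {i}] {c.text}" for i, c in enumerate(reranked, 1))
    prompt = f"""You are a helpful assistant. Answer using only the provided sources.

Question: {q}

Sources:
{context}

Cite sources as [Doc N]."""
    return llm.generate(prompt)

answer = answer_question(user_input())
```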
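The `chunk_docs(..., tokens=800, overlap=120)` call in the sketch is a placeholder. A sliding‑window chunker over a token list might look like the following. Whitespace splitting stands in for a real tokenizer, an assumption made to keep the example dependency‑free.

```python
def chunk_tokens(tokens: list[str], size: int = 800, overlap: int = 120) -> list[list[str]]:
    """Split tokens into windows of `size`, each sharing `overlap` tokens with the previous one."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be in [0, size)")
    step = size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):
            break  # this window already reaches the end; avoid a redundant tail chunk
    return chunks


# Whitespace splitting stands in for a real tokenizer here
words = "one two three four five six seven eight nine ten".split()
for c in chunk_tokens(words, size=4, overlap=1):
    print(c)
# ['one', 'two', 'three', 'four']
# ['four', 'five', 'six', 'seven']
# ['seven', 'eight', 'nine', 'ten']
```

The overlap keeps a sentence that straddles a boundary retrievable from either neighboring chunk, at the cost of some duplicated tokens in the index.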

- Minimal fine‑tuning sketch (parameter‑efficient LoRA)

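```python
from datasets import load_dataset
from peft import LoraConfig, get_peft_model
from transformers import (AutoModelForCausalLM, AutoTokenizer, DataCollatorForLanguageModeling,
                          Trainer, TrainingArguments)

tok = AutoTokenizer.from_pretrained("base-llm")  # "base-llm" is a placeholder model id
tok.pad_token = tok.pad_token or tok.eos_token   # many causal LMs ship without a pad token

base = AutoModelForCausalLM.from_pretrained("base-llm")
base.gradient_checkpointing_enable()
base.enable_input_require_grads()  # keeps gradients flowing through checkpointed layers when base weights are frozen

# q_proj/v_proj are LLaMA-style attention module names; adjust for your architecture
peft_cfg = LoraConfig(r=16, lora_alpha=32, lora_dropout=0.05,
                      target_modules=["q_proj", "v_proj"], task_type="CAUSAL_LM")
model = get_peft_model(base, peft_cfg)

def to_features(ex):  # train.jsonl rows: {instruction, input, output}; the template is an assumption
    text = f"{ex['instruction']}\n{ex['input']}\n### Response:\n{ex['output']}{tok.eos_token}"
    return tok(text, truncation=True, max_length=1024)

raw = load_dataset("json", data_files="train.jsonl")["train"]
train_data = raw.map(to_features, remove_columns=raw.column_names)

args = TrainingArguments(output_dir="ft-out", per_device_train_batch_size=4, num_train_epochs=3,
                         lr_scheduler_type="cosine", learning_rate=2e-4, fp16=True,
                         logging_steps=20, save_total_limit=2)
# mlm=False builds causal-LM labels from input_ids (pad positions, here also EOS, are masked)
trainer = Trainer(model=model, args=args, train_dataset=train_data,
                  data_collator=DataCollatorForLanguageModeling(tok, mlm=False))
trainer.train()
model.save_pretrained("ft-checkpoint-lora")  # writes only the LoRA adapter weights
```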

Hybrid strategies that work

- Fine‑tune for formatting and tool use; RAG for facts and citations.

- Use small fine‑tunes to internalize style so that RAG prompts can stay short, which keeps token costs down.

- Add a reranker to reduce context size and improve groundedness.

- Apply constrained decoding (JSON schema) even with fine‑tuning for extra reliability.

- Cache retrieval results and final completions for frequent queries.

Common pitfalls

- Treating fine‑tuning as a fix for missing knowledge. Knowledge baked into weights goes stale; prefer RAG for anything that changes.

- Chunking too finely loses context; chunking too coarsely bloats prompts. Start with ~600–1,000 tokens and tune.

- Ignoring retrieval quality: embeddings, hybrid search, and reranking often matter more than the base LLM choice.

- Using noisy SFT data: garbage in → brittle behaviors and hallucinations. Enforce data contracts and review guidelines.

- No evaluation loop: ship‑and‑forget leads to regressions. Automate offline and online evals with clear SLAs.
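A data contract for SFT rows can start as a validator that runs over every line of the training file. This sketch assumes the {instruction, input, output} schema from the fine‑tuning example. The specific checks (required string fields and a non‑empty output) are illustrative.

```python
import json

REQUIRED = {"instruction": str, "input": str, "output": str}


def validate_sft_line(line: str) -> list[str]:
    """Return the problems found in one JSONL row; an empty list means the row passes."""
    try:
        row = json.loads(line)
    except json.JSONDecodeError as e:
        return [f"invalid JSON: {e.msg}"]
    if not isinstance(row, dict):
        return ["row is not a JSON object"]
    problems = []
    for key, typ in REQUIRED.items():
        if key not in row:
            problems.append(f"missing '{key}'")
        elif not isinstance(row[key], typ):
            problems.append(f"'{key}' should be {typ.__name__}")
    if isinstance(row.get("output"), str) and not row["output"].strip():
        problems.append("empty 'output'")
    return problems


rows = [
    '{"instruction": "Summarize", "input": "text", "output": "summary"}',
    '{"instruction": "Summarize", "output": ""}',
]
for r in rows:
    print(validate_sft_line(r))
```

Rejecting or quarantining failing rows before training is cheaper than debugging brittle behavior after it.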

Migration path (pragmatic)

- Start with RAG MVP to unblock knowledge freshness and citations.

- Instrument: log retrieval hit rate, groundedness, latency, and user feedback.

- Identify recurring formatting/behavioral gaps from logs.

- Curate high‑quality SFT data from best interactions and add tool‑use traces.

- Fine‑tune parameter‑efficient adapters; layer into the RAG system.

- Continuously evaluate; only consider full model fine‑tunes or distillation when latency/cost or on‑device constraints demand it.
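The curation step can be scripted against your logs. The log field names (`question`, `context`, `answer`, `rating`, `grounded`) and the rating threshold are hypothetical. The emitted rows reuse the RAG prompt's shape, so the adapter learns the citation format it will see in production.

```python
import json


def logs_to_sft(logs: list[dict], min_rating: int = 4) -> list[str]:
    """Keep highly rated, grounded interactions and emit them as SFT JSONL rows.

    Assumed (hypothetical) log fields: question, context, answer, rating (1-5), grounded (bool).
    """
    rows = []
    for rec in logs:
        if rec.get("rating", 0) >= min_rating and rec.get("grounded", False):
            rows.append(json.dumps({
                "instruction": "Answer using only the provided sources. Cite sources as [Doc N].",
                "input": f"Question: {rec['question']}\n\nSources:\n{rec['context']}",
                "output": rec["answer"],
            }))
    return rows


logs = [
    {"question": "Refund window?", "context": "[Doc 1] Refunds within 30 days.",
     "answer": "30 days [Doc 1].", "rating": 5, "grounded": True},
    {"question": "Shipping time?", "context": "[Doc 1] Ships in 2 days.",
     "answer": "About a week.", "rating": 2, "grounded": False},
]
rows = logs_to_sft(logs)
print(len(rows))  # 1
```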

Quick checklist

- If you need up‑to‑date, attributable facts → choose RAG.

- If you need consistent format, tool use, or domain style → fine‑tune.

- For strict compliance/traceability → RAG (with citations) or hybrid.

- For ultra‑low latency/edge → fine‑tune a compact model.

- When in doubt, start with RAG, measure, then fine‑tune for the gaps.

Related Posts

AI Image Generation API Integration: Architecture, Code Examples, and Best Practices

A practical guide to integrating AI image generation APIs with production-ready code, architecture patterns, safety, and cost optimization.

ASOasis

Read More

7 min

Designing a Robust AI Text Summarization API: Architecture to Production

How to build and use an AI text summarization API: models, request design, chunking, evaluation, security, and production best practices.

ASOasis

Read More

7 min

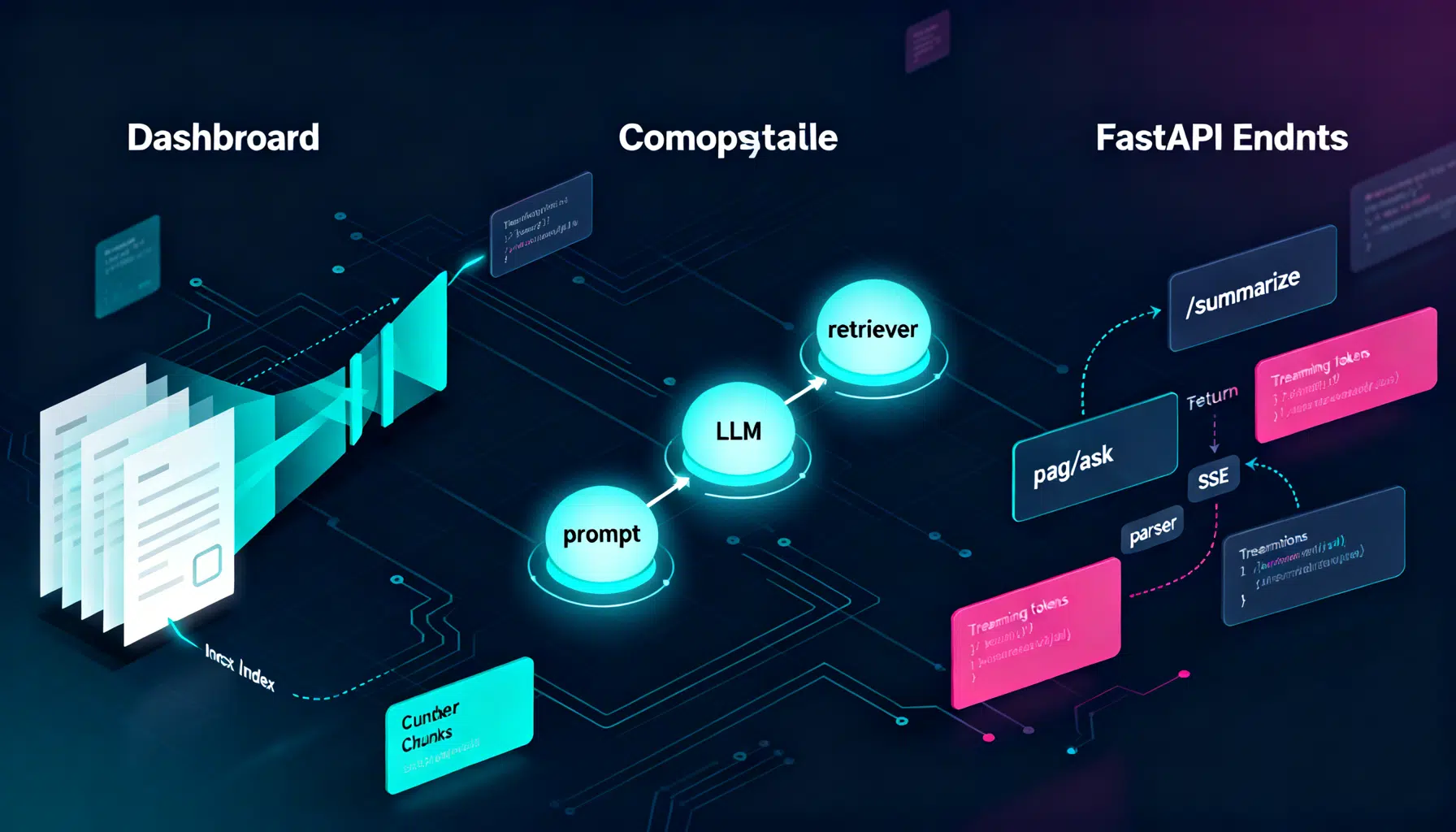

LangChain API Tutorial: From Hello World to Production RAG with FastAPI and LangServe

Build a production-ready LangChain API: LCEL chains, LangServe, FastAPI streaming, RAG, structured outputs, testing, and deployment tips.

ASOasis

Read More

7 min