Pinecone Vector Database API Tutorial: Semantic & Hybrid Search with Python and Node (2026)

Hands-on Pinecone API tutorial: create serverless indexes, upsert vectors, filter metadata, and run semantic + hybrid search in Python & Node (2026).


Overview

Pinecone is a managed, serverless vector database built for semantic and hybrid (dense+sparse) search. In this tutorial you’ll: create a serverless index, upsert data, filter by metadata, and run both semantic and hybrid queries from Python and Node.js. We’ll also cover limits, namespaces, and production tips with the latest API guidance as of March 14, 2026. Pinecone’s current stable API is 2025-10; pin this version in headers and SDKs unless you have a reason to opt into a preview. (docs.pinecone.io )

What’s notably new vs. older blog posts and snippets:

- Serverless is the default path; you choose a cloud+region once per index. On Starter, you can only create indexes in AWS us-east-1. (docs.pinecone.io )

- Integrated embedding: create an index that embeds text automatically (upsert/search with text), or “bring your own vectors” (BYO) and control embeddings yourself. (pinecone.mintlify.app )

- The official Python package is pinecone (not pinecone-client). If you’re upgrading older code, see the SDK notes. (sdk.pinecone.io )

Prerequisites

- A Pinecone account and API key.

- Python 3.9+ and/or Node.js 18+.

- Pin the latest stable API version via header X-Pinecone-Api-Version: 2025-10 for REST examples. (docs.pinecone.io )

Install SDKs:

- Python: pip install "pinecone[grpc]" (docs.pinecone.io )

- Node.js: npm install @pinecone-database/pinecone (docs.pinecone.io )

Set your key securely (shell examples):

```shell
export PINECONE_API_KEY="<your-key>"
```
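For REST calls, the key and the pinned API version travel as request headers. Below is a minimal standard-library sketch. It assumes the control-plane endpoint https://api.pinecone.io/indexes (list indexes) and the Api-Key header. The helper name is ours, and the request is only built here, not sent:

```python
import urllib.request

API_VERSION = "2025-10"  # pin the stable API version explicitly


def build_list_indexes_request(api_key: str) -> urllib.request.Request:
    """Build (but do not send) a GET request that lists indexes."""
    return urllib.request.Request(
        "https://api.pinecone.io/indexes",
        headers={
            "Api-Key": api_key,
            "X-Pinecone-Api-Version": API_VERSION,
        },
        method="GET",
    )
```

Passing the request to urllib.request.urlopen sends it. Keeping the version in one constant makes it easy to upgrade all REST calls together.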

Designing your index: Integrated vs. BYO

You have two common patterns:

- Integrated embedding: create a dense or sparse index tied to a hosted model; you upsert/search with text and Pinecone handles embeddings. Faster to ship, less plumbing. Note: some text-only flows (e.g., update/import with text) have feature caveats—check docs for integrated indexes. (pinecone.mintlify.app )

- BYO vectors: you embed with your own model/service, then upsert vectors + metadata. Offers full control over dimensions/metrics and model changes. (docs.pinecone.io )

Also decide if you need hybrid search (dense semantics + sparse keywords). Pinecone supports sparse-dense hybrid indexes and queries; weighting between dense and sparse is done by scaling vectors client-side. (docs.pinecone.io )

Create a serverless index

Choose a cloud+region; you cannot change this later. On Starter, only AWS us-east-1 is available. (docs.pinecone.io )

Python (BYO dense index):

```python
import os

from pinecone import Pinecone, ServerlessSpec

pc = Pinecone(api_key=os.environ["PINECONE_API_KEY"])  # pip install "pinecone[grpc]"

index_name = "tutorial-dense"

if not pc.has_index(index_name):
    pc.create_index(
        name=index_name,
        vector_type="dense",
        dimension=1536,  # must match your embedding model's output size
        metric="cosine",
        spec=ServerlessSpec(cloud="aws", region="us-east-1"),
        deletion_protection="disabled",
        tags={"env": "dev"},
    )
```

The same flow in Node.js (TypeScript, run as an ES module so top-level await works):

```typescript
import { Pinecone } from "@pinecone-database/pinecone";

const pc = new Pinecone({ apiKey: process.env.PINECONE_API_KEY! });

const indexName = "tutorial-dense";

// List existing indexes and create ours only if it is missing.
const { indexes = [] } = await pc.listIndexes();
const exists = indexes.some((ix) => ix.name === indexName);

if (!exists) {
  await pc.createIndex({
    name: indexName,
    vectorType: "dense",
    dimension: 1536, // must match your embedding model
    metric: "cosine",
    spec: { serverless: { cloud: "aws", region: "us-east-1" } },
    deletionProtection: "disabled",
    tags: { env: "dev" },
  });
}
```

For integrated embedding, you can shortcut creation by binding a hosted model (e.g., llama-text-embed-v2) and a field map for your source text. (docs.pinecone.io )

```python
import os

from pinecone import Pinecone

pc = Pinecone(api_key=os.environ["PINECONE_API_KEY"])

if not pc.has_index("integrated-dense"):
    pc.create_index_for_model(
        name="integrated-dense",
        cloud="aws",
        region="us-east-1",
        embed={
            "model": "llama-text-embed-v2",
            # Pinecone embeds the value stored in each record's "chunk_text" field
            "field_map": {"text": "chunk_text"},
        },
    )
```

Tip: pc.describe_index(name) reports the index's readiness (status) and the host that data-plane clients connect to. On the latest API it also shows schema details, such as metadata indexing rules.

Upsert data

For BYO, embed text with your model and upsert vectors+metadata. Pinecone’s upsert operation accepts dense values, optional sparse values, and metadata. Batch up to 1,000 vectors or 2 MB per request; metadata per record up to 40 KB. (docs.pinecone.io )
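Below is a minimal sketch of batching that stays within those limits before calling upsert. It makes two assumptions:

- It approximates request size as compact JSON, which is only an estimate (the gRPC client uses a different encoding).
- It uses slightly conservative decimal values for the 2 MB and 40 KB caps.

batch_records is a hypothetical helper:

```python
import json
from typing import Any, Iterator

MAX_BATCH_RECORDS = 1_000      # records per upsert request
MAX_BATCH_BYTES = 2_000_000    # conservative reading of the 2 MB request cap
MAX_METADATA_BYTES = 40_000    # conservative reading of the 40 KB metadata cap
MAX_ID_CHARS = 512


def _json_size(obj: Any) -> int:
    # Approximate wire size as compact UTF-8 JSON (an assumption, not the real encoding).
    return len(json.dumps(obj, separators=(",", ":")).encode("utf-8"))


def batch_records(records: list, max_bytes: int = MAX_BATCH_BYTES) -> Iterator[list]:
    """Yield lists of records that respect the count and approximate size limits."""
    batch: list = []
    batch_bytes = 0
    for rec in records:
        if len(rec["id"]) > MAX_ID_CHARS:
            raise ValueError(f"id longer than {MAX_ID_CHARS} chars: {rec['id'][:20]}...")
        if _json_size(rec.get("metadata", {})) > MAX_METADATA_BYTES:
            raise ValueError(f"metadata too large for {rec['id']}")
        size = _json_size(rec)
        if size > max_bytes:
            raise ValueError(f"record {rec['id']} alone exceeds the request budget")
        # Flush the current batch if adding this record would break either limit.
        if batch and (len(batch) >= MAX_BATCH_RECORDS or batch_bytes + size > max_bytes):
            yield batch
            batch, batch_bytes = [], 0
        batch.append(rec)
        batch_bytes += size
    if batch:
        yield batch
```

Each yielded batch can then be passed to index.upsert(vectors=batch, namespace="kb"). Validating IDs and metadata up front turns a rejected request into a clear local error.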

Python (BYO vectors):

```python
import os

from pinecone.grpc import PineconeGRPC as Pinecone  # gRPC client

pc = Pinecone(api_key=os.environ["PINECONE_API_KEY"])

# The host is shown in the console or returned by describe_index.
index = pc.Index(host=pc.describe_index("tutorial-dense").host)

# embedding1 / embedding2 are placeholders: lists of 1536 floats from your model.
vectors = [
    {
        "id": "doc1#chunk1",
        "values": embedding1,
        "metadata": {"source": "doc1.pdf", "lang": "en", "page": 1},
    },
    {
        "id": "doc1#chunk2",
        "values": embedding2,
        "metadata": {"source": "doc1.pdf", "lang": "en", "page": 2},
    },
]

res = index.upsert(vectors=vectors, namespace="kb")
print(res)  # includes the upserted count
```

Node.js follows the same structure with the Node client. (docs.pinecone.io )

Integrated embedding lets you upsert text directly (Pinecone embeds it under the hood). See “Upsert text” in the API reference when using an integrated index. (pinecone.mintlify.app )
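Each record for an integrated index carries two things:

- its text in the field named by the index's field_map ("chunk_text" in the index created earlier);
- any extra fields you want stored alongside it.

Below is a small, hypothetical helper that shapes chunks into that form. It assumes the Python SDK's upsert_records(namespace, records) method and "_id" as the record ID key; check the "Upsert text" reference for your SDK version:

```python
from typing import Any

TEXT_FIELD = "chunk_text"  # must match the index's field_map


def to_text_records(doc_id: str, chunks: list, **metadata: Any) -> list:
    """Turn text chunks into records for an integrated-embedding index."""
    records = []
    for n, chunk in enumerate(chunks, start=1):
        record = {"_id": f"{doc_id}#chunk{n}", TEXT_FIELD: chunk}
        record.update(metadata)  # extra fields are stored alongside the text
        records.append(record)
    return records


# Against a live integrated index (not executed here):
#   index.upsert_records("kb", to_text_records("doc1", chunks, lang="en"))
```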

Important consistency note: Pinecone is eventually consistent. A read immediately after a write may miss the newest record; add a short retry/backoff or poll for readiness in ingestion workflows. (docs.pinecone.io )
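In an ingestion job, one way to handle this is to poll until the new IDs are readable, backing off between attempts. The sketch below assumes you pass in a function that returns the set of IDs currently visible. With the Python SDK, that could wrap index.fetch(ids=..., namespace=...), whose response exposes the found vectors keyed by ID. The function names are hypothetical:

```python
import time
from typing import Callable, Iterable


def wait_until_visible(
    ids: Iterable[str],
    fetch_visible: Callable[[list], set],
    attempts: int = 6,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll until every ID is readable; return False if attempts run out."""
    pending = list(ids)
    delay = base_delay
    for attempt in range(attempts):
        visible = fetch_visible(pending)
        pending = [i for i in pending if i not in visible]
        if not pending:
            return True
        if attempt < attempts - 1:
            sleep(delay)
            delay *= 2  # exponential backoff: 0.5 s, 1 s, 2 s, ...
    return False
```

For example, fetch_visible=lambda ids: set(index.fetch(ids=ids, namespace="kb").vectors). The injectable sleep keeps the function easy to test without real waiting.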

Query: semantic search with metadata filters

Two primary options:

- Query with a vector (BYO): embed the query with the same model you used for ingestion, then call query.

- Search with text (integrated): send raw text; Pinecone embeds + searches for you.

Metadata filters use a JSON expression language ($eq, $in, $and/$or, ranges, etc.). Filters are applied server-side during the search, so the top_k results are drawn only from records that match. (docs.pinecone.io )
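To make the filter semantics concrete, here is a tiny local evaluator for a subset of the operators. It illustrates how a filter reads, not Pinecone's implementation. Two rules it encodes:

- Several field conditions in one object are ANDed together.
- A bare value is shorthand for $eq.

It handles scalar fields only. Its behavior for missing fields is a simplification of ours:

```python
from typing import Any

_OPS = {
    "$eq": lambda v, x: v == x,
    "$ne": lambda v, x: v != x,
    "$gt": lambda v, x: v is not None and v > x,
    "$gte": lambda v, x: v is not None and v >= x,
    "$lt": lambda v, x: v is not None and v < x,
    "$lte": lambda v, x: v is not None and v <= x,
    "$in": lambda v, x: v in x,
    "$nin": lambda v, x: v not in x,
}


def matches(meta: dict, flt: dict) -> bool:
    """Return True if a metadata dict satisfies the filter (scalar fields only)."""
    for key, cond in flt.items():
        if key == "$and":
            if not all(matches(meta, sub) for sub in cond):
                return False
        elif key == "$or":
            if not any(matches(meta, sub) for sub in cond):
                return False
        elif isinstance(cond, dict):
            value: Any = meta.get(key)
            if not all(_OPS[op](value, arg) for op, arg in cond.items()):
                return False
        elif meta.get(key) != cond:  # bare value is shorthand for $eq
            return False
    return True
```

A helper like this is handy for unit-testing the filters your application builds before sending them to the index.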

Python (BYO vector query):

```python
# embed() is a placeholder for the same model you used at ingestion (1536 dims).
xq = embed("what is the refund policy?")

result = index.query(
    vector=xq,
    top_k=10,
    namespace="kb",
    filter={"lang": {"$eq": "en"}, "page": {"$lte": 5}},  # conditions are ANDed
    include_values=False,
    include_metadata=True,
)

for match in result["matches"]:
    print(match["id"], match["score"], match["metadata"])
```

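Search with text (integrated index). Unlike query, search takes a query object holding the text input, top_k, and filter. The fields parameter selects which stored fields are returned, instead of include_values/include_metadata:

```python
import os

from pinecone import Pinecone

pc = Pinecone(api_key=os.environ["PINECONE_API_KEY"])
integrated_index = pc.Index(host=pc.describe_index("integrated-dense").host)

res = integrated_index.search(
    namespace="kb",
    query={
        "inputs": {"text": "what is the refund policy?"},
        "top_k": 10,
        "filter": {"lang": {"$eq": "en"}},
    },
    fields=["chunk_text", "lang"],  # stored fields to return with each hit
)

for hit in res["result"]["hits"]:
    print(hit["_id"], hit["_score"], hit["fields"])
```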

Both flows and parameters are documented in the Query/Search API. Obey result-size limits (max top_k=10,000; 4 MB payload cap). Use include_metadata/values strategically to stay under limits. (docs.pinecone.io )

Hybrid search (dense + sparse)

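Hybrid retrieval blends semantic similarity with keyword fidelity. With Pinecone you can store and query a single sparse-dense vector per record, provided the index is a dense index created with metric="dotproduct". Sparse values are not supported with the cosine metric used in the examples above. To bias toward one side, scale the dense and sparse parts of the query with an alpha parameter client-side. (docs.pinecone.io )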

Python example (conceptual):

```python
from pinecone_text.hybrid import hybrid_convex_scale  # pip install pinecone-text

# embed_dense / encode_sparse are placeholders for your dense model and sparse encoder.
dense_q = embed_dense("benefits enrollment window")  # list[float]
sparse_q = encode_sparse("benefits enrollment window")  # {"indices": [...], "values": [...]}

alpha = 0.7  # 0..1 (1 = dense only, 0 = sparse only)
scaled_dense, scaled_sparse = hybrid_convex_scale(dense_q, sparse_q, alpha)

# `index` must point at a dotproduct index whose records also store sparse values.
res = index.query(
    vector=scaled_dense,
    sparse_vector=scaled_sparse,
    top_k=20,
    filter={"doc_type": {"$in": ["policy", "handbook"]}},
    include_metadata=True,
)
```

Details and helper utilities are in the hybrid search guide and pinecone-text helper library. (docs.pinecone.io )
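Scaling only the query is enough. On a dotproduct index, a record's score is the dense dot product plus the sparse dot product. Multiplying the query's dense part by alpha and its sparse values by (1 - alpha) therefore yields alpha * dense_score + (1 - alpha) * sparse_score.

Below is a dependency-free sketch of that convex scaling. The function is our own; pinecone-text's implementation may differ in details such as input validation. A tiny scorer is included to check the identity:

```python
def convex_scale(dense: list, sparse: dict, alpha: float) -> tuple:
    """Weight a hybrid query: alpha=1 is dense only, alpha=0 is sparse only."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be between 0 and 1")
    scaled_dense = [v * alpha for v in dense]
    scaled_sparse = {
        "indices": list(sparse["indices"]),
        "values": [v * (1.0 - alpha) for v in sparse["values"]],
    }
    return scaled_dense, scaled_sparse


def hybrid_score(q_dense: list, q_sparse: dict, d_dense: list, d_sparse: dict) -> float:
    """Dotproduct score of a sparse-dense query against one stored record."""
    dense_part = sum(a * b for a, b in zip(q_dense, d_dense))
    doc_sparse = dict(zip(d_sparse["indices"], d_sparse["values"]))
    sparse_part = sum(
        v * doc_sparse.get(i, 0.0) for i, v in zip(q_sparse["indices"], q_sparse["values"])
    )
    return dense_part + sparse_part
```

Because the stored vectors stay unscaled, alpha can be tuned per query without re-ingesting anything.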

Namespaces, multitenancy, and when to filter vs. split

- Namespaces logically partition data within an index (e.g., per customer). Filtering within a single massive namespace can be costlier than querying a smaller namespace. At scale, prefer one index with many namespaces (e.g., per tenant) and filter within a tenant only when needed. Pinecone supports very large namespace counts on paid plans. (docs.pinecone.io )

- The default namespace is "" on older API versions and "__default__" on newer ones. Be explicit in production to avoid cross-tenant leakage. (docs.pinecone.io )
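One way to keep the namespace explicit is to route every data-plane call through a helper. The helper derives the namespace from the tenant and never falls back to the default. This is a hypothetical sketch: the "tenant-" prefix and the allowed-character rule are our own conventions, not Pinecone requirements:

```python
import re
from typing import Any

_TENANT_RE = re.compile(r"[a-z0-9][a-z0-9-]{0,62}")  # our own naming rule


def tenant_namespace(tenant_id: str) -> str:
    """Map a tenant ID to its namespace, rejecting empty or malformed IDs."""
    if not _TENANT_RE.fullmatch(tenant_id):
        raise ValueError(f"invalid tenant id: {tenant_id!r}")
    return f"tenant-{tenant_id}"


def scoped_query_kwargs(tenant_id: str, **kwargs: Any) -> dict:
    """Build index.query(...) kwargs that always target the tenant's namespace."""
    if "namespace" in kwargs:
        raise ValueError("namespace is derived from tenant_id; do not pass it")
    return {**kwargs, "namespace": tenant_namespace(tenant_id)}
```

Usage: index.query(**scoped_query_kwargs("acme", vector=xq, top_k=10, include_metadata=True)). Rejecting an explicit namespace argument prevents callers from bypassing the tenant mapping.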

Operational limits and costs (RUs/WUs)

Keep these in mind as you design:

- Upsert: ≤ 1,000 vectors or 2 MB per request; metadata ≤ 40 KB per record; IDs ≤ 512 chars. (docs.pinecone.io )

- Query: top_k ≤ 10,000; result size ≤ 4 MB; use include_metadata/values to control payload size. (docs.pinecone.io )

- Serverless quotas (Starter): monthly read units (RUs) and write units (WUs) caps exist; exceeding them returns 429s until you upgrade. Implement retries with exponential backoff and monitor usage. (docs.pinecone.io )
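Below is a generic retry wrapper for 429s and other transient errors. How the status code surfaces depends on the client, so this sketch takes an is_retryable predicate rather than assuming a particular exception class. All names are hypothetical:

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def with_backoff(
    fn: Callable[[], T],
    is_retryable: Callable[[Exception], bool],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying retryable errors with capped exponential backoff and full jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return fn()
        except Exception as exc:
            if attempt == max_attempts - 1 or not is_retryable(exc):
                raise
            cap = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, cap))  # full jitter spreads out concurrent retries
    raise RuntimeError("unreachable")
```

For example: with_backoff(lambda: index.query(vector=xq, top_k=10, namespace="kb"), is_retryable=lambda e: getattr(e, "status", None) == 429). This assumes the raised exception exposes a status attribute; adapt the predicate to whatever your client actually raises.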

Production checklist

- Pin API version headers (2025-10) across REST clients; keep SDKs updated to the versions that support this API. (docs.pinecone.io )

- Region choice: pick the closest region to your app; can’t change after creation. Starter plan is limited to AWS us-east-1. (docs.pinecone.io )

- Consistency: add small ingestion-to-query delays or read-retries after large upserts/updates. (docs.pinecone.io )

- Batching: upsert in batches sized for throughput and request limits; pipeline embeds+upserts asynchronously for speed. (docs.pinecone.io )

- Metadata indexing (EA): on recent API versions you can constrain which fields are indexed for filtering to speed up build and queries—decide at index/namespace creation time. (docs.pinecone.io )
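For the batching item in this checklist, here is a sketch of dispatching upsert batches concurrently with a thread pool. Assumptions:

- upsert_batch stands in for something like lambda b: index.upsert(vectors=b, namespace="kb").
- Batches are fixed-size here for brevity.
- The worker count is an assumption to tune against your rate limits.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Sequence


def chunked(items: Sequence, size: int) -> list:
    """Split items into consecutive slices of at most `size` records."""
    return [items[i : i + size] for i in range(0, len(items), size)]


def parallel_upsert(
    records: Sequence,
    upsert_batch: Callable[[Sequence], object],
    batch_size: int = 200,
    max_workers: int = 4,
) -> int:
    """Upsert records in concurrent batches and return how many were sent.

    An exception raised by any batch is re-raised when its result is collected.
    """
    batches = chunked(records, batch_size)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [pool.submit(upsert_batch, batch) for batch in batches]
        for future in futures:
            future.result()  # surfaces a failing batch's exception
    return sum(len(b) for b in batches)
```

Threads suit this workload because each upsert spends most of its time waiting on the network. Wrapping upsert_batch in a retry helper keeps a single 429 from failing the whole run.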

End-to-end mini example (BYO, Python)

```python
import os

from pinecone import Pinecone, ServerlessSpec
from pinecone.grpc import PineconeGRPC

pc = Pinecone(api_key=os.environ["PINECONE_API_KEY"])  # control plane

name = "mini-kb"
if not pc.has_index(name):
    pc.create_index(
        name=name,
        vector_type="dense",
        dimension=1536,
        metric="cosine",
        spec=ServerlessSpec(cloud="aws", region="us-east-1"),
    )

# Data plane (upsert/query) over gRPC; the host comes from describe_index.
ix = PineconeGRPC(api_key=os.environ["PINECONE_API_KEY"]).Index(
    host=pc.describe_index(name).host
)

# 1) Embed and upsert. embed() is a placeholder for your 1536-dim model.
records = [
    ("faq#1", embed("How do refunds work?"), {"lang": "en", "topic": "refunds"}),
    ("faq#2", embed("How to change my password"), {"lang": "en", "topic": "account"}),
]
ix.upsert(
    vectors=[{"id": rid, "values": vec, "metadata": meta} for rid, vec, meta in records],
    namespace="kb",
)

# 2) Query (freshly upserted records may take a moment to become visible).
xq = embed("refund policy")
res = ix.query(
    vector=xq,
    top_k=5,
    namespace="kb",
    filter={"topic": {"$eq": "refunds"}},
    include_metadata=True,
)
for m in res["matches"]:
    print(m["id"], m["score"], m["metadata"])
```

Upsert/query shapes match the Database API; fetch/delete/update endpoints follow similar patterns when you need direct record management, and Pinecone now supports new bulk-by-metadata operations. (docs.pinecone.io )

Troubleshooting quick hits

- Python import errors: ensure you’re on the pinecone package (not pinecone-client) and that your SDK version matches the API version. (sdk.pinecone.io )

- Queries return fewer hits than expected: check top_k vs. filter selectivity and the 4 MB result limit; try lowering included payload or increasing top_k within limits. (docs.pinecone.io )

- “Missing” fresh writes: add a small delay/retry due to eventual consistency. (docs.pinecone.io )

Where to go next

- Create and tune a hybrid index and evaluate alpha scaling per query type. (docs.pinecone.io )

- Explore hosted embedding/rerank models and “search with text” flows for rapid prototyping. (pinecone.mintlify.app )

- Review limits, quotas, and region options before scaling beyond Starter. (docs.pinecone.io )

With these building blocks you can ship a robust semantic or hybrid search stack on Pinecone—cleanly, quickly, and with the right guardrails for production.

Related Posts

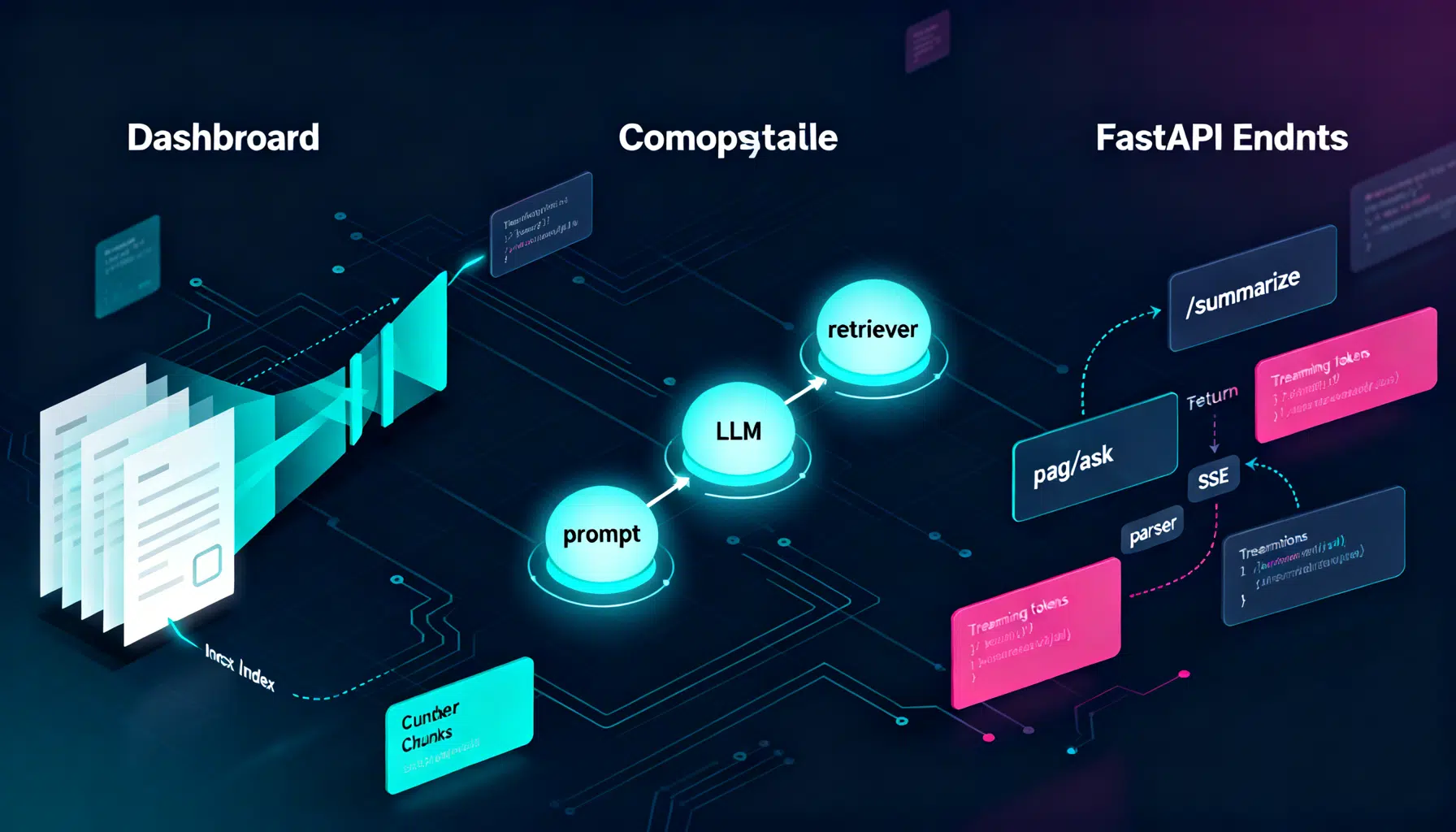

LangChain API Tutorial: From Hello World to Production RAG with FastAPI and LangServe

Build a production-ready LangChain API: LCEL chains, LangServe, FastAPI streaming, RAG, structured outputs, testing, and deployment tips.

LLM Prompt Engineering Techniques in 2026: A Practical Playbook

A 2026 field guide to modern LLM prompt engineering: patterns, multimodal tips, structured outputs, RAG, agents, security, and evaluation.

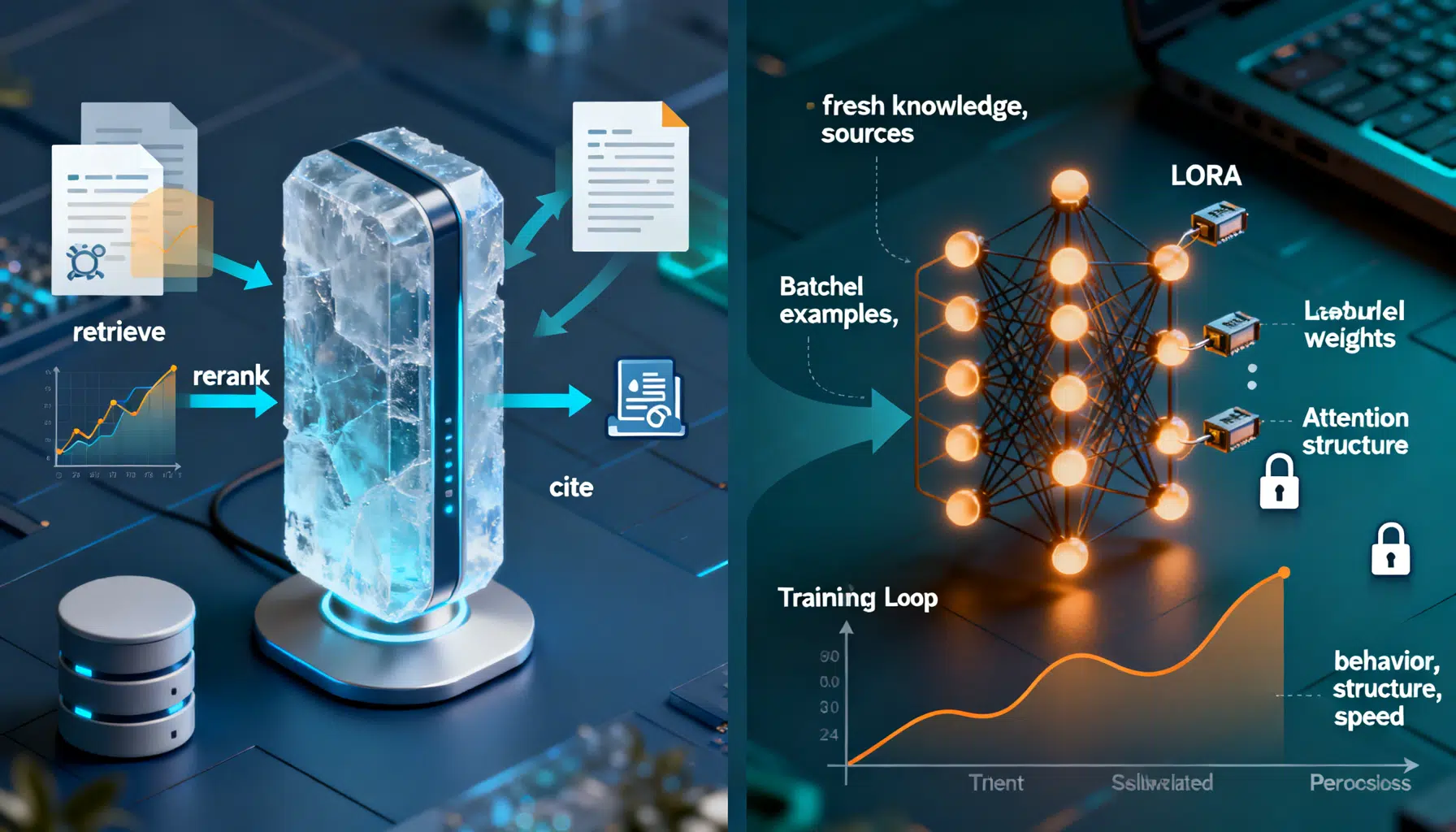

RAG vs. Fine‑Tuning: How to Choose the Right Approach

A practical guide to choosing RAG vs fine-tuning, with a clear decision framework, patterns, code sketches, and pitfalls.