Designing AI Agent Memory: Episodic vs. Semantic Systems That Scale

Build robust AI agent memory with episodic and semantic layers: schemas, retrieval, consolidation, evaluation, and governance—practical patterns included.

Overview

Memory is the difference between a one-off chatbot and a competent AI agent. Two complementary layers do most of the heavy lifting:

- Episodic memory: time-stamped records of specific experiences (who, what, when, where, why, how).

- Semantic memory: structured, generalizable knowledge about the world (entities, concepts, rules, and their relationships).

Designing these layers well determines an agent’s ability to learn from interaction, generalize, and act reliably over time.

Cognitive analogy vs. engineering reality

The cognitive science analogy is useful but imperfect:

- Humans: episodic = autobiographical episodes; semantic = facts/meanings abstracted from episodes.

- Agents: episodic = interaction logs, events, and sensor traces; semantic = normalized knowledge graphs, taxonomies, embeddings, and distilled rules.

In software, the split optimizes for two different queries:

- “What happened before?” → episodic retrieval on a timeline.

- “What is generally true and how do things relate?” → semantic retrieval over a graph or ontology.

The memory stack at a glance

Think in layers that trade off fidelity, generality, and cost:

- Working memory: short-lived context inside the model call (prompt + retrieved snippets).

- Episodic memory: append-only event store with indices for time, participants, and context vectors.

- Semantic memory: curated, deduplicated knowledge represented as entities, relations, and schemas; often mirrored in embeddings for fuzzy match.

- External sources: authoritative systems of record (CRMs, docs, APIs) referenced rather than copied when possible.

Data models

Episodic memory schema

Treat each interaction or event as a first-class record:

- id: UUID

- timestamp: ISO 8601

- actors: [agent, user, third parties]

- artifacts: messages, files, tool outputs, environment states

- context_vector: embedding over salient text/metadata

- tags: task, intent, sentiment, confidence

- outcome: success/failure signals, metrics

Indexes to consider:

- Time index (range queries by recency or window).

- Actor/asset index (per-user, per-project lookup).

- Vector index (cosine/inner-product similarity for topical recall).
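As a rough illustration, the field list above could map onto a small Python dataclass. The class name `Episode`, the helper `episodes_for_actor`, and the sample values are assumptions made for this sketch, and the linear scan stands in for the time and actor indexes a real store would provide.

```python
import uuid
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, List


def _now_iso() -> str:
    # Timezone-aware UTC timestamp in ISO 8601.
    return datetime.now(timezone.utc).isoformat()


@dataclass
class Episode:
    """One episodic record, mirroring the field list above."""
    actors: List[str]
    artifacts: List[Dict[str, Any]]
    context_vector: List[float]
    tags: Dict[str, Any] = field(default_factory=dict)
    outcome: Dict[str, Any] = field(default_factory=dict)
    id: str = field(default_factory=lambda: str(uuid.uuid4()))
    timestamp: str = field(default_factory=_now_iso)


def episodes_for_actor(episodes: List[Episode], actor: str, since: datetime) -> List[Episode]:
    """Actor + time lookup as a linear scan (a real store would use indexes)."""
    return [
        e for e in episodes
        if actor in e.actors and datetime.fromisoformat(e.timestamp) >= since
    ]


ep = Episode(
    actors=["agent", "user:42"],
    artifacts=[{"kind": "message", "text": "Reset my API key"}],
    context_vector=[0.1, 0.3, 0.6],
    tags={"intent": "credential_reset", "confidence": 0.9},
    outcome={"success": True},
)
since = datetime.now(timezone.utc) - timedelta(days=1)
recent = episodes_for_actor([ep], "user:42", since)
```

Generating `id` and `timestamp` at construction time keeps callers from forgetting them, which matters once other records start citing episode IDs as provenance.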

Semantic memory schema

Represent stable knowledge with structure:

- Entities (User, Company, Document, API, Feature)

- Types/ontologies (Person → Employee → Engineer)

- Relations (works_at, owns, depends_on, contradicts, supersedes)

- Attributes (version, validity interval, provenance)

- Embeddings for entity and relation text to support fuzzy joins

Graph databases shine here. Pair a graph with a vector store to enable hybrid symbolic + semantic retrieval.
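To make the schema concrete, here is a minimal in-memory sketch of entities and relations that carry a validity interval and provenance. `TinyGraph`, `Entity`, and `Relation` are hypothetical names for illustration only; a production system would use a real graph database rather than Python lists.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Entity:
    id: str
    type: str   # e.g. "User", "Company"
    name: str


@dataclass
class Relation:
    subject: str                     # entity id
    predicate: str                   # e.g. "works_at", "depends_on"
    target: str                      # entity id
    valid_from: str                  # ISO 8601 date
    valid_to: Optional[str] = None   # None means "still valid"
    provenance: List[str] = field(default_factory=list)  # source episode ids


class TinyGraph:
    """In-memory stand-in for a graph database (illustrative only)."""

    def __init__(self) -> None:
        self.entities: Dict[str, Entity] = {}
        self.relations: List[Relation] = []

    def add_entity(self, entity: Entity) -> None:
        self.entities[entity.id] = entity

    def add_relation(self, rel: Relation) -> None:
        # Reject edges that point at unknown entities.
        if rel.subject not in self.entities or rel.target not in self.entities:
            raise KeyError("both endpoints must exist before adding a relation")
        self.relations.append(rel)

    def neighbors(self, entity_id: str, predicate: Optional[str] = None) -> List[Entity]:
        """Entities reachable over one currently valid outgoing edge."""
        return [
            self.entities[r.target]
            for r in self.relations
            if r.subject == entity_id
            and r.valid_to is None
            and (predicate is None or r.predicate == predicate)
        ]


g = TinyGraph()
g.add_entity(Entity("u1", "User", "Ada"))
g.add_entity(Entity("c1", "Company", "Acme"))
g.add_relation(Relation("u1", "works_at", "c1", valid_from="2024-01-01", provenance=["ep-17"]))
employers = g.neighbors("u1", "works_at")
```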

Ingestion and normalization

- Capture: hook into the agent’s tool layer and dialogue manager to log events automatically.

- Normalize: extract entities/relations from episodes via information-extraction (IE) pipelines; reconcile them to canonical IDs (e.g., via string matching + embeddings + rules).

- Validate: maintain provenance and confidence; never overwrite facts without versioning.

- Compress: summarize long episodes into compact notes while preserving links back to raw data.
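The reconciliation step in "Normalize" might look something like the sketch below. It covers only the rule and string-matching parts (embedding similarity is left out), and the `CANONICAL` table, the `ALIASES` map, and the 0.8 threshold are all made-up values for illustration.

```python
from difflib import SequenceMatcher
from typing import Dict, Optional

# Hypothetical canonical registry: canonical id -> display name.
CANONICAL: Dict[str, str] = {
    "company:acme": "Acme Corporation",
    "company:globex": "Globex Inc",
}
# Hand-written alias rules take priority over fuzzy matching.
ALIASES: Dict[str, str] = {"acme corp": "company:acme"}


def normalize(text: str) -> str:
    """Lowercase, drop periods and commas, collapse whitespace."""
    cleaned = text.lower().replace(".", "").replace(",", "")
    return " ".join(cleaned.split())


def resolve(mention: str, threshold: float = 0.8) -> Optional[str]:
    """Map a raw mention to a canonical id, or None if nothing is close enough."""
    key = normalize(mention)
    if key in ALIASES:
        return ALIASES[key]
    best_id, best_score = None, 0.0
    for cid, name in CANONICAL.items():
        score = SequenceMatcher(None, key, normalize(name)).ratio()
        if score > best_score:
            best_id, best_score = cid, score
    return best_id if best_score >= threshold else None
```

Returning `None` below the threshold, instead of the nearest guess, leaves room for a human or a slower matcher to decide whether the mention is a new entity.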

Retrieval patterns

Different tasks call for different retrievals; combine them:

- Temporal recall: filter to the last N days or a specific window, then rank by similarity.

- Actor-scoped recall: retrieve episodes linked to the current user/team/asset.

- Query-focused recall: embed the current goal, fetch top-k from episodic and semantic stores, then de-duplicate.

- Hybrid graph + vector: expand the query along graph neighbors (1–2 hops), convert those nodes/edges to text snippets, and re-rank with embeddings.

- Constraint-aware recall: enforce policy filters (privacy/tenancy) before ranking.

A practical approach is “retrieve broadly, re-rank narrowly,” guided by task and token budget.
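Several of these patterns compose naturally. The sketch below applies the tenancy filter and the time window first and only then ranks by cosine similarity, so restricted or stale episodes never reach the ranker. The record layout (`tenant_id`, `timestamp`, `vector`) and the function name `recall` are assumptions for this example.

```python
import math
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def recall(episodes: List[Dict], query_vec: Sequence[float], tenant_id: str,
           now: datetime, window_days: int = 30, k: int = 3) -> List[Dict]:
    cutoff = now - timedelta(days=window_days)
    # 1) Policy and time filters run before any ranking.
    allowed = [
        e for e in episodes
        if e["tenant_id"] == tenant_id and e["timestamp"] >= cutoff
    ]
    # 2) Rank the survivors by similarity to the query.
    ranked = sorted(allowed, key=lambda e: cosine(e["vector"], query_vec), reverse=True)
    return ranked[:k]


now = datetime(2025, 1, 31, tzinfo=timezone.utc)
episodes = [
    {"id": "a", "tenant_id": "t1", "timestamp": now - timedelta(days=2), "vector": [1.0, 0.0]},
    {"id": "b", "tenant_id": "t1", "timestamp": now - timedelta(days=90), "vector": [1.0, 0.0]},
    {"id": "c", "tenant_id": "t2", "timestamp": now - timedelta(days=1), "vector": [1.0, 0.0]},
    {"id": "d", "tenant_id": "t1", "timestamp": now - timedelta(days=5), "vector": [0.0, 1.0]},
]
hits = recall(episodes, [0.9, 0.1], "t1", now)
print([e["id"] for e in hits])  # ['a', 'd']
```

Episode `b` is dropped for age and `c` for tenancy, even though both match the query as well as `a` does.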

Consolidation: from episodes to knowledge

You need scheduled jobs that distill specific episodes into durable knowledge:

- Candidate selection: score candidates on recency, frequency, novelty, and utility (see the weighted composite under "Scoring and triage for consolidation").

- Summarization: produce concise, verifiable statements with citations back to episodes.

- Conflict resolution: if new knowledge contradicts old, mark the old fact as superseded rather than deleting it.

- Curriculum replay: periodically resurface high-utility episodes to keep them salient.

- Forgetting: apply TTL or compression to low-value logs while preserving aggregates and key summaries.
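The conflict-resolution rule (supersede, never delete) can be sketched as an append-only fact log. This is one possible shape, not a prescribed design: `FactLog` and `Fact` are hypothetical names, and the sketch assumes each (subject, predicate) pair holds a single value at a time.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Fact:
    subject: str
    predicate: str
    value: str
    source_episode: str                  # citation back to the episode
    superseded_by: Optional[int] = None  # position of the newer fact in the log


class FactLog:
    """Append-only log: old facts are marked superseded, never removed."""

    def __init__(self) -> None:
        self.facts: List[Fact] = []

    def assert_fact(self, fact: Fact) -> int:
        new_idx = len(self.facts)
        for old in self.facts:
            same_slot = (old.subject, old.predicate) == (fact.subject, fact.predicate)
            if same_slot and old.superseded_by is None and old.value != fact.value:
                old.superseded_by = new_idx  # keep the old fact for audit
        self.facts.append(fact)
        return new_idx

    def current(self, subject: str, predicate: str) -> Optional[Fact]:
        """Most recent fact for this slot that has not been superseded."""
        for f in reversed(self.facts):
            if (f.subject, f.predicate) == (subject, predicate) and f.superseded_by is None:
                return f
        return None


log = FactLog()
log.assert_fact(Fact("billing-api", "version", "v1", source_episode="ep-3"))
log.assert_fact(Fact("billing-api", "version", "v2", source_episode="ep-9"))
latest = log.current("billing-api", "version")
```

Because the `v1` fact stays in the log with a pointer to its replacement, an auditor can still answer "what did the agent believe at the time of episode ep-3?"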

Storage technologies

- Vector search (the FAISS library, Milvus, the pgvector extension for Postgres): fast similarity search for episodic notes and textual graph payloads.

- Document stores (Postgres/JSONB, MongoDB): flexible, transactional episode storage with metadata.

- Time-series DBs (TimescaleDB, InfluxDB): a fit for sensor- and metrics-heavy agents.

- Graph databases (Neo4j, Neptune, Memgraph): semantic store for entities/relations and provenance.

Often the winning architecture is polyglot: document store + vector index + graph DB, with a memory service orchestrating them.

Packing memory into model context

Context is scarce. Prioritize by:

- Relevance: embedding similarity to the goal.

- Diversity: minimize redundancy; cluster and pick representatives.

- Recency and authority: prefer fresh or canonical sources.

- Format: provide tight, labeled snippets and structured key-value facts.

Template suggestion:

- Brief task recap

- Top-k episodic snippets (oldest → newest) with timestamps

- Semantic facts (entity cards and relations)

- Open questions or missing data slots
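One way to render that template is a plain string builder, as in this sketch. The section labels and the `build_context` name are illustrative choices, and the sort assumes every timestamp is an ISO 8601 string with the same UTC offset, so that string order matches time order.

```python
from typing import Dict, List


def build_context(task: str, episodes: List[Dict], facts: List[str],
                  open_questions: List[str]) -> str:
    # Oldest first, so the model reads history in the order it happened.
    ordered = sorted(episodes, key=lambda e: e["timestamp"])
    lines = ["Task:", task, "", "History (oldest first):"]
    lines += [f"- [{e['timestamp']}] {e['text']}" for e in ordered]
    lines += ["", "Known facts:"]
    lines += [f"- {fact}" for fact in facts]
    lines += ["", "Open questions:"]
    lines += [f"- {q}" for q in open_questions]
    return "\n".join(lines)


ctx = build_context(
    task="Help the user rotate their API key.",
    episodes=[
        {"timestamp": "2025-03-02T10:00:00+00:00", "text": "User confirmed the new key works."},
        {"timestamp": "2025-03-01T09:30:00+00:00", "text": "User reported a leaked API key."},
    ],
    facts=["API key rotation requires the owner role."],
    open_questions=["Has the old key been revoked?"],
)
print(ctx)
```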

Example: a minimal memory service

```python
import uuid
from datetime import datetime, timezone
from typing import Callable, Dict, List


class MemoryService:
    """Thin orchestration layer over an episode store, a vector index, and a graph."""

    def __init__(self, episodic_store, vector_idx, graph_store, embedder,
                 reranker: Callable[[List[str], str], List[str]]):
        self.ep_store = episodic_store
        self.vidx = vector_idx
        self.graph = graph_store
        self.embed = embedder
        # reranker(snippets, query) returns the snippets ordered best-first,
        # e.g. a cross-encoder or a cosine-similarity sort.
        self.rerank = reranker

    def write_episode(self, user_id: str, text: str, meta: Dict) -> str:
        vec = self.embed(text)
        ep = {
            # Fall back to a fresh UUID so the vector index never gets a None key.
            "id": meta.get("id") or str(uuid.uuid4()),
            "user_id": user_id,
            # Timezone-aware UTC timestamp in ISO 8601.
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "text": text,
            "meta": meta,
            "vector": vec,
        }
        self.ep_store.insert(ep)
        self.vidx.add(ep["id"], vec)
        return ep["id"]

    def retrieve(self, query: str, user_id: str, k: int = 8) -> List[str]:
        qvec = self.embed(query)

        # 1) episodic candidates by similarity; load() scopes them to this user
        cand_ids = self.vidx.search(qvec, topk=32)
        episodes = self.ep_store.load(cand_ids, user_id=user_id)

        # 2) semantic expansion via graph neighbors
        entities = self.graph.match_entities(query, topk=10)
        neighbors = self.graph.expand(entities, hops=1)
        facts = self.graph.to_snippets(entities + neighbors)

        # 3) re-rank everything by cross-encoder or cosine
        combined = self.rerank([e["text"] for e in episodes] + facts, query)
        return combined[:k]
```

This service:

- Writes episodes with embeddings and metadata.

- Retrieves via hybrid vector + graph expansion.

- Defers to a re-ranker before packaging context.

Scoring and triage for consolidation

Use a composite score to decide what to summarize or promote to semantic memory:

score = α·recency + β·frequency + γ·novelty + δ·task_utility − λ·redundancy

Where:

- Recency: exponential decay over days since last seen.

- Frequency: count of references/queries touching the episode.

- Novelty: embedding distance from cluster centroids.

- Task utility: improvement in success metrics when included.

- Redundancy: similarity to existing semantic facts.
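A direct translation of the formula might look like this. The equal default weights, the 14-day half-life, and the `log1p` damping on frequency are assumptions picked for the sketch, not recommended values; in practice the weights would be tuned against downstream task success.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Weights:
    alpha: float = 1.0   # recency
    beta: float = 1.0    # frequency
    gamma: float = 1.0   # novelty
    delta: float = 1.0   # task utility
    lam: float = 1.0     # redundancy penalty


def recency(days_since_seen: float, half_life_days: float = 14.0) -> float:
    """Exponential decay: 1.0 when just seen, 0.5 after one half-life."""
    return 0.5 ** (days_since_seen / half_life_days)


def consolidation_score(days_since_seen: float, frequency: int, novelty: float,
                        task_utility: float, redundancy: float,
                        w: Weights = Weights()) -> float:
    return (
        w.alpha * recency(days_since_seen)
        + w.beta * math.log1p(frequency)   # damp raw counts (an assumption)
        + w.gamma * novelty
        + w.delta * task_utility
        - w.lam * redundancy
    )


fresh = consolidation_score(0, frequency=3, novelty=0.5, task_utility=0.2, redundancy=0.1)
stale = consolidation_score(60, frequency=3, novelty=0.5, task_utility=0.2, redundancy=0.1)
```

With everything else equal, the fresh episode outscores the stale one, and raising `lam` pushes near-duplicates of existing semantic facts down the queue.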

Evaluation methodology

Treat memory as a product surface with measurable impact.

- Retrieval precision@k: fraction of the top-k retrieved snippets judged relevant by humans.

- Answer correctness: task success with vs. without memory.

- Knowledge update latency: time from new fact arrival to being used correctly.

- Hallucination rate: proportion of claims without provenance when memory exists.

- Lifespan curves: retention vs. time after consolidation/forgetting policies.

- Cost/latency: end-to-end time and token consumption per action.
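The first two metrics are simple to compute once labels exist. In this sketch, `precision_at_k` divides by k (the usual convention, so a short result list is penalized), and `memory_lift` is a hypothetical helper name for the with-versus-without comparison over per-task pass/fail outcomes.

```python
from typing import Sequence, Set


def precision_at_k(retrieved: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the top-k retrieved ids that were judged relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    hits = sum(1 for item in retrieved[:k] if item in relevant)
    return hits / k


def memory_lift(with_memory: Sequence[bool], without_memory: Sequence[bool]) -> float:
    """Difference in task success rate between runs with and without memory."""
    rate_with = sum(with_memory) / len(with_memory)
    rate_without = sum(without_memory) / len(without_memory)
    return rate_with - rate_without


p = precision_at_k(["e1", "e7", "e3", "e9"], {"e1", "e3", "e5"}, k=4)
lift = memory_lift([True, True, False, True], [True, False, False, True])
print(p, lift)  # 0.5 0.25
```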

Design offline testbeds:

- Synthetic timelines with planted facts and distractors.

- Mutation tests where facts change (e.g., version updates) to assess supersession.

- Real traffic replays with privacy-safe sampling.

Governance, privacy, and safety

Memory amplifies risk as well as capability.

- Data minimization: store only what’s needed; prefer references to copies.

- Access control: tenant- and user-scoped retrieval; encrypt at rest and in transit.

- PII handling: redact, hash, or tokenize; maintain reversible vaults only when justified.

- Provenance: attach source URIs, timestamps, and signatures to semantic facts.

- Right-to-be-forgotten: design delete paths that cascade through summaries and embeddings.

- Policy-aware retrieval: filter before ranking to avoid leaking restricted data.
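A delete path that cascades could be sketched as follows. The three dictionaries stand in for the episode store, the vector index, and the summary store, and `forget_user` is a hypothetical method name. Dropping every summary that cites a deleted episode is the conservative choice made here; regenerating it from the remaining sources is an alternative.

```python
from typing import Dict, List


class MemoryIndex:
    """Toy stores keyed by id, to show how a delete has to cascade."""

    def __init__(self) -> None:
        self.episodes: Dict[str, Dict] = {}      # episode id -> {"user_id", "text"}
        self.vectors: Dict[str, List[float]] = {}  # episode id -> embedding
        self.summaries: Dict[str, Dict] = {}     # summary id -> {"text", "sources": set}

    def forget_user(self, user_id: str) -> Dict[str, List[str]]:
        doomed = {eid for eid, ep in self.episodes.items() if ep["user_id"] == user_id}
        for eid in doomed:
            del self.episodes[eid]
            self.vectors.pop(eid, None)  # embeddings can leak content too
        # Any summary derived from a deleted episode is removed as well.
        stale = [sid for sid, s in self.summaries.items() if s["sources"] & doomed]
        for sid in stale:
            del self.summaries[sid]
        return {"episodes": sorted(doomed), "summaries": sorted(stale)}


idx = MemoryIndex()
idx.episodes = {
    "e1": {"user_id": "u1", "text": "u1 shared a phone number"},
    "e2": {"user_id": "u2", "text": "u2 asked about pricing"},
}
idx.vectors = {"e1": [0.1, 0.2], "e2": [0.3, 0.4]}
idx.summaries = {
    "s1": {"text": "contact details for u1", "sources": {"e1"}},
    "s2": {"text": "pricing questions", "sources": {"e2"}},
}
report = idx.forget_user("u1")
```

Returning a report of what was removed gives the caller something to log as evidence that the request was honored.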

Common pitfalls

- Overlong episodes: failing to chunk and summarize leads to slow, noisy retrieval.

- Single-index thinking: relying only on vectors or only on graphs underperforms hybrid approaches.

- Unbounded growth: no TTL or consolidation strategy bloats cost and dilutes relevance.

- Stale knowledge: missing supersession/versioning produces contradictions.

- Evaluation blind spots: measuring only top-k similarity, not downstream task success.

Design patterns that work

- Dual-write with provenance: every episodic capture logs source and hash; semantic promotion references episode IDs.

- Graph-first canonicalization: entity resolution happens once; everything downstream uses canonical IDs.

- Memory middle layer: a stateless API that abstracts multiple backends and enforces policy.

- Active rehearsal: schedule periodic “memory refresh” prompts that re-summarize critical domains using latest episodes.

- Contrastive consolidation: when two episodes conflict, promote both with context and create an explicit contradicts edge pending adjudication.

Scaling considerations

- Index sharding: partition episodic vectors by tenant or time; maintain a small hot index for recent activity and a cold archive.

- Learned re-ranking: cross-encoders or lightweight rerankers typically improve precision over pure ANN search, at the cost of extra latency per candidate.

- Token-aware packaging: estimate tokens per snippet and fit to budget via greedy selection with diversity penalty.

- Caching: memoize retrieval results for repeated or multi-step plans; invalidate on new writes.
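The token-aware packaging idea can be sketched as a greedy loop: at each step, pick the snippet with the best relevance minus a penalty for resembling something already chosen, as long as it still fits the budget. The four-characters-per-token estimate, the `diversity_weight` default, and the snippet layout are assumptions for illustration; a real system would call its model's tokenizer.

```python
import math
from typing import Dict, List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def estimate_tokens(text: str) -> int:
    # Crude heuristic (about four characters per token).
    return max(1, len(text) // 4)


def pack(snippets: List[Dict], budget: int, diversity_weight: float = 0.5) -> List[Dict]:
    chosen: List[Dict] = []
    remaining = list(snippets)
    used = 0
    while remaining:
        fitting = [s for s in remaining if used + estimate_tokens(s["text"]) <= budget]
        if not fitting:
            break

        def gain(s: Dict) -> float:
            # Penalize similarity to the closest snippet already selected.
            overlap = max((cosine(s["vector"], c["vector"]) for c in chosen), default=0.0)
            return s["relevance"] - diversity_weight * overlap

        best = max(fitting, key=gain)
        chosen.append(best)
        used += estimate_tokens(best["text"])
        remaining.remove(best)
    return chosen


snippets = [
    {"id": "a", "text": "User asked to rotate the API key on Monday.", "relevance": 0.9, "vector": [1.0, 0.0]},
    {"id": "b", "text": "User asked again to rotate the API key.", "relevance": 0.85, "vector": [1.0, 0.0]},
    {"id": "c", "text": "Billing plan was upgraded to Pro in March.", "relevance": 0.6, "vector": [0.0, 1.0]},
]
picked = pack(snippets, budget=25)
print([s["id"] for s in picked])  # ['a', 'c']
```

Snippet `b` scores higher than `c` on raw relevance, but it nearly duplicates `a`, so the diversity penalty lets `c` take the remaining budget.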

Where this is heading

- Memory-graph hybrids (Vector+Knowledge Graph): unified nodes with both symbolic attributes and dense vectors.

- Long-context models: reduce retrieval pressure but increase the need for structured packaging and citation.

- Event-sourced agents: deterministic replay of decisions for auditing and improvement.

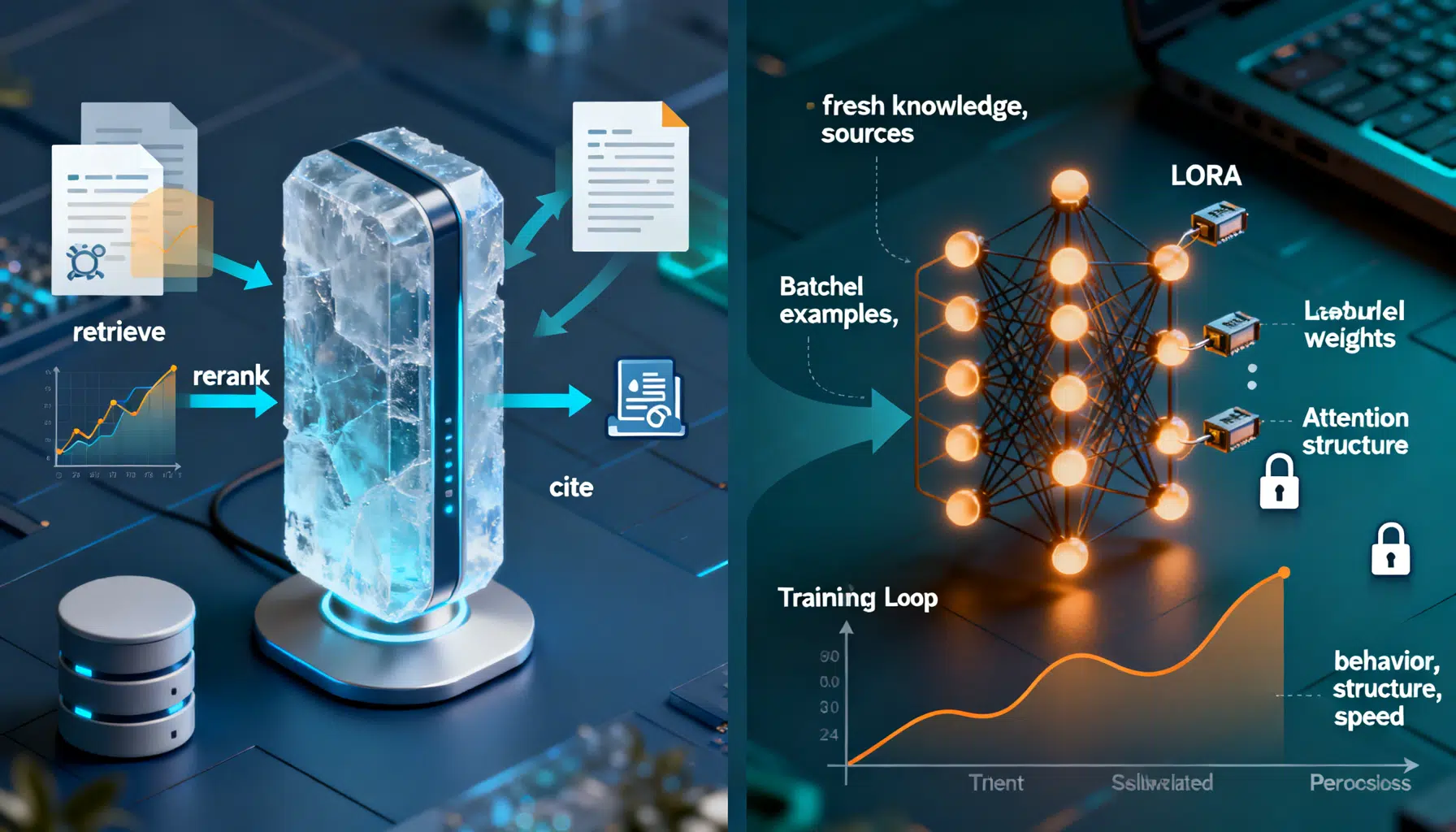

- Continual learning loops: fine-tuned adapters that absorb stable semantic knowledge from consolidated facts.

A practical blueprint

- Start small: document store + pgvector for episodes; add a graph later for high-value domains.

- Define schemas and governance on day one; version facts and keep provenance.

- Implement hybrid retrieval with re-ranking; measure P@k and downstream success.

- Add consolidation jobs with clear triage scores; monitor knowledge update latency.

- Optimize for cost/latency only after you’re confidently improving task outcomes.

Conclusion

Episodic and semantic memory aren’t competing strategies; they are complementary layers that let agents remember experiences and generalize from them. Get the schemas right, combine vector and graph retrieval, consolidate relentlessly, and measure what matters. The result is an agent that not only answers but also learns, adapts, and improves with every interaction.
