Claude API Tool Use: The Complete, Practical Tutorial

A practical, end-to-end tutorial for Claude API tool use: define tools, run the tool loop, add web search/fetch, and productionize with SDK patterns.

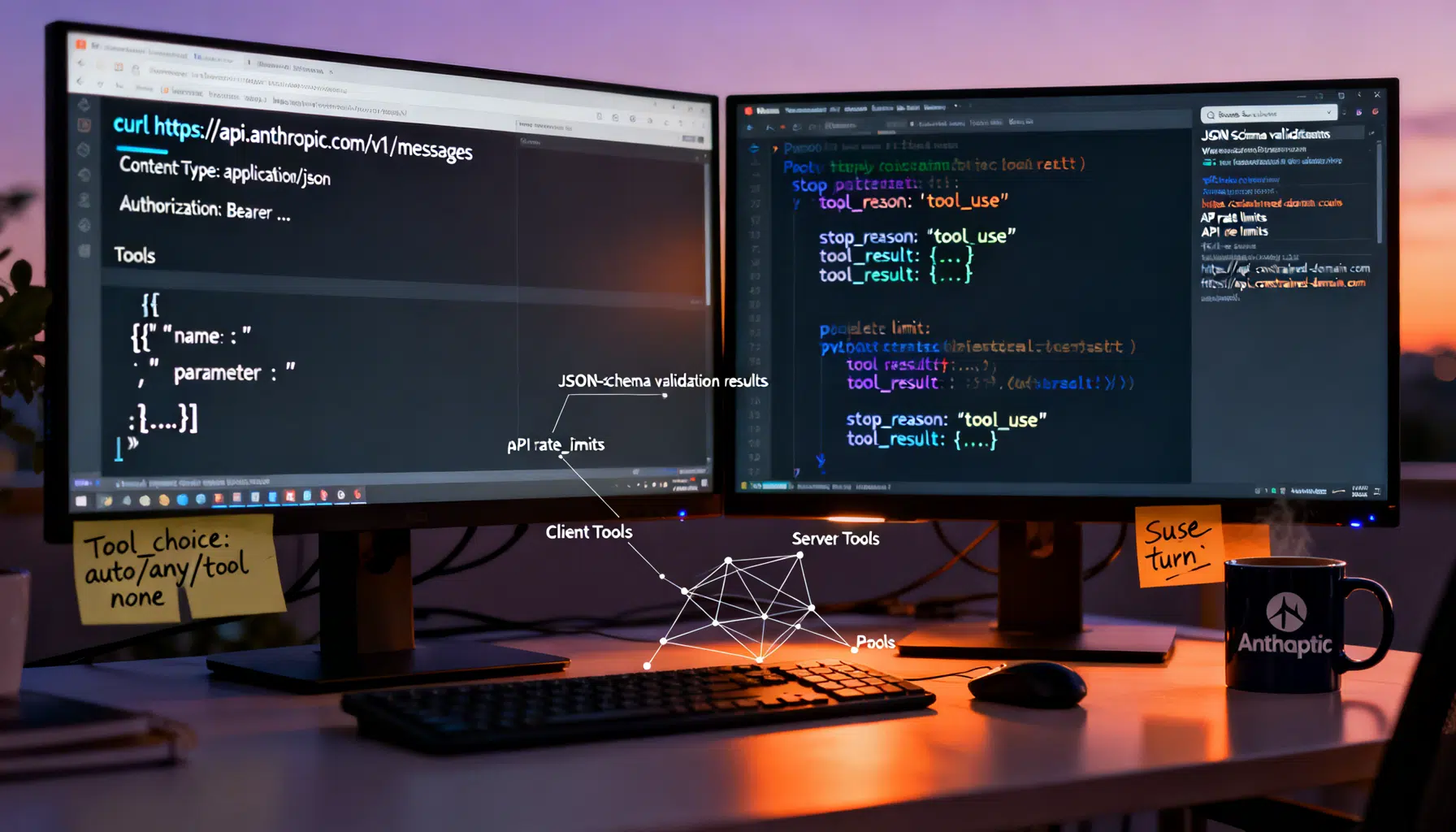

Image used for representation purposes only.

Overview

Claude’s tool use (also called function calling) lets you extend a model with real capabilities: call your own APIs, run code, search the web, or connect to Model Context Protocol (MCP) tools, all from the Messages API. In this tutorial, you’ll learn the end-to-end flow for client tools that you execute on your own servers, how to use Anthropic’s server tools such as web search and web fetch, and practical patterns for reliability, cost control, and safety. (docs.anthropic.com)

What you’ll build

- Define a JSON‑Schema tool Claude can call

- Run a minimal “tool loop” in Python/TypeScript

- Handle multiple/parallel tool calls and tool_choice

- Add server tools (web search/fetch) and handle pause_turn

- Adopt SDK conveniences like the tool runner and MCP integration

Prerequisites

- Anthropic API key set as ANTHROPIC_API_KEY

- Familiarity with Messages API basics; every request includes the header anthropic-version: 2023-06-01 (see the curl example below) (docs.anthropic.com)

Pick the right model for tools

Use the latest Claude Opus (4.x series) for complex/ambiguous multi‑tool tasks; Haiku for lightweight, straightforward tools where cost/latency matter most. Opus handles multiple tools better and asks clarifying questions when needed. (docs.anthropic.com )

The shape of a tool

Each tool you expose lives in the top‑level tools array and includes:

- name: 1–64 characters drawn from letters, digits, underscores, and hyphens (^[a-zA-Z0-9_-]{1,64}$)

- description: when/how to use the tool, caveats, fields

- input_schema: JSON Schema for arguments

- input_examples (optional): valid examples to teach formats for tricky inputs

Longer, more specific descriptions materially improve tool selection and parameter quality. Add input_examples only when schemas are complex, and make sure every example validates against your schema, because an invalid example causes the request to fail with a 400 error. (docs.anthropic.com)
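As a minimal sketch of the fields listed above, the definition below expands the weather tool with a longer description and one input example. The helper `check_tool_definition` is a hypothetical name, not part of any SDK, and it performs only a shallow local check (name pattern, presence of a description, required keys in examples). It is not a full JSON Schema validator, and the API remains the authority on what it accepts.

```python
import re

# Name rule from the list above: 1-64 characters from letters, digits, "_" and "-".
NAME_PATTERN = re.compile(r"^[a-zA-Z0-9_-]{1,64}$")

get_weather_tool = {
    "name": "get_weather",
    "description": (
        "Return the current weather for a location. Use this when the user asks "
        "about present conditions, not forecasts. The location should be a city "
        "and state, for example 'San Francisco, CA'."
    ),
    "input_schema": {
        "type": "object",
        "properties": {
            "location": {"type": "string", "description": "City and state, e.g. 'Austin, TX'"},
        },
        "required": ["location"],
    },
    "input_examples": [{"location": "San Francisco, CA"}],
}


def check_tool_definition(tool: dict) -> list[str]:
    """Return problems found by a shallow local check (hypothetical helper)."""
    problems = []
    if not NAME_PATTERN.match(tool.get("name", "")):
        problems.append("name must match ^[a-zA-Z0-9_-]{1,64}$")
    if not tool.get("description"):
        problems.append("description is missing")
    schema = tool.get("input_schema", {})
    if schema.get("type") != "object":
        problems.append("input_schema.type should be 'object'")
    required = schema.get("required", [])
    for i, example in enumerate(tool.get("input_examples", [])):
        missing = [key for key in required if key not in example]
        if missing:
            problems.append(f"input_examples[{i}] is missing required keys: {missing}")
    return problems


print(check_tool_definition(get_weather_tool))
```

Running a check like this in a unit test can catch a malformed definition before it reaches the API, though it will not catch type mismatches inside an example.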

Minimal curl example

    curl https://api.anthropic.com/v1/messages \
      -H 'content-type: application/json' \
      -H "x-api-key: $ANTHROPIC_API_KEY" \
      -H 'anthropic-version: 2023-06-01' \
      -d '{
        "model": "claude-opus-4-6",
        "max_tokens": 1024,
        "tools": [
          {
            "name": "get_weather",
            "description": "Return the current weather for a city, state.",
            "input_schema": {
              "type": "object",
              "properties": {"location": {"type": "string"}},
              "required": ["location"]
            }
          }
        ],
        "messages": [
          {"role": "user", "content": "What is the weather in San Francisco?"}
        ]
      }'

Claude replies with either a normal answer, or a tool_use decision you must fulfill. (docs.anthropic.com )

Building the client‑side tool loop

Claude’s response is a list of content blocks. When it wants you to run a client tool, stop_reason == "tool_use" and the content contains one or more tool_use blocks, each with an id, name, and input. You execute the matching function and reply with a new user message whose content begins with tool_result blocks (no text before them). That tool_result message must immediately follow the assistant’s tool_use message in the transcript. Violating either rule produces a 400 error. (docs.anthropic.com)

Python (manual loop)

    import anthropic

    # Reads ANTHROPIC_API_KEY from the environment
    client = anthropic.Anthropic()

    messages = [
        {"role": "user", "content": "Add two numbers using the adder tool: 41 and 1"}
    ]

    tools = [
        {
            "name": "adder",
            "description": "Add two integers and return their sum as a string.",
            "input_schema": {
                "type": "object",
                "properties": {"a": {"type": "integer"}, "b": {"type": "integer"}},
                "required": ["a", "b"]
            }
        }
    ]

    while True:
        resp = client.messages.create(
            model="claude-opus-4-6",
            max_tokens=256,
            tools=tools,
            messages=messages,
        )

        # If Claude asked to use a tool, run it and return tool_result(s)
        if resp.stop_reason == "tool_use":
            tool_results = []
            for block in resp.content:
                if block.type != "tool_use":
                    continue  # skip text blocks that accompany the tool call
                if block.name == "adder":
                    result = str(int(block.input["a"]) + int(block.input["b"]))
                    tool_results.append({
                        "type": "tool_result",
                        "tool_use_id": block.id,
                        "content": result
                    })
                else:
                    # Every tool_use needs a matching tool_result, even for unexpected tools
                    tool_results.append({
                        "type": "tool_result",
                        "tool_use_id": block.id,
                        "content": f"Unknown tool: {block.name}",
                        "is_error": True
                    })

            # Append assistant turn, then user tool_result turn (tool_result blocks first)
            messages.append({"role": "assistant", "content": resp.content})
            messages.append({"role": "user", "content": tool_results})
            continue

        # Otherwise Claude is done: print every text block, not only the first
        print("".join(block.text for block in resp.content if block.type == "text"))
        break

Key rules this enforces: tool_result must come first in the user content array and must immediately follow the assistant’s tool_use turn; ids map results to calls. (docs.anthropic.com )
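The loop above hard-codes a single tool. One way to generalize it to multiple or parallel calls is a name-to-function registry that answers every tool_use block in a single user message. The sketch below assumes content blocks are plain dicts (SDK response objects expose the same fields as attributes), and `TOOL_HANDLERS` and `build_tool_results` are illustrative names, not SDK APIs. Failures are reported back through the tool_result field is_error instead of raising.

```python
from typing import Any, Callable

# Hypothetical registry mapping tool names to local Python functions.
TOOL_HANDLERS: dict[str, Callable[..., Any]] = {
    "adder": lambda a, b: a + b,
}


def build_tool_results(content_blocks: list[dict]) -> list[dict]:
    """Build one tool_result per tool_use block, preserving order."""
    results = []
    for block in content_blocks:
        if block.get("type") != "tool_use":
            continue  # skip text and other block types
        handler = TOOL_HANDLERS.get(block["name"])
        try:
            if handler is None:
                raise ValueError(f"unknown tool: {block['name']}")
            output, failed = str(handler(**block["input"])), False
        except Exception as exc:  # report the failure to the model instead of crashing
            output, failed = f"{type(exc).__name__}: {exc}", True
        result = {"type": "tool_result", "tool_use_id": block["id"], "content": output}
        if failed:
            result["is_error"] = True
        results.append(result)
    return results


# Simulated assistant turn with two parallel calls and one unknown tool.
assistant_content = [
    {"type": "text", "text": "Let me compute that."},
    {"type": "tool_use", "id": "toolu_01", "name": "adder", "input": {"a": 41, "b": 1}},
    {"type": "tool_use", "id": "toolu_02", "name": "adder", "input": {"a": 2, "b": 3}},
    {"type": "tool_use", "id": "toolu_03", "name": "missing", "input": {}},
]
for item in build_tool_results(assistant_content):
    print(item)
```

The returned list can be sent as the content of the next user message, which keeps all results for one assistant turn together and in first position.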

TypeScript (manual loop)

    import Anthropic from "@anthropic-ai/sdk";

    // Placeholder for your own data access code
    declare function dbFindByEmail(email: string): Promise<unknown>;

    // Reads ANTHROPIC_API_KEY from the environment
    const client = new Anthropic();

    const tools: Anthropic.Tool[] = [
      {
        name: "lookup_user",
        description: "Lookup a user by email and return id and plan.",
        input_schema: {
          type: "object",
          properties: { email: { type: "string" } },
          required: ["email"],
        },
      },
    ];

    const messages: Anthropic.Messages.MessageParam[] = [
      { role: "user", content: "What plan is user alice@example.com on? Use tools." },
    ];

    while (true) {
      const resp = await client.messages.create({
        model: "claude-opus-4-6",
        max_tokens: 256,
        tools,
        messages,
      });

      if (resp.stop_reason === "tool_use") {
        const toolResults: Anthropic.Messages.ToolResultBlockParam[] = [];
        for (const block of resp.content) {
          if (block.type === "tool_use" && block.name === "lookup_user") {
            const { email } = block.input as { email: string };
            const record = await dbFindByEmail(email); // your code
            toolResults.push({ type: "tool_result", tool_use_id: block.id, content: JSON.stringify(record) });
          }
        }
        // Assistant turn first, then the user turn carrying the tool_result blocks
        messages.push({ role: "assistant", content: resp.content });
        messages.push({ role: "user", content: toolResults });
        continue;
      }

      // Content blocks are a union type, so narrow to text blocks before reading .text
      const text = resp.content
        .filter((block): block is Anthropic.TextBlock => block.type === "text")
        .map((block) => block.text)
        .join("");
      console.log(text);
      break;
    }

Controlling tool behavior with tool_choice and parallelism

The tool_choice field supports four modes:

- auto: model decides whether to call tools (default when tools are present)

- any: must use one of the provided tools

- tool: force a specific tool by name

- none: forbid tool use (default when tools are absent)

You can also disable parallel tool use by setting disable_parallel_tool_use: true within tool_choice. (docs.anthropic.com )
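To make the four modes concrete, here is a small sketch that builds the tool_choice value for each one. The helper name `build_tool_choice` is hypothetical; the dict shapes follow the modes described above, and skipping the parallelism flag for "none" is a choice made for this example.

```python
from typing import Optional


def build_tool_choice(mode: str, name: Optional[str] = None,
                      disable_parallel: bool = False) -> dict:
    """Build a tool_choice value for one of the four modes (hypothetical helper)."""
    if mode not in {"auto", "any", "tool", "none"}:
        raise ValueError(f"unsupported tool_choice mode: {mode}")
    choice = {"type": mode}
    if mode == "tool":
        if not name:
            raise ValueError("mode 'tool' requires the name of the tool to force")
        choice["name"] = name
    # The parallelism flag is only attached when tools can be called at all.
    if disable_parallel and mode != "none":
        choice["disable_parallel_tool_use"] = True
    return choice


print(build_tool_choice("auto"))
print(build_tool_choice("tool", name="get_weather"))
print(build_tool_choice("any", disable_parallel=True))
```

The result is passed as the tool_choice argument of client.messages.create alongside tools.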

Server tools: web search and web fetch

Server tools execute on Anthropic’s side within a sampling loop. You include them in tools; Claude may call them, and the results arrive already incorporated into the model’s reply. If the loop hits its iteration cap, the response ends with stop_reason == "pause_turn"; append that response to the conversation as an assistant message and send the request again so Claude can continue. (docs.anthropic.com)

- Web search: type "web_search_20250305"; supports domain filters and usage caps; pricing is per search in addition to tokens. (docs.anthropic.com)

- Web fetch: fetch and analyze specific URLs; you can constrain allowed_domains; streaming emits web_fetch_tool_result blocks. (docs.claude.com )

Tip: When enabling server tools, design prompts that ask Claude to cite sources and to prefer high‑quality domains; this improves grounding and downstream trust.
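The pause_turn handling described in this section can be sketched as a small continuation loop. This version assumes responses are plain dicts and takes the API call as an injected function, so it runs without network access. `run_until_not_paused` and `fake_create` are illustrative names, and in a real application `create` would wrap client.messages.create with your model, tools, and max_tokens.

```python
from typing import Callable


def run_until_not_paused(create: Callable[[list], dict], messages: list,
                         max_continues: int = 5) -> dict:
    """Call `create` again while the response stops with pause_turn."""
    response = create(messages)
    for _ in range(max_continues):
        if response["stop_reason"] != "pause_turn":
            break
        # Pass the paused assistant turn back unchanged so the model can continue.
        messages.append({"role": "assistant", "content": response["content"]})
        response = create(messages)
    return response


# Stand-in for the API: pauses once, then finishes. Purely for illustration.
def fake_create(messages: list) -> dict:
    paused_before = any(m["role"] == "assistant" for m in messages)
    if paused_before:
        return {"stop_reason": "end_turn", "content": [{"type": "text", "text": "Done."}]}
    return {"stop_reason": "pause_turn", "content": [{"type": "text", "text": "Searching"}]}


history = [{"role": "user", "content": "Summarize recent changes to our return policy."}]
final = run_until_not_paused(fake_create, history)
print(final["stop_reason"], len(history))
```

The cap on continuations is a defensive choice for this sketch, so that a misbehaving loop cannot spend tokens indefinitely.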

Faster starts with the SDK tool runner (beta)

Anthropic SDKs provide a tool runner that automatically executes tools, manages the message loop, and adds type validation and optional compaction. It’s available in Python, TypeScript, and Ruby and can stream fine‑grained tool parameters. Use it for most app code; drop to manual control for custom orchestration. (docs.anthropic.com )

MCP tools and connectors

If you already expose tools via the Model Context Protocol, you can pass MCP tool definitions directly by renaming inputSchema to input_schema, or use Anthropic’s MCP connector to call remote MCP servers from the Messages API without writing a client. (docs.anthropic.com )
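The renaming step mentioned above is small enough to show in full. This sketch assumes an MCP tool definition is available as a dict with name, description, and inputSchema keys. The function name and the example tool are made up for illustration.

```python
def mcp_tool_to_claude_tool(mcp_tool: dict) -> dict:
    """Convert one MCP tool definition to the Messages API tool shape."""
    return {
        "name": mcp_tool["name"],
        "description": mcp_tool.get("description", ""),
        "input_schema": mcp_tool["inputSchema"],  # the only key that is renamed
    }


# Example MCP-style definition; the tool itself is made up for illustration.
mcp_tool = {
    "name": "list_tickets",
    "description": "List open support tickets for a customer id.",
    "inputSchema": {
        "type": "object",
        "properties": {"customer_id": {"type": "string"}},
        "required": ["customer_id"],
    },
}

claude_tool = mcp_tool_to_claude_tool(mcp_tool)
print(sorted(claude_tool))
```

Copying only the three known keys, instead of the whole dict, keeps MCP-specific fields out of the request.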

Reliability patterns and common pitfalls

- Keep tool descriptions long and explicit: what it does, when to use it, parameter meanings, constraints, and what it does NOT return. Add examples only for tricky formats. (docs.anthropic.com )

- Return only high‑signal fields from your tools (stable IDs, minimal payloads) to reduce token waste and parsing errors. (docs.anthropic.com )

- Use strict tool use (part of Structured Outputs): add strict: true to a tool definition to guarantee schema-conformant inputs. (docs.anthropic.com)

- Respect ordering: the user tool_result turn must immediately follow the assistant tool_use turn, and tool_result blocks must precede any text in that turn, or the API returns 400. (docs.anthropic.com )

- Unique tool_use ids: each tool_use id maps to one tool_result; duplicate or reused ids produce 400s in practice—log request_id and errors. (reddit.com )

- Pause turns: when server tools return pause_turn, simply continue the conversation by passing back the response so Claude can finish. (docs.anthropic.com )
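As one illustration of the "high-signal fields" advice in the list above, the sketch below trims a record to a whitelist before serializing it into a tool_result. The helper `compact_tool_result` and the sample row are hypothetical. Which fields count as high-signal depends on your tool.

```python
import json


def compact_tool_result(tool_use_id: str, record: dict, keep: tuple) -> dict:
    """Build a tool_result that carries only the whitelisted fields of `record`."""
    trimmed = {key: record[key] for key in keep if key in record}
    return {
        "type": "tool_result",
        "tool_use_id": tool_use_id,
        # Compact separators avoid spending tokens on whitespace.
        "content": json.dumps(trimmed, separators=(",", ":")),
    }


# A made-up database row with fields the model does not need.
row = {
    "id": "usr_123",
    "plan": "pro",
    "email": "alice@example.com",
    "password_hash": "redacted",
    "internal_notes": "long free-form text",
}
result = compact_tool_result("toolu_01", row, keep=("id", "plan"))
print(result["content"])  # {"id":"usr_123","plan":"pro"}
```

A whitelist is used here instead of a blacklist so that newly added columns stay out of the prompt by default.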

Cost control and caching

Tool use adds tokens for the tools array, tool_use, and tool_result blocks; server tools may add usage‑based fees (e.g., search). Use prompt caching for big tool surfaces and large tool_result payloads; as of May 1, 2025, cache_control must be set on the parent content block of tool_result/document.source. (docs.anthropic.com )
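For a large tools array, one common way to apply prompt caching is a cache_control breakpoint on the last tool definition. The sketch below shows only the shape of that change. `with_cached_tools` is a hypothetical helper, the two GitHub-style tools are placeholders, and caching takes effect only above a minimum prompt length, so check the prompt caching documentation for current limits.

```python
import copy


def with_cached_tools(tools: list) -> list:
    """Return a copy of `tools` with a cache breakpoint on the last definition."""
    cached = copy.deepcopy(tools)
    if cached:
        # A breakpoint on the final tool covers the tool definitions before it as well.
        cached[-1]["cache_control"] = {"type": "ephemeral"}
    return cached


tools = [
    {"name": "github_list_prs", "description": "List open pull requests.",
     "input_schema": {"type": "object", "properties": {}}},
    {"name": "github_get_pr", "description": "Fetch one pull request by number.",
     "input_schema": {"type": "object",
                      "properties": {"number": {"type": "integer"}},
                      "required": ["number"]}},
]
cached_tools = with_cached_tools(tools)
print([("cache_control" in t) for t in cached_tools])
```

Working on a deep copy leaves the original definitions untouched, which helps when the same list is reused for requests that should not be cached.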

Practical tips:

- Consolidate many tiny tools into a few multi‑action tools to shrink tool definitions

- Use meaningful namespacing (e.g., github_list_prs) to reduce selection ambiguity

- Cache repeated corpus fetches (e.g., search summaries) at tool_result boundaries

- Prefer Opus for large tool collections; consider Haiku for frequent, cheap lookups (docs.anthropic.com )

Advanced features you may want

- Fine‑grained tool streaming (beta): stream parameters before full JSON validation; enable via beta header noted in release notes. (docs.anthropic.com )

- Computer Use and Text Editor/Bash tools: the latest text editor and bash tool versions are decoupled from computer use, while the computer tool still requires a dated beta header. Review the release notes for exact header names and versions. (docs.anthropic.com)

- Interleaved/extended thinking with tools: see limits on tool_choice with extended thinking. (docs.anthropic.com )

End‑to‑end example: client tool + server search

This sketch shows a customer_support tool for order lookups, plus server web search for policy questions.

    import anthropic

    client = anthropic.Anthropic()

    tools = [
        {
            "name": "customer_support",
            "description": "Lookup an order by id and return status, total, and ETA. Use only when users ask about orders.",
            "input_schema": {
                "type": "object",
                "properties": {"order_id": {"type": "string"}},
                "required": ["order_id"]
            }
        },
        {"type": "web_search_20250305", "name": "web_search", "max_uses": 3}
    ]

    messages = [
        {"role": "user", "content": "Has our return policy changed this year? Also, where is order ORD-92?"}
    ]

    while True:
        resp = client.messages.create(model="claude-opus-4-6", max_tokens=512, tools=tools, messages=messages)

        if resp.stop_reason == "pause_turn":
            # Server tool loop paused: pass the assistant turn back so Claude can continue
            messages.append({"role": "assistant", "content": resp.content})
            continue

        if resp.stop_reason == "tool_use":
            results = []
            for b in resp.content:
                if b.type != "tool_use":
                    continue  # text and server tool blocks need no client-side result
                if b.name == "customer_support":
                    data = db_lookup(b.input["order_id"])  # your code
                    # tool_result content is a string (or a list of content blocks)
                    results.append({"type": "tool_result", "tool_use_id": b.id, "content": str(data)})
                else:
                    results.append({"type": "tool_result", "tool_use_id": b.id,
                                    "content": f"Unknown tool: {b.name}", "is_error": True})
            messages.append({"role": "assistant", "content": resp.content})
            messages.append({"role": "user", "content": results})
            continue

        # With web search the reply contains several block types; print the text ones
        print("".join(b.text for b in resp.content if b.type == "text"))
        break

Claude may use web_search to address the policy question and the client tool for the order. If the server tool loop pauses, forward the response to let it finish. (docs.anthropic.com )

Migration notes (OpenAI SDK compatibility)

If you have an OpenAI-style function-calling app, Anthropic publishes a compatibility layer so you can test Claude models with minimal code changes, including support for parallel_tool_calls. Validate behavior and safety before going to production. (docs.anthropic.com)

Troubleshooting checklist

- 400 errors after tool calls? Verify tool_result comes first in the user message, placed immediately after the tool_use turn, and that tool_use ids are unique. (docs.anthropic.com )

- Model never uses your tool? Strengthen the description, add input_examples, or force usage by setting tool_choice to {"type": "any"} (any tool) or {"type": "tool", "name": <tool name>} (one specific tool). (docs.anthropic.com)

- Web fetch/search limits? Validate allowed_domains for fetch and search usage caps/pricing. (docs.claude.com )
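When chasing the 400 errors from the first checklist item, it can help to inspect the transcript locally before sending it. The following is a rough sketch of such a check, assuming messages and content blocks are plain dicts. `find_ordering_problems` is a hypothetical debugging helper and covers only the ordering, pairing, and id-uniqueness rules described in this tutorial.

```python
def find_ordering_problems(messages: list) -> list:
    """Return human-readable problems with tool_use / tool_result ordering."""
    problems = []
    seen_ids = set()
    for i, message in enumerate(messages):
        content = message["content"]
        if message["role"] != "assistant" or isinstance(content, str):
            continue
        use_ids = [b["id"] for b in content if b.get("type") == "tool_use"]
        if not use_ids:
            continue
        for use_id in use_ids:
            if use_id in seen_ids:
                problems.append(f"message {i}: tool_use id {use_id} is reused")
            seen_ids.add(use_id)
        nxt = messages[i + 1] if i + 1 < len(messages) else None
        if nxt is None or nxt["role"] != "user" or isinstance(nxt["content"], str):
            problems.append(f"message {i}: tool_use is not followed by a tool_result turn")
            continue
        types = [b.get("type") for b in nxt["content"]]
        # Index of the first block that is not a tool_result (or the end of the list).
        first_other = next((k for k, t in enumerate(types) if t != "tool_result"), len(types))
        if "tool_result" in types[first_other:]:
            problems.append(f"message {i + 1}: tool_result blocks must come before other content")
        result_ids = {b["tool_use_id"] for b in nxt["content"] if b.get("type") == "tool_result"}
        for use_id in use_ids:
            if use_id not in result_ids:
                problems.append(f"message {i + 1}: no tool_result for {use_id}")
    return problems


good = [
    {"role": "user", "content": "Add 41 and 1"},
    {"role": "assistant", "content": [
        {"type": "tool_use", "id": "toolu_01", "name": "adder", "input": {"a": 41, "b": 1}}]},
    {"role": "user", "content": [
        {"type": "tool_result", "tool_use_id": "toolu_01", "content": "42"}]},
]
bad = [
    good[0],
    good[1],
    {"role": "user", "content": [
        {"type": "text", "text": "Here you go"},
        {"type": "tool_result", "tool_use_id": "toolu_01", "content": "42"}]},
]
print(find_ordering_problems(good))
print(find_ordering_problems(bad))
```

If your transcript stores SDK response objects instead of dicts, convert them first or adapt the field access accordingly.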

Final thoughts

Start simple: one or two well‑described tools, log every tool_use and tool_result, and add prompt caching as payloads grow. Once stable, layer on server tools and the SDK tool runner, then consider strict tools for guaranteed schemas. This keeps reliability high while you scale from a handful of tools to production‑grade agents. (docs.anthropic.com )

Related Posts

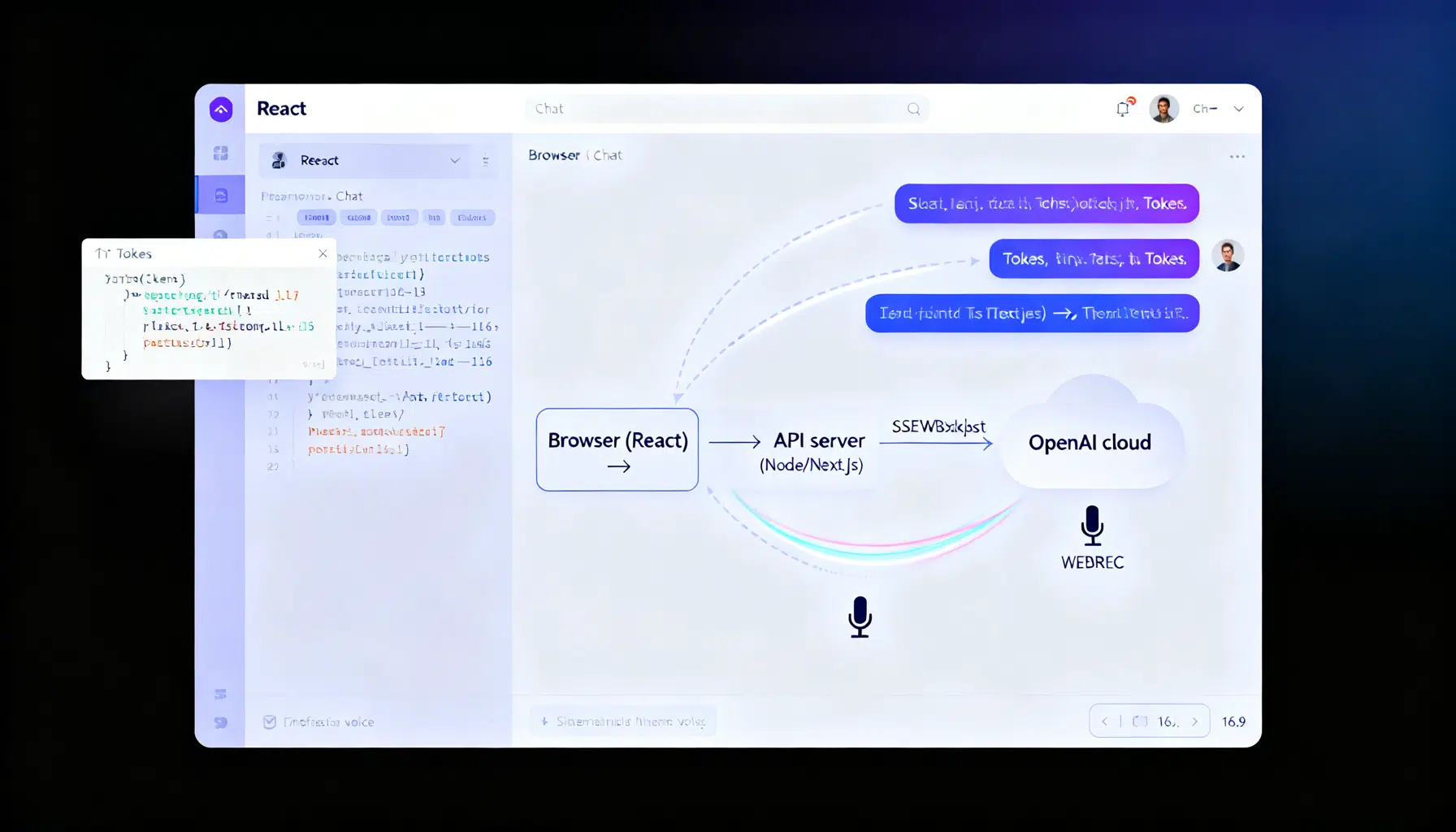

OpenAI API with React: A 2026 Guide to Chat, Tools, and Realtime Voice

A practical 2026 guide to building React apps on the OpenAI API: secure setup, typed streaming, tools/function calling, and live voice with Realtime.