Practical Techniques to Reduce AI Hallucinations

A practical, end-to-end guide to reducing AI hallucinations with data, training, retrieval, decoding, and verification techniques.


Overview

Large language models are powerful, but they sometimes produce confident, fluent answers that are wrong—so‑called “hallucinations.” This article maps the problem space and offers practical techniques to prevent, detect, and mitigate hallucinations across the entire lifecycle: data, training, retrieval, decoding, and operations.

What counts as a hallucination?

Hallucinations are outputs that are not supported by facts or by the model’s accessible context. Common forms include:

- Fabrications: Invented facts, events, or entities.

- Misattributed citations: Real sources cited for claims they do not contain.

- Faulty reasoning: Valid facts connected by incorrect logic.

- Temporal drift: Statements that were once true but are now outdated.

- Overconfident speculation: Answers delivered with certainty despite low evidence.

Two useful quality notions:

- Faithfulness: Does the answer stay grounded in the provided context/evidence?

- Truthfulness: Is the answer correct with respect to the real world, even beyond the provided context?

Why hallucinations happen

- Objective mismatch: Next‑token prediction rewards plausible continuations, not factuality.

- Data noise and gaps: Web-scale data contains errors and coverage holes.

- Distribution shift: User queries differ from the training distribution.

- Limited context and forgetting: Evidence may not fit the context window or be recalled reliably.

- Decoding randomness: Sampling can favor fluent but unsupported continuations.

- Miscalibration: Token probabilities often do not reflect the model’s true uncertainty, so a confident‑sounding answer is not a reliable signal of correctness.

Measuring hallucinations

You cannot reduce what you do not measure. Combine multiple evaluation methods:

- Groundedness checks: Compare answers against provided documents; flag claims not entailed by sources.

- Automatic verification: Use retrieval to find supporting evidence and run NLI/entailment to score support vs contradiction.

- Human evaluation: Expert review with rubrics for correctness, completeness, and confidence.

- Claim extraction: Parse outputs into atomic claims and attempt to verify each.

- Calibration metrics: Expected Calibration Error (ECE), selective risk with abstention, and coverage/precision trade‑offs.

- Task benchmarks: Closed‑book QA, open‑book QA, and domain datasets (e.g., finance, medical, legal) with strict citation requirements.
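To make the calibration metrics above concrete, here is a minimal sketch of Expected Calibration Error and of the coverage/risk trade-off under abstention. It assumes you already have a per-answer confidence in [0, 1] and a correctness label from your evaluation set. The function names, the equal-width binning, and the default of 10 bins are illustrative choices, not something the article prescribes.

```python
from typing import Sequence


def expected_calibration_error(
    confidences: Sequence[float], correct: Sequence[bool], n_bins: int = 10
) -> float:
    """ECE with equal-width bins: sum of (bin share) * |accuracy - mean confidence|."""
    if len(confidences) != len(correct) or not confidences:
        raise ValueError("need two non-empty sequences of equal length")
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bin
        bins[idx].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_conf = sum(c for c, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        ece += (len(bucket) / total) * abs(accuracy - avg_conf)
    return ece


def selective_risk(
    confidences: Sequence[float], correct: Sequence[bool], threshold: float
) -> tuple:
    """Return (coverage, risk) when the system answers only if confidence >= threshold."""
    answered = [ok for c, ok in zip(confidences, correct) if c >= threshold]
    coverage = len(answered) / len(confidences)
    risk = 1 - sum(answered) / len(answered) if answered else 0.0
    return coverage, risk


confs = [0.9, 0.9, 0.6, 0.6]
labels = [True, True, True, False]
print(expected_calibration_error(confs, labels))
print(selective_risk(confs, labels, threshold=0.8))
```

Sweeping `threshold` across a range of values traces the coverage/precision curve, which helps when picking an abstention point.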

Techniques that work in practice

The most reliable solutions are layered. Combine upstream (data/training) and downstream (retrieval/decoding/ops) controls.

1) Data curation and pretraining hygiene

- Deduplication and provenance: Reduce spurious correlations and repeated errors.

- Quality filtering: Heuristics, classifiers, and weak supervision to remove low‑credibility text.

- Balanced coverage: Include authoritative sources for underrepresented domains.

- Verified synthetic data: Use generation pipelines with retrieval and multi‑step verification to create high‑precision training examples.
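As an illustration of the deduplication step, the sketch below drops exact duplicates (after whitespace and case normalization) and near-duplicates (by Jaccard similarity over word trigrams). The helper names and the 0.8 threshold are assumptions for the example. The pairwise comparison is quadratic, so a real corpus would likely need an approximate method such as MinHash with locality-sensitive hashing.

```python
import hashlib
import re


def normalize(text: str) -> str:
    """Lowercase and collapse runs of whitespace."""
    return re.sub(r"\s+", " ", text.lower()).strip()


def shingles(text: str, k: int = 3) -> set:
    """Set of word k-grams; short texts become a single shingle."""
    words = normalize(text).split()
    if len(words) < k:
        return {tuple(words)}
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def deduplicate(docs: list, threshold: float = 0.8) -> list:
    """Keep the first copy of each document; drop exact and near duplicates."""
    kept, kept_shingles, seen_hashes = [], [], set()
    for doc in docs:
        digest = hashlib.sha256(normalize(doc).encode("utf-8")).hexdigest()
        if digest in seen_hashes:
            continue  # exact duplicate after normalization
        sh = shingles(doc)
        if any(jaccard(sh, other) >= threshold for other in kept_shingles):
            continue  # near duplicate of something already kept
        seen_hashes.add(digest)
        kept.append(doc)
        kept_shingles.append(sh)
    return kept


docs = [
    "The Eiffel Tower is in Paris.",
    "the  eiffel tower is in   paris.",
    "Mount Everest is in Nepal.",
]
print(deduplicate(docs))
```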

2) Instruction tuning for honesty and uncertainty

- Honesty‑focused instruction data: Use high‑quality datasets that reward stating uncertainty, asking clarifying questions, and refusing when evidence is missing.

- Preference optimization (RLHF/RLAIF): Include preferences that prioritize factual grounding over verbosity.

- Constitutional objectives: Encode rules like “cite sources,” “don’t speculate about unverifiable facts,” and “ask for context when unsure.”

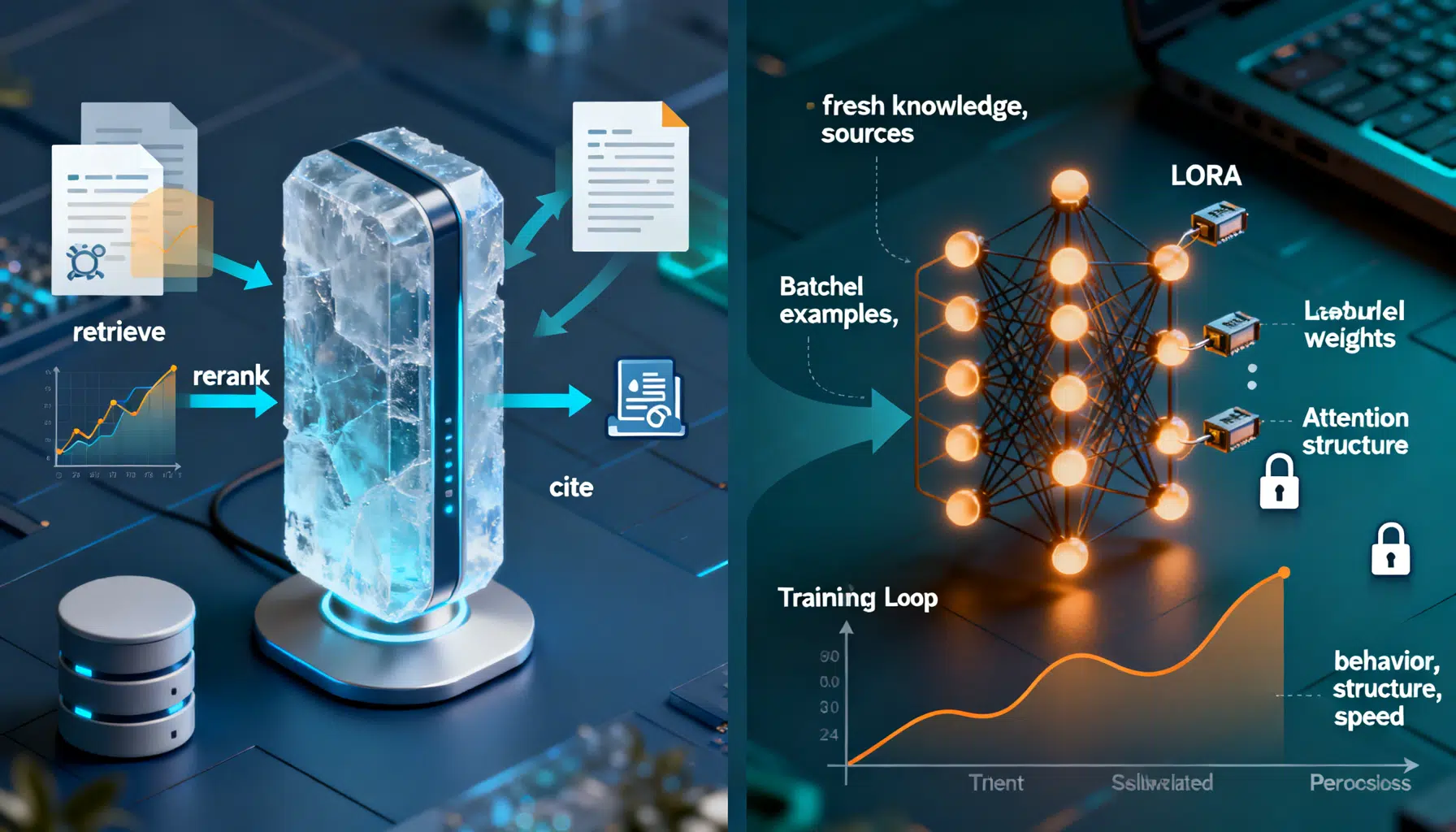

3) Retrieval‑Augmented Generation (RAG)

Ground the model in up‑to‑date, authoritative evidence.

- Index design: Use high‑quality embeddings, domain‑specific tokenization, and smart chunking (overlap, headings, tables as units).

- Query planning: Expand and rewrite the query, and issue multi‑hop queries when needed, to fetch supporting documents.

- Reranking: Use cross‑encoders to promote passages that directly answer the question.

- Context orchestration: Deduplicate, compress, and cite passages; include metadata (title, date, source).

- Source discipline: Require the model to only answer from retrieved sources or to abstain.
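The overlap idea from the index design bullet can be sketched as a sliding window. This version splits on whitespace rather than model tokens and ignores headings and tables. The function name and default sizes are assumptions for illustration only.

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 40) -> list:
    """Split text into word windows; consecutive chunks share `overlap` words."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("require chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
for chunk in chunk_words(sample, chunk_size=4, overlap=1):
    print(chunk)
```

The overlap keeps a sentence that straddles a boundary retrievable from at least one chunk, at the cost of a somewhat larger index.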

Example RAG flow (pseudocode):

    # All helpers (expand_queries, hybrid_search, ...) are placeholders for your own components.
    def answer_question(question):
        queries = expand_queries(question)
        candidates = hybrid_search(index, queries)         # sparse + dense
        ranked = cross_encoder_rerank(candidates)
        context = budgeted_summarize(ranked, limit=8_000)  # token-budget aware
        prompt = build_prompt(system_rules, context, question)
        raw_answer = llm.generate(prompt, temperature=0.2)
        answer = cite_and_ground(raw_answer, context)
        return verify(answer, context)                     # NLI + link reachability + snippet match

    result = answer_question(user_input())
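The flow above does not say how `hybrid_search` merges sparse and dense results. One common option is reciprocal rank fusion (RRF), sketched below. This is an assumption about how the merge could be done, not the method the pseudocode implies. The value `k=60` is a commonly used default, and the document IDs are made up.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list, k: int = 60) -> list:
    """Fuse several ranked lists of document IDs into one list.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. Ties are broken by document ID for determinism.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


sparse_hits = ["d1", "d2", "d3"]  # e.g. keyword search results (hypothetical IDs)
dense_hits = ["d3", "d1", "d4"]   # e.g. embedding search results (hypothetical IDs)
print(reciprocal_rank_fusion([sparse_hits, dense_hits]))
```

RRF uses only ranks, so it sidesteps the problem that sparse and dense scores live on different scales.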

4) Verification layers (self‑checking and external tools)

- Self‑critique: Ask the model to list claims that need support, then verify each against the provided context.

- Cross‑model agreement: Compare answers from different models; escalate disagreements.

- Tool use: Calculators, code interpreters, and APIs (e.g., knowledge bases) for factual or numeric tasks.

- Chain‑of‑verification: After drafting, run targeted retrieval for each claim and revise unsupported parts.

- Majority voting / self‑consistency: Sample multiple low‑temperature answers and pick the consensus that is best supported by evidence.
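A minimal sketch of the voting step from the last bullet follows. It assumes short answers that can be compared after light normalization. Longer answers would need semantic clustering, and the evidence check described above would still run on the winner. The function names and the strict-majority rule are illustrative.

```python
from collections import Counter


def normalize_answer(answer: str) -> str:
    """Lowercase, trim, drop trailing periods, and collapse whitespace."""
    return " ".join(answer.lower().strip().rstrip(".").split())


def majority_vote(answers: list, min_agreement: float = 0.5):
    """Return the consensus answer, or None when agreement is too low.

    None is a signal to abstain or escalate rather than guess.
    """
    if not answers:
        return None
    counts = Counter(normalize_answer(a) for a in answers)
    best, votes = counts.most_common(1)[0]
    return best if votes / len(answers) > min_agreement else None


print(majority_vote(["Paris", "paris.", "Lyon"]))
print(majority_vote(["Paris", "Lyon", "Nice"]))
```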

Self‑check prompt pattern:

You are a fact‑checker. Given the answer and the supporting excerpts, do the following:

1) Extract atomic claims.

2) For each claim, label it Supported, Contradicted, or Not Found, and include the citation IDs.

3) Propose precise revisions for any Not Found/Contradicted claims.

Return only JSON with fields: claims[], revisions[].
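The fact-checker's reply is only useful if it is parsed and checked before anything acts on it. The sketch below validates the JSON report requested by the prompt above. The prompt fixes only the top-level fields `claims[]` and `revisions[]`, so the per-claim keys used here (`text`, `label`, `citations`) are assumptions you would need to spell out in your own prompt.

```python
import json

ALLOWED_LABELS = {"Supported", "Contradicted", "Not Found"}


def parse_fact_check(raw: str) -> dict:
    """Parse the fact-checker's JSON reply and reject malformed reports."""
    report = json.loads(raw)
    if not isinstance(report, dict) or set(report) != {"claims", "revisions"}:
        raise ValueError("expected exactly the fields 'claims' and 'revisions'")
    for claim in report["claims"]:
        if not isinstance(claim, dict) or claim.get("label") not in ALLOWED_LABELS:
            raise ValueError(f"bad claim entry: {claim!r}")
        if claim["label"] == "Supported" and not claim.get("citations"):
            raise ValueError("a Supported claim must carry citation IDs")
    return report


def unsupported_claims(report: dict) -> list:
    """Texts of claims that need revision or targeted retrieval."""
    return [c.get("text", "") for c in report["claims"] if c["label"] != "Supported"]


raw = """{"claims": [
  {"text": "The tower opened in 1889.", "label": "Supported", "citations": ["src:1"]},
  {"text": "It is 500 m tall.", "label": "Not Found", "citations": []}
], "revisions": ["Remove the height claim."]}"""
print(unsupported_claims(parse_fact_check(raw)))
```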

5) Constrained decoding and structured outputs

Reduce degrees of freedom at generation time.

- Temperature and nucleus sampling (top‑p): Lower values concentrate sampling on high‑probability tokens, which reduces the chance of drifting into unlikely, unsupported continuations.

- Logit constraints: Penalize tokens not present in sources; bias toward citing.

- Grammar‑constrained decoding: Force valid JSON, SQL, or domain schemas.

- Schema‑aware answering: Define allowed fields, types, and enumerations.
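To show what temperature and top-p actually do, the sketch below applies both to a toy three-token distribution. The logits are made up, and real decoders apply the same steps over the full vocabulary at every position. The function names are illustrative.

```python
import math


def softmax_with_temperature(logits: list, temperature: float) -> list:
    """Convert logits to probabilities; lower temperature sharpens the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_filter(probs: list, top_p: float) -> dict:
    """Keep the smallest set of most likely tokens whose mass reaches top_p.

    Returns {token_index: renormalized probability} for the kept tokens.
    """
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    mass = sum(probs[i] for i in kept)
    return {i: probs[i] / mass for i in kept}


logits = [2.0, 1.0, 0.0]  # toy values for three candidate tokens
print(softmax_with_temperature(logits, 1.0))
print(softmax_with_temperature(logits, 0.5))
print(top_p_filter(softmax_with_temperature(logits, 1.0), top_p=0.9))
```

With these logits, halving the temperature moves more probability onto the top token, and `top_p=0.9` removes the least likely token from the candidate set entirely.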

JSON Schema example:

    {
      "type": "object",
      "properties": {
        "answer": {"type": "string"},
        "citations": {
          "type": "array",
          "items": {"type": "string", "pattern": "^src:[0-9]+$"}
        },
        "confidence": {"type": "number", "minimum": 0, "maximum": 1}
      },
      "required": ["answer", "citations"],
      "additionalProperties": false
    }
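A schema only helps if outputs are checked against it. The sketch below hand-codes the rules of the schema above using the standard library, so it runs without dependencies. In practice a JSON Schema validator library (for example the `jsonschema` package) would likely be the better choice. The function name is an assumption.

```python
import json
import re

ALLOWED_FIELDS = {"answer", "citations", "confidence"}


def validate_answer(raw: str) -> dict:
    """Parse model output and enforce the schema's rules; raise ValueError otherwise."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    extra = set(data) - ALLOWED_FIELDS
    if extra:
        raise ValueError(f"unexpected fields: {sorted(extra)}")  # additionalProperties: false
    for field in ("answer", "citations"):
        if field not in data:
            raise ValueError(f"missing required field: {field}")
    if not isinstance(data["answer"], str):
        raise ValueError("'answer' must be a string")
    citations = data["citations"]
    if not isinstance(citations, list) or not all(
        isinstance(c, str) and re.fullmatch(r"src:[0-9]+", c) for c in citations
    ):
        raise ValueError("'citations' must be a list of IDs like 'src:12'")
    if "confidence" in data:
        conf = data["confidence"]
        # bool is a subclass of int in Python, so exclude it explicitly
        if isinstance(conf, bool) or not isinstance(conf, (int, float)) or not 0 <= conf <= 1:
            raise ValueError("'confidence' must be a number between 0 and 1")
    return data


print(validate_answer('{"answer": "Paris", "citations": ["src:3"], "confidence": 0.9}'))
```

Note that the schema allows an empty `citations` array. If every answer must cite at least one source, adding `"minItems": 1` to the schema would express that.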

6) Prompt engineering that discourages guessing

- Specify boundaries: “If information is not in the provided sources, say ‘I don’t know’.”

- Demand citations: “After each paragraph, list source IDs used.”

- Ask for clarifying questions when the prompt is underspecified.

- Encourage calibrated language: “Express uncertainty numerically when possible.”

Guardrail system prompt snippet:

System: You must ground every factual claim in the provided context. If the context is insufficient, ask for more information or answer: "I don’t know based on the provided sources." Do not invent citations. Prefer brevity over speculation.
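One way to wire the guardrail text into a request is to label each retrieved passage with a source ID so the model has something concrete to cite. The sketch below is a hypothetical `build_prompt` helper. The layout, the `src:N` ID format, and the fallback line for empty retrieval are assumptions, not a required template.

```python
def build_prompt(system_rules: str, passages: dict, question: str) -> str:
    """Assemble a grounded prompt with ID-labelled passages.

    `passages` maps source IDs (e.g. "src:1") to passage text.
    """
    if passages:
        context = "\n".join(f"[{sid}] {text}" for sid, text in passages.items())
    else:
        context = "(no sources retrieved)"  # makes the insufficiency explicit to the model
    return "\n\n".join([
        f"System: {system_rules}",
        f"Context:\n{context}",
        f"Question: {question}",
        "Answer (list the source IDs used after each paragraph):",
    ])


rules = (
    "You must ground every factual claim in the provided context. "
    "If the context is insufficient, answer: "
    '"I don\'t know based on the provided sources."'
)
print(build_prompt(rules, {"src:1": "Paris is the capital of France."},
                   "What is the capital of France?"))
```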

7) Post‑generation validation

- Citation integrity: Verify that each URL is reachable and semantically contains the cited claim (snippet match).

- Contradiction scan: Use an NLI model to flag internal contradictions or conflicts with context.

- Toxicity/PII filters: Prevent harmful fabricated content from reaching users.

- Rewrite pass: If issues are found, route the draft back through retrieval and revision.
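The snippet-match part of citation integrity can start with a cheap lexical pre-filter before the NLI model runs. The sketch below flags claims whose cited source is unknown or shares too few words with the claim. It does not check URL reachability, and word overlap cannot detect negation or paraphrase, so treat it as a first pass only. The names and the 0.7 threshold are assumptions.

```python
import re


def tokens(text: str) -> set:
    """Lowercased alphanumeric word set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def snippet_supports(claim: str, passage: str, min_overlap: float = 0.7) -> bool:
    """True when at least min_overlap of the claim's words appear in the passage."""
    claim_tokens = tokens(claim)
    if not claim_tokens:
        return False
    return len(claim_tokens & tokens(passage)) / len(claim_tokens) >= min_overlap


def check_citations(claims: list, sources: dict) -> list:
    """claims: list of (claim_text, source_id). Returns (claim_text, problem) pairs."""
    problems = []
    for text, source_id in claims:
        passage = sources.get(source_id)
        if passage is None:
            problems.append((text, "unknown source"))
        elif not snippet_supports(text, passage):
            problems.append((text, "no matching snippet"))
    return problems


sources = {"src:1": "The Eiffel Tower was completed in 1889 and stands in Paris."}
claims = [
    ("The Eiffel Tower was completed in 1889", "src:1"),
    ("The Eiffel Tower is 500 meters tall", "src:1"),
    ("Everest is in Nepal", "src:9"),
]
print(check_citations(claims, sources))
```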

8) Human‑in‑the‑loop and escalation

- Confidence thresholds: Below a threshold, require human review.

- Triage: High‑risk domains (medical, legal, finance) default to human approval.

- Feedback loops: Capture corrections and feed them into preference data and retrievers.
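The threshold and triage rules above amount to a small routing function. In the sketch below, the domain list comes from the text, while the 0.75 threshold and the route names are placeholders to tune against your own review capacity and risk tolerance.

```python
HIGH_RISK_DOMAINS = {"medical", "legal", "finance"}


def route(confidence: float, domain: str, threshold: float = 0.75) -> str:
    """Decide whether an answer ships automatically or goes to a person."""
    if domain in HIGH_RISK_DOMAINS:
        return "human_approval"  # high-risk domains default to human sign-off
    if confidence < threshold:
        return "human_review"    # low confidence triggers review
    return "auto_send"


print(route(0.95, "medical"))
print(route(0.40, "travel"))
print(route(0.90, "travel"))
```

Logging each routing decision alongside the reviewer's eventual verdict gives you the correction data that the feedback-loop bullet calls for.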

Putting it together: a reference pattern

A robust production pattern often looks like this:

- Ingestion: Clean, deduplicate, and annotate sources with dates and trust scores.

- Retrieval: Hybrid search + reranking; log which passages supported which claims.

- Drafting: Low‑temperature generation constrained by a schema; encourage abstention.

- Verification: Self‑check + external tools; targeted retrieval for unsupported claims.

- Citation validation: Link reachability and semantic snippet matching.

- Calibration: Add a confidence score and an “uncertainty explanation.”

- Escalation: Route low‑confidence or high‑impact answers to humans.

- Learning: Store counterexamples; update indexes and preference models.

Practical trade‑offs

- Latency vs accuracy: Multi‑pass verification and majority voting increase cost and delay.

- Coverage vs precision: Aggressive abstention reduces hallucinations but may frustrate users.

- RAG quality dependence: Poor indexing or chunking yields confident but wrong answers.

- Over‑constraining risk: Excessive guardrails can prevent correct but novel reasoning.

Mitigation: Provide adaptive modes (fast vs thorough), cache verified answers, and invest in retrieval quality before model size.

Quick‑start checklist for product teams

- Require citations for factual tasks; block answers with broken or non‑matching citations.

- Lower temperature/top‑p for knowledge tasks; raise only for creative work.

- Add a self‑check pass that extracts and verifies claims.

- Use hybrid retrieval with reranking; store passage IDs in logs.

- Enforce JSON/grammar constraints for structured tasks.

- Calibrate with selective abstention; show confidence to users.

- Add “I don’t know” and “ask for clarifications” behaviors to tuning data.

- Set up human review for high‑risk domains and low‑confidence outputs.

Future directions

- Faithful decoding: Training and inference methods that directly optimize factual entailment.

- Verifiable reasoning: Proof‑carrying answers and tool‑grounded intermediate steps.

- Better calibration: Post‑hoc methods and training objectives that align confidence with correctness.

- Trust‑aware UX: Interfaces that expose sources, confidence, and easy reporting of errors.

Conclusion

No single method eliminates hallucinations. The best results come from layered defenses: curate data, tune for honesty, ground with high‑quality retrieval, constrain decoding, and verify before delivery. Pair these with calibration, human oversight, and continuous learning, and you can drive hallucination rates low enough for many real‑world uses while keeping latency and abstention at levels users will accept.

Related Posts

RAG vs. Fine‑Tuning: How to Choose the Right Approach

A practical guide to choosing RAG vs fine-tuning, with a clear decision framework, patterns, code sketches, and pitfalls.

Designing Agentic AI with ReAct: From Reasoning to Action

A practical guide to the ReAct (Reason + Act) pattern for agentic AI, with design choices, code, safety, and evaluation tips.

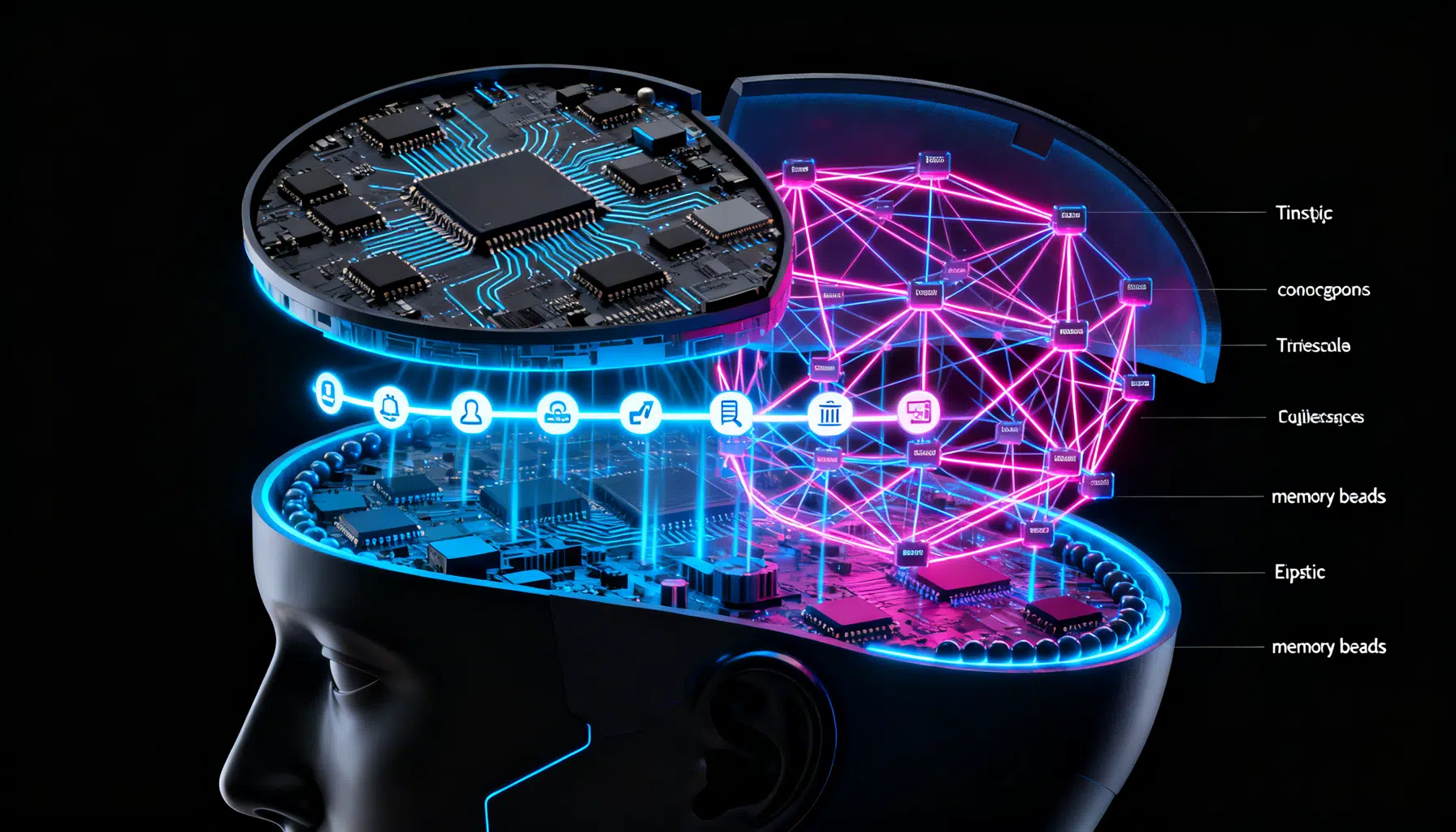

Designing AI Agent Memory: Episodic vs. Semantic Systems That Scale

Build robust AI agent memory with episodic and semantic layers: schemas, retrieval, consolidation, evaluation, and governance—practical patterns included.