DeepSeek API Integration Tutorial: From First Call to Production

Step-by-step DeepSeek API integration: base URL, models, cURL/Python/Node code, streaming, thinking mode, tool calls, errors, and production tips.

Overview

DeepSeek exposes powerful chat and reasoning models over a simple, OpenAI‑compatible HTTP API. You point an OpenAI client at DeepSeek’s base URL, pass your API key, choose a model, and send a messages array; the server replies with the familiar chat completion schema. This tutorial walks you from a first request to a production‑ready integration, including streaming, “thinking mode,” tool calls, error handling, and cost control. (api-docs.deepseek.com)

What you’ll build

- A “Hello, DeepSeek” request via cURL

- Minimal Python and Node.js clients using the OpenAI SDK with a custom base URL

- Streaming and JSON‑mode responses

- An example that toggles DeepSeek’s thinking mode and calls a tool (function)

- Production guardrails: retries, timeouts, and error decoding

Prerequisites

- A DeepSeek account and API key (from the platform dashboard)

- Python 3.9+ and/or Node 18+

- Basic familiarity with HTTP/JSON and environment variables

Get your API key and choose a model

- Create an API key in the DeepSeek developer platform dashboard. Keep it secret and store it in an environment variable (e.g., DEEPSEEK_API_KEY). (api-docs.deepseek.com)

- Pick a model:

  - deepseek-chat (V3.2, non‑thinking mode)

  - deepseek-reasoner (V3.2, thinking mode)

Both models support a 128K context and OpenAI‑style Chat Completions. (api-docs.deepseek.com)

Base URL, endpoint, and authentication

- Base URL: https://api.deepseek.com (https://api.deepseek.com/v1 also works for OpenAI compatibility; “v1” is not a model version). (api-docs.deepseek.com)

- Primary endpoint: POST /chat/completions. (api-docs.deepseek.com)

- Auth: HTTP Bearer token in the Authorization header: Authorization: Bearer YOUR_API_KEY. (api-docs.deepseek.com)
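To make the three pieces above concrete, here is a minimal sketch that assembles (but does not send) the request using only the Python standard library. The helper name build_chat_request and the "sk-placeholder" fallback are illustrative assumptions, not part of DeepSeek's API; the URL, path, and headers come from the list above.

```python
import json
import os
import urllib.request

BASE_URL = "https://api.deepseek.com"  # base URL from the list above


def build_chat_request(api_key: str, model: str, messages: list) -> urllib.request.Request:
    """Build (but do not send) a POST /chat/completions request."""
    body = json.dumps({"model": model, "messages": messages}).encode("utf-8")
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",  # HTTP Bearer auth
        },
        method="POST",
    )


req = build_chat_request(
    # "sk-placeholder" is a stand-in so the sketch runs without a real key.
    api_key=os.getenv("DEEPSEEK_API_KEY", "sk-placeholder"),
    model="deepseek-chat",
    messages=[{"role": "user", "content": "Hello!"}],
)
print(req.get_method(), req.full_url)
```

Passing req to urllib.request.urlopen(req, timeout=30) would send it; the SDK-based quickstarts do the same work with less code.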

Quickstart: cURL

```bash
export DEEPSEEK_API_KEY="sk-..."

curl https://api.deepseek.com/chat/completions \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer ${DEEPSEEK_API_KEY}" \
  -d '{
    "model": "deepseek-chat",
    "messages": [
      {"role": "system", "content": "You are a helpful assistant."},
      {"role": "user", "content": "Hello!"}
    ]
  }'
```

This returns a chat completion whose choices[0].message.content contains the assistant’s reply. (api-docs.deepseek.com)

Quickstart: Python (OpenAI SDK)

```python
# pip install openai
import os

from openai import OpenAI

# Point the OpenAI client at DeepSeek's base URL.
client = OpenAI(api_key=os.getenv("DEEPSEEK_API_KEY"), base_url="https://api.deepseek.com")

resp = client.chat.completions.create(
    model="deepseek-chat",
    messages=[
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "Hello!"},
    ],
)

print(resp.choices[0].message.content)
```

The OpenAI client works because DeepSeek mirrors the OpenAI chat‑completions schema. (api-docs.deepseek.com)

Quickstart: Node.js (OpenAI SDK)

```javascript
// npm i openai
// Run as an ES module (e.g., a .mjs file) so top-level await works.
import OpenAI from "openai";

const openai = new OpenAI({
  apiKey: process.env.DEEPSEEK_API_KEY,
  baseURL: "https://api.deepseek.com"
});

const completion = await openai.chat.completions.create({
  model: "deepseek-chat",
  messages: [{ role: "user", content: "Hello!" }]
});

console.log(completion.choices[0].message.content);
```

Model IDs and request shape match the DeepSeek API reference for Chat Completions. (api-docs.deepseek.com)

Streaming responses (SSE)

DeepSeek supports Server‑Sent Events (SSE) streaming when you set stream: true. The SDKs yield chunks until the final data: [DONE] marker. Under high load, the server may send SSE keep‑alive comments (": keep-alive"), which your parser should ignore; non‑streaming requests may instead receive empty lines while queued. If inference hasn’t started within about 10 minutes, the server closes the connection. (api-docs.deepseek.com)

Python example:

```python
import os

from openai import OpenAI

client = OpenAI(api_key=os.getenv("DEEPSEEK_API_KEY"), base_url="https://api.deepseek.com")

stream = client.chat.completions.create(
    model="deepseek-chat",
    messages=[{"role": "user", "content": "Stream a haiku about ocean tides."}],
    stream=True,
)

for chunk in stream:
    if not chunk.choices:
        continue  # skip chunks that carry no choices
    delta = chunk.choices[0].delta
    # Print each content fragment as soon as it arrives.
    if delta and getattr(delta, "content", None):
        print(delta.content, end="", flush=True)

print()
```
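If you consume the raw SSE stream yourself instead of going through an SDK, the keep‑alive and [DONE] handling described above might look like the sketch below. The function name iter_sse_payloads and the sample lines are illustrative assumptions; the chunk shape mirrors the delta.content field used in the SDK example.

```python
import json
from typing import Iterable, Iterator


def iter_sse_payloads(lines: Iterable[str]) -> Iterator[dict]:
    """Yield decoded JSON payloads from SSE lines, skipping comments and blanks."""
    for raw in lines:
        line = raw.strip()
        if not line or line.startswith(":"):
            # Blank separators and comment lines such as ": keep-alive".
            continue
        if not line.startswith("data:"):
            continue  # other SSE fields (event:, id:) are not needed here
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            return  # end-of-stream marker
        yield json.loads(data)


# Hand-written sample lines standing in for a real response body.
sample = [
    ": keep-alive",
    "",
    'data: {"choices": [{"delta": {"content": "Hel"}}]}',
    ": keep-alive",
    'data: {"choices": [{"delta": {"content": "lo"}}]}',
    "data: [DONE]",
    'data: {"choices": [{"delta": {"content": "ignored"}}]}',
]
text = "".join(
    chunk["choices"][0]["delta"].get("content", "")
    for chunk in iter_sse_payloads(sample)
)
print(text)  # Hello
```

Anything after the [DONE] marker is dropped, which is why the last sample line never reaches the output.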

Thinking mode (deepseek-reasoner)

DeepSeek provides a “thinking” (reasoning) mode that can invoke internal chains of thought and tool calls before finalizing the answer. You can toggle it by model selection and, for finer control, via the thinking object (e.g., {"thinking": {"type": "enabled"}}) in the request. Some advanced flows in thinking mode require echoing reasoning_content back to the API between steps; if you don’t return it correctly, you may get a 400 error. See the Thinking Mode guide for the data flow and compatibility notes. (api-docs.deepseek.com)

Example (enabling thinking explicitly):

```json
{
  "model": "deepseek-reasoner",
  "thinking": { "type": "enabled" },
  "messages": [
    {"role": "user", "content": "Outline a weekend plan with 3 outdoor activities in Denver."}
  ]
}
```
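Because whether reasoning_content must be echoed back depends on the flow, it can help to make that choice explicit when you append an assistant turn to the history. The sketch below is one way to do so; the helper name to_history_entry and the sample message are assumptions, and the Thinking Mode guide remains the authority on when to keep or drop the field.

```python
def to_history_entry(message: dict, keep_reasoning: bool) -> dict:
    """Convert an assistant message into a history entry for the next request.

    keep_reasoning is the caller's decision: follow the Thinking Mode guide
    for whether a given flow expects reasoning_content to be sent back.
    """
    entry = {"role": "assistant", "content": message.get("content") or ""}
    if keep_reasoning and message.get("reasoning_content"):
        entry["reasoning_content"] = message["reasoning_content"]
    if message.get("tool_calls"):
        # Preserve tool calls so later tool results can reference their ids.
        entry["tool_calls"] = message["tool_calls"]
    return entry


# Assumed shape of a thinking-mode assistant message, for illustration only.
assistant_message = {
    "role": "assistant",
    "content": "Hike, bike, and paddle.",
    "reasoning_content": "The user wants three outdoor activities...",
}

print(to_history_entry(assistant_message, keep_reasoning=False))
print(to_history_entry(assistant_message, keep_reasoning=True))
```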

Tool calls (function calling) and strict mode

You can provide a tools array of JSON‑Schema‑described functions. The model decides when to call a function (auto), or you can force a call (required). In strict mode (Beta), the server validates tool schemas and the model’s outputs must match the schema. To enable strict mode features, point your client to the Beta base URL (https://api.deepseek.com/beta) and set strict: true in your function definitions. (api-docs.deepseek.com)

Python sketch:

```python
import os

from openai import OpenAI

client = OpenAI(api_key=os.getenv("DEEPSEEK_API_KEY"), base_url="https://api.deepseek.com")

# One function described with JSON Schema.
tools = [
    {
        "type": "function",
        "function": {
            "name": "get_weather",
            "description": "Get the current weather for a city.",
            "parameters": {
                "type": "object",
                "properties": {"city": {"type": "string"}},
                "required": ["city"],
                "additionalProperties": False,
            },
        },
    }
]

resp = client.chat.completions.create(
    model="deepseek-chat",
    messages=[{"role": "user", "content": "Weather in Austin?"}],
    tools=tools,
    tool_choice="auto",  # let the model decide whether to call the tool
)

# If the model chose to call the tool, the request appears in message.tool_calls.
print(resp.choices[0].message)
```
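The request above only asks the model whether to call a tool; your code still has to run the function and send the result back. A minimal sketch of that dispatch step follows, assuming the OpenAI‑style tool_calls shape (id, function.name, function.arguments as a JSON string). The get_weather body, TOOL_REGISTRY, and run_tool_calls are hypothetical names used for illustration.

```python
import json


def get_weather(city: str) -> dict:
    """Hypothetical local implementation; a real one would call a weather service."""
    return {"city": city, "forecast": "sunny", "temp_c": 30}


TOOL_REGISTRY = {"get_weather": get_weather}


def run_tool_calls(assistant_message: dict) -> list:
    """Execute each requested tool and return the 'tool' role messages to append."""
    results = []
    for call in assistant_message.get("tool_calls") or []:
        name = call["function"]["name"]
        args = json.loads(call["function"]["arguments"] or "{}")
        handler = TOOL_REGISTRY.get(name)
        if handler is None:
            output = {"error": f"unknown tool: {name}"}
        else:
            output = handler(**args)
        results.append(
            {"role": "tool", "tool_call_id": call["id"], "content": json.dumps(output)}
        )
    return results


# Assumed shape of an assistant message that requests one tool call.
assistant_message = {
    "role": "assistant",
    "content": None,
    "tool_calls": [
        {
            "id": "call_0",
            "type": "function",
            "function": {"name": "get_weather", "arguments": '{"city": "Austin"}'},
        }
    ],
}
tool_messages = run_tool_calls(assistant_message)
print(tool_messages[0]["content"])
```

In a real loop you would append the assistant message and these tool messages to the conversation, then call the API again so the model can write its final answer.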

JSON‑mode output

To force machine‑parsable replies, set response_format to {"type": "json_object"} and also instruct the model in your prompt to output JSON. If finish_reason == "length", you may receive truncated JSON; increase max_tokens or retry. These behaviors are documented in the Chat Completions reference. (api-docs.deepseek.com)
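On the client side, it is worth checking finish_reason before parsing so that truncated JSON surfaces as a clear error rather than a confusing decode failure. The sketch below shows one way to do that; parse_json_reply and TruncatedJSONError are illustrative names, not part of any SDK.

```python
import json


class TruncatedJSONError(ValueError):
    """Raised when the reply was cut off before the JSON could complete."""


def parse_json_reply(content, finish_reason):
    """Parse a JSON-mode reply, refusing output that hit the token limit."""
    if finish_reason == "length":
        raise TruncatedJSONError("reply hit max_tokens; raise the limit or retry")
    if not content or not content.strip():
        raise ValueError("empty content in JSON-mode reply")
    return json.loads(content)


# Hand-written values standing in for choices[0].message.content / finish_reason.
parsed = parse_json_reply('{"city": "Austin", "days": 2}', "stop")
print(parsed["city"])

try:
    parse_json_reply('{"city": "Aus', "length")
except TruncatedJSONError as exc:
    print("truncated:", exc)
```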

Handling errors and retries

Common HTTP statuses include:

- 400 Invalid format: fix request body/schema

- 401 Authentication fails: check Bearer key

- 402 Insufficient balance: top up in dashboard

- 422 Invalid parameters: correct unsupported values

- 429 Rate limit reached: back off and retry with jitter

- 500/503 Server issues: exponential backoff and retry

See DeepSeek’s Error Codes page for full descriptions and remediation. (api-docs.deepseek.com)

Retry policy: retry on 429, 500, and 503 with exponential backoff and jitter; do not retry other 4xx responses, since resending the same request will fail the same way. DeepSeek also exposes a public status page you can check during incidents. (chat-deep.ai)
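The retry policy can be sketched in a few lines. This is an illustration under stated assumptions: ApiError is a hypothetical exception carrying the HTTP status, send is any zero‑argument callable that performs the request, and the delay parameters are arbitrary starting points. It uses "full jitter" (a random delay between zero and the exponential ceiling).

```python
import random
import time

RETRYABLE_STATUSES = {429, 500, 503}


class ApiError(Exception):
    """Hypothetical error type carrying the HTTP status of a failed call."""

    def __init__(self, status: int, message: str = ""):
        super().__init__(f"HTTP {status}: {message}")
        self.status = status


def call_with_retries(send, max_attempts=5, base_delay=0.5, max_delay=30.0, sleep=time.sleep):
    """Call send() and retry retryable failures with exponential backoff and full jitter."""
    for attempt in range(max_attempts):
        try:
            return send()
        except ApiError as exc:
            out_of_attempts = attempt == max_attempts - 1
            if exc.status not in RETRYABLE_STATUSES or out_of_attempts:
                raise  # non-retryable status, or no attempts left
            ceiling = min(max_delay, base_delay * (2 ** attempt))
            sleep(random.uniform(0, ceiling))


# Demo with a fake sender that fails twice with 429, then succeeds.
attempts = {"count": 0}
delays = []


def flaky_send():
    attempts["count"] += 1
    if attempts["count"] <= 2:
        raise ApiError(429, "rate limit reached")
    return "ok"


# Recording delays instead of sleeping keeps the demo instant.
result = call_with_retries(flaky_send, sleep=delays.append)
print(result, len(delays))
```

The sleep parameter is injected so the policy can be tested without waiting. When using the OpenAI SDK, you would translate its status errors into this shape or rely on the SDK's own retry settings.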

Performance and cost tuning

- Context length: up to 128K for V3.2 models.

- Default/maximum output tokens: e.g., deepseek-chat default 4K (max 8K), deepseek-reasoner default 32K (max 64K). Tune max_tokens to control latency and spend.

- Pricing (per 1M tokens, as listed): input (cache hit/miss) and output rates are published on the Models & Pricing page; check it before launch since prices can change. (api-docs.deepseek.com)

- Streaming: improves perceived latency for long replies. (api-docs.deepseek.com)

- JSON‑mode and tool calls: constrain outputs to reduce post‑processing.
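To reason about spend before launch, you can plug the published per‑1M‑token rates into a small estimator. The sketch below is an illustration only: the Rates values are made‑up placeholders, not DeepSeek's prices, and estimate_cost is a hypothetical helper. Substitute the numbers from the Models & Pricing page.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """Prices in USD per 1M tokens; fill these in from the pricing page."""

    input_cache_hit: float
    input_cache_miss: float
    output: float


def estimate_cost(rates: Rates, hit_tokens: int, miss_tokens: int, output_tokens: int) -> float:
    """Estimate the cost in USD for the given token counts."""
    total = (
        hit_tokens * rates.input_cache_hit
        + miss_tokens * rates.input_cache_miss
        + output_tokens * rates.output
    )
    return total / 1_000_000


# PLACEHOLDER rates for illustration only; these are not real prices.
placeholder = Rates(input_cache_hit=0.1, input_cache_miss=1.0, output=2.0)
cost = estimate_cost(placeholder, hit_tokens=200_000, miss_tokens=50_000, output_tokens=10_000)
print(f"{cost:.4f}")  # 0.0900
```

Splitting input tokens into cache hits and misses matters because the two are billed at different rates, so a stable prompt prefix can lower the input side of the bill.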

Security and data hygiene

- Store API keys in environment variables or a secrets manager; never commit them to source control.

- Avoid sending sensitive PII; minimize, redact, and encrypt logs.

- Use server‑side calls or a secure proxy; don’t expose keys in client‑side JS.

- Enforce timeouts: set sane per‑request and connect/read timeouts to avoid hanging connections.

These practices align with DeepSeek’s guidance and the API’s SSE behavior. (api-docs.deepseek.com)
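Two of the habits above are easy to encode: failing fast when the key is missing, and scrubbing keys from anything you log. The sketch below assumes keys look like the "sk-..." value in the cURL quickstart; that prefix pattern, along with the names load_api_key and redact, is an assumption for illustration.

```python
import os
import re

# Assumed key shape: "sk-" followed by at least four key-like characters.
_KEY_PATTERN = re.compile(r"sk-[A-Za-z0-9_\-]{4,}")


def load_api_key(env_var: str = "DEEPSEEK_API_KEY") -> str:
    """Read the API key from the environment and fail fast if it is missing."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"{env_var} is not set")
    return key


def redact(text: str) -> str:
    """Mask anything that looks like an API key before it reaches a log."""
    return _KEY_PATTERN.sub("sk-***", text)


# A fake key, used only to show the redaction.
print(redact("Authorization: Bearer sk-abc123XYZ"))  # Authorization: Bearer sk-***
```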

Troubleshooting checklist

- 401/403: Verify the Authorization header format and account status; regenerate the key if compromised. (api-docs.deepseek.com)

- 404: Check the exact path /chat/completions and base URL (api.deepseek.com). (api-docs.deepseek.com)

- 429: Slow your request rate and retry with backoff; consider smoothing bursts. (api-docs.deepseek.com)

- Long waits: Expect empty lines (non‑stream) or ": keep-alive" comments (stream) during congestion; the server may close the connection if inference hasn’t started within about 10 minutes. (api-docs.deepseek.com)

- Outages: Check the status page for current incidents. (status.deepseek.com)

End‑to‑end example (with options)

```python
import os

from openai import OpenAI

client = OpenAI(api_key=os.getenv("DEEPSEEK_API_KEY"), base_url="https://api.deepseek.com")

messages = [
    {"role": "system", "content": "You are a concise trip-planner that outputs JSON."},
    {"role": "user", "content": "2-day Austin itinerary with food + live music."},
]

resp = client.chat.completions.create(
    model="deepseek-reasoner",
    messages=messages,
    response_format={"type": "json_object"},  # JSON mode
    temperature=0.4,
    max_tokens=800,  # cap output length
    stream=False,
)

print(resp.choices[0].message.content)
```

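This uses deepseek-reasoner, requests JSON output, and limits max_tokens to cap latency and cost. Because a low max_tokens can end the reply with finish_reason == "length" and truncated JSON, raise the limit if that happens, and check the Thinking Mode guide for which sampling parameters (such as temperature) take effect with this model. Model and parameter semantics follow the Chat Completions reference. (api-docs.deepseek.com)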

Where to go next

- Quick Start (“Your First API Call”) for copy‑paste‑ready snippets

- Models & Pricing for current context limits and per‑token rates

- Thinking Mode and Tool Calls guides for agents and function calling flows

- API Reference for the complete schema and advanced options

- Status Page during incidents

Official links are maintained in the DeepSeek API Docs. (api-docs.deepseek.com)

Related Posts

gRPC Streaming API Tutorial: Server, Client, and Bidirectional Patterns with Go and Python

Hands-on gRPC streaming tutorial: server, client, and bidirectional streams with Go and Python, plus proto design, flow control, deadlines, security, and testing.

Pinecone Vector Database API Tutorial: Semantic & Hybrid Search with Python and Node (2026)

Hands-on Pinecone API tutorial: create serverless indexes, upsert vectors, filter metadata, and run semantic + hybrid search in Python & Node (2026).

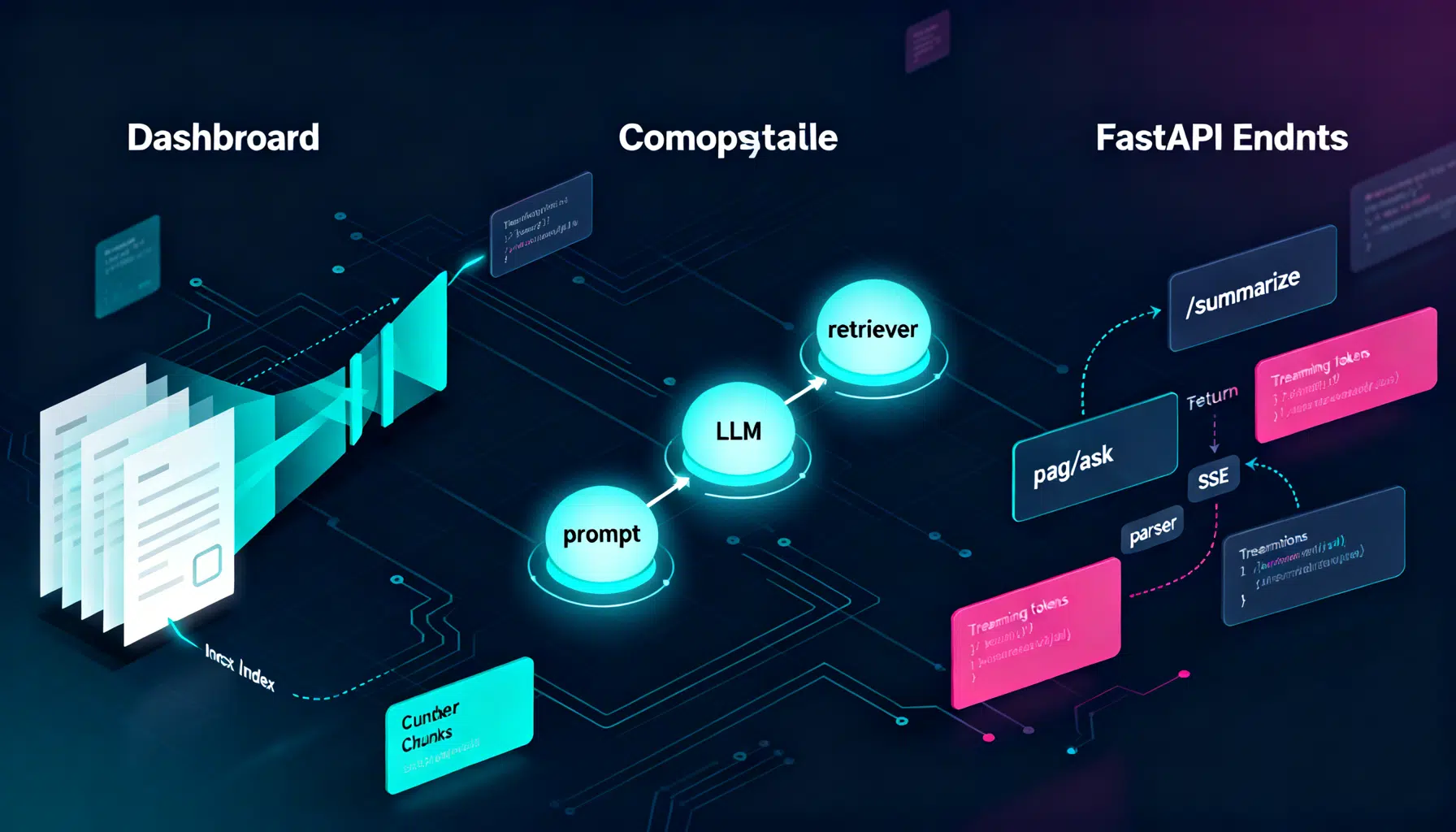

LangChain API Tutorial: From Hello World to Production RAG with FastAPI and LangServe

Build a production-ready LangChain API: LCEL chains, LangServe, FastAPI streaming, RAG, structured outputs, testing, and deployment tips.