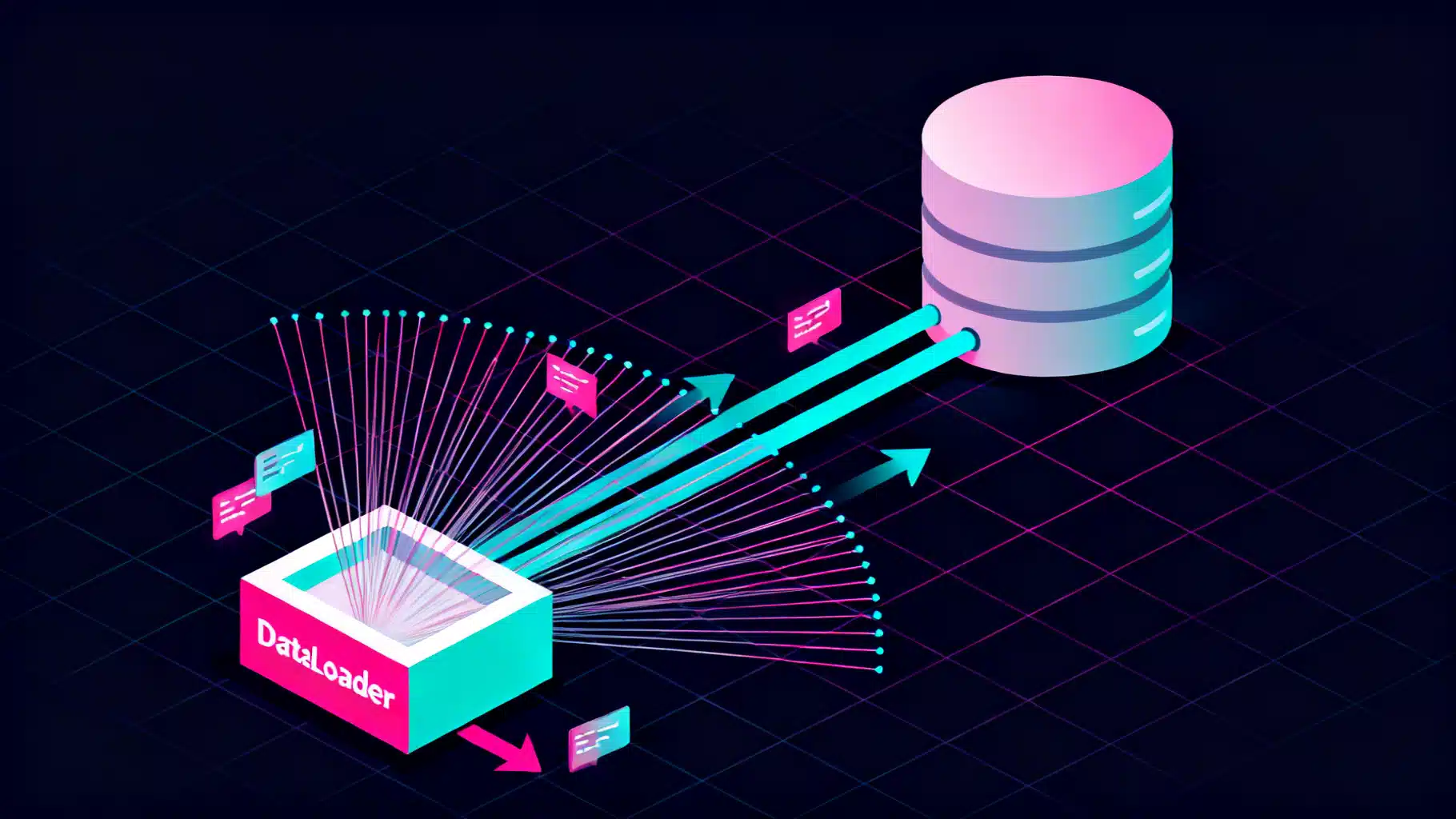

Stop the N+1 Spiral: The GraphQL DataLoader Batching Pattern, Explained

A practical guide to GraphQL DataLoader batching: fix N+1, design robust loaders, TypeScript examples, caching, observability, and production pitfalls.

Image used for representation purposes only.

Why the DataLoader Pattern Matters in GraphQL

GraphQL resolvers are small, composable units that fetch the data for each field. That composability is a superpower, but it also invites the N+1 query problem: a top-level list resolver triggers N additional calls—often one per item—for nested fields. At scale, this quietly turns simple queries into latency bombs and hammers your database or downstream services.

The DataLoader pattern eliminates most of that waste. It batches many key-based loads into a single fetch and caches results for the duration of a request, commonly reducing dozens or hundreds of round-trips to just a handful. In this article, we’ll dissect the pattern, build it step-by-step, cover edge cases, and share production-ready best practices.

The N+1 Problem, Illustrated

Consider a schema:

```graphql
type Query {
  users(ids: [ID!]!): [User!]!
}

type User {
  id: ID!
  name: String!
  posts: [Post!]!
}

type Post {
  id: ID!
  title: String!
  authorId: ID!
}
```

A naive implementation of User.posts fetches posts one user at a time:

```typescript
// Pseudocode (naive): one query per user
const resolvers = {
  Query: {
    users: (_parent, { ids }, { db }) => db.user.findMany({ where: { id: { in: ids } } }),
  },
  User: {
    posts: (user, _args, { db }) => db.post.findMany({ where: { authorId: user.id } }),
  },
};
```

For a query requesting 50 users and their posts, you’ll run 1 query for users plus 50 queries for posts—51 total. With multiple nested fields, the count grows quickly.
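To make the arithmetic concrete, here is a minimal sketch that replaces the database with a hypothetical in-memory fake. It counts every query the naive resolvers would issue. `createFakeDb`, `resolveNaively`, and the sample data are illustrative names, not part of any library, and the sketch assumes 50 users with one post each.

```typescript
// Hypothetical row types and an in-memory "database" that counts every query.
type User = { id: string; name: string };
type Post = { id: string; title: string; authorId: string };

function createFakeDb(users: User[], posts: Post[]) {
  const stats = { queryCount: 0 };
  return {
    stats,
    // One round-trip that fetches many users at once
    findUsersByIds: async (ids: readonly string[]): Promise<User[]> => {
      stats.queryCount++;
      return users.filter((u) => ids.includes(u.id));
    },
    // One round-trip per call: this is what the naive User.posts resolver hits
    findPostsByAuthorId: async (authorId: string): Promise<Post[]> => {
      stats.queryCount++;
      return posts.filter((p) => p.authorId === authorId);
    },
  };
}

type FakeDb = ReturnType<typeof createFakeDb>;

// Mimics resolving `users(ids) { posts { title } }` with the naive resolvers.
async function resolveNaively(db: FakeDb, ids: string[]) {
  const users = await db.findUsersByIds(ids); // Query.users: 1 query
  // User.posts runs once per user: N more queries
  return Promise.all(
    users.map(async (u) => ({ ...u, posts: await db.findPostsByAuthorId(u.id) })),
  );
}

// Sample data: 50 users with one post each.
const sampleUsers: User[] = Array.from({ length: 50 }, (_, i) => ({
  id: String(i + 1),
  name: `User ${i + 1}`,
}));
const samplePosts: Post[] = sampleUsers.map((u) => ({
  id: `p${u.id}`,
  title: `Post by ${u.name}`,
  authorId: u.id,
}));
```

Resolving all 50 IDs against `createFakeDb(sampleUsers, samplePosts)` leaves `stats.queryCount` at 51. One query serves the list, and each user adds one more.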

Enter DataLoader: Batching and Per-Request Caching

At its core, a DataLoader:

- Accepts many individual `load(key)` calls during a short window (often a single event loop tick).

- Coalesces those keys into one `batchFunction(keys)` call.

- Returns results aligned with the input keys (including nulls for missing records).

- Caches results for the current request to deduplicate loads.

This keeps your resolver code simple while turning many small calls into a few big ones.
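To show how these behaviors fit together, here is a deliberately tiny, hypothetical `TinyLoader` class. It is a teaching sketch, not the `dataloader` library's implementation. It queues keys, flushes them as one batch on the next microtask, and memoizes one promise per key.

```typescript
// A teaching sketch of the DataLoader idea; not the dataloader library's implementation.
type BatchFn<K, V> = (keys: readonly K[]) => Promise<(V | null)[]>;

interface Pending<K, V> {
  key: K;
  resolve: (value: V | null) => void;
  reject: (error: unknown) => void;
}

class TinyLoader<K, V> {
  private queue: Pending<K, V>[] = [];
  private readonly cache = new Map<K, Promise<V | null>>();
  private readonly batchFn: BatchFn<K, V>;

  constructor(batchFn: BatchFn<K, V>) {
    this.batchFn = batchFn;
  }

  load(key: K): Promise<V | null> {
    // Per-instance cache: a repeated key returns the same promise.
    const cached = this.cache.get(key);
    if (cached) return cached;

    const promise = new Promise<V | null>((resolve, reject) => {
      this.queue.push({ key, resolve, reject });
      // The first key queued in this tick schedules the flush; later keys join that batch.
      if (this.queue.length === 1) void Promise.resolve().then(() => this.flush());
    });
    this.cache.set(key, promise);
    return promise;
  }

  private async flush(): Promise<void> {
    const batch = this.queue;
    this.queue = [];
    try {
      const values = await this.batchFn(batch.map((p) => p.key));
      if (values.length !== batch.length) {
        throw new Error('Batch function must return one value per key');
      }
      // Results are matched to callers by position, so order must follow the keys.
      batch.forEach((p, i) => p.resolve(values[i] ?? null));
    } catch (error) {
      batch.forEach((p) => p.reject(error));
    }
  }
}
```

Suppose three `load` calls for `'1'`, `'2'`, and `'1'` happen in the same tick. They produce a single `batchFn(['1', '2'])` call, and the repeated key gets back the identical promise.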

Building a Minimal DataLoader in Node.js/TypeScript

We’ll use the popular dataloader library API for concreteness. The same ideas apply in other languages and frameworks.

```typescript
import DataLoader from 'dataloader';
import type { DB, UserRow, PostRow } from './types';

// Utility to map rows to keys while preserving order
function mapToKeys<K extends string | number, V extends { id: K }>(
  keys: readonly K[],
  rows: readonly V[],
  by: (v: V) => K = (v) => v.id
): (V | null)[] {
  const map = new Map<K, V>();
  for (const r of rows) map.set(by(r), r);
  return keys.map((k) => map.get(k) ?? null);
}

export function buildLoaders(db: DB) {
  // One-to-one: a single query for every requested user ID
  const userById = new DataLoader<string, UserRow | null>(async (ids) => {
    const rows = await db.user.findMany({ where: { id: { in: [...ids] } } });
    return mapToKeys(ids, rows);
  });

  // One-to-many: a single query for every requested author, grouped per author
  const postsByAuthorId = new DataLoader<string, PostRow[]>(async (authorIds) => {
    const rows = await db.post.findMany({ where: { authorId: { in: [...authorIds] } } });
    const groups = new Map<string, PostRow[]>();
    for (const id of authorIds) groups.set(id, []);
    for (const r of rows) groups.get(r.authorId)?.push(r);
    return authorIds.map((id) => groups.get(id) ?? []);
  });

  return { userById, postsByAuthorId } as const;
}
```

Attach loaders to the GraphQL context per request:

```typescript
import { createYoga } from 'graphql-yoga';
import { buildLoaders } from './loaders';
import { db } from './db';
// `schema` and `authenticate` are assumed to be defined elsewhere in your app.

const yoga = createYoga({
  schema,
  context: async ({ request }) => ({
    db,
    loaders: buildLoaders(db), // new loaders EACH request
    viewer: await authenticate(request),
  }),
});
```

Use the loaders in resolvers:

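```typescript
const resolvers = {
  Query: {
    // Unknown IDs resolve to null; with the non-null [User!]! type, filter them out or make items nullable
    users: (_p, { ids }, { loaders }) => Promise.all(ids.map((id: string) => loaders.userById.load(id))),
  },
  User: {
    posts: (user, _args, { loaders }) => loaders.postsByAuthorId.load(user.id),
  },
};
```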

The first time a resolver calls load(id), the request-scoped cache is empty, so the key is queued for batching. Additional loads of the same key within the request return the cached Promise—no extra database round-trip.

Verifying the Impact

Without loaders, a query for 50 users and their posts produces 51+ queries. With loaders:

- One batched query to fetch 50 users by ID.

- One batched query to fetch posts for those 50 authors.

Total: 2 large queries, not 51+. Latency typically drops and throughput increases, especially when the original pattern thrashed connections.

Core Design Choices

- Scope: Always create loaders per request. Global singletons leak data, break authorization, and balloon memory use.

- Keys: Use stable, serializable keys (IDs, tuples). If keys are objects, define a `cacheKeyFn` that canonicalizes shape and casing.

- Cache behavior: Keep the default in-memory per-request cache for dedupe. Consider an LRU or TTL only if your request lifetimes are long or memory is tight.

- Error handling: Batch functions must return an array the same length as keys. Put an `Error` at the position of each key that failed; don’t throw a single error for the whole batch unless the entire batch fails.

- Ordering: Always reorder results to match the incoming keys. Missing items should map to `null` (or an empty array for fan-out loaders like posts-by-author).
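The `dataloader` library accepts a `cacheKeyFn` option that maps each key to the value used for cache lookups. The sketch below shows one such function for object keys. It assumes a hypothetical `MemberKey` shape with an `orgId` and a case-insensitive `email`. The wiring into `DataLoader` is shown only as a comment, so the block stays dependency-free, and `Member` and `batchMembers` there are hypothetical.

```typescript
// Hypothetical composite key; the same member may arrive with different casing or spacing.
interface MemberKey {
  orgId: string;
  email: string;
}

// Canonicalize to a stable string so equivalent keys share one cache entry.
// Assumes orgId never contains ':'; use JSON.stringify([orgId, email]) if it might.
function memberCacheKey(key: MemberKey): string {
  return `${key.orgId}:${key.email.trim().toLowerCase()}`;
}

// Wiring it into the dataloader library (Member and batchMembers are hypothetical):
// const memberByKey = new DataLoader<MemberKey, Member | null, string>(batchMembers, {
//   cacheKeyFn: memberCacheKey,
// });
```

For example, `memberCacheKey({ orgId: 'o1', email: ' Ada@Example.com ' })` returns `'o1:ada@example.com'`. Any spelling of that address therefore hits the same cache entry.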

One-to-Many Loaders Done Right

Loading arrays (e.g., posts by author) requires careful grouping:

```typescript
const postsByAuthorId = new DataLoader<string, PostRow[]>(async (authorIds) => {
  const rows = await db.post.findMany({ where: { authorId: { in: [...authorIds] } } });
  const groups = new Map<string, PostRow[]>();
  for (const id of authorIds) groups.set(id, []);
  for (const row of rows) groups.get(row.authorId)?.push(row);
  return authorIds.map((id) => groups.get(id) ?? []);
});
```

This pattern:

- Batches all authors into a single query.

- Preserves the order of `authorIds` in the output.

- Returns `[]` for authors with no posts (not `null`), matching the non-null `[Post!]!` field in the schema.

Mutations, Priming, and Cache Invalidation

Write operations can make cached reads stale. Options:

- Clear on write: `loaders.userById.clear(userId)` after an update.

- Prime with fresh data: `loaders.userById.clear(userId).prime(userId, updatedUser)`. The `clear` comes first because `prime` does nothing if the key is already cached.

- Broad invalidation: If a write impacts many keys (e.g., changing an org name that appears across many fields), either avoid priming or rely on short-lived request caches and let the next request read from the source of truth.

Remember that DataLoader’s default cache is per request; many apps don’t need global invalidation at all. If you add a cross-request cache, you must design an invalidation strategy.

Batching Windows and Microtasks

Most DataLoader implementations schedule the batch function to run on the next microtask or event loop tick. Practical implications:

- Multiple `load(key)` calls in one tick coalesce into a single batch.

- If you `await` between loads in the same resolver, you may accidentally split batches. Prefer parallel patterns like `Promise.all`.

Example anti-pattern:

```typescript
// This may split batches and reduce efficiency
const users: (UserRow | null)[] = [];
for (const id of ids) {
  const u = await loaders.userById.load(id);
  users.push(u);
}
```

Better:

```typescript
const users = await Promise.all(ids.map((id) => loaders.userById.load(id)));
```
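The difference is easy to observe. The sketch below uses a hypothetical `countingLoader`, a few-line microtask batcher rather than the `dataloader` library. It counts how many batches each style produces for the same keys.

```typescript
// Minimal microtask batcher (hypothetical, not the dataloader library) that records each batch.
function countingLoader(batches: string[][]): (key: string) => Promise<string> {
  let pending: { key: string; resolve: (value: string) => void }[] = [];
  return (key) =>
    new Promise<string>((resolve) => {
      pending.push({ key, resolve });
      if (pending.length !== 1) return; // a flush is already scheduled for this tick
      void Promise.resolve().then(() => {
        const batch = pending;
        pending = [];
        batches.push(batch.map((p) => p.key));
        batch.forEach((p) => p.resolve(`user:${p.key}`));
      });
    });
}

// Anti-pattern: each await lets the batch flush before the next key is queued.
async function countSequentialBatches(ids: string[]): Promise<number> {
  const batches: string[][] = [];
  const load = countingLoader(batches);
  const users: string[] = [];
  for (const id of ids) users.push(await load(id));
  return batches.length;
}

// Preferred: every load is queued in the same tick, so they share one batch.
async function countParallelBatches(ids: string[]): Promise<number> {
  const batches: string[][] = [];
  const load = countingLoader(batches);
  await Promise.all(ids.map((id) => load(id)));
  return batches.length;
}
```

For five IDs, `countSequentialBatches` resolves to 5 and `countParallelBatches` resolves to 1.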

Integrating with Popular Servers

- Apollo Server: Build loaders in the `context` function. With federation, create loaders in each subgraph. `__resolveReference` runs once per entity representation, so it benefits from batching, while the gateway or router already groups entity fetches per subgraph.

- GraphQL Yoga / Helix: Same as shown; return loaders from the `context` factory.

- NestJS GraphQL: Provide a request-scoped provider that constructs and injects loaders per request.

- GraphQL Mesh / BFF layers: Use loaders to batch calls to REST or gRPC services, not just databases.

Beyond Databases: Batch Any Keyed Backend

DataLoader shines anywhere you have keyed lookups:

- REST: Batch `GET /users/:id` into `GET /users?ids=...` (if the service supports it) or parallelize with connection pooling.

- gRPC/Thrift: Use a batch endpoint or multiplex across a single connection.

- Caches: Deduplicate cache misses while a fill is in flight.
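The REST case can be sketched as a batch function. The sketch assumes a hypothetical service that answers `GET /users?ids=1,2,3` with a JSON array of users in arbitrary order. The HTTP call is injected as a hypothetical `fetchJson` function, so the batch logic can be tested without a network. In production, `fetchJson` might wrap `fetch`.

```typescript
interface RemoteUser {
  id: string;
  name: string;
}

// Injected HTTP helper (hypothetical); returns the parsed JSON body.
type FetchJson = (url: string) => Promise<unknown>;

// Build a DataLoader-compatible batch function for a hypothetical bulk endpoint.
function makeUsersBatch(baseUrl: string, fetchJson: FetchJson) {
  return async (ids: readonly string[]): Promise<(RemoteUser | null)[]> => {
    const url = `${baseUrl}/users?ids=${ids.map((id) => encodeURIComponent(id)).join(',')}`;
    // Assumes the response shape; validate it in real code.
    const rows = (await fetchJson(url)) as RemoteUser[];
    const byId = new Map(rows.map((u) => [u.id, u] as const));
    // Realign to the requested order; IDs the service did not return become null.
    return ids.map((id) => byId.get(id) ?? null);
  };
}
```

Passing the result to `new DataLoader(...)` gives resolvers the same `load(id)` interface whether the data comes from SQL or HTTP.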

Production Hardening and Pitfalls

- Avoid global singletons. Always request-scope loaders to prevent data leakage and unbounded growth.

- Don’t over-batch. Very large batches can cause huge SQL `IN` clauses or payloads. Cap batch sizes (e.g., 100–500) and let the library split them.

- Respect authorization. If access control varies per viewer, loaders must be built with the viewer context and include any security predicates in the batch query.

- Stable keys only. If keys are composite, define a stable string form like `"${orgId}:${userId}"`.

- Memory pressure. A single query that returns megabytes of data can bloat the per-request cache. Select only the columns your resolvers need.

- Partial failures. Return errors per key; a 404 for one key shouldn’t fail the entire batch.

- Transaction boundaries. If a resolver needs strong consistency inside a transaction, pass a transaction handle into the loader factory so that all batched queries use the same transaction.
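For partial failures, the `dataloader` library lets a batch function place an `Error` instance at a key's position. Only that key's `load` promise rejects, and the other keys resolve normally. The sketch below assumes a hypothetical bulk backend that reports a status code per item. The choice to map 404 to `null` rather than an error is one reasonable convention, not a rule.

```typescript
// Hypothetical per-item response from a bulk backend.
interface ItemResult<V> {
  id: string;
  status: number; // e.g. 200, 404, 500
  body?: V;
}

// Map bulk results onto keys: values for successes, null for missing, Error for failures.
function toPerKeyResults<V>(
  keys: readonly string[],
  results: readonly ItemResult<V>[],
): (V | null | Error)[] {
  const byId = new Map(results.map((r) => [r.id, r] as const));
  return keys.map((key) => {
    const r = byId.get(key);
    if (!r || r.status === 404) return null; // missing record: resolve to null
    if (r.status >= 400 || r.body === undefined) {
      return new Error(`Failed to load ${key}: status ${r.status}`); // only this key rejects
    }
    return r.body;
  });
}
```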

Observability and Tuning

Track these metrics per loader:

- Batch size distribution (p50, p95, max).

- Cache hit ratio within a request.

- Downstream latency and error rate.

- Number of keys per request.

Add lightweight logging around the batch function, or expose counters via your metrics system. In GraphQL tracing tools, annotate resolvers with loader metadata to correlate slow fields to specific loaders.
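One lightweight approach is to wrap the batch function before handing it to `new DataLoader(...)`. The sketch below records batch sizes, durations, and failures into a hypothetical in-memory `LoaderMetrics` object. It also computes a nearest-rank percentile. A real service would forward the same numbers to its metrics client instead.

```typescript
// Hypothetical in-memory sink; in production, forward these values to your metrics client.
interface LoaderMetrics {
  batchSizes: number[];
  durationsMs: number[];
  errors: number;
}

// Wrap a batch function so every call records its size, latency, and failures.
function instrumentBatch<K, V>(
  batchFn: (keys: readonly K[]) => Promise<V[]>,
  metrics: LoaderMetrics,
): (keys: readonly K[]) => Promise<V[]> {
  return async (keys) => {
    const start = Date.now();
    metrics.batchSizes.push(keys.length);
    try {
      return await batchFn(keys);
    } catch (error) {
      metrics.errors++;
      throw error;
    } finally {
      metrics.durationsMs.push(Date.now() - start);
    }
  };
}

// Nearest-rank percentile (p from 0 to 100) over the recorded samples.
function percentile(samples: readonly number[], p: number): number {
  if (samples.length === 0) return 0;
  const sorted = [...samples].sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[Math.max(0, rank - 1)] ?? 0;
}
```

Because the wrapper keeps the batch function's signature, the loader itself is unchanged. You can then chart values such as `percentile(metrics.batchSizes, 95)`.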

Tuning knobs:

- Batch size limit: Prevent pathological `IN (...)` explosions.

- Cache toggles: Disable caching on loaders whose data is highly volatile (`{ cache: false }`; batching still applies) or use short TTLs in cross-request caches.

- Sharding loaders: Separate hot and cold paths or multi-tenant datasets so batch queries stay tight and predictable.
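In the `dataloader` library, the `maxBatchSize` option caps batches by splitting oversized ones. A backend may also enforce its own hard limit on list parameters, and you can chunk inside the batch function as well. The sketch below assumes a hypothetical `queryChunk` function that fetches rows for up to `chunkSize` IDs in one call.

```typescript
// Split items into fixed-size chunks.
function chunk<T>(items: readonly T[], size: number): T[][] {
  if (size < 1) throw new RangeError('size must be >= 1');
  const out: T[][] = [];
  for (let i = 0; i < items.length; i += size) out.push(items.slice(i, i + size));
  return out;
}

// Run one bounded query per chunk (in parallel) and realign results to the original key order.
async function loadInChunks<V extends { id: string }>(
  ids: readonly string[],
  chunkSize: number,
  queryChunk: (ids: string[]) => Promise<V[]>, // hypothetical bounded query
): Promise<(V | null)[]> {
  const parts = await Promise.all(chunk(ids, chunkSize).map((part) => queryChunk(part)));
  const byId = new Map<string, V>();
  for (const rows of parts) for (const row of rows) byId.set(row.id, row);
  return ids.map((id) => byId.get(id) ?? null);
}
```

For example, 250 keys with a `chunkSize` of 100 become three queries of 100, 100, and 50 IDs. The caller still receives one result per key, in order.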

A Practical Checklist

- Create loaders per request: `context.loaders = buildLoaders(deps)`.

- Use loaders in nested resolvers; never call the database directly for key-based fetches.

- Keep batch functions deterministic and free of side effects beyond the fetch itself; always return an array matching input order.

- Use `Promise.all` to preserve batching opportunities.

- Implement `clear`/`prime` around mutations when needed.

- Cap batch sizes; monitor batch metrics and cache hit ratios.

- Test loaders in isolation with representative keys and missing-data cases.

Testing Loaders

Unit test each loader directly against a fake database:

```typescript
it('maps rows to keys and preserves order', async () => {
  // fakeDb: a test double for DB, assumed to live in your test helpers
  const db = fakeDb({ users: [{ id: '1' }, { id: '3' }] });
  const { userById } = buildLoaders(db);

  const result = await userById.loadMany(['1', '2', '3']);

  expect(result).toEqual([
    { id: '1' }, // hit
    null, // miss
    { id: '3' }, // hit
  ]);
});
```

For micro-batching behavior, use fake timers or flush microtasks to ensure keys coalesce as expected. Add integration tests that execute real GraphQL operations and assert the count of database queries.
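One way to assert query counts in integration tests is to wrap the database client in a counting `Proxy`. The sketch below is a hypothetical test helper. It counts calls to any method by its dotted path (for example `user.findMany`) and is meant for plain-object clients, not classes with private fields. You would build the GraphQL context with the wrapped client, run an operation, and assert a small fixed total. For the users-and-posts query above, that total would be two.

```typescript
// Wrap a plain-object client so every method call, at any depth, is counted by dotted path.
function withCallCounts<T extends object>(target: T, counts: Map<string, number>, path = ''): T {
  return new Proxy(target, {
    get(obj, prop, receiver) {
      const value: unknown = Reflect.get(obj, prop, receiver);
      const name = path ? `${path}.${String(prop)}` : String(prop);
      if (typeof value === 'function') {
        return (...args: unknown[]) => {
          counts.set(name, (counts.get(name) ?? 0) + 1);
          return value.apply(obj, args); // call the original with its own `this`
        };
      }
      if (typeof value === 'object' && value !== null) {
        return withCallCounts(value, counts, name); // descend into e.g. db.user
      }
      return value;
    },
  });
}

// Total calls across all counted methods.
function totalCalls(counts: Map<string, number>): number {
  let total = 0;
  counts.forEach((n) => {
    total += n;
  });
  return total;
}
```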

DataLoader vs. Caching Layers

DataLoader is not a substitute for Redis, CDNs, or database indices. Think of it as a request-scoped dedupe and batching mechanism. It pairs well with:

- Query-level caching (e.g., persisted queries + CDN).

- Record-level caches (e.g., Redis) to reduce source-of-truth reads.

- Database optimizations (indexes, projections, covering queries).

Use DataLoader to shape traffic efficiently; use other caches to avoid traffic entirely when safe.

Conclusion

The DataLoader batching pattern is the most effective first step to eliminate N+1 problems in GraphQL. By coalescing many small lookups into a few well-shaped batch queries—and caching results per request—you get predictable performance without sacrificing resolver composability. With careful scoping, solid metrics, and a few practical safeguards, loaders remain simple to maintain and pay dividends across the lifetime of your API.

Related Posts

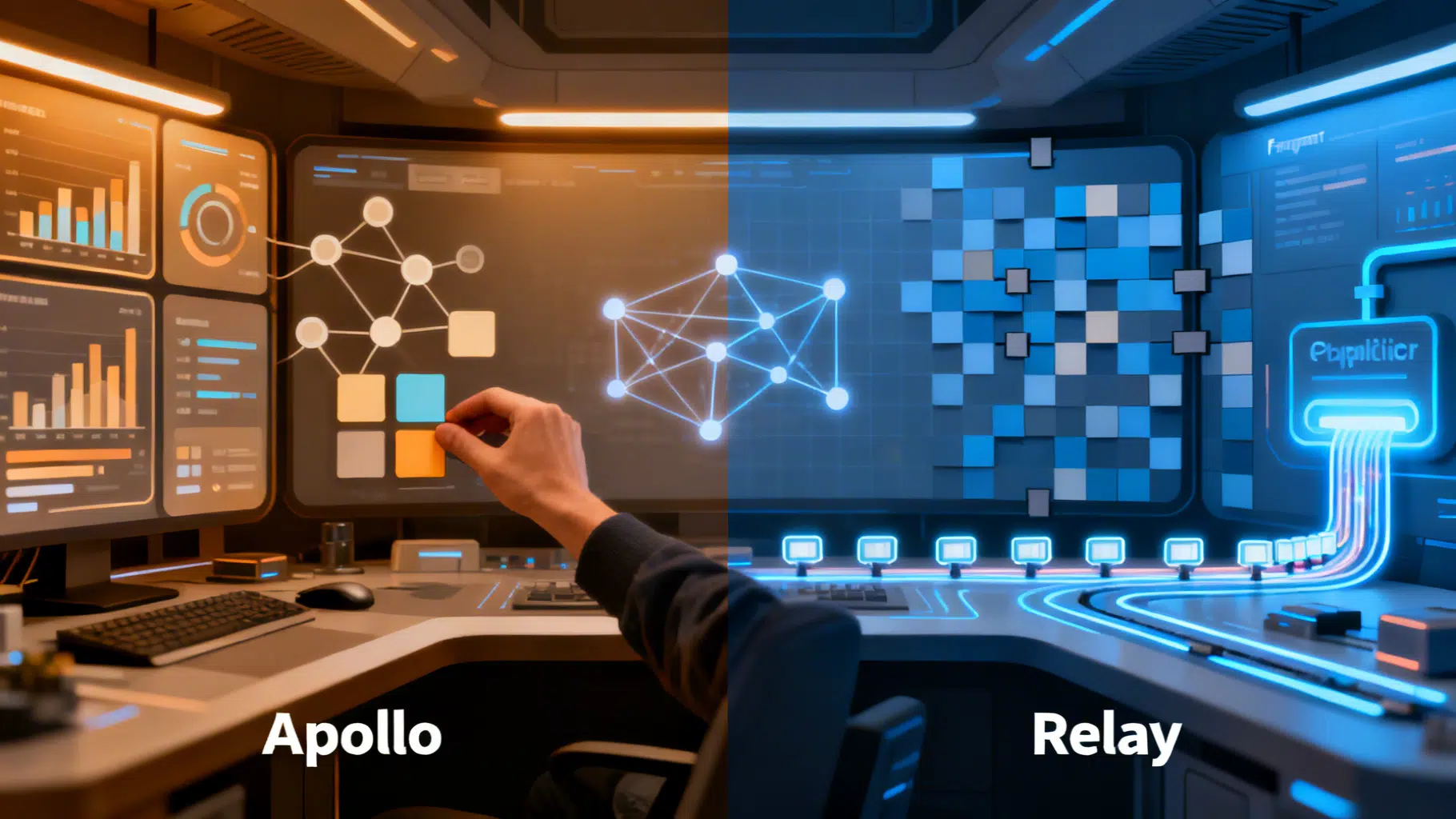

Apollo vs Relay: Choosing the Right GraphQL Client for React

Apollo vs Relay: strengths, trade‑offs, and when to choose each for React GraphQL apps, from caching and pagination to SSR, typing, and developer UX.

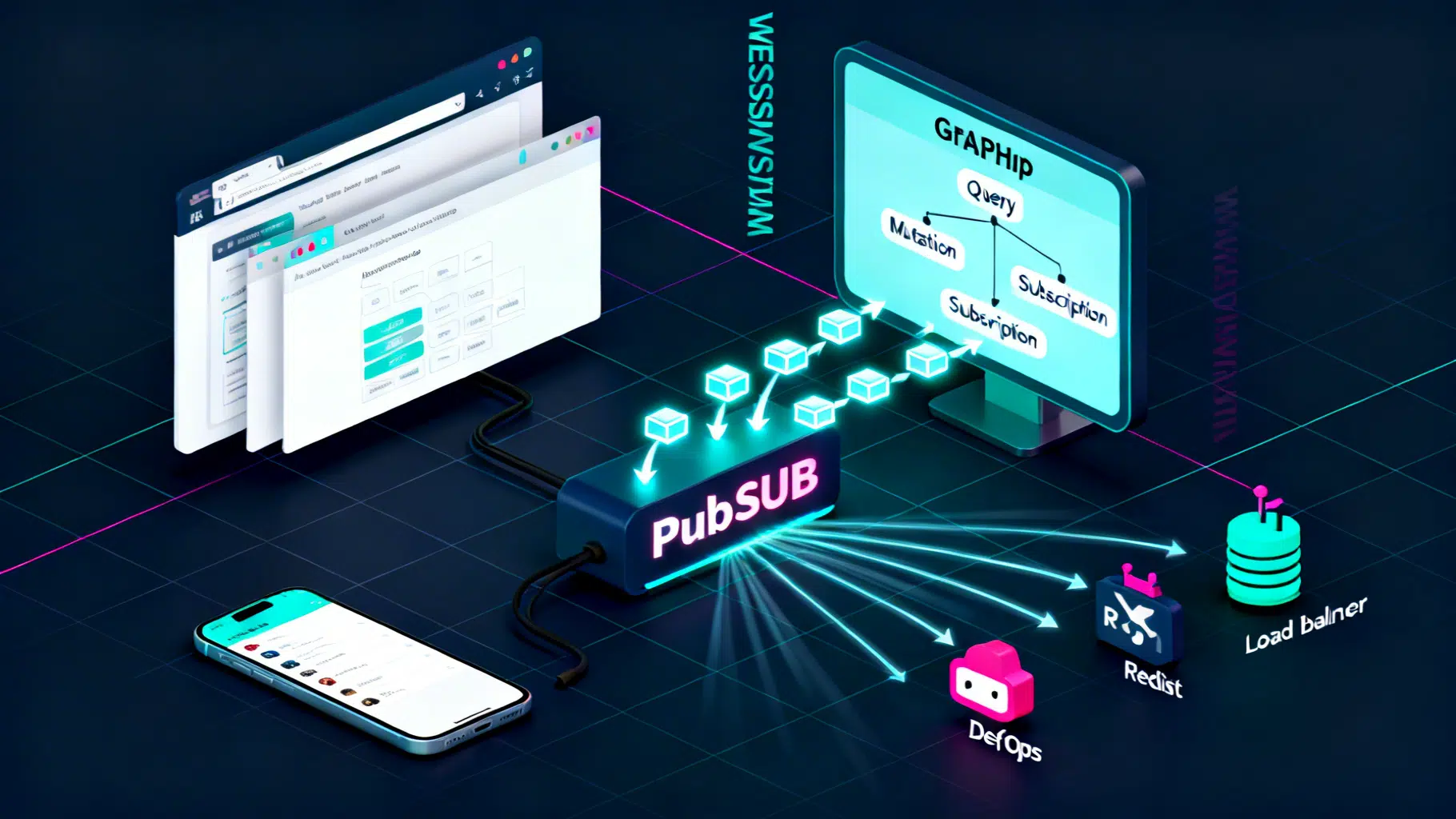

GraphQL Subscriptions: A Practical Real‑Time Data Tutorial

Build real-time apps with GraphQL Subscriptions: step-by-step server, client, auth, scaling, and testing guidance using Node, WebSockets, and graphql-ws.

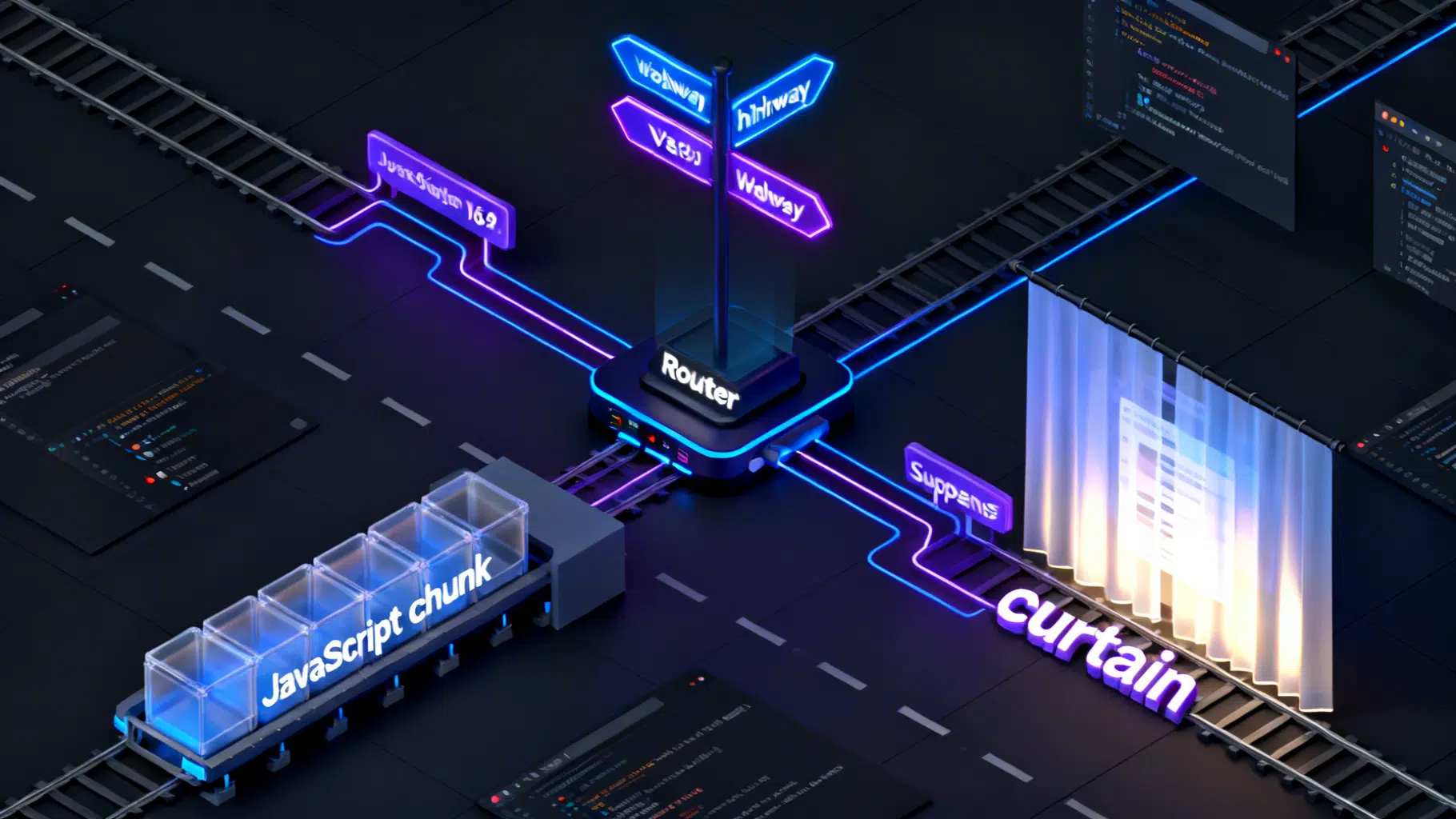

React Dynamic Imports and Lazy Routes: A Practical Guide

A practical guide to React dynamic imports and lazy routes with React Router, preloading strategies, SSR notes, and performance pitfalls.