Building a Production-Ready AI Image Recognition API for Mobile Apps

Design and ship a production-grade AI image recognition API for mobile apps: architecture, models, privacy, performance, and code snippets for iOS/Android.

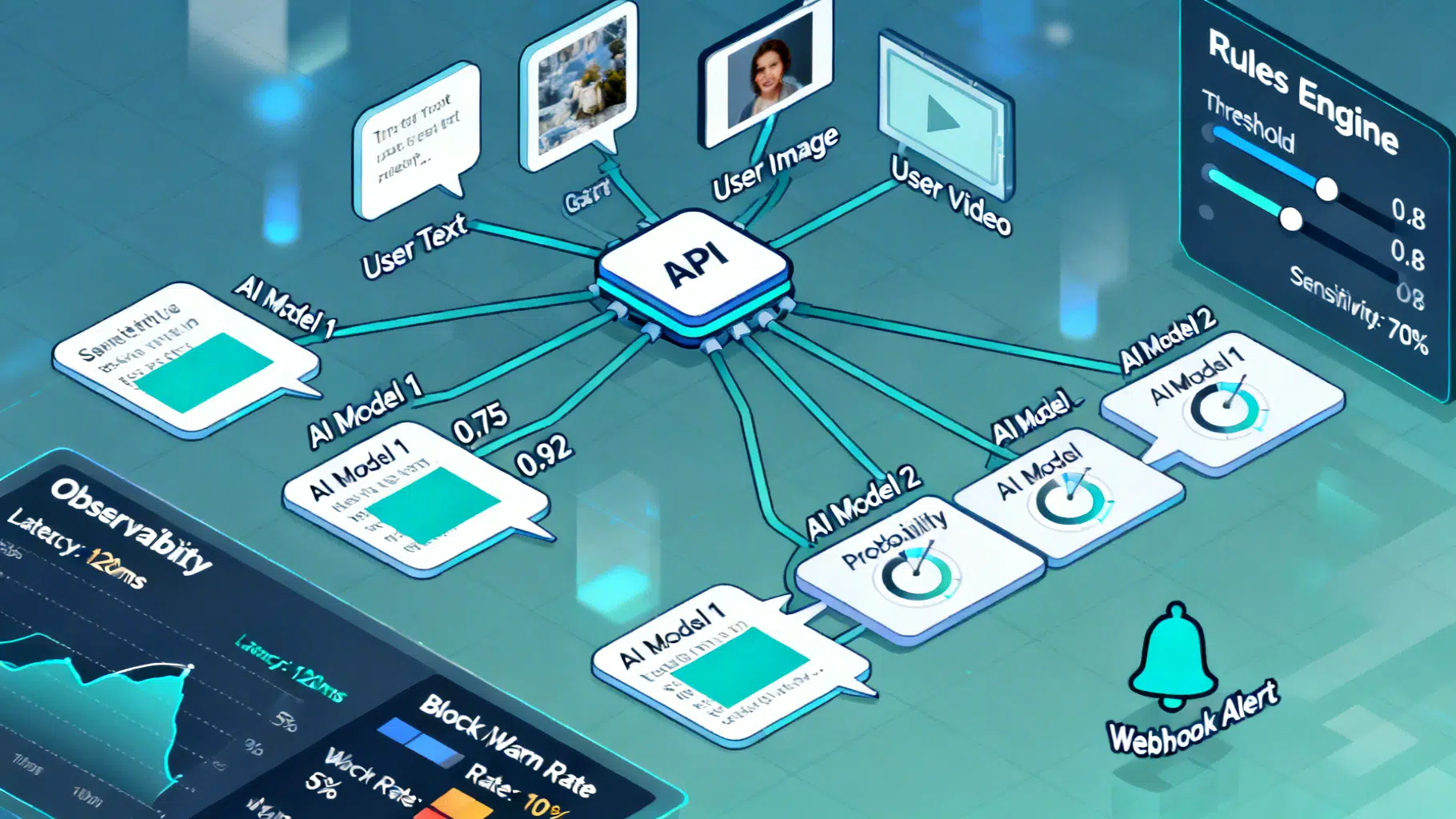

Image used for representation purposes only.

Overview

AI image recognition has moved from research labs into everyday mobile experiences: visual search, photo moderation, plant and product identification, accessibility, document capture, and augmented reality. This article walks through how to design and ship a production-grade image recognition API for a mobile app—covering architecture, model choices, privacy, performance, and maintainability—with practical snippets for iOS and Android.

Common use cases

- Visual search: identify products, artworks, or landmarks.

- Accessibility: describe scenes, read text, detect faces or obstacles.

- Document intelligence: classify receipts, extract fields, and validate IDs.

- Safety and moderation: detect prohibited content before upload.

- Inventory and quality control: recognize SKUs or defects in field apps.

Architecture choices: on-device, cloud, or hybrid

Selecting where inference runs is the single highest-leverage decision.

- On-device (edge)
  - Pros: lowest latency, offline, strong privacy, reduced cloud spend.
  - Cons: model size/compute limits, device fragmentation, slower update cadence.
  - Best for: real-time overlays, continuous camera preview, privacy-sensitive tasks.

- Cloud
  - Pros: access to large/accurate models, easier updates, centralized observability.
  - Cons: network latency, bandwidth costs, privacy considerations, rate limits.
  - Best for: heavy models (detection + OCR + understanding), moderation at scale, long-tail categories.

- Hybrid
  - Pros: local fast path with cloud fallback for hard cases or enrichment.
  - Cons: added complexity in routing and consistency.
  - Best for: broad consumer apps with variable connectivity and accuracy needs.

Decision guidelines:

- Latency target under 100 ms end-to-end? Prefer on-device.

- Strict privacy or regulated data? Prefer on-device or secure on-prem/cloud with data minimization.

- Need frequent label updates or long-tail recognition? Use cloud or a hybrid approach.
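The hybrid rule of thumb can be expressed as a small routing function. This is a minimal sketch, assuming the app already has a top-1 confidence from the local model, a connectivity flag, and a flag reflecting the user's "no-upload" or regulated-data setting; `RoutingContext`, `chooseRoute`, and the 0.6 threshold are illustrative, not part of any SDK.

```kotlin
// Where a single frame's result should come from.
enum class Route { ON_DEVICE, CLOUD }

// Hypothetical inputs: in a real app these come from the local model,
// a connectivity monitor, and the user's privacy settings.
data class RoutingContext(
    val onDeviceConfidence: Float, // top-1 score from the on-device model
    val isOnline: Boolean,
    val uploadAllowed: Boolean     // false in "no-upload" mode or for regulated data
)

// Keep the local result unless it is uncertain AND the cloud is both reachable and permitted.
fun chooseRoute(ctx: RoutingContext, threshold: Float = 0.6f): Route =
    if (ctx.onDeviceConfidence < threshold && ctx.isOnline && ctx.uploadAllowed) Route.CLOUD
    else Route.ON_DEVICE
```

Defaulting to `ON_DEVICE` means offline use and privacy opt-outs degrade gracefully to the local result rather than failing.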

Capability map: what your API should expose

Think in terms of capabilities rather than specific models. A clean API surface lets you swap implementations without app rewrites.

- Classification: image → labels + confidence.

- Detection: image → bounding boxes + classes.

- Segmentation: image → per-pixel mask(s).

- OCR: image → text + layout (blocks, lines, words).

- Visual understanding: image + optional text prompt → structured output or caption.

- Safety: image → per-category scores (e.g., violence, adult, medical) compared against configurable thresholds.

Design your versioned endpoints around these outputs. Keep responses compact, typed, and forward-compatible.
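One way to keep the surface capability-oriented is to map each capability to a versioned endpoint and model each result as a typed structure whose newer fields are optional. The sketch below is hypothetical: only `/v1/vision:classify` appears in this article's Android example; the other paths and all type names are assumptions that follow the same pattern.

```kotlin
// Hypothetical capability-to-endpoint map (v1 of the API).
enum class Capability(val path: String) {
    CLASSIFY("/v1/vision:classify"),
    DETECT("/v1/vision:detect"),
    SEGMENT("/v1/vision:segment"),
    OCR("/v1/vision:ocr"),
    UNDERSTAND("/v1/vision:understand"),
    SAFETY("/v1/vision:safety")
}

// Compact, typed result pieces.
data class LabelScore(val name: String, val score: Float)
data class BoxXywh(val x: Float, val y: Float, val w: Float, val h: Float)
data class DetectedObject(val label: LabelScore, val box: BoxXywh)

// Every capability-specific field has a default, so a client that predates a
// field (or a response that omits it) still deserializes cleanly.
data class VisionResult(
    val requestId: String,
    val model: String,
    val labels: List<LabelScore> = emptyList(),
    val objects: List<DetectedObject> = emptyList(),
    val text: String? = null // populated only by OCR
)
```

Swapping the model behind `DETECT` changes the `model` string in responses, not the shape the app parses.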

Data flow in the app

- Capture
  - Use CameraX (Android) or AVFoundation (iOS). Lock exposure and white balance where possible for stable inputs.

- Preprocess
  - Resize to the model input (e.g., 224×224 or 640×640), preserve aspect ratio with letterboxing, and normalize channels.

- Inference
  - Edge: run Core ML/NNAPI/TensorFlow Lite. Cloud: upload a compressed JPEG/WebP at a sensible quality (70–85), with EXIF stripped unless needed.

- Postprocess
  - Non-max suppression for detectors, thresholding, class remapping.

- UX
  - Render overlays, provide progressive results, and allow user corrections that feed training loops.
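Letterboxing and non-max suppression are mostly plain geometry. This sketch computes letterbox scale and padding and runs greedy per-class NMS; the type names are illustrative, and the 0.25 score / 0.45 IoU thresholds are typical starting values for YOLO-style detectors, not tuned recommendations.

```kotlin
import kotlin.math.max
import kotlin.math.min
import kotlin.math.roundToInt

// Fit the source image into the model input without distortion, then pad the rest.
data class Letterbox(val scale: Float, val newW: Int, val newH: Int, val padX: Int, val padY: Int)

fun letterbox(srcW: Int, srcH: Int, dstW: Int, dstH: Int): Letterbox {
    val scale = min(dstW.toFloat() / srcW, dstH.toFloat() / srcH)
    val newW = (srcW * scale).roundToInt()
    val newH = (srcH * scale).roundToInt()
    // Center the resized image; the padding is split evenly on both sides.
    return Letterbox(scale, newW, newH, (dstW - newW) / 2, (dstH - newH) / 2)
}

// Box in corner form (x1, y1, x2, y2) with a score and class index.
data class ScoredBox(val x1: Float, val y1: Float, val x2: Float, val y2: Float, val score: Float, val cls: Int)

fun iou(a: ScoredBox, b: ScoredBox): Float {
    val iw = max(0f, min(a.x2, b.x2) - max(a.x1, b.x1))
    val ih = max(0f, min(a.y2, b.y2) - max(a.y1, b.y1))
    val inter = iw * ih
    val union = (a.x2 - a.x1) * (a.y2 - a.y1) + (b.x2 - b.x1) * (b.y2 - b.y1) - inter
    return if (union <= 0f) 0f else inter / union
}

// Greedy per-class NMS: visit boxes by descending score and keep a box only if
// it does not overlap an already-kept box of the same class beyond iouThreshold.
fun nms(boxes: List<ScoredBox>, scoreThreshold: Float = 0.25f, iouThreshold: Float = 0.45f): List<ScoredBox> {
    val candidates = boxes.filter { it.score >= scoreThreshold }.sortedByDescending { it.score }
    val kept = mutableListOf<ScoredBox>()
    for (box in candidates) {
        if (kept.none { it.cls == box.cls && iou(it, box) > iouThreshold }) kept += box
    }
    return kept
}
```

To map a detection from model coordinates back to the original image, subtract `padX`/`padY` and divide by `scale`.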

Model and library options (portable picks)

- On-device
  - Classification: MobileNetV3, EfficientNet-Lite.
  - Detection: YOLOv8n/YOLOv10n or MobileDet variants converted to Core ML/TFLite.
  - Segmentation: lightweight DeepLabV3 (MobileNet backbone) or small YOLO segmentation variants (e.g., YOLOv8n-seg).
  - OCR: platform or first-party frameworks (Vision text recognition on iOS, ML Kit Text Recognition on Android) or Tesseract-derived libraries; prefer the platform options for speed and language support.

- Cloud
  - Use a vendor API that offers detection, OCR, and content safety with strong SLAs. Choose providers with stable versioning, regional hosting, and transparent usage limits.

Future-proof by exporting models to ONNX and maintaining conversion pipelines to Core ML and TFLite.

API design patterns

- Authentication: mobile app obtains a short-lived token (OAuth 2.0 or signed JWT) from your backend; never ship long-lived provider keys in the app.

- Idempotency: for upload endpoints, accept an Idempotency-Key header to avoid duplicate charges on retries.

- Error taxonomy: 4xx for client errors (413 payload too large, 415 unsupported format) plus 429 for rate limiting; 5xx (e.g., 503) for transient server issues. Include a Retry-After header on 429 and 503 responses.

- Rate limiting: per-user and per-device; return headers (X-RateLimit-Remaining, X-RateLimit-Reset).

- Streaming: for long-running jobs, emit partial results via Server-Sent Events or WebSockets; mobile UI can show incremental boxes/masks.

- Privacy by design: support on-device redaction (blur faces/plates) before cloud upload; allow a “no-upload” mode.
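On the server, an Idempotency-Key can be honored by storing the first response per key and replaying it for retries. This is a minimal in-memory sketch; `IdempotencyCache` is a hypothetical name, and a production version would persist keys with a TTL and reject a reused key whose request body hash differs.

```kotlin
import java.util.concurrent.ConcurrentHashMap

// The first request with a given key runs the handler (and is billed once);
// retries with the same key get the stored response back.
class IdempotencyCache<R : Any> {
    private val responses = ConcurrentHashMap<String, R>()

    fun handle(idempotencyKey: String, handler: () -> R): R =
        // computeIfAbsent runs handler at most once per key, even under concurrent retries.
        responses.computeIfAbsent(idempotencyKey) { handler() }
}
```

The client generates the key once per logical upload (for example a random UUID) and reuses it on every retry of that upload.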

iOS: capture + on-device + cloud fallback (Swift)

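```swift
import AVFoundation
import CoreML
import Vision

enum VisionSetupError: Error {
    case cameraUnavailable
    case cannotAddInput
    case cannotAddOutput
}

final class VisionController: NSObject {
    private let session = AVCaptureSession()
    private let output = AVCaptureVideoDataOutput()
    private let sessionQueue = DispatchQueue(label: "session")
    private let frameQueue = DispatchQueue(label: "frames")
    private var request: VNCoreMLRequest?
    private let fallbackThreshold: VNConfidence = 0.6 // below this, consider cloud fallback

    func start() throws {
        try configure()
        // startRunning() blocks until capture begins, so keep it off the main thread.
        sessionQueue.async { [weak self] in self?.session.startRunning() }
    }

    private func configure() throws {
        session.beginConfiguration()
        defer { session.commitConfiguration() }
        session.sessionPreset = .high

        guard let camera = AVCaptureDevice.default(.builtInWideAngleCamera, for: .video, position: .back) else {
            throw VisionSetupError.cameraUnavailable
        }
        let input = try AVCaptureDeviceInput(device: camera)
        guard session.canAddInput(input) else { throw VisionSetupError.cannotAddInput }
        session.addInput(input)

        output.setSampleBufferDelegate(self, queue: frameQueue)
        output.alwaysDiscardsLateVideoFrames = true // drop stale frames instead of queuing them
        guard session.canAddOutput(output) else { throw VisionSetupError.cannotAddOutput }
        session.addOutput(output)

        // Load the on-device model (compiled .mlmodelc bundled with the app).
        if let modelURL = Bundle.main.url(forResource: "MobileClassifier", withExtension: "mlmodelc") {
            let vnModel = try VNCoreMLModel(for: MLModel(contentsOf: modelURL))
            let request = VNCoreMLRequest(model: vnModel) { [weak self] request, _ in
                self?.handle(request.results as? [VNClassificationObservation] ?? [])
            }
            request.imageCropAndScaleOption = .centerCrop
            self.request = request
        }
    }

    private func handle(_ observations: [VNClassificationObservation]) {
        guard let top = observations.max(by: { $0.confidence < $1.confidence }),
              top.confidence < fallbackThreshold else { return }
        // Low confidence: sample this frame (throttled, e.g. 1 every N seconds),
        // compress it to JPEG, and send it to your cloud API with a short-lived token.
    }
}

extension VisionController: AVCaptureVideoDataOutputSampleBufferDelegate {
    func captureOutput(_ output: AVCaptureOutput, didOutput sampleBuffer: CMSampleBuffer, from connection: AVCaptureConnection) {
        guard let request = request else { return }
        // .right matches the back camera held in portrait; derive it from device orientation in production.
        let handler = VNImageRequestHandler(cmSampleBuffer: sampleBuffer, orientation: .right)
        do {
            try handler.perform([request])
        } catch {
            // Inference failed for this frame; log it and let the next frame try again.
        }
    }
}
```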

Notes:

- Keep model input small for real-time (e.g., 224×224). For cloud fallback, sample 1 frame every N seconds to control costs.

- Core ML schedules work across the CPU, GPU (via Metal), and Neural Engine; the default `MLModelConfiguration.computeUnits` is `.all`, so no extra setup is needed. Warm up the model on app launch to reduce first-inference latency.

Android: capture + TFLite + Retrofit (Kotlin)

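```kotlin
import androidx.camera.core.ImageAnalysis
import androidx.camera.core.ImageProxy
import org.tensorflow.lite.Interpreter
import retrofit2.Call
import retrofit2.Callback
import retrofit2.Response
import retrofit2.http.Body
import retrofit2.http.POST

// preprocess(), postprocess(), encodeJpeg(), NUM_CLASSES, ImageRequest and
// ImageResponse are app-specific helpers and types, not library APIs.
class CameraAnalyzer(
    private val interpreter: Interpreter,
    private val api: VisionApi,
    private val minUploadIntervalMs: Long = 5_000 // at most one cloud upload every 5 s
) : ImageAnalysis.Analyzer {

    // Assumes a single-threaded analyzer executor, so no locking is needed.
    private var lastUploadAtMs = 0L

    override fun analyze(imageProxy: ImageProxy) {
        try {
            val tensorInput = preprocess(imageProxy)            // resize/normalize into a ByteBuffer
            val outBuffer = Array(1) { FloatArray(NUM_CLASSES) } // shape [1, NUM_CLASSES]
            interpreter.run(tensorInput, outBuffer)
            val top = postprocess(outBuffer[0])

            // If confidence is low, upload a still frame, throttled to control cost.
            val now = System.currentTimeMillis()
            if (top.confidence < 0.6f && now - lastUploadAtMs >= minUploadIntervalMs) {
                lastUploadAtMs = now
                val jpeg = encodeJpeg(imageProxy) // encode before close() releases the buffer
                api.classify(ImageRequest(jpeg)).enqueue(object : Callback<ImageResponse> {
                    override fun onResponse(call: Call<ImageResponse>, response: Response<ImageResponse>) {
                        // Merge cloud labels into the UI; back off on 429/503.
                    }

                    override fun onFailure(call: Call<ImageResponse>, t: Throwable) {
                        // Network error: keep showing the on-device result.
                    }
                })
            }
        } finally {
            imageProxy.close() // required so CameraX can deliver the next frame
        }
    }
}

interface VisionApi {
    @POST("/v1/vision:classify")
    fun classify(@Body req: ImageRequest): Call<ImageResponse>
}
```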

Tips:

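- Enable the GPU delegate where available. NNAPI can still help on older devices, but it is deprecated as of Android 15.

- Keep CameraX's ImageAnalysis.STRATEGY_KEEP_ONLY_LATEST backpressure strategy (the default) so frames never queue up behind a slow analyzer.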

- Throttle cloud calls with a token bucket and exponential backoff on 429/503.
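A token bucket is a small amount of code: capacity bounds bursts, the refill rate bounds the sustained rate. This is a minimal sketch; `TokenBucket` is a hypothetical name, and the clock is injected so the logic can be tested without sleeping.

```kotlin
// Client-side throttle for cloud calls.
class TokenBucket(
    private val capacity: Double,
    private val refillPerSecond: Double,
    private val nowMs: () -> Long = System::currentTimeMillis
) {
    private var tokens = capacity
    private var lastRefillMs = nowMs()

    // Returns true (and spends one token) if a call may proceed now.
    @Synchronized
    fun tryAcquire(): Boolean {
        val now = nowMs()
        // Refill proportionally to elapsed time, never beyond capacity.
        tokens = minOf(capacity, tokens + (now - lastRefillMs) / 1000.0 * refillPerSecond)
        lastRefillMs = now
        if (tokens < 1.0) return false
        tokens -= 1.0
        return true
    }
}
```

For example, `TokenBucket(capacity = 2.0, refillPerSecond = 0.2)` allows a burst of two uploads, then roughly one every five seconds.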

Performance engineering

- Quantization: INT8 or FP16 models often deliver 2–4× speed-ups with minimal accuracy loss; measure per-device.

- Batching: for still image workflows, server-side batching reduces cost. For live preview, single-image low-latency wins.

- Warmup: run a dummy inference at startup to compile kernels.

- Caching: memoize results for near-identical frames (perceptual hash) and for identical uploads (content hash → durable cache key).

- Tiling: for high-res docs or panoramas, tile on-device; upload only tiles requiring cloud OCR.

- Payload hygiene: strip EXIF and GPS unless explicitly needed; compress to quality 75–80 for a good fidelity-size tradeoff.
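The two cache keys behave differently: a perceptual hash tolerates small pixel changes between frames, a content hash matches only identical bytes. This sketch uses a difference hash (dHash) as the perceptual hash, one of several options, and assumes the frame has already been downscaled to a 9×8 grayscale thumbnail; the 5-bit distance threshold is an assumption to tune.

```kotlin
import java.security.MessageDigest

// dHash: compare each pixel with its right-hand neighbour on a 9x8 thumbnail,
// producing 64 bits that change little between near-identical frames.
fun dHash(gray9x8: IntArray): Long {
    require(gray9x8.size == 72) { "expected a 9x8 grayscale thumbnail" }
    var hash = 0L
    for (row in 0 until 8) {
        for (col in 0 until 8) {
            val left = gray9x8[row * 9 + col]
            val right = gray9x8[row * 9 + col + 1]
            hash = (hash shl 1) or (if (left > right) 1L else 0L)
        }
    }
    return hash
}

// Frames whose hashes differ in only a few bits can reuse a cached result.
fun isNearDuplicate(a: Long, b: Long, maxBits: Int = 5): Boolean =
    (a xor b).countOneBits() <= maxBits

// Exact content hash for uploads: identical bytes give an identical cache key.
fun contentKey(bytes: ByteArray): String =
    MessageDigest.getInstance("SHA-256").digest(bytes).joinToString("") { "%02x".format(it) }
```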

UX patterns that increase trust

- Make the model’s uncertainty visible: show confidence bars or qualitative bands (e.g., “likely”, “possibly”).

- Offer corrective controls: “Not a daisy? Tap to relabel.” Feed corrections into retraining pipelines.

- Support progressive disclosure: show boxes first, then labels, then rich info.

- Offline-first: explain when results are on-device vs. from the cloud.

Privacy, security, and compliance

- Data minimization: default to on-device; when uploading, crop to regions of interest and redact faces/plates.

- Consent and transparency: explain what is sent, for what purpose, and retention periods; allow opt-out.

- Secure transport and storage: TLS 1.2+, HSTS; encrypt at rest; rotate keys; short-lived tokens.

- Access controls: separate queues/namespaces for production vs. testing; limit who can view payloads.

- Regulatory considerations: if serving minors, evaluate COPPA; for general users, honor deletion requests and retention limits consistent with CCPA/CPRA and GDPR.
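Data minimization usually starts by uploading only the region of interest plus a little context instead of the full frame. This is a minimal sketch, assuming pixel boxes in corner form (x1, y1, x2, y2) and a 10% context margin; `PixelRect` and `roiCrop` are hypothetical names, and redaction of faces or plates would run on the cropped pixels afterwards.

```kotlin
import kotlin.math.max
import kotlin.math.min
import kotlin.math.roundToInt

data class PixelRect(val x1: Int, val y1: Int, val x2: Int, val y2: Int) {
    val area: Int get() = (x2 - x1) * (y2 - y1)
}

// Expand the ROI by a margin on each side, then clamp it to the image bounds.
fun roiCrop(roi: PixelRect, imageW: Int, imageH: Int, marginFraction: Double = 0.1): PixelRect {
    val mx = ((roi.x2 - roi.x1) * marginFraction).roundToInt()
    val my = ((roi.y2 - roi.y1) * marginFraction).roundToInt()
    return PixelRect(
        max(0, roi.x1 - mx), max(0, roi.y1 - my),
        min(imageW, roi.x2 + mx), min(imageH, roi.y2 + my)
    )
}
```

Cropping a 200×100 label out of a 4000×3000 photo uploads about 0.2% of the pixels, which reduces both exposure and transfer size.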

Evaluation and monitoring

Track both ML quality and system health.

- Quality metrics
  - Classification: top-1/top-5 accuracy, F1, expected calibration error (ECE).
  - Detection: mAP@[.50:.95], latency-aware accuracy (accuracy at <100 ms).
  - OCR: character error rate (CER), word error rate (WER), layout F1.

- System metrics
  - P50/P95 latency by device class and network type.
  - Upload rate per DAU, cloud fallback ratio, error codes, retry rates.
  - Drift: distribution shift between production images and training data (color histograms, embeddings).

- Human feedback loop
  - In-app “Was this helpful?” tied to sample images.
  - Active learning: prioritize uncertain or novel samples for labeling.
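Two of these metrics are easy to get subtly wrong, so here is a sketch of each. ECE bins predictions by confidence and averages the gap between mean confidence and accuracy per bin, weighted by bin size (10 equal-width bins is a common choice, assumed here). CER is the Levenshtein edit distance divided by the reference length; WER is the same computation over word tokens.

```kotlin
import kotlin.math.abs

// Expected calibration error with equal-width bins (lo, hi]; bin 0 also includes 0.0.
fun expectedCalibrationError(confidences: DoubleArray, correct: BooleanArray, bins: Int = 10): Double {
    require(confidences.size == correct.size && confidences.isNotEmpty())
    var ece = 0.0
    for (b in 0 until bins) {
        val lo = b.toDouble() / bins
        val hi = (b + 1).toDouble() / bins
        val idx = confidences.indices.filter { i ->
            val c = confidences[i]
            (c > lo || (b == 0 && c >= 0.0)) && c <= hi
        }
        if (idx.isEmpty()) continue
        val avgConf = idx.sumOf { confidences[it] } / idx.size
        val accuracy = idx.count { correct[it] }.toDouble() / idx.size
        ece += idx.size.toDouble() / confidences.size * abs(avgConf - accuracy)
    }
    return ece
}

// Character error rate: edit distance (insertions, deletions, substitutions) / reference length.
fun characterErrorRate(reference: String, hypothesis: String): Double {
    require(reference.isNotEmpty())
    var prev = IntArray(hypothesis.length + 1) { it }
    for (i in 1..reference.length) {
        val cur = IntArray(hypothesis.length + 1)
        cur[0] = i
        for (j in 1..hypothesis.length) {
            val cost = if (reference[i - 1] == hypothesis[j - 1]) 0 else 1
            cur[j] = minOf(prev[j] + 1, cur[j - 1] + 1, prev[j - 1] + cost)
        }
        prev = cur
    }
    return prev[hypothesis.length].toDouble() / reference.length
}
```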

Shipping models safely

- Versioning: model_id, model_semver, and dataset_hash embedded in metadata.

- Rollouts: staged by device class/OS, with remote config and instant rollback.

- A/B tests: compare model A vs. B on real traffic; gate on latency and quality thresholds.

- Reproducibility: containerize training/inference; pin library versions; record seeds.
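Staged rollouts need a stable assignment of devices to buckets so that raising the percentage only adds devices and setting it to zero is an instant rollback. A minimal sketch, assuming a hashed (model id, device id) pair and a percentage expressed in basis points from remote config; the function names are hypothetical, and staging by device class or OS means keeping a separate percentage per class.

```kotlin
import java.security.MessageDigest

// Deterministically map (modelId, deviceId) to a bucket in 0..9999.
fun rolloutBucket(modelId: String, deviceId: String): Int {
    val digest = MessageDigest.getInstance("SHA-256").digest("$modelId:$deviceId".toByteArray())
    // First 4 bytes as an unsigned 32-bit value.
    val v = ((digest[0].toLong() and 0xffL) shl 24) or
        ((digest[1].toLong() and 0xffL) shl 16) or
        ((digest[2].toLong() and 0xffL) shl 8) or
        (digest[3].toLong() and 0xffL)
    return (v % 10_000).toInt()
}

// rolloutBasisPoints: 0 = nobody, 10_000 = everyone (e.g. 500 = 5%).
fun isInRollout(modelId: String, deviceId: String, rolloutBasisPoints: Int): Boolean =
    rolloutBucket(modelId, deviceId) < rolloutBasisPoints
```

Including the model id in the hash gives each rollout an independent sample, so the same devices are not always first.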

Costing without surprises

- Build a simple cost model:
  - Cost_per_user ≈ (Uploads_per_user × Avg_image_size_MB × Transfer_price_per_MB) + (Cloud_inferences_per_user × Inference_price).
  - Many clouds do not bill inbound data transfer, so the first term is often small; keep it if your gateway, CDN, or provider charges for it.

- Reduce cloud calls with:
  - Confidence thresholds + on-device gating.
  - Delta uploads (ROI crops instead of full images).
  - Server-side caching keyed by content hash.
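The cost model translates directly into code, which makes it easy to see how on-device gating scales both terms. In this sketch all prices and volumes are inputs you supply; the numbers in the usage note are illustrative only, not real provider prices, and `withFallbackRatio` is a hypothetical helper.

```kotlin
data class CostInputs(
    val uploadsPerUser: Double,
    val avgImageSizeMb: Double,
    val transferPricePerMb: Double, // the per-MB data-transfer price in the first term
    val cloudInferencesPerUser: Double,
    val inferencePrice: Double
)

fun costPerUser(c: CostInputs): Double =
    c.uploadsPerUser * c.avgImageSizeMb * c.transferPricePerMb +
        c.cloudInferencesPerUser * c.inferencePrice

// With on-device gating, only the fraction of attempts that fall back is uploaded and billed.
fun withFallbackRatio(c: CostInputs, attempts: Double, fallbackRatio: Double): CostInputs =
    c.copy(uploadsPerUser = attempts * fallbackRatio, cloudInferencesPerUser = attempts * fallbackRatio)
```

With illustrative inputs of 100 uploads of 0.25 MB at 0.0001 per MB and 0.001 per inference, the cost is 0.1025 per user; a 20% fallback ratio brings it to 0.0205.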

Failure modes and mitigations

- Poor lighting/motion blur → enable video stabilization where supported; cap exposure duration to limit blur; denoise before inference.

- Domain shift (new product packaging) → hybrid fallback, active learning, rapid model hotfix path.

- Rate limiting/quotas → exponential backoff with jitter; display graceful UI messages; prefetch offline packs.

- Adversarial content/spam → content safety pass; heuristic limits (e.g., max frames per minute per user).
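Backoff is easiest to reason about as a pure function of the attempt number. This sketch retries only transient statuses (429 and 502/503/504, an assumed set), honors a server-provided Retry-After in seconds when present, and otherwise uses "full jitter": a random delay in [0, min(cap, base × 2^attempt)]. The base and cap values are assumptions to tune.

```kotlin
import kotlin.random.Random

private val retryableStatuses = setOf(429, 502, 503, 504)

fun isRetryable(status: Int): Boolean = status in retryableStatuses

fun backoffDelayMs(
    attempt: Int,                   // 0 for the first retry
    retryAfterSeconds: Long? = null,
    baseMs: Long = 500,
    capMs: Long = 30_000,
    random: Random = Random.Default
): Long {
    require(attempt >= 0)
    retryAfterSeconds?.let { return it * 1000 } // the server's hint wins
    val exp = baseMs shl attempt.coerceAtMost(20) // bounded shift avoids overflow
    return random.nextLong(0, minOf(capMs, exp) + 1)
}
```

Jitter spreads retries from many devices over time, so a brief outage does not turn into synchronized retry spikes.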

Minimal schema for a clean API

```json
{
  "id": "req_123",
  "model": "detector-v2",
  "input": {
    "image": { "base64": "..." },
    "hints": { "language": "en", "roi": [ [x1,y1,x2,y2] ] }
  },
  "response": {
    "objects": [
      { "label": "daisy", "score": 0.91, "box": [x,y,w,h] }
    ],
    "segments": [
      { "label": "leaf", "score": 0.88, "mask": "rle..." }
    ]
  }
}
```

Design notes:

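- Always include a top-level request id for tracing.

- State coordinate conventions explicitly: here roi uses corner coordinates [x1,y1,x2,y2] while box uses [x,y,w,h]; mixing them up produces misplaced overlays.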

- Keep masks compressed (RLE/COCO) and optional.

- Reserve a “hints” object for augmenting behavior without breaking compatibility.
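Masks stay compact with run-length encoding. This sketch produces COCO-style uncompressed RLE counts: pixels are read in column-major order and counts alternate between runs of 0s and 1s, always starting with 0s (so the first count may be 0). The compact string form used by COCO tooling such as pycocotools is a further encoding step not shown; the function names are hypothetical.

```kotlin
// mask[y][x]: true = foreground. Returns alternating run lengths, starting with background.
fun encodeRle(mask: Array<BooleanArray>): List<Int> {
    val height = mask.size
    val width = if (height == 0) 0 else mask[0].size
    val counts = mutableListOf<Int>()
    var current = false
    var run = 0
    for (x in 0 until width) {          // column-major: walk down each column
        for (y in 0 until height) {
            if (mask[y][x] != current) { // value changed: close the current run
                counts += run
                current = !current
                run = 0
            }
            run++
        }
    }
    counts += run
    return counts
}

fun decodeRle(counts: List<Int>, height: Int, width: Int): Array<BooleanArray> {
    val mask = Array(height) { BooleanArray(width) }
    var pos = 0 // column-major pixel index: x = pos / height, y = pos % height
    counts.forEachIndexed { i, n ->
        val value = i % 2 == 1 // odd-indexed runs are foreground
        repeat(n) {
            mask[pos % height][pos / height] = value
            pos++
        }
    }
    return mask
}
```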

Checklist before launch

- Functional: on-device path works offline; cloud fallback gated and throttled.

- Performance: P95 latency < target across device tiers; warmup complete before first use.

- Privacy: data map documented; opt-outs honored; redaction validated.

- Reliability: idempotency and retries tested; chaos tests for network faults.

- Observability: dashboards for latency, accuracy, error codes, fallback ratios.

- Documentation: public schemas, error catalog, versioning policy, deprecation timelines.

Conclusion

A great AI image recognition experience on mobile is equal parts ML and engineering discipline. Treat the model as one interchangeable component behind a stable API, push as much as possible to the edge for speed and privacy, and reserve the cloud for what it does best: heavy lifting, long-tail coverage, and continuous improvement. With thoughtful architecture, careful evaluation, and user-centered design, you can ship a fast, trustworthy, and scalable vision feature that delights users and respects their data.
