AI Document Understanding API Tutorial: From PDFs to Structured Data in Production

Build a production‑ready pipeline for AI document understanding: upload, OCR, schema‑based extraction, tables, QA, validation, and storage.


Overview

AI document understanding turns messy, multi‑page PDFs, scans, and images into clean, structured data your apps can trust. In this tutorial you’ll build an end‑to‑end pipeline that:

- Uploads documents (PDFs, TIFFs, JPEGs)

- Runs OCR + layout analysis

- Extracts fields and tables with a schema

- Answers questions about the document

- Validates and stores results

- Handles webhooks, retries, and monitoring for production

All examples are provider‑agnostic and use a hypothetical base URL. Replace endpoints and headers with your vendor’s equivalents.

What you’ll build

We’ll process invoices and produce a normalized JSON payload ready for a database, then query the document (“What’s the due date?”) using retrieval‑augmented QA.

Prerequisites

- An AI document understanding API key (export as DOC_API_KEY)

- Python 3.10+ and Node.js 18+

- curl and jq for quick tests

- A few sample documents: one clean digital PDF and one photographed/low‑contrast scan

Optional but helpful:

- A Postgres instance for storing results

- ngrok or a public HTTPS endpoint to receive webhooks

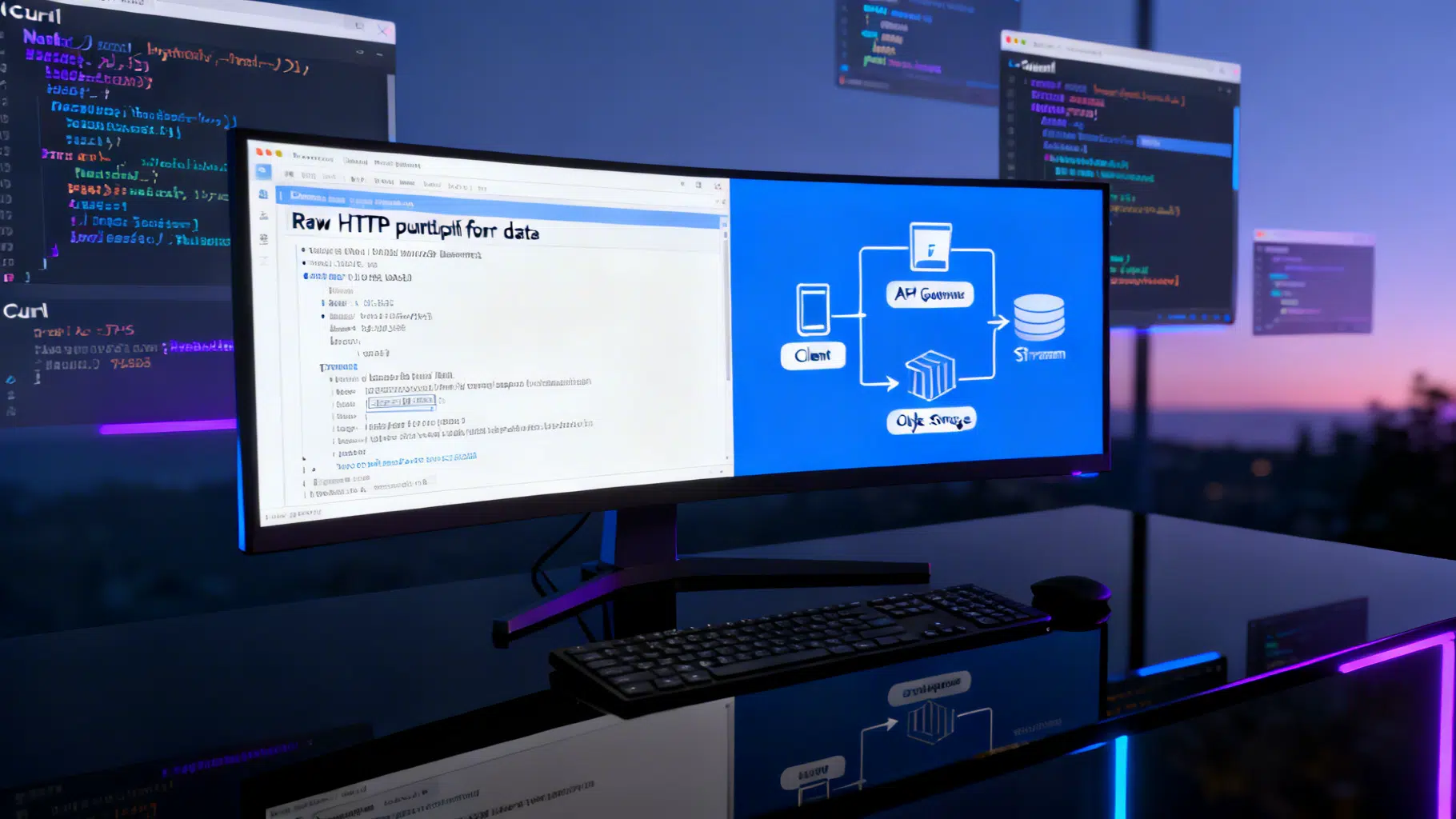

Architecture at a glance

```text
[Client] -> Upload          -> [API: /documents]
         -> OCR/Layout      -> [API: /pipelines/ocr]
         -> Extract         -> [API: /extract] --(schema)--> JSON
         -> Tables          -> [API: /tables]
         -> QA              -> [API: /query]
         -> Webhook         -> [Your service]
         -> Validate/Store  -> [DB]
```

Key ideas:

- Separate ingestion from extraction so you can re‑use uploaded pages with different prompts/schemas.

- Use webhooks for long‑running jobs; poll only when webhooks aren’t possible.

- Keep extraction prompts and schemas versioned so results can be reproduced and compared across runs.

Step 1 — Upload a document

Upload the file as multipart/form-data. The response contains a document ID that every later step reuses.

curl

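```bash
# Generate the key once and reuse it if you retry this same upload;
# a fresh key per attempt would defeat idempotency.
IDEMPOTENCY_KEY=$(uuidgen)

curl -X POST https://api.example.ai/v1/documents \
  -H "Authorization: Bearer $DOC_API_KEY" \
  -H "Idempotency-Key: $IDEMPOTENCY_KEY" \
  -F file=@./samples/invoice.pdf \
  -F metadata='{"source":"tutorial","doctype":"invoice"}'
```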

Example response

```json
{
  "id": "doc_12345",
  "status": "uploaded",
  "pages": 3,
  "content_type": "application/pdf"
}
```

Notes

- For large files, chunked uploads or pre‑signed URLs may be required.

- Store the mapping between your internal record and doc_id.

Step 2 — Run OCR and layout analysis

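Trigger OCR with language hints and feature flags (tables, handwriting, barcodes). Supplying a webhook_url lets the API notify you when the job finishes.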

curl

```bash
curl -X POST https://api.example.ai/v1/pipelines/ocr \
  -H "Authorization: Bearer $DOC_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "document_id": "doc_12345",
    "languages": ["en"],
    "features": {
      "tables": true,
      "handwriting": false,
      "barcodes": true
    },
    "webhook_url": "https://yourapp.com/webhooks/ocr"
  }'
```

Example response

```json
{
  "job_id": "job_ocr_7788",
  "status": "queued"
}
```

Polling (if no webhook)

```bash
curl -H "Authorization: Bearer $DOC_API_KEY" \
  https://api.example.ai/v1/jobs/job_ocr_7788
```

When complete, you’ll have page‑level text, tokens, bounding boxes, and reading order—crucial for reliable extraction and table parsing.
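If you must poll, a growing interval with an overall deadline is gentler on rate limits than a tight fixed loop. The sketch below is provider-agnostic: `fetch_status` is a hypothetical callable you supply (for example, one that GETs `/v1/jobs/{job_id}` and returns the parsed JSON), and the terminal statuses `succeeded`/`failed` are assumed from the examples in this tutorial. `sleep` and `clock` are injectable so the loop can be tested without waiting.

```python
import time
from typing import Callable

TERMINAL = {"succeeded", "failed"}  # assumed terminal statuses, as in this tutorial's examples


def poll_job(fetch_status: Callable[[], dict], *, initial: float = 1.0,
             factor: float = 2.0, max_interval: float = 30.0,
             deadline_s: float = 600.0,
             sleep: Callable[[float], None] = time.sleep,
             clock: Callable[[], float] = time.monotonic) -> dict:
    """Call fetch_status() until the job reaches a terminal status or the deadline passes."""
    deadline = clock() + deadline_s
    interval = initial
    while True:
        job = fetch_status()
        if job.get("status") in TERMINAL:
            return job
        remaining = deadline - clock()
        if remaining <= 0:
            raise TimeoutError(f"job still {job.get('status')!r} after {deadline_s}s")
        # Never sleep past the deadline; grow the interval geometrically up to a cap
        sleep(min(interval, remaining))
        interval = min(interval * factor, max_interval)
```

With the defaults, a job that finishes on the fourth check sleeps 1 s, 2 s, then 4 s between checks.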

Step 3 — Define a structured extraction schema

Treat extraction as “programming with data contracts.” Define the fields, types, and validations you expect. Keep this schema in version control.

invoice.schema.json

```json
{
  "$schema": "https://json-schema.org/draft/2020-12/schema",
  "$id": "https://schemas.yourapp.com/invoice.v1.json",
  "title": "Invoice v1",
  "type": "object",
  "required": ["invoice_number", "invoice_date", "seller", "buyer", "total"],
  "properties": {
    "invoice_number": {"type": "string"},
    "invoice_date": {"type": "string", "format": "date"},
    "due_date": {"type": "string", "format": "date"},
    "seller": {"type": "string"},
    "buyer": {"type": "string"},
    "currency": {"type": "string", "pattern": "^[A-Z]{3}$"},
    "subtotal": {"type": "number"},
    "tax": {"type": "number"},
    "total": {"type": "number"}
  }
}
```

Extraction request

```bash
curl -X POST https://api.example.ai/v1/extract \
  -H "Authorization: Bearer $DOC_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "document_id": "doc_12345",
    "schema": {"$ref": "https://schemas.yourapp.com/invoice.v1.json"},
    "prompt": "Extract invoice fields. Prefer values on the first page if duplicates appear.",
    "webhook_url": "https://yourapp.com/webhooks/extract"
  }'
```

Example response payload

```json
{
  "job_id": "job_extract_9012",
  "status": "queued"
}
```

Job result (abridged)

```json
{
  "document_id": "doc_12345",
  "status": "succeeded",
  "output": {
    "invoice_number": {"value": "INV-2026-0417", "page": 1, "bbox": [92, 118, 250, 136]},
    "invoice_date": {"value": "2026-04-01", "page": 1},
    "due_date": {"value": "2026-04-30", "page": 1},
    "seller": {"value": "Acme Components LLC"},
    "buyer": {"value": "Northwind Traders"},
    "currency": {"value": "USD"},
    "subtotal": {"value": 1249.5},
    "tax": {"value": 112.46},
    "total": {"value": 1361.96}
  },
  "confidence": 0.94
}
```

Tips

- Ask for bounding boxes and page numbers to support review UIs.

- Prefer ISO 8601 dates and three-letter ISO 4217 currency codes for easy validation.
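The job result wraps each field in an object with `value` and optional `page`/`bbox`, while domain checks such as those in Step 7 are easier to write against plain values. A small helper can split the two. This is a sketch against the abridged result shape above: the `output` and `confidence` keys come from that example, and the 0.85 review threshold mirrors the one suggested in Step 9.

```python
from typing import Any


def split_output(job: dict, review_threshold: float = 0.85) -> tuple[dict, dict, bool]:
    """Return (values, provenance, needs_review) from an extraction job result."""
    values: dict[str, Any] = {}
    provenance: dict[str, dict] = {}
    for field, item in job.get("output", {}).items():
        # Each field looks like {"value": ..., "page": ..., "bbox": [...]}; page/bbox may be absent
        values[field] = item.get("value")
        provenance[field] = {k: item[k] for k in ("page", "bbox") if k in item}
    confidence = job.get("confidence")
    # A missing confidence score is treated as "needs review" rather than silently trusted
    needs_review = confidence is None or confidence < review_threshold
    return values, provenance, needs_review
```

Keeping `provenance` alongside the values lets a review UI highlight exactly where each field was read.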

Step 4 — Extract line items as a table

Tables often cause the most pain. Request structured rows with an explicit column mapping (names and types); if your provider supports it, also enable tolerance for merged cells.

```bash
curl -X POST https://api.example.ai/v1/tables \
  -H "Authorization: Bearer $DOC_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "document_id": "doc_12345",
    "table_hint": "Line items table usually below the header on page 1",
    "columns": [
      {"name": "description", "type": "string"},
      {"name": "qty", "type": "number"},
      {"name": "unit_price", "type": "number"},
      {"name": "line_total", "type": "number"}
    ]
  }'
```

Example table output

```json
{
  "rows": [
    {"description": "Gear Assembly A2", "qty": 5, "unit_price": 199.90, "line_total": 999.50},
    {"description": "Shipping", "qty": 1, "unit_price": 250.00, "line_total": 250.00}
  ],
  "page": 1,
  "confidence": 0.91
}
```

Best practices

- Provide column names and types to reduce hallucinations.

- Enable header detection and tolerance for rotated text when scanning.

- Consider a “strict mode” to fail extraction when any cell is low confidence.
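Line items also give you an independent arithmetic check: each `qty * unit_price` should match `line_total`, and the line totals should sum to the `subtotal` from Step 3 (in the example, 5 × 199.90 = 999.50 and 999.50 + 250.00 = 1249.50). A minimal sketch, assuming the row shape shown above; it uses `Decimal` to avoid float drift, and the one-cent tolerance is an assumption you may want to tighten.

```python
from decimal import Decimal

CENT = Decimal("0.01")


def dec(x) -> Decimal:
    # Go through str() so a float such as 199.9 doesn't carry binary noise into Decimal
    return Decimal(str(x))


def check_line_items(rows: list[dict], subtotal, tol: Decimal = CENT) -> list[str]:
    """Return human-readable problems; an empty list means the table reconciles."""
    problems = []
    for i, row in enumerate(rows):
        expected = dec(row["qty"]) * dec(row["unit_price"])
        if abs(expected - dec(row["line_total"])) > tol:
            problems.append(f"row {i}: qty * unit_price = {expected}, line_total = {row['line_total']}")
    rows_sum = sum((dec(r["line_total"]) for r in rows), Decimal("0"))
    if abs(rows_sum - dec(subtotal)) > tol:
        problems.append(f"sum of line_total = {rows_sum}, subtotal = {subtotal}")
    return problems
```

A non-empty result is a natural trigger for the "strict mode" or human-review path described above.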

Step 5 — Ask questions about the document

Use retrieval QA to answer user queries grounded in the uploaded pages.

```bash
curl -X POST https://api.example.ai/v1/query \
  -H "Authorization: Bearer $DOC_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "document_id": "doc_12345",
    "question": "What is the payment due date and accepted methods?",
    "citations": true
  }'
```

Example answer

```json
{
  "answer": "Due 2026-04-30. Accepted methods: ACH, wire, major credit cards.",
  "citations": [
    {"page": 1, "bbox": [80, 640, 420, 700], "snippet": "Payment due 30 Apr 2026..."}
  ]
}
```
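Citations are what make an answer checkable, so you may want to refuse answers that arrive without them. A minimal sketch, assuming the response shape shown above, 1-based page numbers, and the page count returned at upload time (`pages` in Step 1):

```python
def is_grounded(answer: dict, page_count: int) -> bool:
    """True if the answer has at least one citation, each pointing at a real page with a snippet."""
    citations = answer.get("citations") or []
    if not answer.get("answer") or not citations:
        return False
    for c in citations:
        page = c.get("page")
        # Pages are assumed to be 1-based, matching the examples in this tutorial
        if not isinstance(page, int) or not 1 <= page <= page_count:
            return False
        if not c.get("snippet"):
            return False
    return True
```

When the check fails, replying "I couldn't find that in the document" is usually safer than showing an unsupported answer.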

Step 6 — Receive results with webhooks

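Long-running extractions are best delivered by webhook, so your service doesn't have to hold connections open or poll. Verify the signature against the raw request body before trusting the payload.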

Node.js (Express)

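```javascript
import express from 'express';
import crypto from 'node:crypto';

const app = express();
const SECRET = process.env.WEBHOOK_SECRET;

// Compare a hex-encoded HMAC-SHA256 signature with one computed over the raw body.
function verify(signatureHex, rawBody, secret) {
  const expected = crypto.createHmac('sha256', secret).update(rawBody).digest();
  const given = Buffer.from(signatureHex, 'hex');
  // timingSafeEqual throws if the lengths differ, so check length first
  return given.length === expected.length && crypto.timingSafeEqual(given, expected);
}

// express.raw keeps the exact signed bytes; JSON.stringify(req.body) would not
// reliably reproduce them (key order, whitespace, number formatting).
app.post('/webhooks/extract', express.raw({ type: 'application/json' }), (req, res) => {
  const sig = req.header('X-Signature') || '';
  if (!SECRET || !Buffer.isBuffer(req.body) || !verify(sig, req.body, SECRET)) {
    return res.sendStatus(401);
  }
  const event = JSON.parse(req.body.toString('utf8'));
  console.log('webhook', event.job_id, event.status);
  // Upsert job status and results keyed by job_id (deliveries may repeat)
  // Enqueue post-processing, then acknowledge quickly
  res.sendStatus(200);
});

app.listen(3000, () => console.log('Webhook listening on :3000'));
```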
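To exercise the endpoint before the provider is wired up, you can sign a test payload yourself. This Python sketch assumes the same convention as the handler above (hex-encoded HMAC-SHA256 of the raw body, sent in `X-Signature`); check your vendor's documentation, since many providers also sign a timestamp to block replays.

```python
import hashlib
import hmac
import json


def sign(body: bytes, secret: str) -> str:
    """Hex HMAC-SHA256 of the exact bytes that will be sent."""
    return hmac.new(secret.encode("utf-8"), body, hashlib.sha256).hexdigest()


def verify(body: bytes, signature: str, secret: str) -> bool:
    # compare_digest avoids leaking how many leading characters matched
    return hmac.compare_digest(sign(body, secret).encode("utf-8"), signature.encode("utf-8"))


if __name__ == "__main__":
    body = json.dumps({"job_id": "job_extract_9012", "status": "succeeded"}).encode("utf-8")
    sig = sign(body, "test-secret")  # use the same value as WEBHOOK_SECRET on the server
    print("curl -X POST http://localhost:3000/webhooks/extract "
          f"-H 'Content-Type: application/json' -H 'X-Signature: {sig}' "
          f"--data '{body.decode()}'")
```

Pasting the printed command into a shell sends a correctly signed request; changing a single byte of the body should produce a 401.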

Step 7 — Validate and normalize

Add domain checks before writing to your systems.

Python

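```python
from datetime import date
from decimal import Decimal, ROUND_HALF_UP

REQUIRED = ["invoice_number", "invoice_date", "seller", "buyer", "total"]


def normalize_amount(x):
    # Go through str() so a float such as 112.46 doesn't carry binary noise into Decimal
    return Decimal(str(x)).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def validate_invoice(o):
    """Validate a flat {field: value} invoice dict; raise ValueError on the first problem."""
    # Required fields (raise rather than assert: asserts are skipped under python -O)
    missing = [k for k in REQUIRED if o.get(k) in (None, "")]
    if missing:
        raise ValueError(f"Missing {', '.join(missing)}")

    # Dates: ISO 8601 (YYYY-MM-DD); the due date may not precede the invoice date
    inv_date = date.fromisoformat(o["invoice_date"])
    if due := o.get("due_date"):
        if date.fromisoformat(due) < inv_date:
            raise ValueError("Due date before invoice date")

    # Arithmetic: reconcile only when both subtotal and tax were extracted
    total = normalize_amount(o["total"])
    amounts = {"total": float(total)}
    if o.get("subtotal") is not None and o.get("tax") is not None:
        subtotal = normalize_amount(o["subtotal"])
        tax = normalize_amount(o["tax"])
        if subtotal + tax != total:
            raise ValueError(f"Subtotal + tax != total ({subtotal} + {tax} != {total})")
        amounts.update(subtotal=float(subtotal), tax=float(tax))

    # Currency: three uppercase letters, as in ISO 4217 (e.g. USD)
    if cur := o.get("currency"):
        if not (len(cur) == 3 and cur.isalpha() and cur.isupper()):
            raise ValueError(f"Invalid currency code: {cur!r}")

    # Floats keep the dict JSON-serializable for the raw jsonb column;
    # the numeric(12,2) columns store them at two decimal places
    return {**o, **amounts}
```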

Step 8 — Store results

SQL

```sql
create table invoices (
  id             text primary key,
  invoice_number text not null,
  invoice_date   date not null,
  due_date       date,
  seller         text,
  buyer          text,
  currency       char(3),
  subtotal       numeric(12,2),
  tax            numeric(12,2),
  total          numeric(12,2) not null,
  raw            jsonb not null,
  created_at     timestamptz default now()
);
```

Python insert

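```python
import json

import psycopg2  # conn below is a psycopg2 connection, e.g. psycopg2.connect(dsn)


def save_invoice(conn, doc_id, parsed):
    """Upsert one validated invoice, keyed by the provider's document ID."""
    with conn.cursor() as cur:
        # Re-running extraction for the same document refreshes every column, not only raw
        cur.execute(
            """
            insert into invoices (id, invoice_number, invoice_date, due_date, seller, buyer,
                                  currency, subtotal, tax, total, raw)
            values (%s, %s, %s, %s, %s, %s, %s, %s, %s, %s, %s)
            on conflict (id) do update set
                invoice_number = excluded.invoice_number,
                invoice_date   = excluded.invoice_date,
                due_date       = excluded.due_date,
                seller         = excluded.seller,
                buyer          = excluded.buyer,
                currency       = excluded.currency,
                subtotal       = excluded.subtotal,
                tax            = excluded.tax,
                total          = excluded.total,
                raw            = excluded.raw
            """,
            (
                doc_id,
                parsed.get("invoice_number"),
                parsed.get("invoice_date"),
                parsed.get("due_date"),
                parsed.get("seller"),
                parsed.get("buyer"),
                parsed.get("currency"),
                parsed.get("subtotal"),
                parsed.get("tax"),
                parsed.get("total"),
                json.dumps(parsed),  # stored in the jsonb column
            ),
        )
    conn.commit()
```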

Step 9 — Evaluate and benchmark

Create a golden set of documents and expected outputs. Compute field‑level precision/recall and table accuracy.

Metrics

- Exact‑match accuracy per field

- Numeric tolerance (e.g., ±0.01) for amounts

- Edit distance for IDs and names

- Table row recall and column accuracy

Process

- Run the pipeline on the golden set after each change.

- Diff against the ground truth; fail the build if KPIs drop.

- Track confidence scores and add a “human review required” threshold (e.g., < 0.85).
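The per-field metrics above can be computed with the standard library. In this sketch, which fields count as amounts and which as IDs/names is an assumption for the invoice schema, and `difflib.SequenceMatcher.ratio()` stands in for a normalized edit distance (it is a similarity ratio in [0, 1], not Levenshtein distance).

```python
from decimal import Decimal, InvalidOperation
from difflib import SequenceMatcher

AMOUNT_FIELDS = {"subtotal", "tax", "total"}           # numeric tolerance
FUZZY_FIELDS = {"invoice_number", "seller", "buyer"}   # string similarity


def score_field(name: str, expected, predicted, tol: float = 0.01) -> float:
    """Score one field in [0, 1]: tolerance for amounts, similarity for names, exact otherwise."""
    if expected is None or predicted is None:
        return 1.0 if expected is predicted else 0.0
    if name in AMOUNT_FIELDS:
        try:
            diff = abs(Decimal(str(expected)) - Decimal(str(predicted)))
        except InvalidOperation:
            return 0.0
        return 1.0 if diff <= Decimal(str(tol)) else 0.0
    if name in FUZZY_FIELDS:
        return SequenceMatcher(None, str(expected), str(predicted)).ratio()
    return 1.0 if expected == predicted else 0.0  # dates, currency, ...


def field_accuracy(pairs) -> dict[str, float]:
    """Mean score per field over (expected, predicted) dict pairs from the golden set."""
    scores: dict[str, list[float]] = {}
    for expected, predicted in pairs:
        for name, value in expected.items():
            scores.setdefault(name, []).append(score_field(name, value, predicted.get(name)))
    return {name: sum(v) / len(v) for name, v in scores.items()}
```

In CI you could compare each field's accuracy against the previous run and fail the build when any drops.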

Step 10 — Production hardening

- Idempotency: send an Idempotency‑Key on uploads and extraction calls.

- Rate limits: implement exponential backoff and jitter; parallelize within vendor quotas.

- Retries: retry 429 and 5xx with backoff; don't retry other 4xx, except 408/409 when the request is idempotent.

- Webhook security: verify HMAC signatures; reject unsigned payloads.

- Redaction: mask PII you don’t need (SSNs, account numbers) before logging.

- Data residency: select regions and disable training on your data if required.

- Observability: log job_id, document_id, model version, latency, token usage, and confidence.

Cost and performance tuning

- Prefer the embedded text layer for digital PDFs; use vision‑heavy OCR only for scans.

- Crop to content: remove blank margins to cut tokens and speed up.

- Page selection: extract only header + totals for invoices when tables aren’t needed.

- Caching: memoize stable results by document hash.

- Schema scoping: smaller, focused schemas tend to improve accuracy and reduce hallucinations.

- Batch mode: group multiple documents to amortize overhead and save on requests.
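Memoizing by document hash is only safe if the key also changes whenever something that affects the output changes. A sketch, assuming you key on the file bytes plus the schema `$id`, the prompt, and a model/version string of your choosing; `extract_cached` and `run_extraction` are hypothetical names for illustration, and the in-memory dict stands in for Redis or a database table.

```python
import hashlib
import json

_cache: dict[str, dict] = {}  # stand-in for a shared cache


def cache_key(file_bytes: bytes, schema_id: str, prompt: str, model_version: str) -> str:
    """Same document + same extraction settings -> same key."""
    h = hashlib.sha256()
    h.update(hashlib.sha256(file_bytes).digest())
    # Canonical JSON (sorted keys, no whitespace) so argument order can't change the key
    settings = json.dumps({"schema": schema_id, "prompt": prompt, "model": model_version},
                          sort_keys=True, separators=(",", ":"))
    h.update(settings.encode("utf-8"))
    return h.hexdigest()


def extract_cached(file_bytes, schema_id, prompt, model_version, run_extraction):
    """run_extraction is a hypothetical zero-argument callable that performs the API calls."""
    key = cache_key(file_bytes, schema_id, prompt, model_version)
    if key not in _cache:
        _cache[key] = run_extraction()
    return _cache[key]
```

Bumping the schema to `invoice.v2.json` or editing the prompt produces a new key, so stale results are never served for a changed contract.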

Common errors and fixes

- 400 Invalid schema: validate locally against JSON Schema before calling the API.

- 413 Payload too large: switch to chunked uploads or pre‑signed URLs.

- 415 Unsupported media type: convert images to PNG/JPEG and PDFs to PDF/A when possible.

- 429 Too many requests: respect Retry‑After; implement client‑side rate limiting.

- 408 Request Timeout / 499 (nginx: client closed request): for long jobs, use webhooks or job polling instead of holding one request open, or raise client timeouts.

- Mis‑extracted numbers: strip thousands separators; normalize decimals with a locale‑aware parser.

- Wrong tables: provide explicit column headers and page/table hints; enable rotated text detection.
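For mis-extracted numbers, the key is knowing which character is the decimal separator. A minimal sketch that takes the separator explicitly (for example from the document's locale or the vendor's metadata) rather than guessing; a library such as Babel (`babel.numbers.parse_decimal`) can do this from CLDR locale data if you prefer.

```python
import re
from decimal import Decimal, InvalidOperation


def parse_amount(text: str, decimal_sep: str = ".") -> Decimal:
    """Parse an amount like '$1,249.50' or '1.249,50 €' given the decimal separator."""
    if decimal_sep not in (".", ","):
        raise ValueError("decimal_sep must be '.' or ','")
    s = text.strip()
    negative = s.startswith("(") and s.endswith(")")  # accounting negatives: (12.00)
    # Keep only digits, separators and minus; drops currency symbols, spaces, apostrophes
    s = re.sub(r"[^\d.,\-]", "", s)
    group_sep = "," if decimal_sep == "." else "."
    s = s.replace(group_sep, "").replace(decimal_sep, ".")
    try:
        value = Decimal(s)
    except InvalidOperation:
        raise ValueError(f"Not an amount: {text!r}") from None
    return -value if negative else value
```

For example, `parse_amount("1.249,50 €", decimal_sep=",")` and `parse_amount("$1,249.50")` both yield `Decimal("1249.50")`.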

End‑to‑end Python example

```python
import json
import os
import time

import requests

BASE = "https://api.example.ai/v1"
KEY = os.environ["DOC_API_KEY"]
H = {"Authorization": f"Bearer {KEY}"}
TIMEOUT = 30  # seconds per HTTP request; requests has no default timeout


# 1) Upload
def upload(path):
    with open(path, "rb") as f:
        r = requests.post(f"{BASE}/documents", headers=H, files={"file": f}, timeout=TIMEOUT)
    r.raise_for_status()
    return r.json()["id"]


# 2) OCR (json= serializes the body and sets Content-Type: application/json)
def ocr(doc_id):
    body = {"document_id": doc_id, "languages": ["en"], "features": {"tables": True}}
    r = requests.post(f"{BASE}/pipelines/ocr", headers=H, json=body, timeout=TIMEOUT)
    r.raise_for_status()
    return r.json()["job_id"]


# Helper: poll until the job finishes, with an overall deadline
def wait_job(job_id, deadline_s=600, interval_s=1.2):
    deadline = time.monotonic() + deadline_s
    while time.monotonic() < deadline:
        r = requests.get(f"{BASE}/jobs/{job_id}", headers=H, timeout=TIMEOUT)
        r.raise_for_status()
        job = r.json()
        if job["status"] in ("succeeded", "failed"):
            return job
        time.sleep(interval_s)
    raise TimeoutError(f"Job {job_id} did not finish within {deadline_s}s")


# 3) Extract
def extract(doc_id):
    payload = {
        "document_id": doc_id,
        "schema": {"$ref": "https://schemas.yourapp.com/invoice.v1.json"},
        "prompt": "Extract invoice fields precisely; output ISO dates and 2dp numbers.",
    }
    r = requests.post(f"{BASE}/extract", headers=H, json=payload, timeout=TIMEOUT)
    r.raise_for_status()
    job = wait_job(r.json()["job_id"])
    if job["status"] != "succeeded":
        raise RuntimeError(f"Extraction failed: {job}")
    return job["output"]


if __name__ == "__main__":
    doc = upload("./samples/invoice.pdf")
    print("doc:", doc)
    ocr_job = wait_job(ocr(doc))  # ensure OCR is done before extraction
    if ocr_job["status"] != "succeeded":
        raise SystemExit(f"OCR failed: {ocr_job}")
    result = extract(doc)
    print(json.dumps(result, indent=2))
```

Troubleshooting checklist

- If confidence is low, try: better scans (300+ DPI), deskewing, or enabling table detection.

- If dates parse incorrectly, specify expected locale/format in the prompt and normalize post‑extraction.

- If totals don’t reconcile, extract line items and recompute; prefer numeric parsing over text copying.

- For multilingual docs, pass language hints or enable auto‑detect; consider fallback OCR models.

Where to go next

- Add a human‑in‑the‑loop review UI wired to page bboxes.

- Build templates for common vendors and route docs by classifier.

- Introduce active learning: send low‑confidence fields to annotation, retrain periodically.

- Expand to receipts, ID cards, utility bills, and bank statements with tailored schemas and QA.

Summary

You now have a repeatable, production‑grade approach to document understanding: upload, OCR, extract with a contract, validate, and store—augmented with tables, QA, and strong operational practices. Adapt the endpoints to your provider, keep schemas versioned, and measure quality continuously. That’s the difference between a demo and a dependable pipeline.

Related Posts

DeepSeek API Integration Tutorial: From First Call to Production

Step-by-step DeepSeek API integration: base URL, models, cURL/Python/Node code, streaming, thinking mode, tool calls, errors, and production tips.

REST API Multipart File Upload: A Practical, End-to-End Guide

A concise, end-to-end guide to designing, implementing, and securing multipart/form-data file uploads in REST APIs, with examples in Node, Python, Java, .NET, and Go.

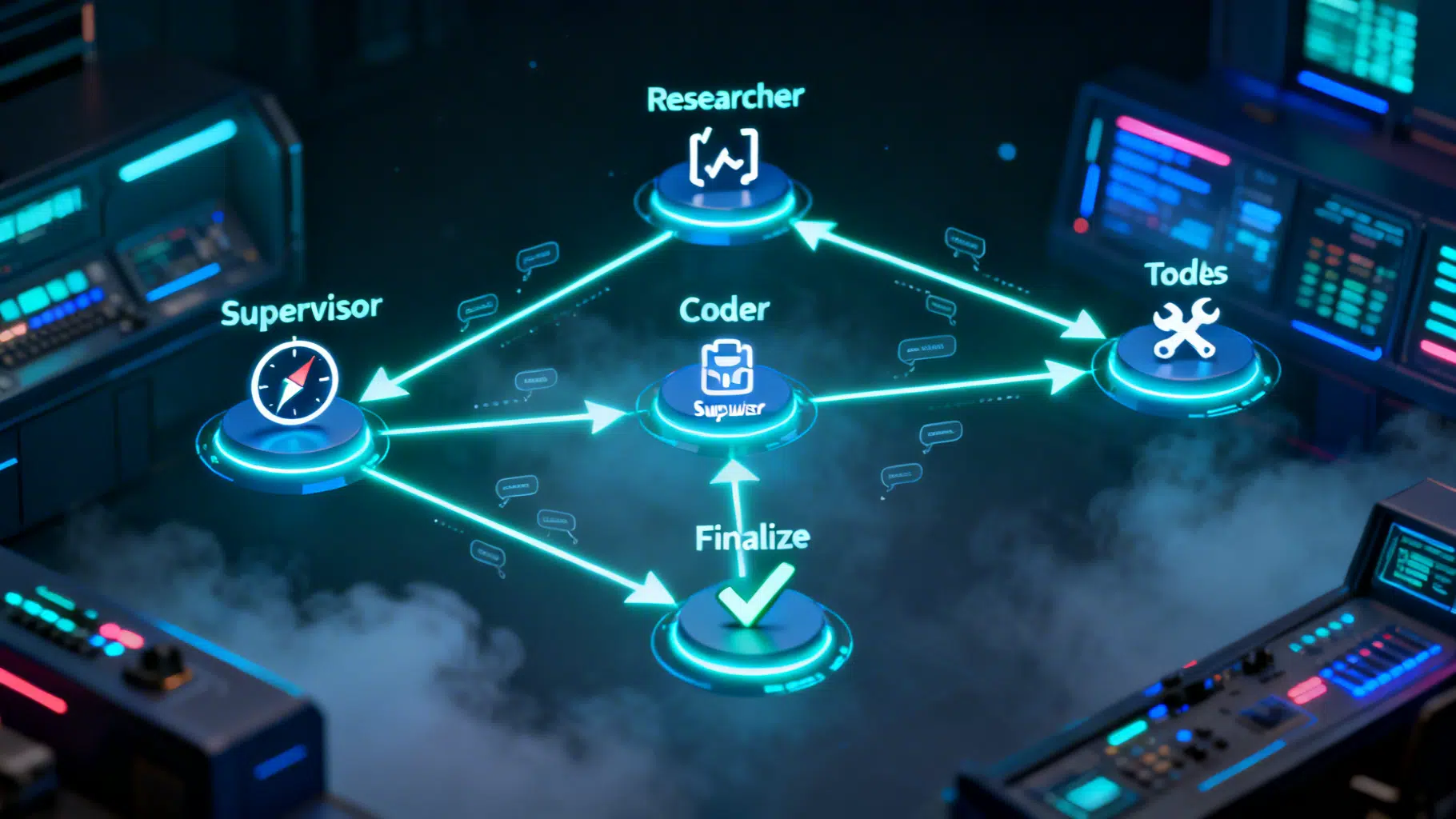

LangGraph Multi‑Agent Workflow Tutorial: From Supervisor Routing to Tool Execution

Build a production-ready LangGraph multi-agent workflow with a supervisor, tools, checkpointing, and streaming—step-by-step with tested Python code.