GraphQL Caching Strategies on Client and Server: A Practical Playbook

A practical guide to GraphQL caching on the client and server—policies, headers, CDNs, and invalidation patterns that keep data fast and correct.


Why GraphQL Caching Is Different

Caching is one of the fastest ways to improve perceived performance and reduce backend load. With GraphQL, however, cacheability isn’t as straightforward as in REST because:

- Most operations are sent as POST requests, and browsers, proxies, and CDNs do not cache POST responses by default.

- Responses are shaped by the query, variables, and user context; two queries for the same entity can return different selections.

- Results often combine multiple backend resources, each with its own volatility.

This article maps out practical, battle-tested GraphQL caching strategies across client, edge/CDN, gateway, and resolver layers, and shows how to keep data fast without sacrificing correctness.

A Layered Mental Model

Think about caching as layered responsibilities:

- Client: normalized entity cache, UI state, pagination windows.

- Edge/CDN: public response caching for safe, cacheable queries.

- Gateway/server: full-response caching, field-level memoization, and compilation caches (parse/validate/plan).

- Data sources: database and HTTP caches; per-request batching/deduping.

Also distinguish:

- Response caching: store the entire JSON result of an operation.

- Object/field caching: cache specific entities or resolver outputs.

- Scope: public (shared) vs private (per-user/session) caches.

Client-Side Caching: Fast UI and Fewer Round Trips

Client caches serve repeat reads from memory, with no network round trip, and make optimistic UI possible. The leading approaches normalize data by entity so multiple queries share the same records.

Normalization and Cache Keys

- Use the pair (__typename, id) as the canonical cache key for entities.

- For types lacking a stable id, define a custom key from unique fields.

- Include variables in field keys (e.g., list arguments like search, filters, and cursors) so different views don’t overwrite each other.

Example (Apollo Client):

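    import { InMemoryCache } from "@apollo/client";
    import { relayStylePagination } from "@apollo/client/utilities";

    const cache = new InMemoryCache({
      typePolicies: {
        // Products are identified by SKU instead of the default id field.
        Product: { keyFields: ["sku"] },
        Query: {
          fields: {
            // Only category and sort form the field key; cursor args are handled by the helper.
            products: relayStylePagination(["category", "sort"]),
          },
        },
      },
    });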
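To make the keying rules concrete outside any particular client library, here is a minimal sketch of how an entity key and an argument-aware field key could be derived. The helpers `entityKey` and `fieldKey` are hypothetical names used for illustration; they are not Apollo Client APIs, and real clients use their own internal key formats.

```javascript
// Hypothetical helpers for illustration; not an Apollo Client API.

// Build a canonical entity key from __typename plus the chosen key fields.
function entityKey(obj, keyFields = ["id"]) {
  const parts = keyFields.map((f) => {
    if (obj[f] === undefined) throw new Error(`Missing key field: ${f}`);
    return `${f}:${JSON.stringify(obj[f])}`;
  });
  return `${obj.__typename}:{${parts.join(",")}}`;
}

// Build a field key from only the arguments that define a distinct view.
// Arguments left out of keyArgs (such as cursors) share one cache entry.
function fieldKey(fieldName, args, keyArgs) {
  const picked = {};
  for (const name of [...keyArgs].sort()) {
    if (args[name] !== undefined) picked[name] = args[name];
  }
  return `${fieldName}(${JSON.stringify(picked)})`;
}

console.log(entityKey({ __typename: "Product", sku: "A-1" }, ["sku"]));
console.log(
  fieldKey("products", { sort: "price", category: "shoes", after: "c9" }, ["category", "sort"])
);
```

Sorting the argument names means two call sites that pass the same filters in a different order still resolve to the same cached list.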

Fetch Policies and Partial Results

- cache-first: fastest perceived performance; good for stable data.

- cache-and-network: show cached data, then refresh; great default for lists.

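- network-only / no-cache: use for highly dynamic or user-specific data. network-only still writes the result to the cache; no-cache bypasses the cache entirely.

- standby: behaves like cache-first, but the query does not update automatically when cached values change; refresh it manually (for example with refetch).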

- Allow partial data with precise loading states for better UX.
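The difference between the main policies is easiest to see as control flow. The sketch below is a simplified model, not Apollo Client's implementation: `runQuery`, `fetchFn`, and `onResult` are hypothetical names, and the cache is a plain `Map`.

```javascript
// Simplified model of fetch-policy behaviour; `fetchFn` stands in for the network call.
async function runQuery(policy, cache, key, fetchFn, onResult) {
  const cached = cache.get(key);
  if (policy === "cache-first" && cached !== undefined) {
    onResult(cached, "cache"); // cache hit: no network request at all
    return;
  }
  if (policy === "cache-and-network" && cached !== undefined) {
    onResult(cached, "cache"); // render immediately, then refresh below
  }
  const fresh = await fetchFn();
  if (policy !== "no-cache") cache.set(key, fresh); // network-only still writes to the cache
  onResult(fresh, "network");
}

// Usage: a list screen that shows stale data first, then the refreshed result.
const listCache = new Map([["products", ["cached item"]]]);
runQuery(
  "cache-and-network",
  listCache,
  "products",
  async () => ["fresh item"],
  (data, source) => console.log(source, data)
);
```

With cache-and-network the callback fires twice, which is why that policy pairs well with a loading indicator that distinguishes "refreshing" from "empty".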

Keeping the Cache Fresh

- Write precise updates after mutations (writeQuery/writeFragment) to avoid full refetches.

- Use field policies (read/merge) to implement cursor or offset pagination.

- Evict or “tag” related records when a mutation affects many items.

- Optimistic updates keep the UI responsive; reconcile on server response.
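The optimistic-update step can be sketched independently of any client library. In this minimal example the store is a plain `Map`, and `optimisticUpdate` and `sendMutation` are hypothetical names; a real client would also need to handle overlapping mutations on the same record.

```javascript
// Hypothetical store: a Map of entity key -> record.
// `sendMutation` stands in for the network call and resolves to the server's record.
async function optimisticUpdate(store, key, patch, sendMutation) {
  const previous = store.get(key);
  store.set(key, { ...previous, ...patch }); // apply the optimistic value right away
  try {
    const serverRecord = await sendMutation(patch);
    store.set(key, { ...previous, ...serverRecord }); // reconcile with the server's answer
    return true;
  } catch (err) {
    // Roll back so the UI does not keep showing a value the server rejected.
    if (previous === undefined) store.delete(key);
    else store.set(key, previous);
    return false;
  }
}
```

Reconciling against the snapshot taken before the optimistic write, rather than against the optimistic value, keeps server-computed fields authoritative.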

Local-First Enhancements

- Persist the normalized cache to storage for instant cold-starts.

- Preload queries during navigation or idle time.

- For SSR/SSG, hydrate the store with server-fetched data to avoid layout shifts.

Server and Gateway Caching: Big Wins With Guardrails

Server-side caches cut tail latency and protect downstream systems.

Response Caching at the GraphQL Layer

- Key by: operation id/name, normalized query text (or persisted id), variables, and relevant request headers.

- Respect user context: include Authorization or user-id in the key for private data; otherwise mark as public.

- Attach cache metadata (TTL, tags) to support targeted invalidation.

Many servers support a response-cache plugin or middleware; if not, a simple Redis-backed store keyed by a hash of the inputs works well.

Pseudocode:

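    // Sketch only: hash, pick, isPublic, and executeGraphQL are app-specific helpers;
    // redis is assumed to be a connected node-redis v4 client.
    async function cachedExecute({ opId, query, variables, context, req }) {
      const headers = pick(req.headers, ["accept-language", "authorization"]);
      const key = hash({ opId, variables, headers });
      const cached = await redis.get(key);
      if (cached) return JSON.parse(cached); // cache hit
      const result = await executeGraphQL({ query, variables, context });
      // Store only error-free results that are safe to share.
      if (!result.errors && isPublic(result)) await redis.setEx(key, 60, JSON.stringify(result)); // 60s TTL
      return result;
    }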
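The `hash` helper in the pseudocode above is left undefined. One way to write it, shown here as a sketch under the assumption that inputs are plain JSON-like values, is to serialize with sorted object keys before hashing so that property order never changes the cache key. `stableStringify` is a hypothetical helper name.

```javascript
const { createHash } = require("node:crypto");

// Serialize with sorted object keys so {a:1,b:2} and {b:2,a:1} produce the same string.
function stableStringify(value) {
  if (value === null || typeof value !== "object") {
    return JSON.stringify(value) ?? "null"; // undefined and functions become "null"
  }
  if (Array.isArray(value)) return `[${value.map(stableStringify).join(",")}]`;
  const entries = Object.keys(value)
    .sort()
    .filter((k) => value[k] !== undefined)
    .map((k) => `${JSON.stringify(k)}:${stableStringify(value[k])}`);
  return `{${entries.join(",")}}`;
}

// SHA-256 over the stable form; the hex digest is the cache key.
function hash(input) {
  return createHash("sha256").update(stableStringify(input)).digest("hex");
}

const keyA = hash({ opId: "abc123", vars: { first: 10, category: "shoes" } });
const keyB = hash({ vars: { category: "shoes", first: 10 }, opId: "abc123" });
console.log(keyA === keyB);
```

Without stable serialization, two clients that build the same variables object in a different order would miss each other's cache entries.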

Field/Resolver-Level Caching

- Cache expensive resolver outputs using a stable key (type, field, args) and a TTL that matches data volatility.

- Tag entries by entity id (e.g., product:123) so mutations can invalidate all dependent fields.

- Beware: DataLoader is primarily a per-request batcher/deduper; it does not provide a cross-request cache. Pair it with a real cache if you need reuse across requests.
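A field cache with TTLs and entity tags can be modeled in a few lines. The class below is an in-memory sketch for illustration only; `TaggedCache` is a hypothetical name, and a shared deployment would more likely keep the same key-to-tags index in Redis or a similar store.

```javascript
// Minimal in-memory sketch of a TTL cache with tag-based invalidation.
class TaggedCache {
  constructor(now = () => Date.now()) {
    this.now = now;           // injectable clock, handy for tests
    this.entries = new Map(); // key -> { value, expiresAt, tags }
    this.byTag = new Map();   // tag -> Set of keys
  }

  set(key, value, { ttlMs, tags = [] }) {
    this.delete(key); // drop any stale tag links from a previous write
    this.entries.set(key, { value, expiresAt: this.now() + ttlMs, tags });
    for (const tag of tags) {
      if (!this.byTag.has(tag)) this.byTag.set(tag, new Set());
      this.byTag.get(tag).add(key);
    }
  }

  get(key) {
    const entry = this.entries.get(key);
    if (!entry) return undefined;
    if (entry.expiresAt <= this.now()) {
      this.delete(key); // lazily expire on read
      return undefined;
    }
    return entry.value;
  }

  delete(key) {
    const entry = this.entries.get(key);
    if (!entry) return;
    for (const tag of entry.tags) this.byTag.get(tag)?.delete(key);
    this.entries.delete(key);
  }

  // Evict every entry tagged with `tag`; returns how many were removed.
  invalidateTag(tag) {
    const keys = [...(this.byTag.get(tag) ?? [])];
    keys.forEach((k) => this.delete(k));
    this.byTag.delete(tag);
    return keys.length;
  }
}
```

A resolver for a product's reviews might call `cache.set("Product.reviews:123", reviews, { ttlMs: 15000, tags: ["product:123"] })`, and the product mutation would then call `cache.invalidateTag("product:123")`.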

Compilation Caches

- Cache parsed/validated query ASTs and execution plans based on a hash of the operation text. This removes repeated parse/validate work.

- Persisted queries make this more effective and safe (see below).
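A compilation cache is essentially a bounded map from a hash of the operation text to the parsed document. The sketch below assumes a `parseAndValidate` function supplied by the caller as a placeholder for whatever the server uses; `DocumentCache` is a hypothetical name.

```javascript
const { createHash } = require("node:crypto");

// Bounded LRU keyed by a hash of the operation text.
class DocumentCache {
  constructor(parseAndValidate, maxEntries = 1000) {
    this.parseAndValidate = parseAndValidate; // placeholder for the server's parse + validate step
    this.maxEntries = maxEntries;
    this.docs = new Map(); // Map keeps insertion order, which doubles as LRU order
  }

  get(operationText) {
    const key = createHash("sha256").update(operationText).digest("hex");
    if (this.docs.has(key)) {
      const doc = this.docs.get(key);
      this.docs.delete(key); // re-insert to mark as most recently used
      this.docs.set(key, doc);
      return doc;
    }
    const doc = this.parseAndValidate(operationText);
    this.docs.set(key, doc);
    if (this.docs.size > this.maxEntries) {
      this.docs.delete(this.docs.keys().next().value); // evict the least recently used entry
    }
    return doc;
  }
}
```

The size bound matters when clients can send arbitrary query text, because every distinct string would otherwise add a permanent entry.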

Persisted Queries and GET: Unlocking the CDN

Most GraphQL queries are POST, which limits edge caching. Persisted queries solve this by pre-registering operations and then executing them by id.

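- Automatic Persisted Queries (APQ): the client sends a hash of the query; if the server has not seen that hash, it responds with a not-found error and the client retries with the full text, which the server stores for subsequent requests.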

- Safe reads via GET: combine persisted ids and GET to enable CDN caching of public data.

- Security: only allow pre-registered (allowlisted) operations and reject arbitrary query text at the edge. APQ alone is not an allowlist, because any client can register new text.
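The server side of the APQ exchange can be sketched as a small lookup function. The request shape (`extensions.persistedQuery.sha256Hash`) and the error strings follow the convention used by Apollo's APQ implementation; treat the exact wording as an assumption. `resolvePersistedQuery` is a hypothetical name and `store` is any Map-like store.

```javascript
const { createHash } = require("node:crypto");

const sha256 = (text) => createHash("sha256").update(text).digest("hex");

// Decide which query text to execute for an incoming request body.
function resolvePersistedQuery(store, body) {
  const hash = body.extensions?.persistedQuery?.sha256Hash;
  if (!hash) return { query: body.query }; // plain request, APQ not in use
  if (body.query) {
    // The client is registering text: verify the hash before storing it.
    if (sha256(body.query) !== hash) return { error: "provided sha does not match query" };
    store.set(hash, body.query);
    return { query: body.query };
  }
  // Hash only: execute if known, otherwise ask the client to retry with the text.
  const query = store.get(hash);
  return query ? { query } : { error: "PersistedQueryNotFound" };
}
```

Verifying the hash on registration prevents one client from storing different text under a hash that other clients will later send.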

Example request path for a public catalog query:

- Client issues GET /graphql?operationId=abc123&variables=…

- CDN caches based on URL + selected headers.

- Origin attaches Cache-Control and Surrogate-Control to define TTLs, and Surrogate-Key tags to support targeted purges.
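Because the CDN keys on the URL, the client should build that URL deterministically. The sketch below reuses the `operationId` and `variables` parameter names from the request path above; `persistedGetUrl` is a hypothetical helper and the base URL is a placeholder.

```javascript
// Build a deterministic GET URL so equivalent requests map to one CDN cache entry.
// Only top-level variable names are sorted here; nested objects would need a
// recursive stable serializer.
function persistedGetUrl(baseUrl, operationId, variables = {}) {
  const sorted = Object.fromEntries(
    Object.keys(variables).sort().map((k) => [k, variables[k]])
  );
  const url = new URL(baseUrl);
  url.searchParams.set("operationId", operationId);
  url.searchParams.set("variables", JSON.stringify(sorted));
  return url.toString();
}

// Placeholder origin; both calls produce the same URL.
const first = persistedGetUrl("https://example.com/graphql", "abc123", { first: 20, category: "shoes" });
const second = persistedGetUrl("https://example.com/graphql", "abc123", { category: "shoes", first: 20 });
console.log(first === second);
```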

HTTP Caching and CDNs: Make Headers Work For You

Even with GraphQL, HTTP caching rules still apply when responses are cacheable and safe to share.

Key headers:

- Cache-Control: max-age, s-maxage, stale-while-revalidate, stale-if-error.

- ETag / If-None-Match: enable revalidation with 304 Not Modified.

- Vary: include headers that affect the response (e.g., Authorization, Accept-Language, x-tenant).

- Surrogate-Control and Surrogate-Key (or CDN-specific tagging): enable edge-specific TTLs and tag-based purges.

Example headers for a public response:

    Cache-Control: public, max-age=60, s-maxage=300, stale-while-revalidate=30
    ETag: W/"resp:3f9c..."
    Surrogate-Control: max-age=300
    Surrogate-Key: product:123 category:shoes list:top-sellers
    Vary: Accept-Language

Guidelines:

- Don’t cache authenticated responses at a shared edge unless you segment by user or session.

- Keep CDN keys low in cardinality; move per-user data behind the origin or a private cache.

- Use ETag revalidation to reduce bandwidth for dynamic but stable payloads.
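ETag revalidation can be sketched as a pure function that an origin handler would call. The TTL values mirror the example headers above; `buildCachedResponse` is a hypothetical name, and deriving the ETag from a truncated SHA-256 of the payload is one possible choice rather than a requirement.

```javascript
const { createHash } = require("node:crypto");

// If-None-Match uses weak comparison, so ignore the W/ prefix when matching.
const weak = (v) => v.trim().replace(/^W\//, "");

// Returns the status, headers, and body an origin could send for a public response.
function buildCachedResponse(body, ifNoneMatch, surrogateKeys = []) {
  const payload = JSON.stringify(body);
  const digest = createHash("sha256").update(payload).digest("hex").slice(0, 16);
  const etag = `W/"${digest}"`;
  const headers = {
    "Cache-Control": "public, max-age=60, s-maxage=300, stale-while-revalidate=30",
    ETag: etag,
    Vary: "Accept-Language",
  };
  if (surrogateKeys.length) headers["Surrogate-Key"] = surrogateKeys.join(" ");

  // The header may carry several comma-separated validators, or "*".
  const candidates = (ifNoneMatch ?? "").split(",").map(weak);
  if (candidates.includes("*") || candidates.includes(weak(etag))) {
    return { status: 304, headers, body: null }; // unchanged: send headers only
  }
  return { status: 200, headers, body: payload };
}
```

A 304 still costs an origin execution in this sketch, since the payload must be built to compute the ETag; the saving is bandwidth, not compute.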

Invalidation: The Hardest Part, Tamed

You need a mix of strategies:

- Time-based: set conservative TTLs that reflect data volatility.

- Event-driven: on writes, publish invalidation messages (e.g., product:123 updated) to evict response and field caches that include those tags.

- Tag-based purges at the CDN: purge all content with a given Surrogate-Key.

- Versioned keys: bump a schema or business-logic version to invalidate en masse.

A simple pattern:

- Each resolver that reads entity X attaches the tag x:{id} to the response metadata.

- The mutation that updates X publishes x:{id}; listeners evict matching cache entries (server, gateway, CDN) in near-real time.
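The pattern above can be sketched end to end in a single process. Here Node's `EventEmitter` stands in for a real message bus, and all function names (`readProduct`, `storeResponse`, `publishProductUpdated`) are hypothetical; in production the listener would run in every server and would also trigger the CDN purge.

```javascript
const { EventEmitter } = require("node:events");

// In-process stand-in for a message bus such as Redis pub/sub or a queue.
const bus = new EventEmitter();
const responseCache = new Map(); // cache key -> response
const keysByTag = new Map();     // tag -> Set of cache keys

// Resolvers record which entities they read on a per-request context.
function readProduct(context, id) {
  context.tags.add(`product:${id}`);
  return { id, name: `Product ${id}` }; // placeholder for a real data-source read
}

// After execution, store the response under every tag collected during the request.
function storeResponse(key, response, context) {
  responseCache.set(key, response);
  for (const tag of context.tags) {
    if (!keysByTag.has(tag)) keysByTag.set(tag, new Set());
    keysByTag.get(tag).add(key);
  }
}

// Listener: evict every cached response that carries the published tag.
bus.on("invalidate", (tag) => {
  for (const key of keysByTag.get(tag) ?? []) responseCache.delete(key);
  keysByTag.delete(tag);
});

// A mutation publishes the tag of the entity it changed.
function publishProductUpdated(id) {
  bus.emit("invalidate", `product:${id}`);
}
```

Collecting tags on the request context keeps resolvers unaware of the cache: they only declare what they read, and the response layer decides what to do with that information.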

Real-Time and Incremental Delivery

- Subscriptions and live queries: treat results as non-cacheable responses; however, you can still cache source reads inside resolvers with very short TTLs to protect downstream systems.

- @defer and @stream: not caching features, but they complement caching by speeding first paint; you can still cache the fully assembled result server-side if appropriate.

Security and Correctness

- Never publicly cache responses that include PII or user-scoped data.

- If you must cache per-user, encrypt at rest and set short TTLs.

- Validate that authorization is applied before a cached read; include auth-relevant headers in the key.

- Log cache hits/misses with user and operation identifiers to support audits.

Observability: Measure What Matters

- Hit ratio at each layer (client, edge, gateway, resolver) and end-to-end latency percentiles (p50/p90/p99).

- Cardinality of cache keys (variables, headers) to avoid unbounded growth.

- Eviction reason codes (TTL, tag purge, capacity) to spot noisy datasets.

- Cost per request before/after caching and downstream request fan-out.
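The first of these metrics needs very little machinery. The sketch below tracks hit ratio per layer and computes nearest-rank latency percentiles; `CacheStats` and `percentile` are hypothetical names, and a real deployment would export the counters to a metrics system instead of holding them in memory.

```javascript
// Minimal per-layer hit/miss counters.
class CacheStats {
  constructor() {
    this.layers = new Map(); // layer name -> { hits, misses }
  }

  record(layer, hit) {
    const s = this.layers.get(layer) ?? { hits: 0, misses: 0 };
    if (hit) s.hits += 1;
    else s.misses += 1;
    this.layers.set(layer, s);
  }

  hitRatio(layer) {
    const s = this.layers.get(layer);
    if (!s || s.hits + s.misses === 0) return 0;
    return s.hits / (s.hits + s.misses);
  }
}

// Nearest-rank percentile over recorded latencies (p between 0 and 100).
function percentile(samples, p) {
  if (samples.length === 0) return undefined;
  const sorted = [...samples].sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p / 100) * sorted.length));
  return sorted[rank - 1];
}

const stats = new CacheStats();
[true, true, true, false].forEach((hit) => stats.record("edge", hit));
console.log(stats.hitRatio("edge"));
console.log(percentile([12, 15, 11, 90, 14], 99));
```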

Example Architecture: Product Catalog

Goal: fast browsing for anonymous users, consistent data for signed-in users.

- Client

  - cache-and-network for the product list; relay-style pagination keyed by category and sort.

  - optimistic updates on cart mutations; precise cache writes to cart lines.

- CDN

  - Persisted GET queries for public catalog reads with 5-minute s-maxage and ETag revalidation; Vary on Accept-Language.

- Gateway/Server

  - Response cache (60–120 s) for anonymous requests; private cache disabled for authenticated users.

  - Field cache for expensive recommendation resolver (TTL 15 s; tag by user-segment and product ids).

  - Parse/plan cache for persisted operations.

- Data sources

  - Database results cached by SKU for 30 s; product image metadata for 10 minutes.

- Invalidation

  - On product update, publish product:{id}; purge CDN and server caches with that tag; evict related list keys.

Practical Snippets

Apollo Client mutation update:

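    import { gql } from "@apollo/client";

    // Pass this function as the `update` option of the mutation that returns updateProduct.
    function updateProductInCache(cache, { data }) {
      const updated = data?.updateProduct;
      if (!updated) return; // nothing to write if the mutation returned no product
      cache.writeFragment({
        // Product is keyed by sku in the type policies, so identify() needs sku.
        id: cache.identify({ __typename: "Product", sku: updated.sku }),
        fragment: gql`fragment P on Product { price stock }`,
        data: { price: updated.price, stock: updated.stock },
      });
    }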

Resolver with Redis-backed memoization:

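    // Assumes the context provides a connected node-redis v4 client (redis)
    // and a data-access object (db) with a getProduct method.
    async function productBySku(_, { sku }, { redis, db }) {
      const key = `product:${sku}`;
      const cached = await redis.get(key);
      if (cached !== null) return JSON.parse(cached); // cache hit
      const row = await db.getProduct(sku);
      // Do not cache misses, so a newly created product is visible immediately.
      if (row != null) await redis.setEx(key, 30, JSON.stringify(row)); // 30s TTL
      return row ?? null;
    }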

Checklist: Getting Caching Right

- Define stable entity ids and type policies on the client.

- Choose sensible fetch policies per screen; prefer cache-and-network for lists.

- Implement response caching at the server with clear TTLs and tags.

- Use persisted queries and GET to unlock CDN caching for public reads.

- Set Cache-Control, ETag, and Vary correctly; use Surrogate keys for purges.

- Combine TTL and event-driven invalidation; version keys when rules change.

- Track hit ratios and cardinality; fix hot keys or exploding key spaces.

- Document what is public vs private and test for auth leaks with synthetic traffic.

Conclusion

GraphQL caching pays off when each layer does its job: the client keeps UI responsive with a normalized store, the server shields backends with targeted response and field caches, and the edge accelerates public reads with well-tuned HTTP semantics. Combine persisted queries, precise keys, conservative TTLs, and event-driven invalidation, and you’ll get both speed and correctness—without fighting your cache on every release.

Related Posts

Apollo vs Relay: Choosing the Right GraphQL Client for React

Apollo vs Relay: strengths, trade‑offs, and when to choose each for React GraphQL apps, from caching and pagination to SSR, typing, and developer UX.

GraphQL Error Handling Best Practices: Clear, Secure, and Resilient APIs

A practical guide to GraphQL error handling: schema design, HTTP codes, partial data, masking, client patterns, observability, and examples.

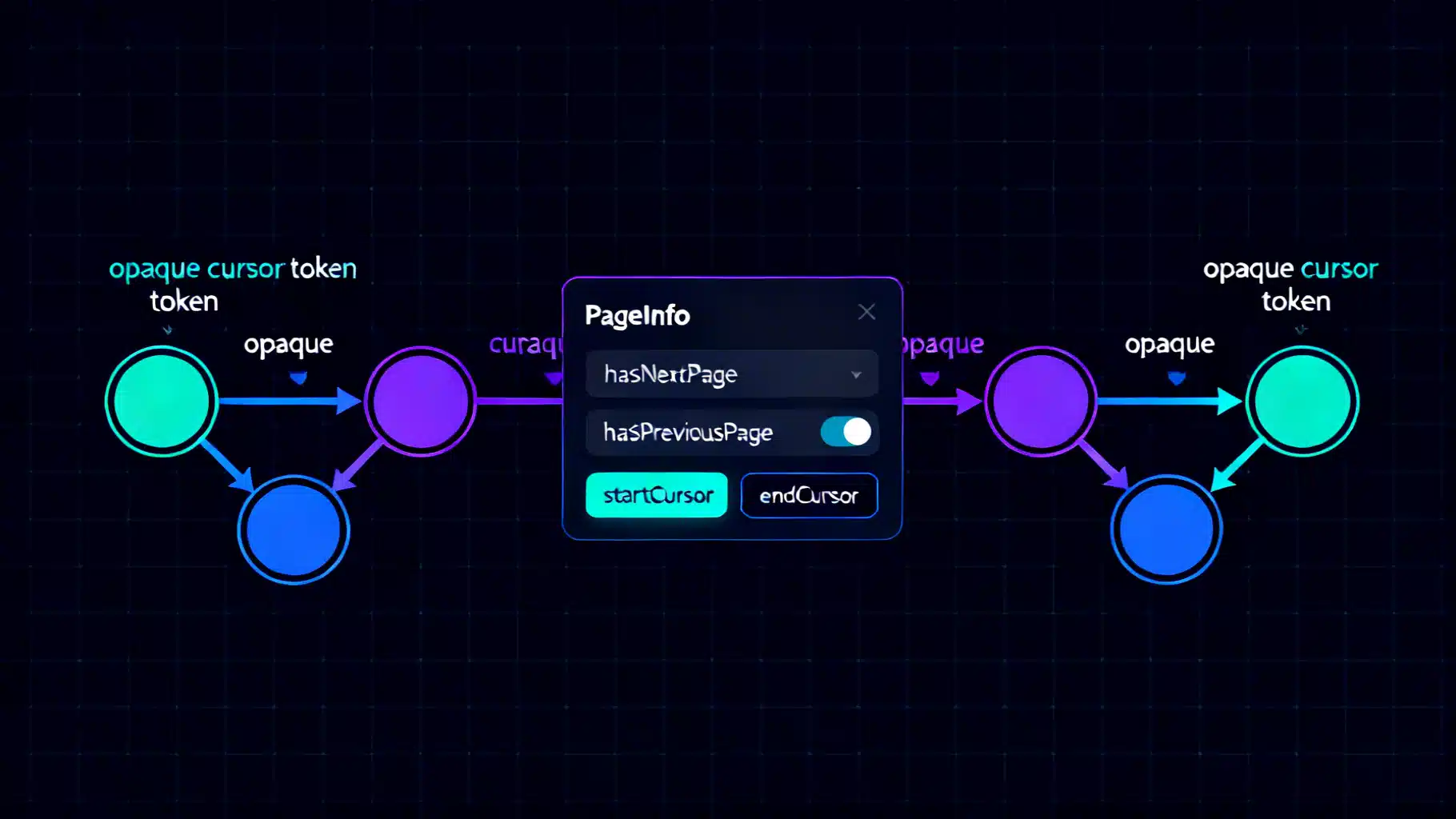

Mastering GraphQL Relay Pagination with Connections

Master Relay-style pagination in GraphQL with connections, cursors, and PageInfo. Learn schema design, keyset pagination, resolvers, and pitfalls.