Leak Confirms Anthropic Testing “Claude Mythos,” a New Tier Above Opus—Here’s What We Know

A Fortune-confirmed leak reveals Anthropic testing “Claude Mythos,” a new tier above Opus with major cyber gains—and risks—now under tight early access.

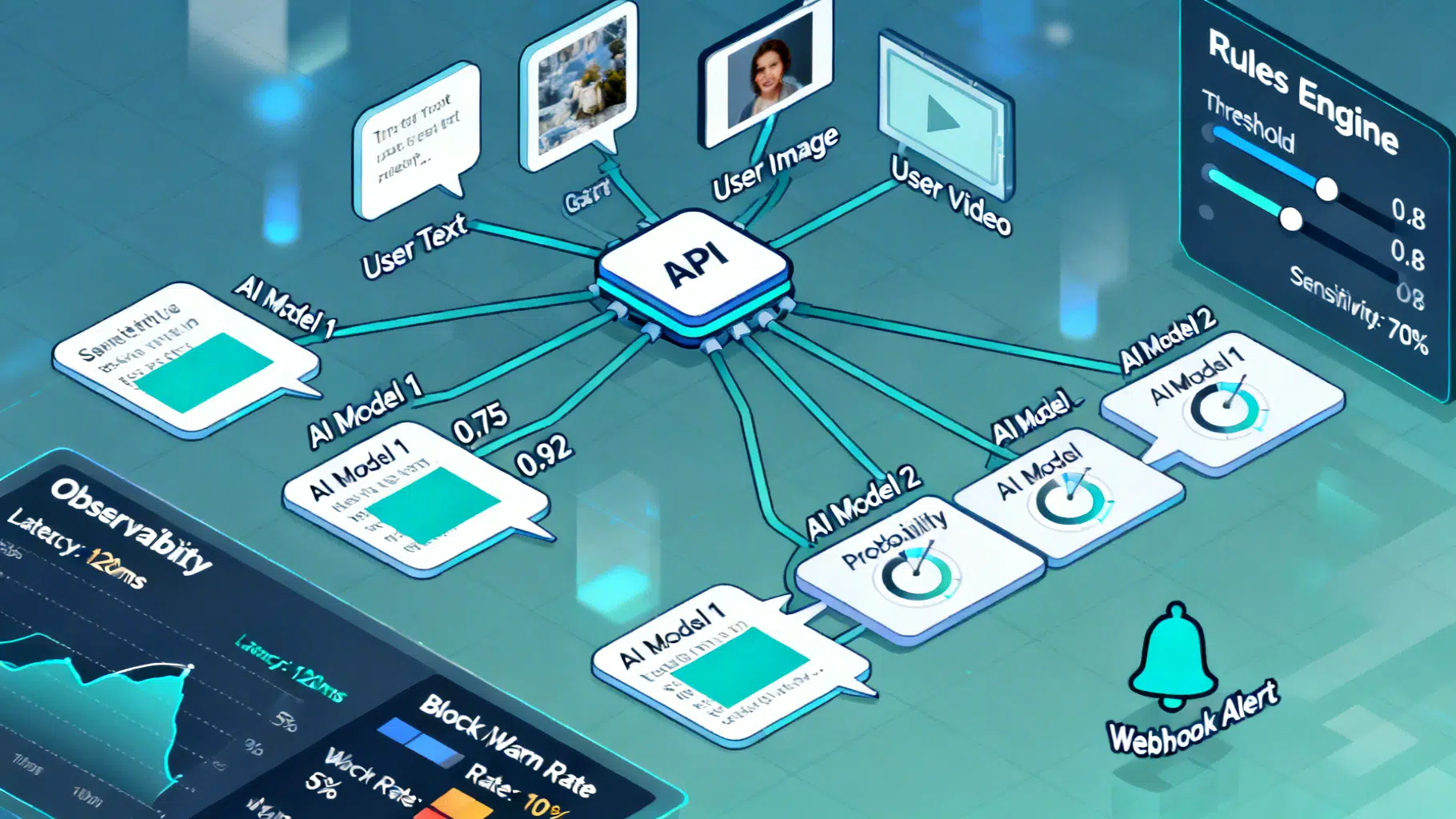

Image used for representation purposes only.

Breaking: What “Claude Mythos” Is — and How It Spilled Into Public View

Anthropic has acknowledged it is testing a new, significantly more powerful AI model after a cache of unpublished materials was discovered on a publicly accessible content system this week. Drafts in that cache repeatedly refer to the model as “Claude Mythos” and describe a new, higher‑end tier codenamed “Capybara” that sits above the company’s current flagship Opus line. Fortune reporters reviewed the drafts, Anthropic confirmed it is testing a next‑gen model with early‑access customers, and the company attributed the exposure to human error in configuring an external CMS tool. (fortune.com)

The documents, which remained accessible until Anthropic locked them down late on Thursday, suggest the Capybara/Mythos model represents a “step change” in reasoning, coding, and cybersecurity performance, but they also flag unprecedented security risks. Fortune reports the cache contained close to 3,000 unpublished assets, including a draft blog post and materials for an invite‑only CEO summit in the U.K. where unreleased Claude capabilities would be shown. (fortune.com)

What The Leak Says About “Mythos” (a.k.a. Capybara)

According to the leaked draft, “Capybara” is a new marketing tier above Opus, Anthropic’s most capable public model family today, and “Claude Mythos” is the first model in that tier. The draft claims large gains on internal tests of software development, advanced reasoning, and cyber operations, and characterizes Mythos as “by far” the company’s most capable system to date. Anthropic told Fortune it is “being deliberate” about release timing because of the model’s strength and is piloting access with a small set of customers first. (fortune.com)

The same materials underscore risk management: Anthropic’s plan, per the draft, is to start with cybersecurity defenders so they can harden codebases ahead of a broader wave of AI‑driven exploits. That rollout posture is consistent with the company’s recent emphasis on disclosure norms for vulnerabilities discovered by Claude and on documenting model capabilities and safeguards via public “system cards.” (fortune.com)

How The Exposure Happened — and What It Reveals About AI Ops Hygiene

Anthropic told Fortune that an external CMS was misconfigured, leaving early drafts publicly searchable. It is a textbook example of the kind of cloud‑era error (think open object storage) that red‑teamers warn about. Security researchers who reviewed the cache described thousands of discoverable assets tied to Anthropic’s publishing pipeline before the company removed public access. While there is no confirmation yet of broader unauthorized access, the episode highlights the operational risks around AI release management, especially when internal safety narratives and event plans are drafted long before launch day. (fortune.com)

Why “Mythos” Matters Now

- Capability inflection: Anthropic is signaling a model that materially advances core competencies (reasoning, coding, and cybersecurity) over Opus 4.6. That dovetails with the firm’s recent research cadence around cyber capabilities and its launch of a red‑team blog that has showcased Claude‑discovered Firefox vulnerabilities and multi‑stage attack evaluations on realistic ranges. (fortune.com)

- Safety posture: Only last month, Anthropic warned that its latest models could be misused for “heinous crimes,” including aspects of chemical‑weapons development, and described elevated susceptibility in certain autonomous “computer use” settings. The company has tied such frontier jumps to stricter deployment guardrails under its Responsible Scaling Policy. (axios.com)

- Real‑world adversary pressure: In parallel, Anthropic has alleged “industrial‑scale distillation” campaigns by rival labs using tens of thousands of fraudulent accounts to siphon Claude capabilities. That backdrop raises the stakes for how, and how widely, a more capable tier like Capybara/Mythos is exposed. (pcgamer.com)

The Security Angle: A Double‑Edged Capability

Even before the leak, Anthropic had been publicly documenting how current Claude models can both help defenders and enable sophisticated offense. Company posts describe AI‑assisted espionage campaigns where models performed the majority of operational tasks, and they outline a new vulnerability‑disclosure playbook tailored to AI‑discovered bugs. If Mythos indeed outpaces Opus on cyber tasks, controlled access and staged release to blue teams first, as the draft proposes, would be a logical mitigation. (anthropic.com)

At the same time, Axios reporting in February emphasized that the company’s latest internal evaluations found higher misuse susceptibility in certain configurations and that Anthropic’s leadership has been warning policymakers that capability jumps could outstrip today’s guardrails. The leaked framing of Mythos as “far ahead” in cyber will likely intensify scrutiny from regulators and customers over how Anthropic interprets its own safety thresholds. (axios.com)

What Anthropic Has (and Hasn’t) Confirmed

- Confirmed: Anthropic is testing a new, more capable model with select early‑access customers and considers it a “step change” in performance. The company removed public access to the drafts after Fortune contacted it and attributes the exposure to human error on an external CMS tool. (fortune.com)

- Not (yet) officially announced: A public brand name, pricing, a general‑availability timeline, detailed benchmarks, and a public system card for “Mythos/Capybara.” Those specifics were only present in draft materials reviewed by Fortune and have not been published on Anthropic’s official model documentation pages as of March 28, 2026. (fortune.com)

The Competitive Context

Frontier‑model rollouts have increasingly become staggered affairs: early testing under stricter safeguards, then wider availability after safety, reliability, and abuse‑resistance checks. Anthropic followed that script with earlier Opus releases, and leaked details suggest Mythos will push the same pattern further, with higher pricing and constrained access tied to cyber‑risk assessments. That posture tracks with the firm’s RSP 3.0 update and its recent expansion of model system cards for Opus 4.6 and Sonnet 4.6. (www-cdn.anthropic.com)

The leak also lands amid mounting external pressure. In late February, the company publicly accused Chinese labs of orchestrating 24,000+ fake accounts to extract Claude capabilities. That claim, if accurate, underscores how quickly powerful behaviors can diffuse once accessible via public endpoints. Mythos’ extra‑cautious rollout, starting with defenders, appears designed to slow that diffusion while Anthropic studies real‑world impacts. (pcgamer.com)

What To Watch Next

- Official clarification: Expect a formal Anthropic post and system card, which would put hard numbers around Mythos/Capybara’s capabilities and the safety architecture gating broader access. Until then, the Fortune‑reviewed drafts remain the only window into the branding and tiering plan. (fortune.com)

- Early‑access focus: Which customers get Mythos first? The draft suggests cybersecurity teams and software maintainers, which aligns with Anthropic’s new coordinated‑disclosure framework for AI‑discovered bugs. Look for partnerships or pilots in critical‑infrastructure and browser ecosystems, an area where Opus 4.6 has already found dozens of issues. (anthropic.com)

- Policy and procurement: With government and regulated‑industry buyers now weighing AI capability risk as procurement risk, watch for agencies and standards bodies to reference Mythos when debating thresholds for deployment and testing, particularly under the “AI‑as‑researcher” and “computer use” modes flagged as higher‑risk in recent reporting. (axios.com)

Bottom Line

As of Saturday, March 28, 2026, “Claude Mythos” is not an officially launched product, but its existence and positioning have been effectively confirmed by Anthropic following a public leak of draft launch materials. Those materials, reviewed by Fortune, suggest the company is preparing to introduce a model tier above Opus, beginning with a cautious, defender‑first pilot aimed at getting ahead of the very risks the model may exacerbate. For customers and regulators alike, Mythos is now the bellwether for how Anthropic plans to balance frontier capability with operational safety in the months ahead. (fortune.com)
