Implementing an AI Content Moderation API: Architecture, Policy, and Code

Design and implement a reliable AI content moderation API: taxonomy, architecture, code, policy config, thresholds, privacy, and evaluation best practices.

Overview

AI content moderation APIs classify, score, and act on user-generated content (UGC) to reduce harm and comply with policy and law. A robust implementation blends clear policy, resilient architecture, auditable decisions, and human oversight. This guide shows how to design your taxonomy, wire an API in code, tune thresholds, and run the system reliably at scale.

Define a moderation taxonomy first

Before writing code, write a policy you can execute:

- Categories: hate, harassment/bullying, sexual content (with “sexual/minors” as a hard-stop), violence/threats, self-harm, extremism/terrorism, illegal activities, fraud/scams, drugs, weapons, PII/privacy, malware/abuse, copyright/IP.

- Severity: S0 (none), S1 (contextual/ambiguous), S2 (borderline), S3 (explicit), S4 (egregious). Map severities to actions.

- Actions: allow, allow-with-warning, soft-block (ask to edit), hard-block, escalate to human review.

- Regional overlays: some categories (e.g., hate symbols, political extremism) need locale-specific rules. Version these overlays (policy_version) and record them in every decision.

Document examples and counter-examples for each category. Provide annotator guidelines with edge cases (slurs reclaimed in-group, satire, medical context, historical citations).
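The severity-to-action mapping above can be kept in a small lookup table. The following is a minimal sketch: the specific severity/action pairs, the `HARD_STOP_CATEGORIES` set, and the `resolve_action` helper are illustrative assumptions, not a mapping prescribed by this guide.

```python
from enum import IntEnum


class Severity(IntEnum):
    S0 = 0  # none
    S1 = 1  # contextual/ambiguous
    S2 = 2  # borderline
    S3 = 3  # explicit
    S4 = 4  # egregious


# Illustrative mapping only (assumption); choose pairs that match your own policy.
SEVERITY_TO_ACTION = {
    Severity.S0: "allow",
    Severity.S1: "allow-with-warning",
    Severity.S2: "soft-block",
    Severity.S3: "hard-block",
    Severity.S4: "hard-block",
}

# Categories treated as a hard stop at any non-zero severity.
HARD_STOP_CATEGORIES = {"sexual_minors"}


def resolve_action(category: str, severity: Severity) -> str:
    """Map a (category, severity) finding to one of the policy actions."""
    if category in HARD_STOP_CATEGORIES and severity > Severity.S0:
        return "hard-block"
    return SEVERITY_TO_ACTION[severity]
```

Keeping the table separate from the lookup function makes it straightforward to load the pairs from versioned configuration later.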

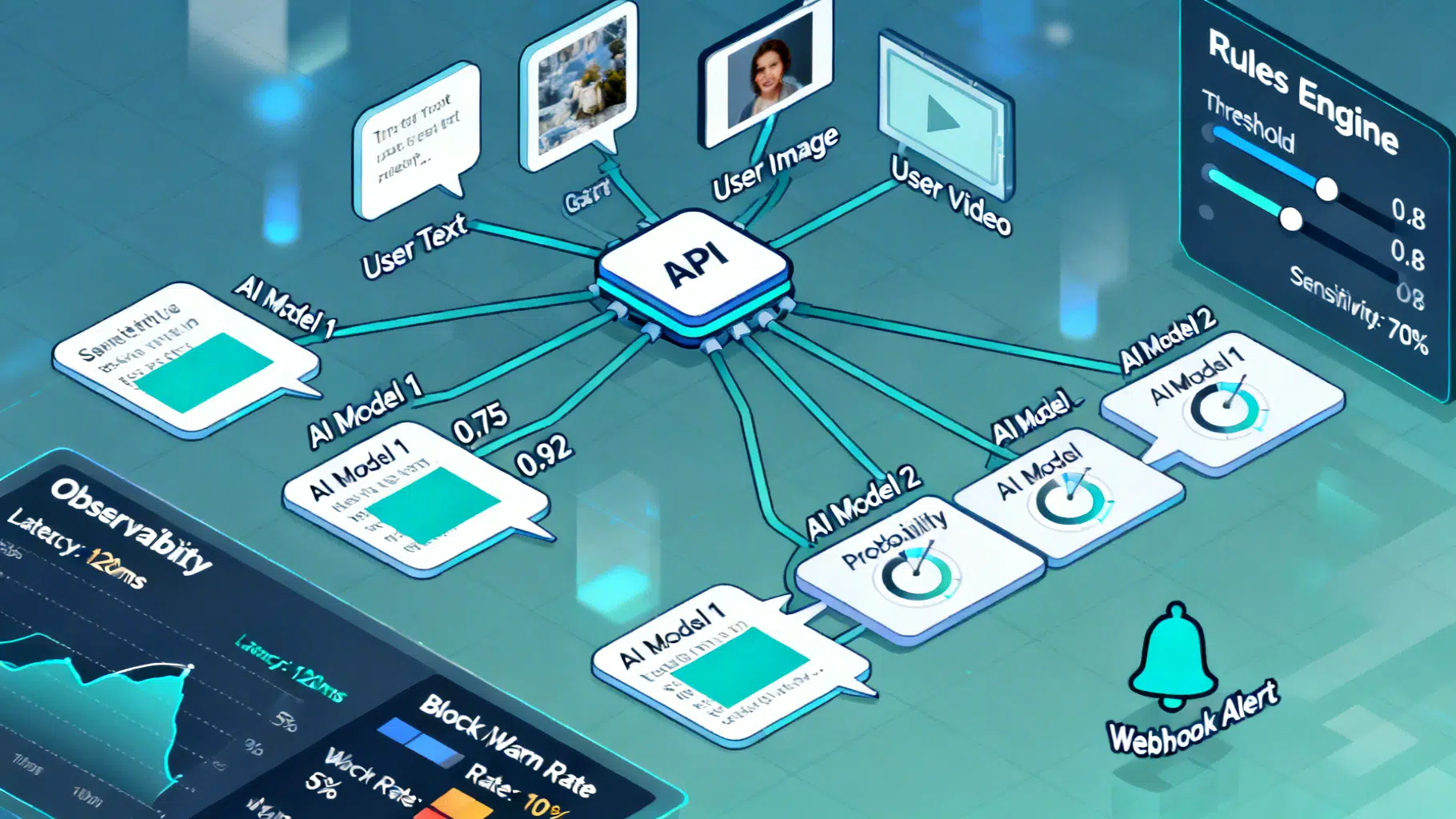

System architecture at a glance

A reliable moderation pipeline usually has:

- Ingress layer (API gateway)
  - Validates schema, enforces auth, strips sensitive fields that are not required (data minimization), assigns a trace_id and, if the client did not supply one, an idempotency_key.

- Scoring layer (models/adapters)
  - One or more providers score content. Use an ensemble or fallback tree to reduce outages and blind spots.

- Decision engine (policy)
  - Deterministic rules over model scores, allow/deny lists, customer/tenant overrides, and regulatory overlays.

- Action layer
  - Returns allow/soft-block/hard-block, optional redactions, and user-facing messages. For async items (long video), post a webhook event.

- Observability & storage
  - Structured decision logs, model telemetry, sampling, and privacy-aware retention. Support audits and appeals.

Typical flows:

- Synchronous gate for short text/chat (target p95 < 120 ms).

- Asynchronous workflow for long docs, images, audio/video; return 202 Accepted then POST to a webhook when done.

Request/response contract (JSON)

Define a stable, explicit schema. Example:

    // POST /v1/moderate
    {
      "content": "string | base64 for media",
      "content_type": "text|image|audio|video",
      "language": "en",
      "context": {"thread_id": "abc", "location": "US"},
      "user_id": "u_123",
      "metadata": {"tenant": "acme"},
      "idempotency_key": "dedupe-uuid"
    }

    // 200 OK
    {
      "id": "mdr_789",
      "trace_id": "trc_456",
      "created_at": "2026-03-27T15:30:12Z",
      "policy_version": "2026-03-us-v3",
      "overall_action": "allow|warn|block|review",
      "scores": {
        "hate": 0.04,
        "harassment": 0.21,
        "sexual_minors": 0.001,
        "violence": 0.08,
        "self_harm": 0.02,
        "pii": 0.10
      },
      "reasons": [
        {"category": "harassment", "severity": "S2", "confidence": 0.78, "evidence": ["span: 34-52"]}
      ],
      "redactions": [{"type": "pii.email", "span": [120, 140], "replacement": "[redacted]"}],
      "messages": ["Please remove personal email addresses before posting."],
      "appeal_token": "apl_001"
    }

Example: a minimal FastAPI moderation proxy

The service validates input, fans out to provider adapters, runs the decision policy, and returns an action. This is a simplified reference you can expand.

    # app.py
    import re
    import time
    import uuid
    from typing import Dict, List, Optional

    from fastapi import FastAPI, Header, HTTPException
    from pydantic import BaseModel

    app = FastAPI()

    ALLOWED_TYPES = {"text", "image", "audio", "video"}
    # Deliberately simple email pattern for the redaction example; not RFC-complete.
    EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


    class ModerateRequest(BaseModel):
        content: str
        content_type: str = "text"
        language: Optional[str] = "en"
        context: Optional[Dict] = None
        user_id: Optional[str] = None
        metadata: Optional[Dict] = None
        idempotency_key: Optional[str] = None


    class ProviderAdapter:
        def score(self, req: ModerateRequest) -> Dict[str, float]:
            # Replace with real provider call(s); add retry + timeout + circuit breaker.
            # Returns category -> probability in [0, 1].
            return {"hate": 0.03, "harassment": 0.12, "sexual_minors": 0.0,
                    "violence": 0.07, "self_harm": 0.01, "pii": 0.2}


    THRESHOLDS = {
        "hard_block": {"sexual_minors": 0.01},
        "block": {"violence": 0.9, "self_harm": 0.8},
        "warn": {"harassment": 0.15, "pii": 0.15},
    }
    # Tiers in precedence order: (threshold tier, resulting action, severity label).
    TIERS = [("hard_block", "block", "S4"), ("block", "block", "S3"), ("warn", "warn", "S2")]

    adapter = ProviderAdapter()


    # Plain `def`: FastAPI runs it in a threadpool, so a blocking adapter call
    # does not stall the event loop.
    @app.post("/v1/moderate")
    def moderate(payload: ModerateRequest, x_request_id: Optional[str] = Header(None)):
        if payload.content_type not in ALLOWED_TYPES:
            raise HTTPException(status_code=400, detail="unsupported content_type")

        trace_id = x_request_id or f"trc_{uuid.uuid4()}"
        scores = adapter.score(payload)

        # Decision policy: hard-block takes precedence, then block, then warn.
        action = "allow"
        reasons: List[Dict] = []
        for tier, tier_action, severity in TIERS:
            for cat, th in THRESHOLDS[tier].items():
                if scores.get(cat, 0.0) >= th:
                    action = tier_action
                    reasons.append({"category": cat, "severity": severity, "confidence": scores[cat]})
            if reasons:
                break  # the first tier that fires decides the action

        # Redaction example (email): report the real character span of each match.
        redactions = []
        if payload.content_type == "text" and scores.get("pii", 0.0) >= THRESHOLDS["warn"]["pii"]:
            for m in EMAIL_RE.finditer(payload.content):
                redactions.append({"type": "pii.email", "span": [m.start(), m.end()],
                                   "replacement": "[redacted]"})

        return {
            "id": f"mdr_{uuid.uuid4()}",
            "trace_id": trace_id,
            "created_at": time.strftime("%Y-%m-%dT%H:%M:%SZ", time.gmtime()),
            "policy_version": "2026-03-us-v3",
            "overall_action": action,
            "scores": scores,
            "reasons": reasons,
            "redactions": redactions,
            "messages": ["Please remove personal data before posting."] if redactions else [],
        }

Invoke with curl:

    curl -s -X POST https://your.api/v1/moderate \
      -H "Content-Type: application/json" \
      -H "Authorization: Bearer $TOKEN" \
      -d '{"content":"Here is my email: a@b.com","content_type":"text"}'

Policy as configuration

Keep policy out of code so you can ship changes safely.

    # policy.yaml
    version: 2026-03-us-v3
    hard_block:
      sexual_minors: 0.01
    block:
      self_harm: 0.80
      violence: 0.90
    warn:
      harassment: 0.15
      pii: 0.15
    allow_list:
      - "breast cancer"  # medical context
    deny_list:
      - "explicit slur X"  # always block regardless of score
    regional_overrides:
      EU:
        warn:
          pii: 0.10
    messages:
      pii: "Please remove personal data before posting."

Load this file at startup, gate hot reloads behind feature flags, and stamp every decision with the policy_version that produced it.
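Regional overrides can be resolved by merging the overlay onto the base policy at load time. The sketch below is one way to do that; `deep_merge` and `resolve_policy` are hypothetical helper names, and the `POLICY` dict stands in for the parsed file (in practice it might come from a YAML parser such as PyYAML's `yaml.safe_load`, which is an assumption about your stack).

```python
import copy
from typing import Any, Dict, Optional


def deep_merge(base: Dict[str, Any], override: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of base with override applied recursively."""
    merged = copy.deepcopy(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


def resolve_policy(policy: Dict[str, Any], region: Optional[str]) -> Dict[str, Any]:
    """Apply the regional overlay (if any) and drop the overrides table."""
    overrides = policy.get("regional_overrides", {}).get(region, {}) if region else {}
    effective = deep_merge(policy, overrides)
    effective.pop("regional_overrides", None)
    return effective


# Stand-in for the parsed policy file (subset of the fields shown above).
POLICY = {
    "version": "2026-03-us-v3",
    "warn": {"harassment": 0.15, "pii": 0.15},
    "regional_overrides": {"EU": {"warn": {"pii": 0.10}}},
}

eu = resolve_policy(POLICY, "EU")
print(eu["warn"])  # {'harassment': 0.15, 'pii': 0.1}
```

The merge works on copies, so the base policy stays unchanged and can be resolved again for another region.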

Sync vs. async and streaming

- Sync: short text and captions. Budget ~50–120 ms end-to-end. Use warm pools, keep-alive, and per-tenant caching for repeated content.

- Async: images/video or long text. Return 202 and enqueue. Deliver final decision via webhook:

      // POST to client webhook
      {
        "event": "moderation.completed",
        "id": "mdr_789",
        "trace_id": "trc_456",
        "overall_action": "review",
        "scores": {"violence": 0.74},
        "assets": [{"type": "image", "thumbnail_url": "..."}]
      }

- Streaming: for chat UIs, stream token-level decisions (warn early, redact before render). Gate model output as well as user input to prevent model-generated violations.
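For the streaming case, one possible approach is to hold back a short tail of the text so that a violation spanning two chunks is caught before any of it is rendered. This is a rough sketch under stated assumptions: `gate_stream`, the `holdback` size, and the `is_violation` callback are hypothetical, and a real checker would call the scoring layer rather than a local function.

```python
from typing import Callable, Iterable, Iterator

WITHHELD_NOTICE = "[message withheld by moderation]"


def gate_stream(
    chunks: Iterable[str],
    is_violation: Callable[[str], bool],
    holdback: int = 32,
) -> Iterator[str]:
    """Release text only after it has been checked, keeping the last
    `holdback` characters unrendered until more context arrives."""
    seen = ""    # everything received so far
    emitted = 0  # number of characters already released
    for chunk in chunks:
        seen += chunk
        # Re-checking the full text is quadratic; acceptable for a sketch.
        if is_violation(seen):
            yield WITHHELD_NOTICE
            return
        safe_upto = max(emitted, len(seen) - holdback)
        if safe_upto > emitted:
            yield seen[emitted:safe_upto]
            emitted = safe_upto
    # Stream ended without a violation: release the held-back tail.
    if emitted < len(seen):
        yield seen[emitted:]
```

The holdback should be at least as long as the longest pattern you need to catch across chunk boundaries; a larger value delays rendering slightly in exchange for fewer partial leaks.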

Reliability, scale, and cost

- Circuit breakers and fallbacks: if Provider A fails, fail over to Provider B; if no provider is available, degrade to stricter deterministic rules or temporarily block high-risk categories.

- Retries: use exponential backoff with jitter; bound retries to keep tail latency predictable.

- Rate limiting: per API key and per tenant; burst + sustained.

- Idempotency: dedupe repeated POSTs using idempotency_key.

- Caching: hash content to cache repeated decisions (respect TTL and policy_version).

- Batch endpoints: /v1/moderate:batch for bulk backfills.
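The retry, fallback, and caching items above can be sketched together. The names `score_with_fallback` and `cache_key`, and the default delays, are assumptions for illustration. The sketch covers bounded retries with jitter and ordered failover only; it does not implement a circuit breaker.

```python
import hashlib
import random
import time
from typing import Callable, Dict, Optional, Sequence

Scores = Dict[str, float]
Provider = Callable[[str], Scores]


def cache_key(content: str, policy_version: str) -> str:
    """Key cached decisions by policy version and content hash, so a
    policy change never serves a stale decision."""
    digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
    return f"{policy_version}:{digest}"


def score_with_fallback(
    content: str,
    providers: Sequence[Provider],
    max_attempts: int = 3,
    base_delay: float = 0.05,
    max_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> Optional[Scores]:
    """Try each provider in order with bounded, jittered retries.

    Returns None if every provider fails, so the caller can apply its
    degraded-mode policy (for example, stricter rules).
    """
    for provider in providers:
        for attempt in range(max_attempts):
            try:
                return provider(content)
            except Exception:  # in practice, catch only timeout/connection errors
                if attempt == max_attempts - 1:
                    break  # give up on this provider and move to the next
                # Full jitter: random sleep up to the capped exponential delay.
                delay = min(max_delay, base_delay * (2 ** attempt))
                sleep(random.uniform(0, delay))
    return None
```

Capping both the attempt count and the delay keeps the worst-case added latency predictable, which matters for the synchronous path.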

Evaluation and threshold calibration

- Label a stratified sample of your traffic with expert annotators. Include hard cases (sarcasm, coded language) and benign confounders (medical/educational context).

- Compute per-category ROC and precision-recall curves, then select thresholds that hit business targets (e.g., 95% recall on sexual/minors; 98% precision on PII redactions to avoid over-blocking).

- Fairness checks: test false positives across dialects and identity terms; mitigate with allow-lists and context rules.

- Continuous monitoring: track drift in language and evasion tactics; re-calibrate quarterly or on policy change.
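Threshold selection against a recall target can be done with a simple sweep over labeled scores. The sketch below assumes binary labels (1 = violating, 0 = benign) for one category; the function names are hypothetical, and a production setup would likely use an evaluation library and confidence intervals.

```python
from typing import List, Optional, Tuple


def precision_recall_at(
    scores: List[float], labels: List[int], threshold: float
) -> Tuple[float, float]:
    """Precision and recall when flagging every item with score >= threshold."""
    tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 1)
    precision = tp / (tp + fp) if (tp + fp) else 1.0
    recall = tp / (tp + fn) if (tp + fn) else 1.0
    return precision, recall


def threshold_for_recall(
    scores: List[float], labels: List[int], target_recall: float
) -> Optional[float]:
    """Highest observed score usable as a threshold while meeting the
    recall target; None if the sample has no positives."""
    if not any(labels):
        return None
    # Recall only grows as the threshold drops, so the first hit is the highest.
    for t in sorted(set(scores), reverse=True):
        _, recall = precision_recall_at(scores, labels, t)
        if recall >= target_recall:
            return t
    return None


sample_scores = [0.95, 0.80, 0.60, 0.40, 0.20, 0.10]
sample_labels = [1, 1, 0, 1, 0, 0]
t = threshold_for_recall(sample_scores, sample_labels, target_recall=1.0)
print(t, precision_recall_at(sample_scores, sample_labels, t))  # 0.4 (0.75, 1.0)
```

In this toy sample, catching every positive requires dropping the threshold to 0.4, which costs one false positive; that trade-off is what the per-category targets are meant to settle.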

Privacy, security, and compliance

- Data minimization: send only necessary fields to vendors; hash user_ids; strip location unless needed for policy overlays.

- Encryption: TLS in transit; encrypt at rest; segregate per-tenant data; secrets in a vault; rotate keys.

- Retention: set short TTL for raw content; longer TTL for derived, privacy-safe decision logs.

- PII handling: built-in detectors for emails, phones, addresses; redact in-flight where possible.

- Regionality: route data to in-region processors to honor data residency.

- Transparency & appeals: expose reasons, evidence spans, and an appeal_token; provide SLAs for human review.

- Note: coordinate with counsel for jurisdiction-specific laws (e.g., minors’ safety, consumer transparency). This article is not legal advice.
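Two of the controls above, hashing user_ids and redacting emails in-flight, can be sketched with the standard library. This assumes a keyed hash (HMAC-SHA256) for pseudonymization and a deliberately simple email pattern; both `pseudonymize_user_id` and `redact_emails` are hypothetical helpers, and the secret would come from your vault rather than from code.

```python
import hashlib
import hmac
import re
from typing import Dict, List, Tuple

# Simple email pattern for illustration; not RFC-complete.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def pseudonymize_user_id(user_id: str, secret: bytes) -> str:
    """Keyed hash so vendors and logs never see the raw user_id."""
    return hmac.new(secret, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def redact_emails(text: str, replacement: str = "[redacted]") -> Tuple[str, List[Dict]]:
    """Replace email-like substrings; spans are offsets in the original text."""
    redactions = [
        {"type": "pii.email", "span": [m.start(), m.end()], "replacement": replacement}
        for m in EMAIL_RE.finditer(text)
    ]
    return EMAIL_RE.sub(replacement, text), redactions
```

A keyed hash is used instead of a bare SHA-256 because low-entropy identifiers can otherwise be recovered by hashing guesses.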

Logging and observability

- Structured logs: trace_id, policy_version, model_versions, scores, action, latency, tenant, anonymized context.

- Metrics: p50/p95 latency, block/warn rates by category and tenant, provider error rates, cache hit ratio.

- Tracing: instrument spans for gateway, provider calls, and decision engine (OpenTelemetry).

- Audit trails: immutable, access-controlled logs for escalations and regulator inquiries.
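A structured decision log can be a single JSON line per decision, built from the response fields and excluding raw content. The sketch below assumes the response shape shown earlier in this guide; `build_decision_log` and the logger name are hypothetical.

```python
import json
import logging
import time
from typing import Any, Dict

logger = logging.getLogger("moderation.decisions")


def build_decision_log(
    decision: Dict[str, Any],
    tenant: str,
    model_versions: Dict[str, str],
    started_at: float,
) -> str:
    """Serialize one decision as a JSON line; raw content is never copied."""
    record = {
        "trace_id": decision["trace_id"],
        "policy_version": decision["policy_version"],
        "model_versions": model_versions,
        "scores": decision["scores"],
        "action": decision["overall_action"],
        # started_at must come from time.perf_counter() at request start.
        "latency_ms": round((time.perf_counter() - started_at) * 1000, 2),
        "tenant": tenant,
    }
    return json.dumps(record, sort_keys=True)
```

A typical call site would be `logger.info(build_decision_log(resp, tenant, versions, started_at))`, with the log pipeline handling retention.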

Human-in-the-loop operations

- Escalation queues with priority (e.g., minors’ safety > harassment).

- Reviewer UI: show content, reasons, score curves, history, and quick outcomes; double-review a sample for quality.

- Feedback loop: reviewer decisions become training/eval data; use disagreement analysis to refine policy.
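A prioritized escalation queue can be sketched with a binary heap. The priority table below is illustrative (an assumption, apart from minors' safety ranking above harassment as stated above), and `EscalationQueue` is a hypothetical in-memory stand-in for a durable queue.

```python
import heapq
import itertools
from typing import Dict, List, Tuple

# Lower number = reviewed first. Illustrative ordering only.
CATEGORY_PRIORITY: Dict[str, int] = {
    "sexual_minors": 0,
    "self_harm": 1,
    "violence": 2,
    "harassment": 3,
}
DEFAULT_PRIORITY = 9


class EscalationQueue:
    def __init__(self) -> None:
        self._heap: List[Tuple[int, int, str]] = []
        # Monotonic counter as tie-breaker keeps FIFO order within a priority.
        self._counter = itertools.count()

    def push(self, decision_id: str, category: str) -> None:
        priority = CATEGORY_PRIORITY.get(category, DEFAULT_PRIORITY)
        heapq.heappush(self._heap, (priority, next(self._counter), decision_id))

    def pop(self) -> str:
        """Return the next decision id to review; raises IndexError when empty."""
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)
```

For example, pushing a harassment item and then a sexual_minors item yields the sexual_minors item first on `pop()`.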

Testing strategy

- Unit tests for policy rules and threshold edges.

- Property-based tests (e.g., redaction spans always lie within the content, and redacted output grows in length only when the fixed replacement token is longer than the span it replaces).

- Adversarial tests: obfuscations, leetspeak, homoglyphs, hidden text, emojis.

- Load tests: ensure p95 stays within SLO at realistic concurrency.
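Adversarial tests usually target a normalization step that runs before deny-list matching. Here is a small sketch of such a step with a matching test; `normalize_for_matching` and the leetspeak table are assumptions. NFKC folds fullwidth and other compatibility forms but does not map cross-script homoglyphs (for example Cyrillic look-alikes), which need a separate confusables table.

```python
import unicodedata

# Zero-width and formatting characters often used to hide text.
_ZERO_WIDTH = dict.fromkeys(map(ord, "\u200b\u200c\u200d\u2060\ufeff"))
# Small illustrative leetspeak table; real tables are larger and language-specific.
_LEET = str.maketrans(
    {"0": "o", "1": "i", "3": "e", "4": "a", "5": "s", "7": "t", "@": "a", "$": "s"}
)


def normalize_for_matching(text: str) -> str:
    """Fold common obfuscations before deny-list matching.

    Use the result for matching only; it mangles legitimate digits.
    """
    text = unicodedata.normalize("NFKC", text)  # fullwidth/compatibility forms
    text = text.translate(_ZERO_WIDTH)          # drop zero-width characters
    return text.casefold().translate(_LEET)


def test_normalization_folds_obfuscations() -> None:
    assert normalize_for_matching("sp4m") == "spam"
    assert normalize_for_matching("s\u200bpam") == "spam"
    # Fullwidth "SPAM" written with escapes.
    assert normalize_for_matching("\uff33\uff30\uff21\uff2d") == "spam"
```

The test function follows pytest naming conventions, so it can be collected as-is if you use pytest (an assumption about your test runner).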

Common pitfalls and how to avoid them

- Over-reliance on a single model: keep a tested fallback and vendor abstraction.

- Frozen thresholds: re-evaluate routinely; language evolves.

- No context: include thread history for harassment and self-harm risk.

- Opaque decisions: return reasons and evidence; enable appeals.

- Logging raw PII by default: minimize, redact, and enforce retention windows.

Quick implementation checklist

- Policy taxonomy, examples, severity → action mapping

- Versioned config with regional overlays

- Provider abstraction + fallback + circuit breaker

- Deterministic decision engine with tests

- Sync + async paths; webhooks; idempotency

- Redaction capability and output gating

- Observability (logs, metrics, traces) and audits

- Privacy/security controls and retention policy

- Calibration, fairness, and regression suite

- Human review workflow and appeals

Conclusion

An effective AI content moderation API is more than a classifier. It is a product surface that encodes your policy, safeguards privacy, withstands outages, and evolves with user behavior. Start with clear policy, ship a minimal but observable pipeline, and iterate with data, reviews, and careful calibration.
