REST API Multipart File Upload: A Practical, End-to-End Guide

A concise, end-to-end guide to designing, implementing, and securing multipart/form-data file uploads in REST APIs, with examples in Node, Python, Java, .NET, and Go.


Overview

File uploads are a foundational capability for many REST APIs: user avatars, invoices, medical images, logs, and more. The most interoperable way to move files from clients to servers over HTTP is multipart/form-data. This guide walks through the wire format, API design choices, secure and scalable implementations, and working code in Node.js, Python (FastAPI), Java (Spring Boot), .NET, and Go.

What multipart/form-data actually is

multipart/form-data is a container format defined by RFC 7578, building on the generic multipart syntax of RFC 2046. Instead of sending a single payload, the request body is divided by boundaries into parts. Each part has its own headers (e.g., Content-Disposition, Content-Type) and an opaque body. Files and simple fields live side-by-side in the same request.

Key properties:

- Content-Type: multipart/form-data; boundary=… identifies the format and the unique boundary token.

- Each part is introduced by a delimiter line: two hyphens (--), the boundary token, then CRLF. The entire body ends with a closing delimiter: --, the boundary token, and a trailing --.

- Content-Disposition indicates field name and, for files, the original filename.

- Content-Type of a part is optional for simple text fields but strongly recommended for files.

Wire-level anatomy

    POST /v1/files HTTP/1.1
    Host: api.example.com
    Authorization: Bearer <token>
    Content-Type: multipart/form-data; boundary=--------------------------974767299852498929531610575

    ----------------------------974767299852498929531610575
    Content-Disposition: form-data; name="file"; filename="report.pdf"
    Content-Type: application/pdf

    %PDF-1.7 ...binary bytes...
    ----------------------------974767299852498929531610575
    Content-Disposition: form-data; name="metadata"
    Content-Type: application/json; charset=utf-8

    {"title":"Q1","tags":["finance","confidential"]}
    ----------------------------974767299852498929531610575--
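The framing shown above can be reproduced by hand, which is a useful way to see where each CRLF and delimiter goes. The following is a minimal sketch, not a production encoder: the function name encode_multipart and its argument shapes are assumptions made for illustration, and it assumes field names and filenames contain no quotes or line breaks.

```python
import uuid

CRLF = b"\r\n"


def encode_multipart(files, fields):
    """Return (content_type, body) for a multipart/form-data request.

    files:  dict of field name -> (filename, content_type, data_bytes)
    fields: dict of field name -> (content_type, text)
    """
    boundary = uuid.uuid4().hex  # random token, unlikely to occur in the payload
    delimiter = b"--" + boundary.encode("ascii")
    chunks = []
    for name, (filename, content_type, data) in files.items():
        chunks += [
            delimiter,
            f'Content-Disposition: form-data; name="{name}"; filename="{filename}"'.encode(),
            f"Content-Type: {content_type}".encode(),
            b"",  # blank line separates part headers from the part body
            data,
        ]
    for name, (content_type, text) in fields.items():
        chunks += [
            delimiter,
            f'Content-Disposition: form-data; name="{name}"'.encode(),
            f"Content-Type: {content_type}".encode(),
            b"",
            text.encode("utf-8"),
        ]
    chunks += [delimiter + b"--", b""]  # closing delimiter plus final CRLF
    return f"multipart/form-data; boundary={boundary}", CRLF.join(chunks)
```

The returned content type goes into the request's Content-Type header and the bytes into the body. In practice an HTTP client library does this for you; the sketch only makes the wire format concrete.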

Designing your upload endpoint

- Resource model: treat uploads as creating a new file resource.

- POST /v1/files → 201 Created with a Location header pointing at the new resource and a JSON body containing the file id, size, contentType, and checksum.

- Naming: prefer a single field name (e.g., file) for the binary part; keep additional fields as simple strings or a JSON-encoded metadata field.

- Size limits: document max file size; expose a header (e.g., Upload-Max-Size) or include in OpenAPI description.

- Idempotency: support an Idempotency-Key header for retried POSTs. On duplicate keys, return the original response.

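- Integrity: optionally require a checksum (a Content-MD5 header, a Content-Digest header per RFC 9530, or a metadata.checksum field) and verify it server-side. Content-MD5 was dropped from the core HTTP specification in RFC 7231, although object stores such as S3 still accept it.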

- Response schema example:

    {
      "id": "file_abc123",
      "name": "report.pdf",
      "size": 1048576,
      "contentType": "application/pdf",
      "checksum": "md5:Q2hlY2tzdW0h",
      "createdAt": "2026-04-01T12:34:56Z"
    }
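The Idempotency-Key behavior described in the list above (replay the original response on a duplicate key) can be sketched as a small lookup around the upload handler. This is an illustrative in-memory version only: the class name IdempotencyStore and the run_once method are hypothetical, and a real service would more likely keep the keys in a shared store with an expiry.

```python
import threading


class IdempotencyStore:
    """In-memory map from Idempotency-Key to the first response produced for it."""

    def __init__(self):
        self._responses = {}
        self._lock = threading.Lock()

    def run_once(self, key, handler):
        """Run handler() for a new key; replay the stored response for a repeat.

        Returns (response, replayed) where replayed is True for a duplicate key.
        """
        with self._lock:
            if key in self._responses:
                return self._responses[key], True
            response = handler()
            self._responses[key] = response
            return response, False
```

Holding the lock while the handler runs keeps the sketch short but serializes uploads; a production design would typically lock per key instead.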

Client examples

cURL (single file + JSON metadata)

    curl -X POST 'https://api.example.com/v1/files' \
      -H 'Authorization: Bearer <token>' \
      -F 'file=@/path/to/report.pdf;type=application/pdf' \
      -F 'metadata={"title":"Q1","tags":["finance","confidential"]};type=application/json'

Browser (Axios with progress)

    import axios from 'axios';

    // fileInput is assumed to be an <input type="file"> element on the page
    const form = new FormData();
    form.append('file', fileInput.files[0], 'report.pdf');
    form.append('metadata', JSON.stringify({ title: 'Q1', tags: ['finance', 'confidential'] }));

    // Do not set Content-Type by hand: the browser adds it together with the boundary
    await axios.post('/v1/files', form, {
      onUploadProgress: e => {
        if (e.total) console.log(`Progress: ${Math.round((e.loaded / e.total) * 100)}%`);
      }
    });

Note: Fetch lacks standard upload progress events in most browsers; prefer Axios (XHR) or the Streams Upload API where available.

Command-line (multiple files)

    curl -X POST 'https://api.example.com/v1/files/batch' \
      -H 'Authorization: Bearer <token>' \
      -F 'files[]=@a.jpg' -F 'files[]=@b.jpg' -F 'album=2026-Q1'

Server implementations

Node.js (Express + Multer)

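    import express from 'express';
    import multer from 'multer';
    import crypto from 'crypto';

    const app = express();

    // memoryStorage buffers the whole file in RAM; use diskStorage for temp files
    const upload = multer({
      storage: multer.memoryStorage(),
      limits: { fileSize: 20 * 1024 * 1024 }, // 20 MB
    });

    app.post('/v1/files', upload.single('file'), async (req, res) => {
      if (!req.file) return res.status(400).json({ error: 'file is required' });

      // Malformed metadata is a client error, not a server crash
      let metadata = {};
      try {
        if (req.body.metadata) metadata = JSON.parse(req.body.metadata);
      } catch {
        return res.status(400).json({ error: 'metadata must be valid JSON' });
      }

      const checksum = 'md5:' + crypto.createHash('md5').update(req.file.buffer).digest('base64');
      const id = 'file_' + crypto.randomUUID(); // unique even under concurrent uploads

      // TODO: persist to storage (e.g., S3, GCS) and database
      return res.status(201).location(`/v1/files/${id}`).json({
        id,
        name: req.file.originalname,
        size: req.file.size,
        contentType: req.file.mimetype,
        checksum,
        metadata,
      });
    });

    // Multer reports an oversized file as LIMIT_FILE_SIZE; map it to 413
    app.use((err, req, res, next) => {
      if (err instanceof multer.MulterError && err.code === 'LIMIT_FILE_SIZE') {
        return res.status(413).json({ error: 'file too large' });
      }
      next(err);
    });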

For large files, stream with Busboy or Multer diskStorage to avoid buffering entire files in memory.

Python (FastAPI)

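    from fastapi import FastAPI, File, Form, HTTPException, UploadFile
    import base64, hashlib, json, uuid
    from pathlib import Path

    app = FastAPI()

    @app.post('/v1/files', status_code=201)
    async def upload(file: UploadFile = File(...), metadata: str | None = Form(None)):
        try:
            meta = json.loads(metadata) if metadata else {}
        except json.JSONDecodeError:
            raise HTTPException(status_code=400, detail='metadata must be valid JSON')

        # Store under a generated id, never the client-supplied filename
        file_id = 'file_' + uuid.uuid4().hex
        hasher = hashlib.md5()
        size = 0
        # Stream to disk in 1 MiB chunks, hashing while reading
        with open(Path('/tmp') / file_id, 'wb') as out:
            while chunk := await file.read(1024 * 1024):
                out.write(chunk)
                hasher.update(chunk)
                size += len(chunk)

        return {
            'id': file_id,
            'name': file.filename,
            'size': size,
            'contentType': file.content_type,
            # Base64, matching the checksum format in the response schema
            'checksum': 'md5:' + base64.b64encode(hasher.digest()).decode('ascii'),
            'metadata': meta,
        }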

Java (Spring Boot)

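    import java.util.HashMap;
    import java.util.Map;
    import org.springframework.http.HttpStatus;
    import org.springframework.http.MediaType;
    import org.springframework.http.ResponseEntity;
    import org.springframework.web.bind.annotation.PostMapping;
    import org.springframework.web.bind.annotation.RequestMapping;
    import org.springframework.web.bind.annotation.RequestPart;
    import org.springframework.web.bind.annotation.RestController;
    import org.springframework.web.multipart.MultipartFile;

    @RestController
    @RequestMapping("/v1/files")
    public class UploadController {

        @PostMapping(consumes = MediaType.MULTIPART_FORM_DATA_VALUE)
        public ResponseEntity<Map<String, Object>> upload(
                @RequestPart("file") MultipartFile file,
                @RequestPart(value = "metadata", required = false) String metadata
        ) throws Exception {
            // An empty part is treated as a missing file
            if (file.isEmpty()) return ResponseEntity.badRequest().build();

            // Stream file.getInputStream() to storage; compute checksum if needed
            Map<String, Object> body = new HashMap<>();
            body.put("id", "file_123"); // placeholder id
            body.put("name", file.getOriginalFilename());
            body.put("size", file.getSize());
            body.put("contentType", file.getContentType());
            return ResponseEntity.status(HttpStatus.CREATED).body(body);
        }
    }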

.NET 8 (Minimal API)

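    app.MapPost("/v1/files", async (HttpRequest request) => {
        // ReadFormAsync throws on non-form bodies, so reject them up front
        if (!request.HasFormContentType) return Results.StatusCode(415);

        var form = await request.ReadFormAsync();
        var file = form.Files.GetFile("file");
        if (file is null) return Results.BadRequest(new { error = "file is required" });

        // Store under a generated id; the client-supplied FileName may contain path segments
        var id = "file_" + Guid.NewGuid().ToString("N");
        await using var stream = File.Create(Path.Combine("/tmp", id));
        await file.CopyToAsync(stream);

        // Single braces interpolate the id into the Location URL
        return Results.Created($"/v1/files/{id}", new {
            id,
            name = file.FileName,
            size = file.Length,
            contentType = file.ContentType
        });
    });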

Go (net/http)

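    import (
        "encoding/json"
        "io"
        "net/http"
        "os"
        "path/filepath"
    )

    func upload(w http.ResponseWriter, r *http.Request) {
        // Cap the whole request body (64 MB here is an example limit)
        r.Body = http.MaxBytesReader(w, r.Body, 64<<20)
        // Keep up to 32 MB of parts in memory; the remainder spills to temp files
        err := r.ParseMultipartForm(32 << 20)
        if err != nil { http.Error(w, err.Error(), http.StatusBadRequest); return }
        file, header, err := r.FormFile("file")
        if err != nil { http.Error(w, "file is required", http.StatusBadRequest); return }
        defer file.Close()
        // filepath.Base strips directory components from the client filename
        out, err := os.Create(filepath.Join("/tmp", filepath.Base(header.Filename)))
        if err != nil { http.Error(w, err.Error(), http.StatusInternalServerError); return }
        defer out.Close()
        if _, err = io.Copy(out, file); err != nil {
            http.Error(w, err.Error(), http.StatusInternalServerError); return
        }
        w.Header().Set("Content-Type", "application/json")
        w.WriteHeader(http.StatusCreated)
        // Encode with encoding/json so quotes in the filename cannot break the JSON
        json.NewEncoder(w).Encode(map[string]string{"id": "file_123", "name": header.Filename})
    }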

Handling large files safely

- Stream, don’t buffer: ensure your stack streams from socket → parser → storage. Memory storage is convenient for small files only.

- Set limits: enforce max file size (413 Payload Too Large if exceeded), max number of parts, and reasonable timeouts.

- Backpressure: prefer APIs that support flow control (Node streams, aiofiles, Go io.Copy) to avoid overwhelming memory.

- Temp storage hygiene: store to a temp directory with quotas and background cleanup.

- Checksums: require Content-MD5 or accept a metadata checksum; verify before committing.

- Asynchronous post-processing: virus scan, thumbnailing, OCR—offload via a job queue to keep uploads fast.
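Several of the points above (stream rather than buffer, enforce a size limit, verify a checksum before committing) fit into one small copy loop. The sketch below is one possible shape, assuming file-like objects with read and write methods; the names copy_with_limit and UploadTooLarge are hypothetical.

```python
import base64
import hashlib
import io


class UploadTooLarge(Exception):
    """Raised when the stream exceeds the configured limit (map to HTTP 413)."""


def copy_with_limit(src, dst, max_bytes, chunk_size=64 * 1024):
    """Copy src to dst in chunks, hashing as it goes.

    Aborts as soon as more than max_bytes have been read, so an oversized
    upload never has to be held in memory or written out in full.
    Returns (size, base64_md5).
    """
    hasher = hashlib.md5()
    total = 0
    while True:
        chunk = src.read(chunk_size)
        if not chunk:
            break
        total += len(chunk)
        if total > max_bytes:
            raise UploadTooLarge(f"upload exceeds {max_bytes} bytes")
        hasher.update(chunk)
        dst.write(chunk)
    return total, base64.b64encode(hasher.digest()).decode("ascii")


# Usage with in-memory streams standing in for the socket and the temp file
size, digest = copy_with_limit(io.BytesIO(b"hello world"), io.BytesIO(), max_bytes=1024)
```

A handler would compare the returned digest with the client-declared checksum and only then move the temp file to its final location, answering 413 when UploadTooLarge is raised.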

Resumable and multipart-at-rest

- HTTP range + chunking: split the file client-side, upload numbered chunks, finalize server-side; track upload session ids.

- tus.io protocol: a standardized resumable upload approach (POST to create the upload, PATCH to append bytes at a given offset, HEAD to query the current offset).

- Cloud object storage multipart (e.g., S3 Multipart Upload): initiate → upload parts (parallel) → complete; store the resulting object key.
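The chunked approach in the first bullet comes down to tracking which numbered chunks a session has received and assembling them in order at finalize time. The following is a minimal in-memory sketch under that assumption; the UploadSession class and its methods are hypothetical, and a real server would write chunks to disk or object storage rather than keep them in a dict.

```python
import uuid


class UploadSession:
    """Tracks numbered chunks for one resumable upload."""

    def __init__(self, total_chunks):
        self.id = uuid.uuid4().hex  # session id the client quotes on every chunk
        self.total_chunks = total_chunks
        self._chunks = {}

    def put_chunk(self, index, data):
        """Store one chunk; re-sending the same index overwrites it, so retries are safe."""
        if not 0 <= index < self.total_chunks:
            raise ValueError(f"chunk index {index} out of range")
        self._chunks[index] = data

    def missing(self):
        """Return the chunk indexes the client still has to send."""
        return [i for i in range(self.total_chunks) if i not in self._chunks]

    def finalize(self):
        """Assemble the chunks in order; refuse while any are missing."""
        if self.missing():
            raise ValueError(f"missing chunks: {self.missing()}")
        return b"".join(self._chunks[i] for i in range(self.total_chunks))
```

A client that loses its connection can ask for the missing indexes and resume from there instead of restarting the whole upload.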

Direct-to-cloud (pre-signed URL)

Reduce server bandwidth by letting the client PUT directly to object storage.

Flow:

- Client requests an upload session from your API.

- API returns a pre-signed URL, required headers (Content-Type, Content-MD5), and an object key.

- Client PUTs the file to the URL.

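- Client notifies your API to finalize; you verify the ETag or MD5 and persist metadata. On S3, the ETag equals the MD5 only for objects that were not uploaded in multiple parts, so compare against a checksum you control when using multipart uploads.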

Benefits: better throughput, fewer hot servers, simpler scaling. Keep your API authoritative over metadata and authorization.
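To make the idea of a pre-signed URL concrete, the sketch below signs an upload URL with an HMAC over the method, object key, and expiry, and verifies it later. This is a generic illustration only, not the signature scheme of S3 or any other cloud provider; the secret, the query parameter names, and both function names are assumptions. In practice you would call your storage provider's SDK to generate the URL.

```python
import hashlib
import hmac
from urllib.parse import parse_qs, urlencode, urlsplit

SECRET = b"demo-secret"  # hypothetical signing key, kept server-side


def _signature(object_key, expires_at, secret):
    message = f"PUT\n{object_key}\n{expires_at}".encode()
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def sign_upload_url(base_url, object_key, expires_at, secret=SECRET):
    """Return a URL that authorizes a PUT of object_key until expires_at (epoch seconds)."""
    query = urlencode({
        "expires": expires_at,
        "signature": _signature(object_key, expires_at, secret),
    })
    return f"{base_url}/{object_key}?{query}"


def verify_upload_url(url, now, secret=SECRET):
    """Check the signature and expiry; assumes URL-safe object keys."""
    parts = urlsplit(url)
    params = parse_qs(parts.query)
    try:
        expires_at = int(params["expires"][0])
        signature = params["signature"][0]
    except (KeyError, ValueError):
        return False
    expected = _signature(parts.path.lstrip("/"), expires_at, secret)
    return now <= expires_at and hmac.compare_digest(signature, expected)
```

Because the object key and expiry are part of the signed message, a client cannot reuse the URL for a different key or after the deadline, which is what lets the storage layer accept the upload without calling back into your API.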

Security checklist

- Accept-list content types (e.g., image/*, application/pdf). Do not trust the client-declared MIME type alone; sniff magic numbers.

- Normalize and sanitize filenames; do not use user filenames in storage paths. Store with generated ids.

- Path traversal: never concatenate untrusted input into filesystem paths; always use safe base directories.

- Virus/malware scanning for user-supplied binaries.

- Quotas and rate limits per user/tenant; reject abuse early.

- Authentication and authorization: only allowed roles may upload; bind files to owners/tenants.

- Content-Length validation and early aborts when limits are exceeded.

- CORS: if browsers will call your API, configure allowed origins. multipart/form-data can be a simple request, but Authorization and custom headers trigger preflight; implement OPTIONS.

- Encryption at rest and in transit; rotate keys; scrub temp storage.
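Two items from this checklist, magic-number sniffing and generated storage names, are easy to show in code. The sketch below covers only a handful of well-known signatures and is not a complete content-type detector; the accept-list and the function names are assumptions chosen for illustration.

```python
import uuid

# Leading bytes of a few common formats
MAGIC_NUMBERS = {
    b"%PDF-": "application/pdf",
    b"\x89PNG\r\n\x1a\n": "image/png",
    b"\xff\xd8\xff": "image/jpeg",
    b"GIF87a": "image/gif",
    b"GIF89a": "image/gif",
}

ALLOWED_TYPES = {"application/pdf", "image/png", "image/jpeg"}  # example accept-list


def sniff_content_type(head):
    """Guess the content type from the first bytes of the file, or None if unknown."""
    for magic, content_type in MAGIC_NUMBERS.items():
        if head.startswith(magic):
            return content_type
    return None


def is_allowed(head, declared_type):
    """Accept only when the sniffed type is on the accept-list and matches the declared one."""
    sniffed = sniff_content_type(head)
    return sniffed in ALLOWED_TYPES and sniffed == declared_type


def storage_name():
    """Generate the on-disk name; the user-supplied filename is kept only as metadata."""
    return "file_" + uuid.uuid4().hex
```

Requiring the sniffed and declared types to agree rejects, for example, an executable uploaded with Content-Type: image/png.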

Performance and scalability

- Use zero-copy or streaming APIs where possible.

- Offload to object storage; keep app servers stateless.

- Compress only when appropriate (most binary formats are already compressed).

- Parallelism: tune worker concurrency; avoid per-upload global locks.

- Observability: log request id, user id, file size/type; track upload latency, bytes uploaded, error rates, and storage latencies.

Documenting with OpenAPI

    paths:
      /v1/files:
        post:
          summary: Upload a file
          requestBody:
            required: true
            content:
              multipart/form-data:
                schema:
                  type: object
                  properties:
                    file:
                      type: string
                      format: binary
                    metadata:
                      type: string
                encoding:
                  metadata:
                    contentType: application/json
          responses:
            '201':
              description: Created
              content:
                application/json:
                  schema:
                    type: object
                    properties:
                      id: { type: string }
                      size: { type: integer }
                      contentType: { type: string }
            '400': { description: Bad Request }
            '413': { description: Payload Too Large }
            '415': { description: Unsupported Media Type }

Testing strategy

- Happy path: valid file, valid metadata, typical sizes.

- Edge cases:

  - Zero-length file

  - Over-size file (expect 413)

  - Wrong Content-Type (expect 415)

  - Missing file field (expect 400)

  - Truncated body or missing closing boundary (expect 400)

  - Slowloris-style uploads (ensure timeouts)

- Tools: cURL, HTTPie, Postman/Insomnia, integration tests that assert binary integrity and metadata persistence.

Troubleshooting guide

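- 400 Bad Request: malformed multipart body or missing required part name. Confirm that the boundary used in the body matches the one in the Content-Type header and that the final delimiter ends with a trailing --.

- 401/403: invalid token or insufficient scope. For browser clients, the CORS preflight (OPTIONS) request does not carry the Authorization header, so the server must answer it without authentication and list Authorization in Access-Control-Allow-Headers.

- 413 Payload Too Large: raise the server or proxy size limits, or switch to a chunked or resumable strategy.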

- 415 Unsupported Media Type: check request Content-Type and part content types; validate accept-list.

- Timeouts: enable keep-alive, tune read/write timeouts, and ensure storage backends aren’t blocking writes.

- Memory bloat: confirm you are streaming; switch from in-memory buffers to disk or direct-to-cloud.
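For the 400 case in particular, a quick check of a captured request can confirm whether the boundary and closing delimiter are intact. The helper below is a rough diagnostic sketch, not a multipart parser; the function name diagnose_multipart is hypothetical, and it uses the standard library's email.message.Message only to read the boundary parameter from the header.

```python
from email.message import Message


def diagnose_multipart(content_type, body):
    """Return a list of framing problems found in a captured request (empty if none)."""
    header = Message()
    header["Content-Type"] = content_type
    problems = []
    if header.get_content_type() != "multipart/form-data":
        problems.append("Content-Type is not multipart/form-data")
    boundary = header.get_boundary()
    if not boundary:
        problems.append("Content-Type has no boundary parameter")
        return problems
    delimiter = b"--" + boundary.encode()
    # A preamble before the first delimiter is legal but rare for form uploads
    if not body.startswith(delimiter):
        problems.append("body does not start with the delimiter")
    if not body.rstrip(b"\r\n").endswith(delimiter + b"--"):
        problems.append("closing delimiter is missing")
    return problems
```

An empty list means the framing looks sound and the problem is more likely a missing or misnamed part; a missing closing delimiter usually points at a truncated upload or a client that sets Content-Type by hand.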

Production-ready checklist

- Streaming upload path with enforced limits

- Checksums verified before finalize

- Virus scan and asynchronous post-processing

- Pre-signed direct-to-cloud path for large files

- Idempotency-Key support and clear 201 responses

- CORS + OPTIONS for browser clients

- Observability (metrics, logs, traces) and alarms

- Retention, quotas, lifecycle policies documented

Conclusion

Multipart/form-data remains the most compatible way to upload files to REST APIs. By designing a clear contract, streaming safely, verifying integrity, and documenting behavior, you can deliver reliable uploads that scale from kilobytes to multi‑gigabyte media while keeping your platform secure and maintainable.

Related Posts

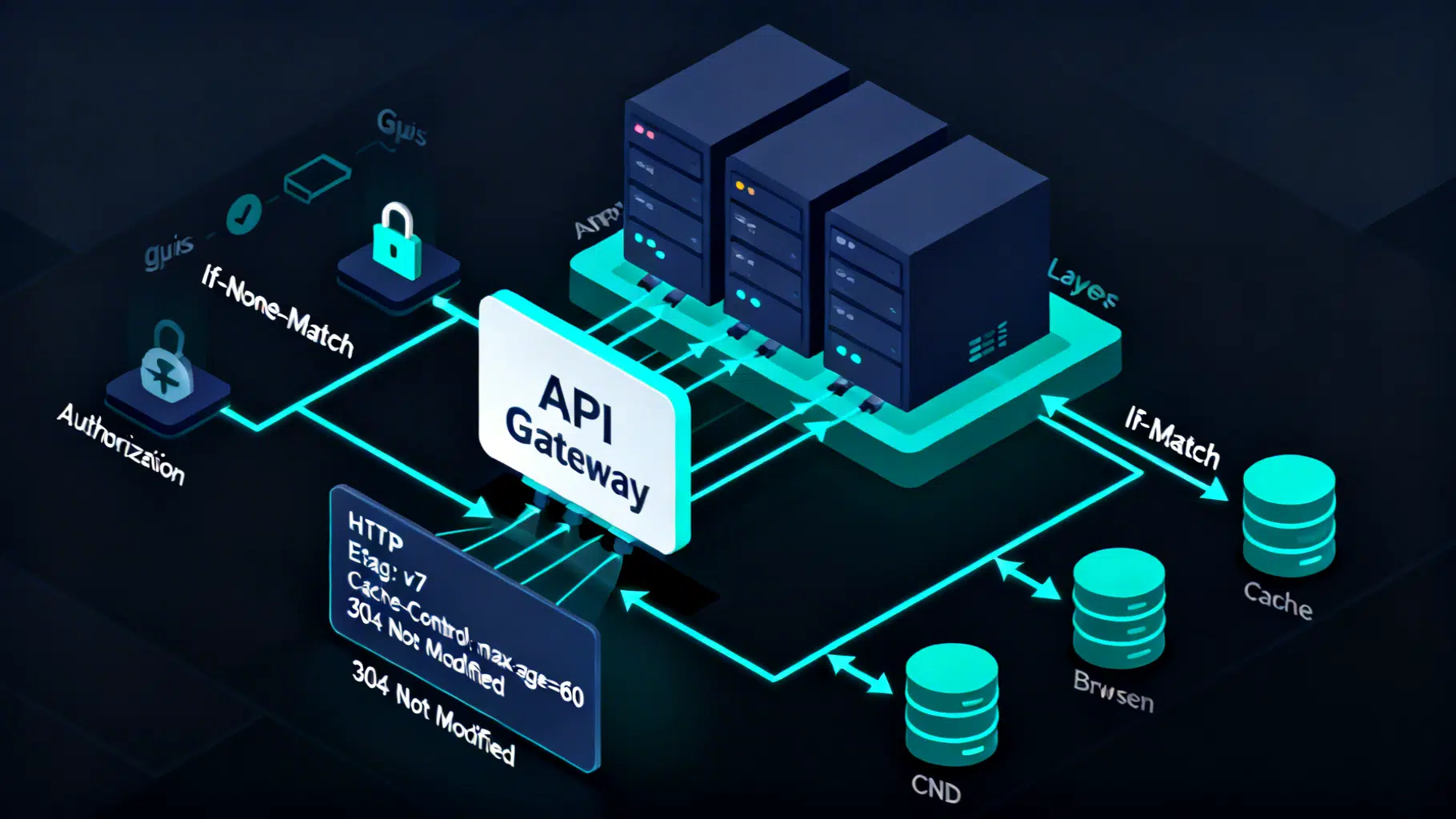

REST API Caching Strategies with ETags: A Practical Guide

A practical guide to REST API caching with ETags—how they work, when to use them, headers to pair with, and implementation tips with code.

DeepSeek API Integration Tutorial: From First Call to Production

Step-by-step DeepSeek API integration: base URL, models, cURL/Python/Node code, streaming, thinking mode, tool calls, errors, and production tips.

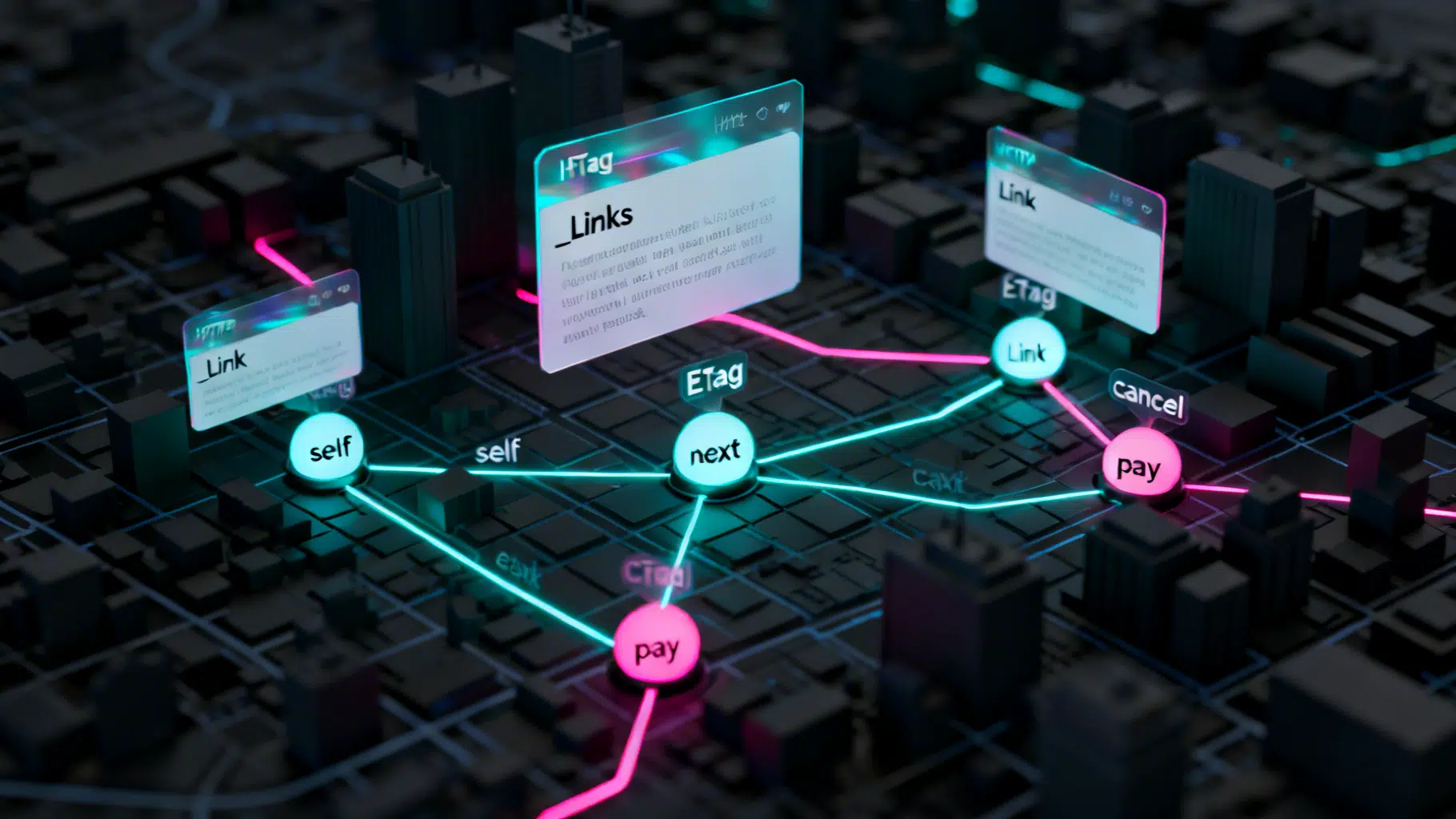

Implementing HATEOAS in REST APIs: A Practical Guide

A practical guide to implementing HATEOAS in REST APIs with formats, examples, tooling, testing, and rollout strategies.