Designing resilient REST API webhook retry mechanisms

Design reliable webhook retries: backoff with jitter, idempotency, Retry-After, DLQs, security, and ops patterns for resilient REST API webhooks.

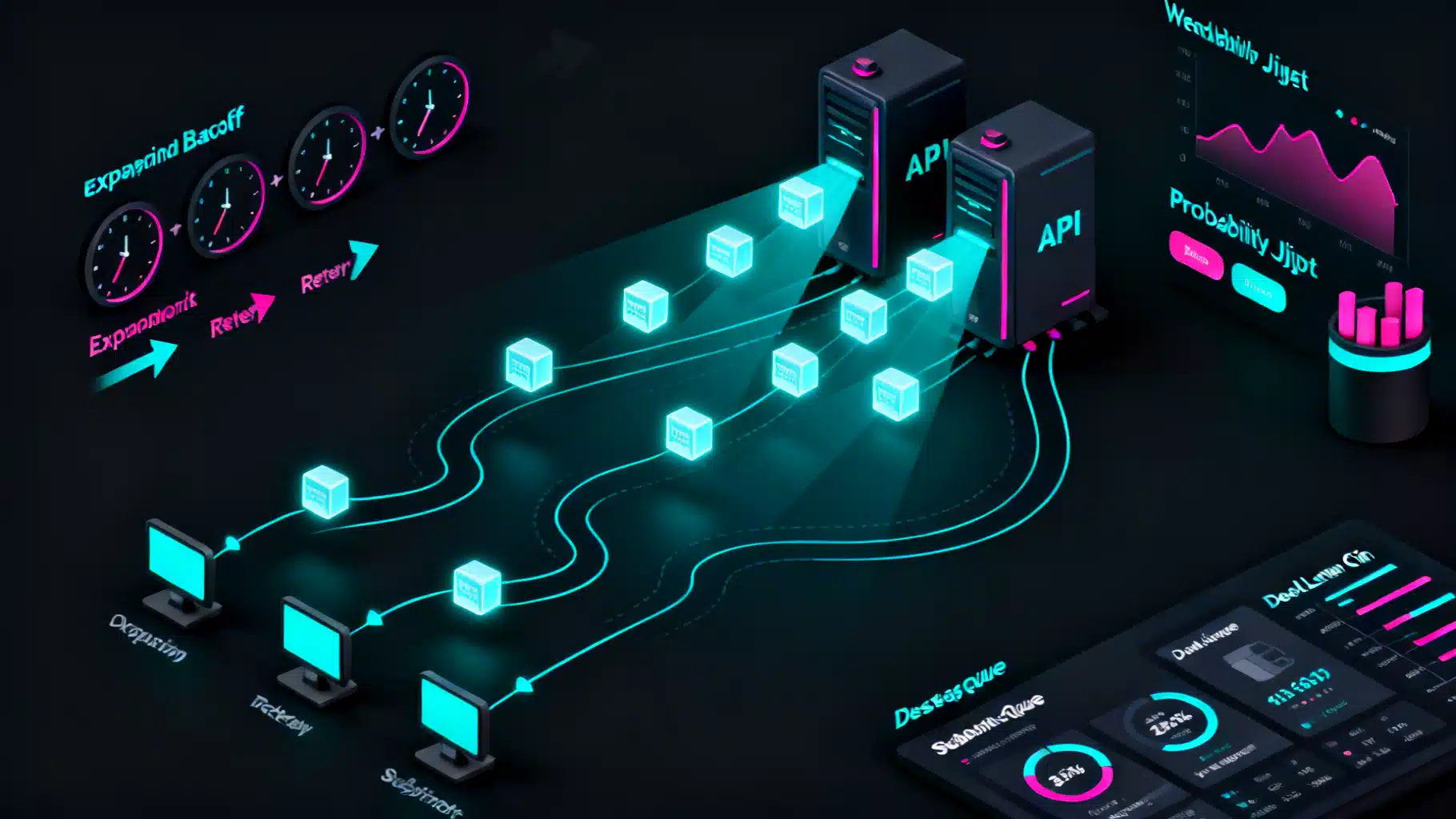

Image used for representation purposes only.

Overview

Webhooks turn your REST API into an event-driven platform by pushing notifications to subscriber endpoints. But the open internet is lossy: endpoints go down, networks split, DNS fails, TLS handshakes time out, and servers rate-limit. Reliable delivery therefore depends on robust retry mechanisms. This article explains how to design, implement, and operate production-grade webhook retries, from backoff and jitter to idempotency, dead-letter queues, and observability. The goal is that every event is delivered at least once, takes effect exactly once, and arrives promptly.

Delivery semantics: what you can (and can’t) guarantee

- At-most-once: never retries; events may be lost. Not acceptable for critical events.

- At-least-once: retries until acknowledged; duplicates can occur. This is the pragmatic default for webhooks.

- Exactly-once: not realistically achievable over HTTP without cooperation from receivers. Emulate it with idempotency keys and deduplication.

Design your system around at-least-once delivery with strong idempotency on the receiver side.

Defining success and failure

A retry engine needs crisp rules:

- Success: any 2xx response (200–299). Avoid parsing bodies for success. The receiver should return 2xx after safely queuing the work.

- Temporary failure (retryable): network errors, timeouts, TCP resets; HTTP 408, 409, 425, 429, and 5xx other than 501 (which is permanent; see below). Respect Retry-After when present.

- Permanent failure (non-retryable): malformed request or unsupported condition—commonly 400, 401, 403, 404, 410, 415, 501. Permit per-integration overrides because some receivers misuse codes.

- Timeouts: treat as retryable. Keep request timeouts short (e.g., 5–10 seconds) so you can fail fast and retry.

Tip: keep a small allowlist/denylist to adapt to idiosyncratic partners. For example, some integrations use 404 for temporary maintenance—your operator can flip a switch to treat 404 as retryable for them.
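
These rules fit in a single classification function. The following is a minimal Python sketch. The `Outcome` enum and the shape of the `overrides` table are illustrative assumptions rather than a prescribed API. Any status not listed as retryable, such as a 3xx or an unlisted 4xx, is treated as permanent here; that is a design choice you may want to revisit.

```python
from enum import Enum


class Outcome(Enum):
    SUCCESS = "success"
    RETRY = "retry"
    FAIL = "fail"


RETRYABLE_4XX = {408, 409, 425, 429}


def classify(status, overrides=None):
    """Map an HTTP status (or None for a network error/timeout) to an outcome.

    `overrides` is a hypothetical per-endpoint table, e.g. {404: Outcome.RETRY}
    for a partner that returns 404 during maintenance.
    """
    if status is None:  # connection error, TLS failure, or timeout
        return Outcome.RETRY
    if overrides and status in overrides:
        return overrides[status]
    if 200 <= status < 300:
        return Outcome.SUCCESS
    if status in RETRYABLE_4XX or (500 <= status < 600 and status != 501):
        return Outcome.RETRY
    return Outcome.FAIL  # 400, 401, 403, 404, 410, 415, 501, and anything else
```

Keeping overrides as data rather than code lets an operator flip a single endpoint's behavior without a deploy.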

Backoff strategies that don’t melt your fleet

Immediate, tight-loop retries amplify outages by hammering receivers that are already failing. Use backoff.

- Fixed backoff: constant delay (e.g., 30s). Simple, but events that failed at the same moment retry at the same moment, causing thundering herds.

- Exponential backoff: the delay doubles with each attempt (base × 2^(attempt-1)). Spacing retries out reduces load on a struggling receiver and gives it room to recover.

- Exponential backoff with jitter: add randomness to spread retries across time. Prefer full jitter or decorrelated jitter.

Formulas (T is next delay, attempt starts at 1):

- Exponential: T = min(max_delay, base × 2^(attempt-1))

- Full jitter: T = random(0, min(max_delay, base × 2^(attempt-1)))

- Decorrelated jitter: T = min(max_delay, random(base, prev_delay × 3)), with prev_delay initialized to base
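
The three formulas translate directly into code. Below is a minimal Python sketch. The function names are illustrative, and the optional `rng` parameter exists only so that runs can be seeded for testing.

```python
import random


def exponential(attempt: int, base: float, max_delay: float) -> float:
    """T = min(max_delay, base * 2^(attempt-1)); attempt starts at 1."""
    return min(max_delay, base * 2 ** (attempt - 1))


def full_jitter(attempt: int, base: float, max_delay: float, rng=random) -> float:
    """T = random(0, min(max_delay, base * 2^(attempt-1)))."""
    return rng.uniform(0, exponential(attempt, base, max_delay))


def decorrelated_jitter(prev_delay: float, base: float, max_delay: float, rng=random) -> float:
    """T = min(max_delay, random(base, prev_delay * 3)); seed prev_delay with base."""
    return min(max_delay, rng.uniform(base, prev_delay * 3))


# Example: a few delays of each kind, starting decorrelated jitter from prev_delay = base
rng = random.Random(42)  # seeded only to make the run reproducible
delay = 2.0
for attempt in range(1, 6):
    delay = decorrelated_jitter(delay, base=2.0, max_delay=300.0, rng=rng)
    print(f"attempt {attempt}: full={full_jitter(attempt, 2.0, 300.0, rng):.1f}s "
          f"decorrelated={delay:.1f}s")
```

Full jitter needs only the attempt number. Decorrelated jitter carries state (the previous delay), so the retry engine must persist it between attempts.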

Choose bounds. Example defaults:

- base = 1–5 seconds

- max_delay = 1–10 minutes (per tenant/endpoint)

- delivery_ttl = 72 hours (stop after this window)

Provide a deterministic schedule for debugging. Example (illustrative; it assumes a much higher max_delay than the defaults above): 1m, 2m, 4m, 8m, 15m, 30m, 1h, 2h, 4h, 8h, 16h, 24h, then daily until TTL.
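
Turning the schedule into cumulative offsets shows exactly when each retry fires and where the TTL cuts it off. The following is a Python sketch that hard-codes the illustrative schedule above and assumes a 72-hour TTL measured from the initial attempt. Under those assumptions, the last retry lands 56 hours in, and the next daily retry (at 80 hours) falls outside the window.

```python
from datetime import timedelta

# The illustrative schedule above; after the last entry, retries repeat daily.
SCHEDULE = ([timedelta(minutes=m) for m in (1, 2, 4, 8, 15, 30)]
            + [timedelta(hours=h) for h in (1, 2, 4, 8, 16, 24)])
REPEAT = timedelta(days=1)


def retry_offsets(ttl: timedelta = timedelta(hours=72)) -> list:
    """Cumulative offsets (from the initial attempt) of every retry that fits in the TTL."""
    offsets, elapsed, i = [], timedelta(0), 0
    while True:
        step = SCHEDULE[i] if i < len(SCHEDULE) else REPEAT
        elapsed += step
        if elapsed > ttl:
            return offsets
        offsets.append(elapsed)
        i += 1


for n, off in enumerate(retry_offsets(), start=1):
    print(f"retry {n}: +{off}")
```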

Respecting server backpressure

If the receiver sends 429 or 503 with a Retry-After header:

- If Retry-After is a number, wait that many seconds.

- If it is an HTTP-date (e.g., Wed, 21 Oct 2015 07:28:00 GMT), wait until that time.

- Cap with your max_delay to protect your backlog.

When missing, fall back to your backoff strategy.
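
The rules above can be combined into one helper. This is a Python sketch using the standard library's `email.utils.parsedate_to_datetime` to handle the HTTP-date form. The function names are illustrative, and an unparseable header is treated as missing, which is an assumption.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime
from typing import Optional


def retry_after_seconds(value: str, now: Optional[datetime] = None) -> Optional[float]:
    """Seconds to wait per Retry-After (delay-seconds or HTTP-date); None if unparseable."""
    value = value.strip()
    if value.isdecimal():
        return float(value)
    try:
        target = parsedate_to_datetime(value)
    except (TypeError, ValueError):  # Python < 3.10 raises TypeError on garbage
        return None
    if target.tzinfo is None:
        target = target.replace(tzinfo=timezone.utc)  # HTTP-dates are always GMT
    now = now or datetime.now(timezone.utc)
    return max(0.0, (target - now).total_seconds())


def next_wait(retry_after: Optional[str], fallback: float, max_delay: float,
              now: Optional[datetime] = None) -> float:
    """Honor Retry-After when valid, else use the backoff delay; always cap at max_delay."""
    wait = retry_after_seconds(retry_after, now) if retry_after else None
    return min(max_delay, fallback if wait is None else wait)
```

For example, `next_wait("3600", fallback=4.0, max_delay=300.0)` returns 300.0. The receiver asked for an hour, but the cap protects your backlog.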

Idempotency: the antidote to duplicates

Because at-least-once delivery yields duplicates, every webhook must include a stable, unique identifier per event and (ideally) a delivery attempt counter.

Recommended fields:

- event_id: UUID or ULID, unique system-wide.

- event_type and resource identifiers: to scope semantics.

- produced_at: timestamp for observability and ordering.

- signature and timestamp: for authenticity and replay protection.

Receiver best practice:

- Verify the signature before any side effects.

- Check a dedup store (e.g., Redis, Postgres unique index) for event_id. If seen, return 200 and do nothing.

- If new, persist event_id with a TTL (e.g., 7–30 days, aligned with your replay window), enqueue work, and return 2xx quickly.

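This pattern yields exactly-once effects even with duplicate deliveries, provided that recording the event_id and enqueueing the work succeed or fail together. If enqueueing fails, remove the event_id so the sender's retry is not discarded as a duplicate.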
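
The receiver steps are sketched below in Python. The standard library's `sqlite3` stands in for the Postgres unique index mentioned above. The table name, the `enqueue` callable, and the 14-day purge default are illustrative assumptions, and signature verification is assumed to have already succeeded.

```python
import sqlite3
import time


def open_store(path=":memory:"):
    db = sqlite3.connect(path)
    # PRIMARY KEY provides the unique index that turns the insert into a dedup check
    db.execute("CREATE TABLE IF NOT EXISTS seen_events ("
               "event_id TEXT PRIMARY KEY, seen_at REAL NOT NULL)")
    return db


def handle(db, event, enqueue):
    """Return the HTTP status to send; `enqueue` is a hypothetical queue producer."""
    try:
        # Commit the id only if enqueue succeeds; if enqueue raises, the insert rolls
        # back and the error propagates (the caller should answer 5xx so the sender retries).
        with db:
            db.execute("INSERT INTO seen_events (event_id, seen_at) VALUES (?, ?)",
                       (event["event_id"], time.time()))
            enqueue(event)
    except sqlite3.IntegrityError:
        return 200  # duplicate delivery: acknowledge and do nothing
    return 202


def purge(db, ttl_seconds=14 * 24 * 3600):
    """Drop ids older than the dedup TTL; run periodically."""
    with db:
        db.execute("DELETE FROM seen_events WHERE seen_at < ?", (time.time() - ttl_seconds,))
```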

Ordering and concurrency

Global ordering of webhooks is fragile. Prefer per-resource ordering:

- Include a per-aggregate sequence number or version in the payload (e.g., user_version).

- Process events per resource key serially, or detect out-of-order arrivals and wait until missing sequence numbers arrive (with a timeout).

- Document that ordering is best-effort across aggregates.
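
Detecting out-of-order arrivals per resource key amounts to a small reorder buffer. The Python sketch below assumes each event carries hypothetical `key` and `seq` fields, with `seq` starting at 1 per key. It holds early events until the gap fills and drops anything already processed. The wait timeout from the list above is left out for brevity.

```python
from collections import defaultdict


class PerKeySequencer:
    """Releases events for each resource key strictly in sequence order."""

    def __init__(self):
        self.next_seq = defaultdict(lambda: 1)  # next expected seq per key
        self.pending = defaultdict(dict)        # key -> {seq: event} held back

    def accept(self, event):
        """Buffer an event; return the events now ready to process, in order."""
        key, seq = event["key"], event["seq"]
        if seq < self.next_seq[key]:
            return []  # already processed: a duplicate or stale retry
        self.pending[key][seq] = event
        ready = []
        while self.next_seq[key] in self.pending[key]:
            ready.append(self.pending[key].pop(self.next_seq[key]))
            self.next_seq[key] += 1
        return ready
```

Because state is tracked per key, a gap for one user never blocks events for another, which is the point of preferring per-resource ordering over global ordering.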

Handling permanent failures and poison events

- Dead-letter queue (DLQ): when delivery_ttl expires or a non-retryable status is returned, move the event to a DLQ with rich metadata (last status, body hash, headers, attempt count).

- Quarantine: isolate repeated 4xx from the same endpoint to protect your system.

- Replay tooling: allow operators (and customers) to fix endpoints and trigger replays from DLQ or from an event archive, optionally filtered by event_type and date.
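
The following sketches a DLQ record carrying the metadata listed above, together with a replay filter by event_type and date. It is written in Python, and the field and function names are illustrative. Storing only a body hash assumes the replayable payload is kept in a separate event archive.

```python
import hashlib
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class DeadLetter:
    event_id: str
    event_type: str
    endpoint: str
    attempts: int
    last_status: Optional[int]  # None if the last attempt hit a network error
    body_sha256: str            # hash only; payload assumed to live in the event archive
    last_headers: dict = field(default_factory=dict)  # response headers from the final attempt
    dead_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


def to_dead_letter(event_id, event_type, endpoint, body: bytes, attempts, last_status,
                   headers=None):
    return DeadLetter(event_id, event_type, endpoint, attempts, last_status,
                      hashlib.sha256(body).hexdigest(), dict(headers or {}))


def select_for_replay(dlq, event_type=None, since=None, until=None):
    """Pick DLQ entries for an operator-triggered replay, filtered by type and date."""
    return [d for d in dlq
            if (event_type is None or d.event_type == event_type)
            and (since is None or d.dead_at >= since)
            and (until is None or d.dead_at < until)]
```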

Security considerations for retries

- TLS everywhere; enforce a minimum protocol version (e.g., TLS 1.2).

- HMAC signatures: compute over the signed timestamp and raw payload; compare in constant time. Example header: X-Signature: scheme=v1,ts=…,sig=…

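- Timestamp tolerance: reject messages older than a small window (e.g., 5 minutes) to limit replay risk. Because retries can span hours, re-sign every attempt with a fresh timestamp; a signature from the first attempt would fail the tolerance check on later ones.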

- Secret rotation: support multiple active signing secrets.

- IP allow lists and mTLS (for high-trust, private integrations).

Never log full payloads that contain secrets; use hashing/redaction.
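
Signing, timestamp tolerance, and secret rotation fit together as shown below. This is a Python sketch using the example header format `scheme=v1,ts=…,sig=…`. It assumes the MAC is a hex-encoded HMAC-SHA256 over `"<ts>."` followed by the raw body, the same construction the receiver example in this article uses. The function names are illustrative.

```python
import hashlib
import hmac
import time
from typing import Optional, Sequence


def sign(body: bytes, secret: bytes, ts: Optional[int] = None) -> str:
    """Build an X-Signature value; call this on every attempt so ts stays fresh."""
    ts = int(time.time()) if ts is None else ts
    mac = hmac.new(secret, f"{ts}.".encode() + body, hashlib.sha256).hexdigest()
    return f"scheme=v1,ts={ts},sig={mac}"


def verify(body: bytes, header: str, secrets: Sequence[bytes],
           tolerance: int = 300, now: Optional[int] = None) -> bool:
    """Check freshness, then accept a match against any active secret (rotation)."""
    try:
        fields = dict(part.strip().split("=", 1) for part in header.split(","))
        ts = int(fields["ts"])
        sig = fields["sig"].encode()
    except (KeyError, ValueError):
        return False
    now = int(time.time()) if now is None else now
    if fields.get("scheme") != "v1" or abs(now - ts) > tolerance:
        return False
    signed = fields["ts"].encode() + b"." + body
    # compare_digest avoids leaking, via timing, where the two MACs differ
    return any(hmac.compare_digest(hmac.new(s, signed, hashlib.sha256).hexdigest().encode(), sig)
               for s in secrets)
```

During a rotation, the receiver passes both the old and new secrets to `verify`. Once the sender has switched over, the old secret is removed.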

Timeouts, size limits, and payload hygiene

- Sender: short connect and read timeouts (e.g., 5–10s). Waiting 60s per attempt ties up delivery workers and delays retries for every other endpoint.

- Receiver: respond fast (under 1s) after enqueueing. If heavy processing is required, do it asynchronously.

- Limit payload size. For larger events, deliver a reference (event_id) and require the receiver to fetch details via an authenticated API.
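
The sender can enforce the size limit when it builds each payload, as in the Python sketch below. The 256 KiB limit and the `data_omitted` flag are hypothetical. The receiver would then fetch the full event by event_id from your authenticated API.

```python
import json

MAX_PAYLOAD_BYTES = 256 * 1024  # hypothetical limit; tune per platform


def build_payload(event: dict, max_bytes: int = MAX_PAYLOAD_BYTES) -> bytes:
    """Send the full event if small enough, otherwise a thin reference."""
    full = json.dumps(event, separators=(",", ":")).encode()
    if len(full) <= max_bytes:
        return full
    thin = {k: event[k] for k in ("event_id", "event_type", "produced_at") if k in event}
    thin["data_omitted"] = True  # hypothetical flag telling the receiver to fetch details
    return json.dumps(thin, separators=(",", ":")).encode()
```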

Observability and SLOs

Track, alert, and visualize at least:

- Delivery success rate (per endpoint, per tenant, per event_type)

- Time-to-first-success (TTFS) and time-to-deliver (TTD)

- Attempt distribution and backlog size

- Top failure codes and endpoints

- DLQ rate and age of oldest message

Set SLOs, e.g., “99.9% of webhooks are delivered within 10 minutes.” Backpressure policies and retry knobs should be visible in dashboards.
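
Checking the example SLO and per-endpoint success rate reduces to a couple of aggregations over delivery records. The Python sketch below uses a hypothetical `Delivery` record. Pending or dead-lettered events count as misses, so evaluate it over events old enough to have passed the window.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Delivery:
    endpoint: str
    produced_at: float             # epoch seconds
    delivered_at: Optional[float]  # None while pending or dead-lettered
    attempts: int


def slo_report(deliveries, window_s=600.0, target=0.999):
    """Fraction delivered within window_s of production, and whether it meets target."""
    if not deliveries:
        return {"within_window": 1.0, "meets_slo": True}  # vacuously met
    on_time = sum(1 for d in deliveries
                  if d.delivered_at is not None and d.delivered_at - d.produced_at <= window_s)
    ratio = on_time / len(deliveries)
    return {"within_window": ratio, "meets_slo": ratio >= target}


def success_rate_by_endpoint(deliveries):
    """Delivered / total per endpoint, regardless of latency."""
    totals, ok = {}, {}
    for d in deliveries:
        totals[d.endpoint] = totals.get(d.endpoint, 0) + 1
        ok[d.endpoint] = ok.get(d.endpoint, 0) + (d.delivered_at is not None)
    return {e: ok[e] / totals[e] for e in totals}
```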

Example: sender-side retry engine (pseudocode)

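```python
import random
import time
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime

import requests


class RetryPolicy:
    def __init__(self, base=2, max_delay=300, ttl_seconds=72 * 3600, max_attempts=100):
        self.base = base                # seconds
        self.max_delay = max_delay      # cap on any single wait, seconds
        self.ttl_seconds = ttl_seconds  # total delivery window, seconds
        self.max_attempts = max_attempts

    def next_delay(self, attempt):
        # Exponential backoff with full jitter
        exp = min(self.max_delay, self.base * (2 ** (attempt - 1)))
        return random.uniform(0, exp)


def parse_retry_after(value):
    """Seconds to wait per Retry-After (delay-seconds or HTTP-date), or None."""
    value = value.strip()
    if value.isdecimal():
        return float(value)
    try:
        target = parsedate_to_datetime(value)
    except (TypeError, ValueError):
        return None
    if target.tzinfo is None:
        target = target.replace(tzinfo=timezone.utc)
    return max(0.0, (target - datetime.now(timezone.utc)).total_seconds())


def is_retryable(status):
    # 408/409/425/429 and 5xx except 501 (Not Implemented)
    return status in (408, 409, 425, 429) or (500 <= status < 600 and status != 501)


class WebhookDispatcher:
    def __init__(self, policy=None, http_timeout=8):
        self.policy = policy or RetryPolicy()
        self.http_timeout = http_timeout
        self.session = requests.Session()  # reuse connections across attempts

    def deliver(self, endpoint, payload, headers):
        start = time.monotonic()
        for attempt in range(1, self.policy.max_attempts + 1):
            delay = None
            try:
                resp = self.session.post(endpoint, json=payload, headers=headers,
                                         timeout=self.http_timeout)
                if 200 <= resp.status_code < 300:
                    return {'status': 'ok', 'attempt': attempt}
                if not is_retryable(resp.status_code):
                    break  # non-retryable: go straight to the DLQ
                if resp.status_code in (429, 503) and 'Retry-After' in resp.headers:
                    delay = parse_retry_after(resp.headers['Retry-After'])
            except requests.RequestException:
                pass  # network error or timeout: retryable
            if delay is None:
                delay = self.policy.next_delay(attempt)
            delay = min(delay, self.policy.max_delay)  # cap Retry-After as well
            out_of_time = time.monotonic() - start + delay >= self.policy.ttl_seconds
            if attempt == self.policy.max_attempts or out_of_time:
                break
            time.sleep(delay)
        # Move to DLQ with metadata
        return {'status': 'failed', 'attempt': attempt, 'dlq': True}
```

Notes:

- The loop respects max_attempts and a delivery TTL, and it never sleeps when no further attempt is allowed.

- Retry-After, if present and parseable, takes precedence over backoff, but it is still capped at max_delay.

- Non-retryable responses exit early to avoid useless retries. A 429 without Retry-After is still retried with backoff; a 501 is not.

- time.sleep keeps the sketch short. A production dispatcher would normally persist the next attempt time and reschedule through a delay queue instead of blocking a worker.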

Example: receiver-side idempotent handler with signature verification

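```javascript
const crypto = require('crypto');
const express = require('express');
const Redis = require('ioredis');

const app = express();
const redis = new Redis(process.env.REDIS_URL);
const DEDUP_TTL_SECONDS = 60 * 60 * 24 * 14; // 14 days, aligned with the replay window
const TOLERANCE_SECONDS = 300; // 5-minute timestamp tolerance

// Hypothetical placeholder: hand the event to your job queue.
async function enqueueForProcessing(event) {}

app.post('/webhooks', express.raw({ type: '*/*' }), async (req, res) => {
  // express.raw yields a Buffer, so the HMAC covers the exact bytes received
  const body = Buffer.isBuffer(req.body) ? req.body : Buffer.alloc(0);
  if (!verifySignature(body, req.header('X-Signature'), process.env.SIGNING_SECRET)) {
    return res.status(401).send('invalid signature');
  }

  let event;
  try {
    event = JSON.parse(body.toString('utf8'));
  } catch {
    return res.status(400).send('malformed JSON');
  }

  // SET ... NX succeeds only for the first delivery of this event id
  const key = `evt:${event.id}`; // event.id provided by sender
  const wasNew = await redis.set(key, '1', 'EX', DEDUP_TTL_SECONDS, 'NX');
  if (!wasNew) {
    return res.status(200).send('duplicate');
  }

  try {
    await enqueueForProcessing(event); // offload heavy work; ack quickly
  } catch {
    await redis.del(key); // release the key so the sender's retry is not treated as a duplicate
    return res.status(503).send('retry later');
  }
  return res.status(202).send('accepted');
});

// Header format: "scheme=v1,ts=<unix seconds>,sig=<hex HMAC-SHA256 of `${ts}.${body}`>"
function verifySignature(body, header, secret) {
  if (!header || !secret) return false;
  const parts = Object.fromEntries(header.split(',').map((kv) => kv.trim().split('=', 2)));
  const ts = Number.parseInt(parts.ts, 10);
  if (parts.scheme !== 'v1' || !Number.isFinite(ts) || !parts.sig) return false;
  if (Math.abs(Math.floor(Date.now() / 1000) - ts) > TOLERANCE_SECONDS) return false;

  const expected = crypto.createHmac('sha256', secret)
    .update(`${parts.ts}.`)
    .update(body)
    .digest('hex');
  const a = Buffer.from(expected);
  const b = Buffer.from(parts.sig);
  // timingSafeEqual throws on length mismatch, so check lengths first
  return a.length === b.length && crypto.timingSafeEqual(a, b);
}

app.listen(process.env.PORT || 3000);
```

Key points:

- Use express.raw so the signature is verified against the exact bytes received, before any JSON parsing.

- Perform a constant-time comparison, and check lengths first, because crypto.timingSafeEqual throws when the buffers differ in length.

- Return 2xx immediately after enqueueing; do the real work asynchronously. If enqueueing fails, release the dedup key and return 5xx so the sender's retry is processed rather than dropped as a duplicate.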

Rate limiting, fairness, and isolation

- Per-endpoint concurrency caps: avoid overwhelming slow receivers.

- Token buckets per tenant: ensure fairness during spikes.

- Circuit breakers: temporarily pause deliveries to an endpoint after repeated failures, then send probe attempts at longer intervals until it recovers.

- Isolation: shard queues by tenant or endpoint to prevent a single bad actor from clogging the global pipeline.
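
Two of these controls are sketched below in Python: a per-tenant token bucket and a per-endpoint circuit breaker. The class names, parameters, and injectable `clock` are illustrative assumptions. The breaker here counts consecutive failures and lets probes through once a cooldown elapses; a failed probe reopens it.

```python
import time


class TokenBucket:
    """Per-tenant rate limit: `rate` tokens/second, bursts up to `capacity`."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate, self.capacity, self.clock = rate, capacity, clock
        self.tokens, self.updated = float(capacity), clock()

    def try_acquire(self):
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class CircuitBreaker:
    """Per-endpoint breaker: opens after `threshold` consecutive failures."""

    def __init__(self, threshold=5, cooldown=300.0, clock=time.monotonic):
        self.threshold, self.cooldown, self.clock = threshold, cooldown, clock
        self.failures, self.opened_at = 0, None

    def allow(self):
        if self.opened_at is None:
            return True  # closed: deliver normally
        # open: block until the cooldown passes, then allow probe attempts
        return self.clock() - self.opened_at >= self.cooldown

    def record(self, success):
        if success:
            self.failures, self.opened_at = 0, None
        else:
            self.failures += 1
            if self.failures >= self.threshold:
                self.opened_at = self.clock()  # (re)open and restart the cooldown
```

The dispatcher would check `allow()` and `try_acquire()` before each attempt, and requeue the event with a delay when either refuses.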

Payload evolution and schema safety

Retries may cross deployment boundaries. To avoid breaking receivers:

- Maintain backward compatibility; add fields, don’t remove or repurpose.

- Include a schema_version, and keep supporting old versions for at least the delivery TTL, because a retry may carry a payload serialized before a deploy.

- Offer a self-serve replay to backfill receivers after they upgrade.

Operational playbook

- Failure injection: regularly simulate 500s, timeouts, and 429s to validate backoff and jitter.

- Blackhole tests: drop all responses and ensure DLQ + alerts kick in.

- Chaos on schedules: randomize cron-driven batch sends to avoid synchronized surges.

- Runbooks: document how to promote DLQ items back to the live queue and how to toggle per-endpoint retry overrides.

Checklist for production readiness

Sender side:

- Exponential backoff with jitter and max_delay

- Respect Retry-After and cap it

- Short HTTP timeouts with connection reuse

- Per-endpoint concurrency and circuit breaker

- Delivery TTL and DLQ with replay tools

- Structured logs with event_id and attempt

- Metrics: success rate, TTD, backlog, DLQ

Receiver side:

- Signature verification with timestamp tolerance

- Idempotent processing via event_id store

- Fast 2xx after enqueue; async processing

- Clear 4xx vs 5xx responses

- Rate-limit and backpressure (429 + Retry-After)

- Observability: dedup hits, processing lag

Conclusion

Reliable webhook delivery is a systems problem: you must treat the internet as unreliable, plan for duplicates, and design for backpressure. A sound retry strategy—exponential backoff with jitter, respect for Retry-After, tight timeouts—paired with idempotent receivers, DLQs, and strong observability turns best-effort HTTP into dependable event delivery. Start with at-least-once semantics, make effects idempotent, and your webhooks will remain resilient even when everything else is failing.