gRPC vs REST: A Practical Performance Comparison and Tuning Guide

gRPC vs REST performance: how they differ, what to measure, when each wins, and tuning tips to hit your latency, throughput, and cost goals.


Overview

API performance decisions ripple across latency, throughput, infrastructure cost, and developer experience. This article compares gRPC and REST from a performance perspective: why one is often faster, when that advantage disappears, what to measure, and how to tune both stacks for real-world workloads.

Quick definitions

- REST: Resource-oriented APIs, typically over HTTP/1.1 or HTTP/2, most often using JSON. Simple, ubiquitous, cache-friendly, browser-native.

- gRPC: RPC framework over HTTP/2 using Protocol Buffers (binary). Strongly typed contracts, code generation, and built‑in streaming.

Both can be secured with TLS, load balanced, observed, and automated. Performance differences stem primarily from serialization format and transport behavior.

Why performance differs

- Serialization format

- gRPC uses Protocol Buffers (varint encoding, compact binary). Smaller payloads → fewer bytes on the wire → less time in network and fewer CPU cycles parsing.

- REST commonly uses JSON (text, human-readable). Larger payloads and heavier parse costs, though modern JSON libraries are highly optimized and compression can narrow the gap.

- Transport behavior

- HTTP/2 multiplexing: gRPC requires HTTP/2, which multiplexes many streams over one TCP connection and applies HPACK header compression. This cuts head-of-line blocking at the HTTP layer and reduces connection churn.

- REST commonly runs on HTTP/1.1 keep‑alive. With good connection pooling, it performs well; with HTTP/2 enabled, REST narrows the transport advantage.

- Streaming patterns

- gRPC supports unary, server-streaming, client-streaming, and bidirectional streaming. For chatty or long‑lived interactions, streaming eliminates per-request overhead and amortizes handshakes.

- REST can stream (chunked responses, SSE, WebSockets), but streaming is not part of a standardized protocol and contract the way it is in gRPC, so implementations vary.

- Tooling and code generation

- Generated gRPC stubs and message classes avoid runtime reflection, so they tend to serialize efficiently and allocate less than reflection-based JSON mappers.

- REST frameworks range from lightweight to heavy; middleware can add measurable overhead if not tuned.
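
To make the serialization gap concrete, here is a minimal sketch that hand-encodes the `EchoRequest{message: "hello"}` message used later in this article and compares it with the equivalent compact JSON. The helper names (`encode_varint`, `encode_string_field`) are illustrative, not part of any Protobuf library; real code would use generated classes.

```python
import json


def encode_varint(value: int) -> bytes:
    """Encode a non-negative integer as a Protocol Buffers base-128 varint."""
    if value < 0:
        raise ValueError("this sketch handles non-negative integers only")
    out = bytearray()
    while True:
        low7 = value & 0x7F
        value >>= 7
        if value:
            out.append(low7 | 0x80)  # high bit set: more bytes follow
        else:
            out.append(low7)
            return bytes(out)


def encode_string_field(field_number: int, text: str) -> bytes:
    """Encode one length-delimited (wire type 2) string field."""
    payload = text.encode("utf-8")
    tag = encode_varint((field_number << 3) | 2)
    return tag + encode_varint(len(payload)) + payload


proto_bytes = encode_string_field(1, "hello")  # EchoRequest{message: "hello"}
json_bytes = json.dumps({"message": "hello"}, separators=(",", ":")).encode("utf-8")

print(len(proto_bytes), proto_bytes.hex())  # 7 0a0568656c6c6f
print(len(json_bytes))                      # 19
```

The field name never appears on the wire in Protobuf, only its number, which is where most of the saving comes from for small messages. Both figures exclude framing: gRPC adds a 5-byte prefix per message, and HTTP headers apply to both protocols.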

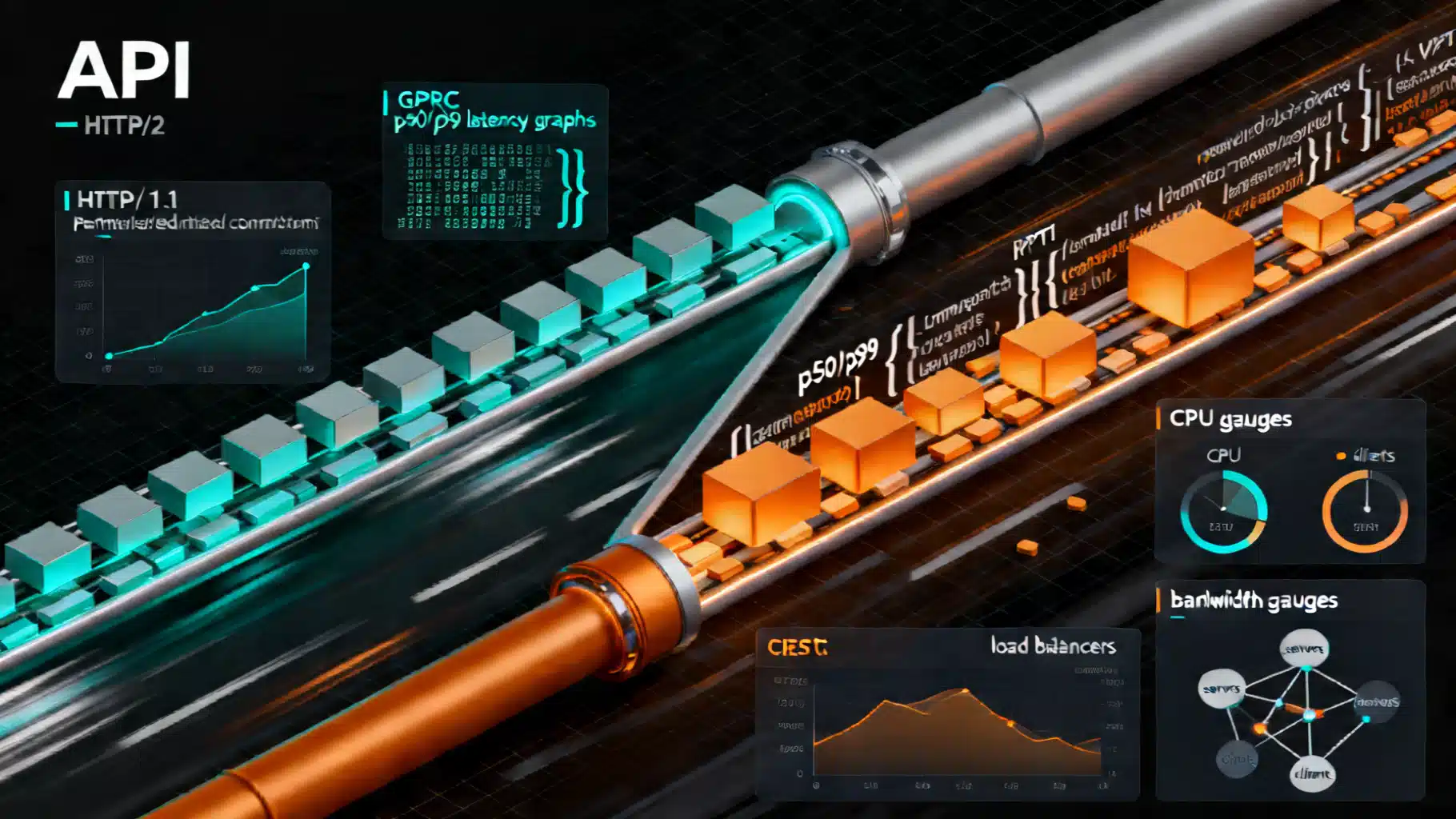

Performance dimensions that matter

- Latency: p50, p90, p99 (tail latency). The binary format and fewer bytes often give gRPC lower tail latency at scale.

- Throughput (RPS or streams): gRPC typically handles more concurrent calls per connection via HTTP/2 multiplexing.

- CPU: JSON parse/serialize cost shows up quickly at high QPS or with large payloads; Protobuf reduces CPU.

- Network bytes on wire: Protobuf is smaller uncompressed; JSON+gzip can approach Protobuf sizes for large payloads.

- Connection management: Long‑lived HTTP/2 connections (gRPC) avoid frequent TCP+TLS handshakes; REST must rely on aggressive keep‑alive/pooling.

- Cold starts: Serverless or autoscaling environments pay a penalty regardless of protocol; long‑lived gRPC streams can hide some costs after warmup.
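
Since the latency bullet leans on percentiles, the sketch below shows one common way to compute them (the nearest-rank method) and why the mean hides tails. The sample data is hypothetical, and the function names are illustrative.

```python
import math


def percentile(samples_ms, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not samples_ms:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples_ms)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def summarize(samples_ms):
    """Return the three percentiles this article recommends tracking."""
    return {name: percentile(samples_ms, p) for name, p in (("p50", 50), ("p90", 90), ("p99", 99))}


# Hypothetical trial: 98 fast calls and 2 slow ones (milliseconds).
latencies = [2.0] * 98 + [250.0] * 2
print(summarize(latencies))             # {'p50': 2.0, 'p90': 2.0, 'p99': 250.0}
print(sum(latencies) / len(latencies))  # 6.96
```

The mean (6.96 ms) describes no request that actually happened, while p99 exposes the 250 ms outliers. Benchmark tools often use interpolating or histogram-based estimators instead, so compare numbers only when they come from the same method.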

When gRPC is usually faster

- High QPS with small to medium messages (≤ tens of KB)

- Chatty microservices where each user operation triggers many RPCs

- Real‑time and streaming use cases (telemetry, chat, trading, IoT)

- Mobile/edge where bytes on the wire translate directly to user‑perceived latency or battery

When REST can match or beat gRPC

- Public APIs fronted by CDNs: HTTP caching, ETags, and edge presence can dwarf per‑request protocol overhead.

- Large, compressible payloads: JSON with gzip/br can approach Protobuf sizes; transport overhead becomes less dominant.

- Browser clients: REST is native; gRPC-Web adds a proxy hop that can erode advantages.

- Simple, low‑QPS scenarios: Differences may be negligible; developer/consumer familiarity can dominate.
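
The caching advantage in the first bullet rests on conditional requests. A minimal sketch of the server-side logic, assuming the ETag is derived from a hash of the body and that a 60-second `max-age` is acceptable (both are illustrative choices, not recommendations):

```python
import hashlib
from typing import Optional


def make_etag(body: bytes) -> str:
    """Derive an ETag from the response body (quoted, as HTTP requires)."""
    return '"' + hashlib.sha256(body).hexdigest()[:16] + '"'


def conditional_get(body: bytes, if_none_match: Optional[str]):
    """Return (status, headers, payload) for a GET, honoring If-None-Match."""
    etag = make_etag(body)
    headers = {"ETag": etag, "Cache-Control": "public, max-age=60"}
    if if_none_match is not None:
        candidates = [c.strip() for c in if_none_match.split(",")]
        # If-None-Match uses weak comparison, so a W/ prefix is ignored.
        candidates = [c[2:] if c.startswith("W/") else c for c in candidates]
        if "*" in candidates or etag in candidates:
            return 304, headers, b""  # client copy is current: send no body
    return 200, headers, body


body = b'{"message":"hello"}'
status, headers, _ = conditional_get(body, None)
print(status)                                           # 200
print(conditional_get(body, headers["ETag"])[0])        # 304
```

A 304 carries no payload and can often be answered by a CDN or intermediate cache without reaching the origin, which is why a cache hit outweighs any serialization saving.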

Expected qualitative results (what you’ll likely see)

- Small payloads, high concurrency: gRPC shows lower p50 and smaller p99 tails; higher RPS at the same CPU.

- Medium payloads (10–200 KB): gRPC still leads; JSON+gzip narrows the byte gap but CPU parse cost remains.

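- Very large payloads (MBs): Disk and network I/O and compression dominate; differences shrink. Throughput tuning and backpressure matter more than protocol choice. Most gRPC implementations also cap received messages at 4 MB by default, so raise the limit or stream in chunks.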

- WAN with middleboxes: Poor HTTP/2 support in a proxy or LB can erase gRPC gains. Validate your path end‑to‑end.
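
To check the claim that gzip narrows the byte gap for medium payloads, a quick measurement like the following can help. The record shape is hypothetical; substitute a real response from your API, since compression ratios depend heavily on how repetitive the data is.

```python
import gzip
import json

# Hypothetical payload: 2,000 similar records, typical of a list endpoint.
records = [
    {"id": i, "status": "active", "region": "eu-west-1", "score": i % 100}
    for i in range(2000)
]
raw = json.dumps(records, separators=(",", ":")).encode("utf-8")
compressed = gzip.compress(raw, compresslevel=6)

print(f"raw JSON:  {len(raw):>7} bytes")
print(f"gzip JSON: {len(compressed):>7} bytes")
print(f"ratio:     {len(compressed) / len(raw):.2%}")
```

Repeated keys and values compress very well, which is why the wire-size gap narrows. The compress and parse work still costs CPU on both ends, so measure that alongside the byte counts.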

A fair benchmarking methodology

To avoid misleading conclusions:

- Use production‑like runtimes and versions for both stacks.

- Warm up the system (JIT, caches, connection pools) for at least several minutes.

- Measure p50/p90/p99 latencies, throughput, CPU, memory, GC pauses, and bytes on the wire.

- Test over realistic networks (intra‑AZ, cross‑AZ/region, and through your actual load balancer/proxies).

- Fix one variable at a time: message size, concurrency, connection reuse, compression.

- Run long enough to capture tail behavior and periodic GC or autoscaler effects (10–30 minutes per trial).
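
The warm-up and measurement steps above can be sketched as a small harness. This is an illustrative closed-loop driver, not a replacement for a real load tool; `run_trial` and its parameters are names chosen for this example.

```python
import time


def run_trial(call, warmup_calls, measured_calls, clock=time.perf_counter):
    """Invoke call() repeatedly; return per-call latencies in ms, excluding warm-up."""
    for _ in range(warmup_calls):
        call()  # results discarded: lets JITs, caches, and pools settle
    latencies_ms = []
    for _ in range(measured_calls):
        start = clock()
        call()
        latencies_ms.append((clock() - start) * 1000.0)
    return latencies_ms


# Stand-in workload; replace the lambda with one gRPC or REST request.
samples = run_trial(lambda: sum(range(1000)), warmup_calls=100, measured_calls=1000)
print(len(samples), max(samples))
```

Because each call waits for the previous one, a slow response delays later requests and hides queueing delay from the results. Fixed-rate tools such as wrk2 exist to avoid that bias, so treat this loop as a smoke test only.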

Minimal test services

gRPC (Proto)

    syntax = "proto3";

    package echo;

    message EchoRequest { string message = 1; }
    message EchoResponse { string message = 1; }

    service Echo {
      rpc Say(EchoRequest) returns (EchoResponse);
      rpc Stream(EchoRequest) returns (stream EchoResponse);
    }

REST (JSON)

    POST /echo HTTP/1.1
    Host: api.example.com
    Content-Type: application/json
    Content-Length: 19

    {"message":"hello"}
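
For local experiments, the REST side of the echo service can be stood up with the Python standard library alone. This is a minimal sketch: `echo_payload`, `EchoHandler`, `serve`, and port 8080 are assumptions made for illustration, and `http.server` is not a production server, so numbers from it say more about Python than about REST.

```python
import json
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


def echo_payload(body: bytes) -> bytes:
    """Parse {"message": ...} and return the same message as compact JSON."""
    message = json.loads(body)["message"]
    return json.dumps({"message": message}, separators=(",", ":")).encode("utf-8")


class EchoHandler(BaseHTTPRequestHandler):
    protocol_version = "HTTP/1.1"  # allows keep-alive; every reply sets Content-Length

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        raw = self.rfile.read(length)
        if self.path != "/echo":
            self._reply(404, b'{"error":"not found"}')
            return
        try:
            self._reply(200, echo_payload(raw))
        except (ValueError, KeyError, TypeError):
            self._reply(400, b'{"error":"bad request"}')

    def _reply(self, status, payload):
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)

    def log_message(self, format, *args):
        pass  # silence per-request logging so it does not skew measurements


def serve(port: int = 8080) -> None:
    """Block forever, serving POST /echo on localhost."""
    ThreadingHTTPServer(("127.0.0.1", port), EchoHandler).serve_forever()
```

Calling `serve()` starts the listener; the function is left uncalled here so the module can be imported without blocking.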

Driving load

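- gRPC: ghz or fortio with persistent channels and TLS enabled (vegeta speaks HTTP only). The example below targets a local plaintext server, hence `--insecure`; drop that flag to benchmark over TLS:

      ghz --insecure --proto echo.proto --call echo.Echo.Say \
        -d '{"message":"hello"}' -c 200 -n 200000 localhost:50051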

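- REST: wrk, wrk2, or vegeta with TLS on and keep-alive enabled. wrk and wrk2 speak only HTTP/1.1; use vegeta or h2load to exercise HTTP/2. Example with wrk2 (its binary is also named `wrk`) at a fixed rate, where post.lua sets the POST method, headers, and body:

      wrk -t8 -c200 -d5m -R20000 -s post.lua https://api.example.com/echo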

- Capture bytes on the wire with tcpdump/pcap or cloud LB metrics; correlate with process CPU and memory.

Tuning gRPC for performance

- Reuse channels: Create one channel per destination and reuse it; avoid creating/destroying per call (expensive TLS+HTTP/2 setup).

- Concurrency: Increase max concurrent streams per connection if client and server support it; watch server thread/worker limits.

- Flow control: Tune HTTP/2 window sizes for high‑bandwidth, high‑latency links.

- Message design: Prefer smaller, flatter messages; batch small items into a single RPC or use streaming.

- Compression: Enable per-RPC compression for medium/large payloads; avoid it for tiny messages, where the CPU cost exceeds the byte savings.

- Deadlines and retries: Always set deadlines; use client‑side retries with exponential backoff for idempotent calls only.

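- Avoid reflection on hot paths: Serialize with generated message classes rather than reflection-based or dynamic message APIs. (The gRPC server reflection service is a separate discovery feature; it adds no per-call cost, so whether to expose it in production is a security decision rather than a performance one.)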

- Pooled allocators: In languages like C++/Go/Java, prefer pooled buffers and avoid per‑call allocations.
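
The flow-control bullet is easiest to reason about through the bandwidth-delay product: the number of bytes that must be in flight to keep a link full. The sketch below works through a hypothetical 1 Gbit/s link with a 50 ms round trip; the function names are illustrative.

```python
def bdp_bytes(bandwidth_bits_per_s: float, rtt_ms: float) -> float:
    """Bandwidth-delay product: bytes that must be in flight to fill the link."""
    return bandwidth_bits_per_s * rtt_ms / 8 / 1000


def max_throughput_bits_per_s(window_bytes: int, rtt_ms: float) -> float:
    """Upper bound on throughput when at most window_bytes can be unacknowledged."""
    return window_bytes * 8 * 1000 / rtt_ms


HTTP2_DEFAULT_WINDOW = 65_535  # initial flow-control window defined by the HTTP/2 spec

# Hypothetical link: 1 Gbit/s, 50 ms round trip.
print(bdp_bytes(1e9, 50))  # 6250000.0 (about 6.25 MB)
print(f"{max_throughput_bits_per_s(HTTP2_DEFAULT_WINDOW, 50) / 1e6:.1f} Mbit/s")  # 10.5 Mbit/s
```

With the spec's initial window, a single stream on this link would top out near 10.5 Mbit/s regardless of available bandwidth. Many gRPC implementations already raise this default or adjust it dynamically, so check what yours does before overriding it.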

Tuning REST for performance

- HTTP/2: Enable it end‑to‑end (LB, service). It narrows many transport‑level gaps.

- Keep‑alive and pooling: Aggressive client pooling minimizes handshakes; set sensible idle timeouts.

- Compression: Gzip or Brotli for medium/large payloads; negotiate it through the Accept-Encoding and Content-Encoding headers.

- JSON performance: Use fast JSON libraries and preallocated buffers; avoid reflection-heavy mappers on hot paths.

- Payload design: Remove redundant fields, adopt sparse responses (field masks), or consider compact formats like CBOR/MessagePack when both sides agree.

- Caching: Use ETags, Cache-Control, and content fingerprinting. A cache hit beats any protocol micro-optimization.

- Idempotency: Implement idempotency keys for safe retries at clients/LBs.
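
The idempotency bullet can be illustrated with a small in-memory store: the client generates a key once, sends it with every retry (commonly in a request header), and the server replays the first response instead of repeating the side effect. `IdempotencyStore` and `charge_card` are hypothetical names, and a real service would persist keys and expire them.

```python
import threading
import uuid


class IdempotencyStore:
    """Remember the first response per key so a retried request is not re-executed."""

    def __init__(self):
        self._lock = threading.Lock()
        self._responses = {}

    def execute(self, key, operation):
        # Holding one lock while operation() runs serializes all requests;
        # a real service would lock per key and expire old entries.
        with self._lock:
            if key not in self._responses:
                self._responses[key] = operation()
            return self._responses[key]


store = IdempotencyStore()
charges = []


def charge_card():
    """Stand-in for a non-idempotent side effect."""
    charges.append(100)
    return {"status": "charged", "charge_number": len(charges)}


key = str(uuid.uuid4())  # generated once by the client, reused on every retry
first = store.execute(key, charge_card)
retry = store.execute(key, charge_card)  # e.g. after a timeout on the first attempt
print(first == retry, len(charges))  # True 1
```

If the operation raises, nothing is stored, so a later retry with the same key runs it again. That is usually the behavior you want for transient failures.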

Operational factors that skew results

- Load balancers and proxies: Ensure HTTP/2 support for gRPC, otherwise you may see connection downgrades or stream resets.

- mTLS and certificate rotation: Handshake cost matters under connection churn; long‑lived connections mitigate it.

- Autoscaling: Scale on CPU and queue depth/tail latency, not just average RPS. Warm pools reduce cold‑start spikes.

- Observability overhead: Excessive logging/metrics on every call can dominate latency more than protocol choice; sample and aggregate wisely.

- Head‑of‑line blocking: TCP‑level HOL remains for both protocols; packet loss on high‑latency links can inflate p99s regardless of serialization.

Cost implications

- CPU savings: Protobuf's lower parse/serialize overhead can cut CPU noticeably at scale. The size of the saving depends on payload shape and language runtime, so measure it rather than assuming a figure.

- Network egress: Smaller messages reduce egress fees; savings accumulate for chatty microservice meshes and mobile clients.

- Infrastructure: Higher per‑node throughput can mean fewer instances or slower autoscale growth under spikes.

Decision guide

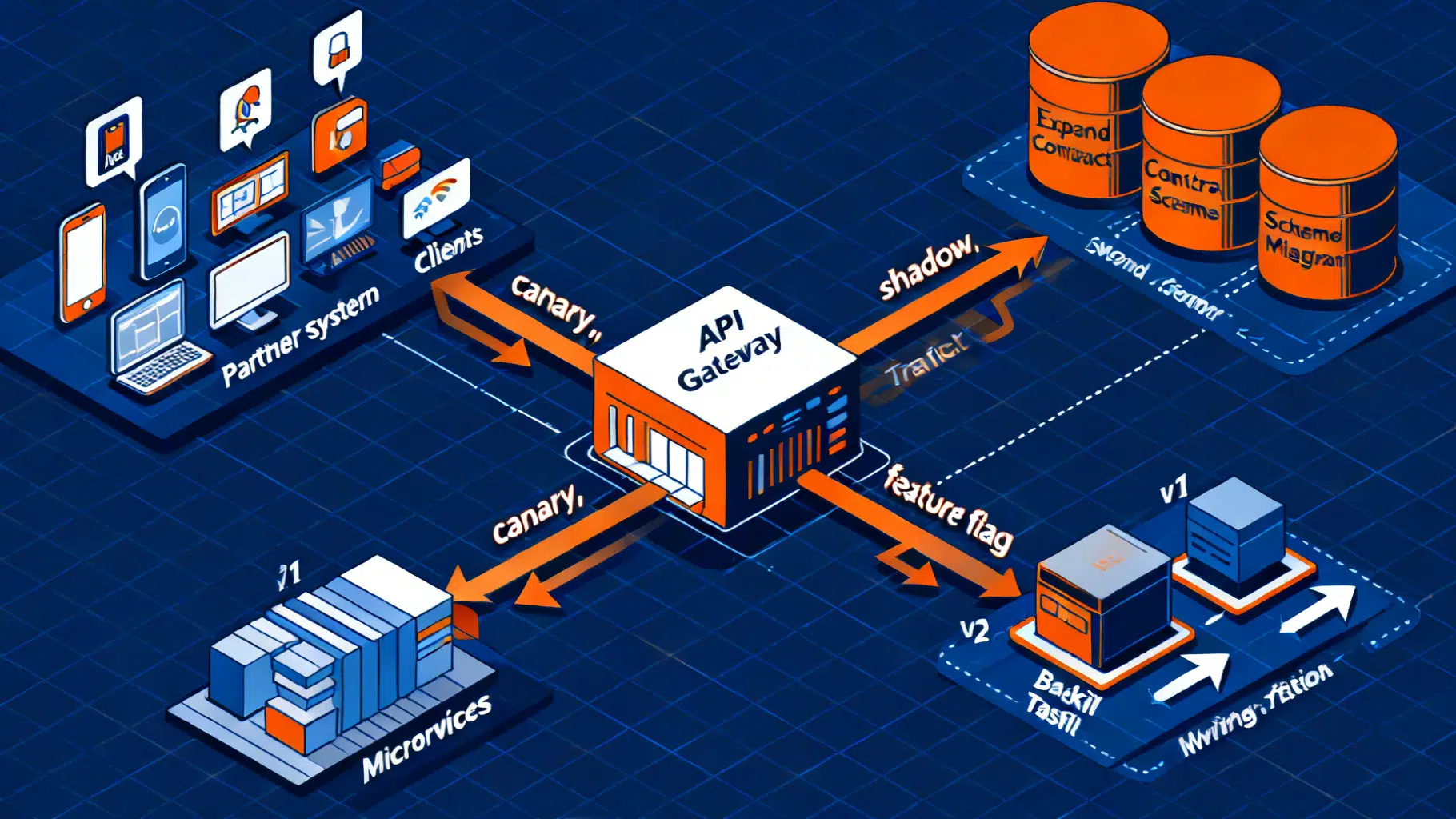

Choose gRPC if:

- You control both client and server and can share schemas.

- You need streaming or very high call volume between services.

- You prioritize strong typing and codegen across polyglot stacks.

Choose REST if:

- You target browsers or third‑party developers first.

- You benefit from CDN caching and public internet reach.

- Your payloads are large and compressible, and latency budgets are modest.

Often, the pragmatic answer is “both”: internal services speak gRPC; a public REST facade (or gRPC‑Gateway) exposes a JSON API for external consumers.

Common pitfalls to avoid

- Creating a new gRPC channel per request (handshake and TCP slow‑start crush throughput).

- Benchmarking without TLS when production requires it.

- Testing in a lab without the same load balancer/proxy path as production.

- Ignoring tail latency (p99+). Users feel tails, not medians.

- Comparing gRPC over HTTP/2 to REST over HTTP/1.1 with short keep‑alive timeouts—enable HTTP/2 and pooling for a fair fight.
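
The first pitfall is usually avoided with a small per-target cache so that every caller shares one channel. The sketch below is library-agnostic: `ChannelCache` is a hypothetical helper, and `factory` is assumed to be any callable that takes a target string and returns a channel (with grpcio, for example, a function wrapping `grpc.secure_channel`).

```python
import threading


class ChannelCache:
    """Create at most one channel per target and hand the same one to every caller."""

    def __init__(self, factory):
        self._factory = factory  # assumption: callable(target) -> channel
        self._lock = threading.Lock()
        self._channels = {}

    def get(self, target):
        with self._lock:
            channel = self._channels.get(target)
            if channel is None:
                channel = self._factory(target)  # pays connection setup once
                self._channels[target] = channel
            return channel


# Stand-in factory that records how many channels were created.
created = []


def fake_channel(target):
    created.append(target)
    return object()


cache = ChannelCache(fake_channel)
a = cache.get("orders:50051")
b = cache.get("orders:50051")
print(a is b, len(created))  # True 1
```

Many gRPC stacks expect channels to be long-lived and shared across threads, which is what makes this pattern safe; confirm that for your language's implementation.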

Example outcomes to look for (sanity checks)

- For 1–2 KB messages at high concurrency, you should see gRPC lower p99 by a noticeable margin and consume less CPU per request.

- Turning on JSON gzip for 50–200 KB payloads should reduce network bytes substantially and narrow the gap, while CPU cost remains higher than Protobuf.

- Introducing a proxy that downgrades HTTP/2 will often erase gRPC’s edge; fix the proxy before tuning code.

Checklist before you decide

- Run a week of A/B traffic with production inputs, measuring p50/p90/p99, errors, retries, CPU, memory, GC, and egress.

- Validate end‑to‑end HTTP/2 for gRPC and pooling/timeouts for REST.

- Confirm observability overhead is sampled and batched.

- Benchmark with and without compression at realistic payload sizes.

- Verify retry semantics and idempotency for both clients.

Conclusion

gRPC’s binary serialization, code generation, and mandatory HTTP/2 typically deliver lower latency, higher throughput, and lower CPU—especially for small/medium messages and streaming workloads. REST can match or surpass it when HTTP/2, compression, and caching are applied, particularly for large payloads and public, browser‑facing APIs. Measure in your environment, tune both sides, and let p99 and cost per successful call—not assumptions—drive the decision.

Related Posts

The definitive guide to API resource naming conventions

Clear rules for naming API resources, fields, and events across REST, GraphQL, and gRPC—with examples, pitfalls, and a practical checklist.

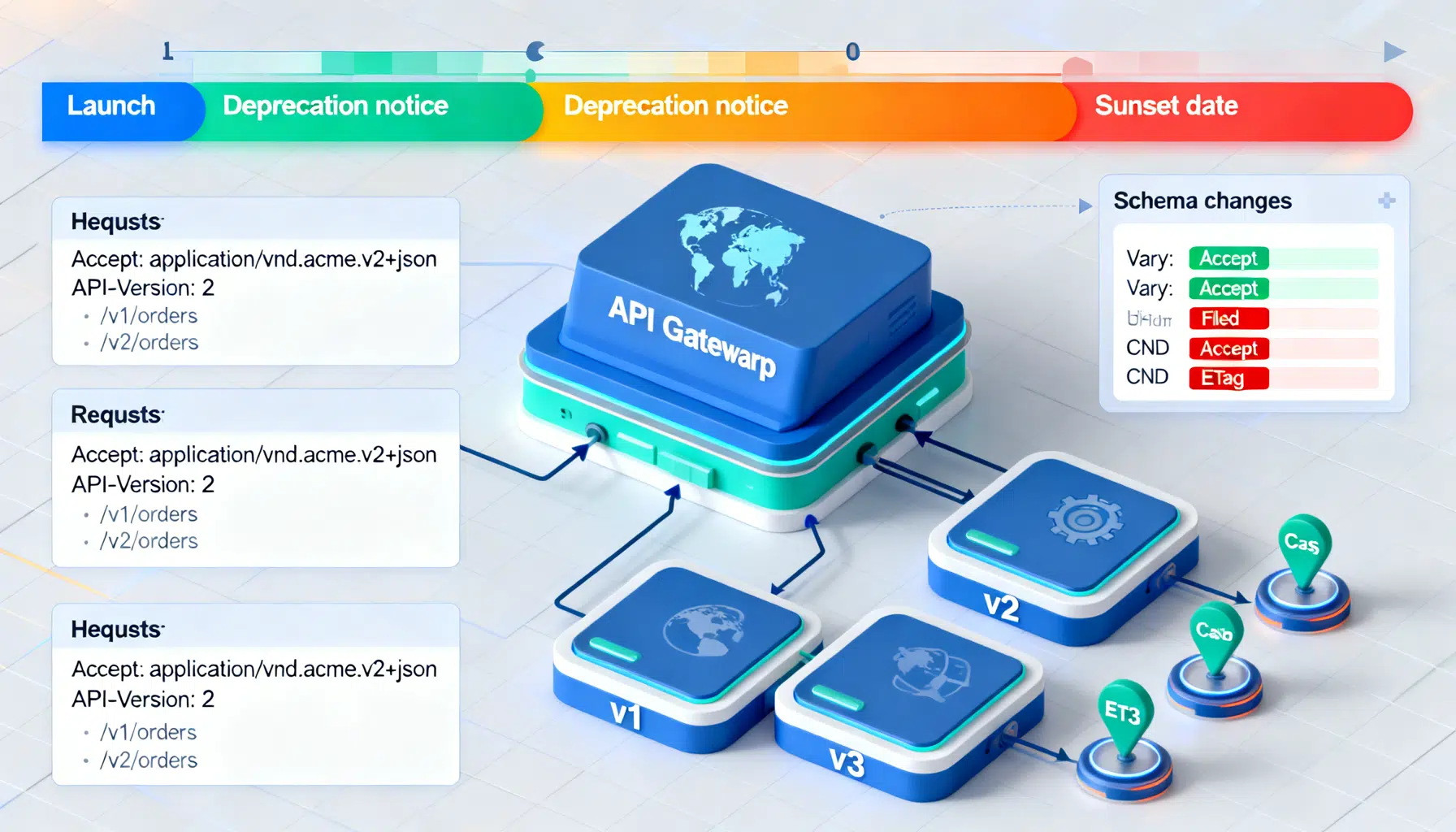

API Backward Compatibility Strategies: Designing Change Without Breaking Clients

Practical strategies to keep APIs backward compatible—versioning, additive changes, deprecation, rollout, and testing for REST, GraphQL, and gRPC.

The API Versioning Playbook: Best Practices, Patterns, and Pitfalls

A practical playbook for API versioning: strategies, SemVer, backward compatibility, deprecation, testing, and rollout patterns for stable, evolving APIs.