Vector Search vs. Keyword Search: A Practical Guide for 2026

A practical 2026 guide comparing vector vs. keyword search: principles, pros/cons, costs, evaluation, and when to choose hybrid—with code snippets.


Overview

Vector search and keyword search solve the same problem—finding the most relevant items—but they do it with very different lenses. Keyword search relies on lexical overlap and efficient inverted indexes. Vector search represents text (and other modalities) as high‑dimensional vectors so that “meaning” can be matched even when the exact words differ. In modern systems—especially retrieval‑augmented generation (RAG), chatbots, product discovery, and enterprise knowledge search—the best results often come from combining both.

This article explains how each approach works, where each shines, how to evaluate them, and how to choose (or blend) them in production.

Mental model

- Keyword search: “Find documents that share the same words.”

- Vector search: “Find documents close in meaning, even if they use different words.”

- Hybrid search: “Use both signals and let them vote.”

How keyword search works (in brief)

Keyword systems index terms to the documents that contain them.

- Tokenization and normalization: break text into terms; apply lowercasing, stopword removal, stemming/lemmatization as needed.

- Inverted index: a mapping from term → postings list of document IDs and positions.

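- Scoring: classic schemes like TF‑IDF and BM25 reward query terms that occur often in a document but rarely across the corpus; BM25 adds term‑frequency saturation and document‑length normalization. Phrase/proximity queries and field boosts improve precision.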

- Filtering and ranking: boolean filters (e.g., category:finance) and field boosts control precision, while synonyms and typo‑tolerant matching (e.g., edit distance) widen recall.
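To make the inverted index and scoring steps concrete, here is a minimal in‑memory BM25 sketch. It assumes lowercase‑and‑split tokenization only (no stemming or stopword removal), the common defaults k1=1.2 and b=0.75, and a Lucene‑style IDF; real engines add analyzers, field boosts, and compressed postings.

```python
import math
from collections import Counter, defaultdict


def tokenize(text):
    # Minimal normalization: lowercase + whitespace split (no stemming/stopwords)
    return text.lower().split()


class BM25Index:
    def __init__(self, docs, k1=1.2, b=0.75):
        self.k1, self.b = k1, b
        self.doc_len = []
        self.postings = defaultdict(dict)  # inverted index: term -> {doc_id: term frequency}
        for doc_id, text in enumerate(docs):
            tokens = tokenize(text)
            self.doc_len.append(len(tokens))
            for term, tf in Counter(tokens).items():
                self.postings[term][doc_id] = tf
        self.n_docs = len(docs)
        self.avg_len = sum(self.doc_len) / max(self.n_docs, 1)

    def idf(self, term):
        # Lucene-style IDF: rare terms weigh more, and the value never goes negative
        df = len(self.postings.get(term, {}))
        return math.log(1 + (self.n_docs - df + 0.5) / (df + 0.5))

    def search(self, query, k=10):
        scores = defaultdict(float)
        for term in tokenize(query):
            # Only documents on this term's postings list are scored
            for doc_id, tf in self.postings.get(term, {}).items():
                norm = 1 - self.b + self.b * self.doc_len[doc_id] / self.avg_len
                scores[doc_id] += self.idf(term) * tf * (self.k1 + 1) / (tf + self.k1 * norm)
        return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)[:k]


index = BM25Index(["the sofa is red", "a red couch", "router keeps disconnecting"])
print(index.search("red sofa"))  # doc 0 first, then doc 1; "couch" never matches "sofa"
```

The last line also illustrates the vocabulary‑mismatch limitation: the couch document is retrieved only because it happens to share the word "red".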

Strengths:

- Fast, memory‑efficient at large scale.

- Transparent: you can explain why a document matched.

- Easy to tune with boosts, synonyms, and business rules.

Limitations:

- Vocabulary mismatch: “couch” vs “sofa.”

- Struggles with intent and paraphrase.

- Multilingual/zero‑shot use cases are harder without extensive dictionaries.

How vector search works (in brief)

Vector systems turn text (or images, audio, code) into dense numerical vectors (embeddings) so that semantically similar items are near each other.

- Embeddings: models map inputs to d‑dimensional vectors (e.g., d=384–1536). Closeness is typically measured with cosine similarity, inner product, or Euclidean (L2) distance.

- Indexing for speed: approximate nearest neighbor (ANN) structures like HNSW graphs, IVF (inverted files), and product quantization (PQ) enable sub‑linear search with tunable recall/latency trade‑offs.

- Multimodal support: the same machinery can index images or audio if you have corresponding encoders.
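Before reaching for an ANN index, it helps to see the exact computation it approximates. This brute‑force sketch uses NumPy and assumes embeddings are already computed (random vectors stand in for them here): rows are normalized to unit length so one matrix‑vector product yields cosine similarity, then the top k are selected. It costs O(N·d) per query, which is exactly what HNSW, IVF, and PQ are built to avoid, but it is also the ground truth you would use to measure an ANN index's recall.

```python
import numpy as np


def normalize(x):
    # Scale each row (or a single vector) to unit L2 norm: inner product == cosine
    norms = np.linalg.norm(x, axis=-1, keepdims=True)
    return x / np.maximum(norms, 1e-12)


def exact_knn(doc_vecs, query_vec, k=10):
    """Exact (brute-force) top-k by cosine similarity."""
    sims = normalize(doc_vecs) @ normalize(query_vec)  # shape: (N,)
    k = min(k, len(sims))
    top = np.argpartition(-sims, k - 1)[:k]  # unordered top-k in linear time
    top = top[np.argsort(-sims[top])]        # sort only those k
    return top, sims[top]


rng = np.random.default_rng(0)
docs = rng.normal(size=(1000, 384)).astype(np.float32)  # stand-in embeddings
query = docs[42] + 0.01 * rng.normal(size=384).astype(np.float32)
ids, scores = exact_knn(docs, query, k=5)
print(ids[0])  # 42: the vector the query was derived from
```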

Strengths:

- Captures synonyms, paraphrases, and context.

- Works across languages and modalities.

- Robust for long or short queries where lexical overlap is weak.

Limitations:

- Heavier memory/compute footprint; tuning recall vs latency is non‑trivial.

- Less transparent scoring; requires monitoring and evaluation to build trust.

- Embeddings from different models are not comparable, so changing or upgrading the model means re‑embedding and re‑indexing the whole corpus.

When keyword wins, when vector wins

Choose keyword search if:

- Queries contain distinctive terms, codes, or exact strings (e.g., part numbers, error IDs, legal citations).

- You need strict boolean logic, fielded queries, and explainability.

- You’re resource‑constrained and need predictable performance with modest hardware.

Choose vector search if:

- Users phrase the same intent many ways (customer support, knowledge search, FAQs).

- Multilingual search or cross‑domain generalization matters.

- You’re powering RAG for LLMs and need semantically rich context.

In practice, hybrid is the default for product‑grade relevance.

Hybrid patterns that work

- Score blending: run BM25 and ANN separately; normalize scores (e.g., z‑score or min‑max) and blend: final = α·bm25 + (1−α)·vector.

- Reciprocal Rank Fusion (RRF): combine top‑k lists by rank, simple and strong.

- Two‑stage retrieval + re‑ranking: ANN/BM25 fetch candidates; a cross‑encoder or LLM re‑ranks a small set (e.g., top 50 → top 5).

- Sparse + dense fusion: combine BM25 with learned sparse representations (e.g., SPLADE‑like) plus dense vectors; covers both exact terms and semantics.

- Multi‑vector per doc: split documents into passages or use late‑interaction models (e.g., ColBERT‑style) to improve matching granularity.
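Reciprocal Rank Fusion is short enough to show in full. This sketch follows the standard formulation, score(d) = Σ 1/(k + rank_i(d)) summed over every input list that contains d, with k = 60 as the commonly used constant; the document IDs are hypothetical. Because only ranks are used, no score normalization is needed, which is why RRF is robust when BM25 and cosine scores live on different scales.

```python
from collections import defaultdict


def reciprocal_rank_fusion(ranked_lists, k=60, top_n=10):
    """Fuse ranked lists of doc IDs (best first); ranks are 1-based."""
    fused = defaultdict(float)
    for ranking in ranked_lists:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by doc ID for determinism
    return sorted(fused.items(), key=lambda kv: (-kv[1], kv[0]))[:top_n]


bm25_hits = ["doc7", "doc2", "doc9"]    # hypothetical BM25 top-3
vector_hits = ["doc2", "doc4", "doc7"]  # hypothetical ANN top-3
print(reciprocal_rank_fusion([bm25_hits, vector_hits], top_n=3))
# order: doc2, doc7, doc4 (documents found by both retrievers rise to the top)
```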

Quality measurement you can trust

Offline metrics (labelled queries):

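- Recall@k: fraction of all relevant items that appear in the top k.

- Precision@k: fraction of the top k results that are relevant.

- nDCG@k: order‑aware quality that rewards placing highly relevant items near the top (higher is better).

- MRR: mean over queries of 1/rank of the first relevant result, i.e., how quickly the first relevant item appears.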
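These offline metrics are straightforward to compute once you have labels. A minimal sketch, assuming binary relevance (a set of relevant doc IDs per query) for recall, precision, and reciprocal rank, and a dict of graded gains with the usual log2 position discount for nDCG; the IDs and judgments below are made up for illustration.

```python
import math


def recall_at_k(ranked, relevant, k):
    # Share of all relevant docs that appear in the top k
    return len(set(ranked[:k]) & relevant) / len(relevant) if relevant else 0.0


def precision_at_k(ranked, relevant, k):
    # Share of the top k results that are relevant (k > 0)
    return sum(1 for d in ranked[:k] if d in relevant) / k


def reciprocal_rank(ranked, relevant):
    # 1 / rank of the first relevant result, 0 if none; MRR averages this over queries
    for rank, d in enumerate(ranked, start=1):
        if d in relevant:
            return 1.0 / rank
    return 0.0


def ndcg_at_k(ranked, gains, k):
    # gains: doc_id -> graded relevance (e.g., 0-3); position i is discounted by log2(i + 2)
    def dcg(gs):
        return sum(g / math.log2(i + 2) for i, g in enumerate(gs))
    ideal = dcg(sorted(gains.values(), reverse=True)[:k])
    return dcg([gains.get(d, 0) for d in ranked[:k]]) / ideal if ideal > 0 else 0.0


ranked = ["d3", "d1", "d7", "d2"]  # system output for one query
relevant = {"d1", "d2"}
print(recall_at_k(ranked, relevant, 4))     # 1.0
print(precision_at_k(ranked, relevant, 2))  # 0.5
print(reciprocal_rank(ranked, relevant))    # 0.5
print(ndcg_at_k(ranked, {"d1": 3, "d2": 1}, 4))
```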

Online metrics (production):

- Click‑through rate, save/add‑to‑cart, dwell time.

- Query reformulation rate (lower is better).

- Human judgments (e.g., side‑by‑side ratings) for critical surfaces.

Use both: establish a BM25 baseline, then show that vector or hybrid materially improves nDCG@10 and online KPIs at your latency budget.

Latency, memory, and scale

- Latency budget: aim for p95 under your UI's threshold (e.g., 150–300 ms end‑to‑end). ANN parameters (HNSW efSearch, IVF nprobe) trade latency for recall: raising them improves recall but slows queries.

- Memory footprint: dense vectors are big, at N·d·bytes_per_dim for raw storage. Float32 costs 4 bytes/dim; FP16 halves that and INT8 quarters it, while PQ can compress by an order of magnitude or more. Each step usually costs some recall, so measure it.

- Throughput: pre‑filter candidates (e.g., by tenant, language, time) before ANN to reduce search space.

- Index maintenance: shard by tenant/time; build shadow indexes for re‑embedding; swap atomically.

- Caching: cache embeddings for frequent queries; cache top‑k results for head queries; warm ANN structures at startup.
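A quick sizing calculation makes the N·d·bytes_per_dim formula concrete. This sketch covers raw vector storage only and assumes 10 million 768‑dimensional embeddings as an example; ANN structures add their own overhead (HNSW, for instance, stores neighbor links per vector), and PQ code size depends on configuration, so the 64‑byte PQ figure is a hypothetical setting.

```python
def vector_bytes(n_vectors, dim, bytes_per_dim):
    """Raw storage for n_vectors embeddings of size dim (no index overhead)."""
    return n_vectors * dim * bytes_per_dim


N, D = 10_000_000, 768
for label, size in [("float32", 4), ("float16", 2), ("int8", 1)]:
    gb = vector_bytes(N, D, size) / 1e9
    print(f"{label:>8}: {gb:6.2f} GB")
# -> 30.72, 15.36, and 7.68 GB respectively

# PQ stores a fixed number of bytes per vector rather than per dimension;
# 64 bytes/vector is a hypothetical configuration, not a recommendation.
pq_gb = N * 64 / 1e9
print(f"{'pq-64B':>8}: {pq_gb:6.2f} GB")  # 0.64 GB, 48x smaller than float32
```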

Cost model (back‑of‑the‑envelope)

- Ingest cost ≈ tokens_to_embed × embed_cost_per_token + indexing_compute.

- Storage cost ≈ N × d × bytes_per_dim × replication_factor, reduced by compression and increased by index overhead (e.g., HNSW graph links).

- Query cost ≈ query_embedding + ANN_flops + reranker_flops + network egress.

Lower costs by:

- Right‑sized chunking (fewer, well‑scoped passages mean fewer vectors), PQ/IVF, and FP16 storage.

- Hybrid early‑exit (BM25 gate before ANN) for exact‑match queries.

- Tiering: hot ANN for recent/important content; cold BM25 or batch retrieval for the long tail.
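The BM25 gate can start as a simple pattern check on the query. This sketch is one hypothetical heuristic, not an established algorithm: queries containing a quoted phrase or a token that mixes letters and digits (an identifier‑like string) go to keyword search only, and everything else goes to hybrid retrieval. The regexes and route names are assumptions to adapt to your own identifier formats.

```python
import re

# Hypothetical identifier pattern: a 3+ char token mixing letters and digits
# (e.g. "ERR-4012", "XK200"); QUOTED catches an explicitly quoted phrase.
IDENTIFIER = re.compile(r"\b(?=[\w-]*\d)(?=[\w-]*[A-Za-z])[\w-]{3,}\b")
QUOTED = re.compile(r'"[^"]+"')


def route(query):
    """Return 'keyword' for exact-match style queries, else 'hybrid'."""
    if QUOTED.search(query) or IDENTIFIER.search(query):
        return "keyword"  # skip query embedding + ANN: cheaper and more precise here
    return "hybrid"


print(route("error ERR-4012 on startup"))         # keyword
print(route("why does my router keep dropping"))  # hybrid
```

A misrouted query only loses the semantic signal, so it is worth logging the gate's decisions and checking them against zero‑result and reformulation rates.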

Data, drift, and governance

- Model versioning: store embedding_model_version with each vector; support dual‑write during migrations.

- Right to be forgotten: maintain doc‑to‑vector mappings for deletions across all shards.

- PII and security: embeddings can leak information about the underlying text; encrypt at rest, restrict access, and consider differential privacy for sensitive domains.

- Monitoring: track distribution shifts (embedding norm distributions, centroid drift) and alert on sudden recall drops.
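A basic drift check compares a reference batch of embeddings (for example, captured at launch) with a recent batch. This sketch tracks two of the signals above: the relative shift in mean vector norm and the cosine distance between batch centroids. The alert thresholds are hypothetical placeholders to calibrate on your own data, and the centroid signal is most informative when the centroid is not close to the origin.

```python
import numpy as np


def drift_report(reference, current, norm_tol=0.05, centroid_tol=0.02):
    """Compare two (n, d) embedding batches; thresholds are hypothetical."""
    ref_norm = np.linalg.norm(reference, axis=1).mean()
    cur_norm = np.linalg.norm(current, axis=1).mean()
    norm_shift = abs(cur_norm - ref_norm) / ref_norm  # relative change in mean norm

    c_ref, c_cur = reference.mean(axis=0), current.mean(axis=0)
    cos = c_ref @ c_cur / (np.linalg.norm(c_ref) * np.linalg.norm(c_cur))
    centroid_drift = 1.0 - cos  # 0 means the centroids point the same way

    return {
        "norm_shift": float(norm_shift),
        "centroid_drift": float(centroid_drift),
        "alert": bool(norm_shift > norm_tol or centroid_drift > centroid_tol),
    }


rng = np.random.default_rng(0)
base = rng.normal(loc=0.5, size=(5000, 64))  # stand-in embeddings
print(drift_report(base[:2500], base[2500:]))        # same distribution
print(drift_report(base[:2500], base[2500:] * 1.5))  # norms grew by 50%
```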

Implementation snapshots

Below are compact examples to ground the concepts. They are illustrative; adapt to your stack and versions.

Keyword (BM25) with a search engine

```
PUT /docs
{
  "settings": {"analysis": {"analyzer": {"default": {"type": "standard"}}}},
  "mappings": {
    "properties": {
      "title":  {"type": "text"},
      "body":   {"type": "text"},
      "locale": {"type": "keyword"}
    }
  }
}

POST /docs/_search
{
  "query": {
    "bool": {
      "must":   [{"match": {"body": "reset my router keeps disconnecting"}}],
      "filter": [{"term": {"locale": "en"}}]
    }
  },
  "highlight": {"fields": {"body": {}}}
}
```

Vector search with PostgreSQL + pgvector

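```sql
-- Enable extension and create a table with a 768-dimensional vector
CREATE EXTENSION IF NOT EXISTS vector;

CREATE TABLE docs (
  id        BIGSERIAL PRIMARY KEY,
  title     TEXT,
  body      TEXT,
  embedding VECTOR(768)
);

-- Optional ANN index (HNSW, pgvector 0.5.0+); without it the query below is an exact scan
CREATE INDEX ON docs USING hnsw (embedding vector_cosine_ops);

-- Query with cosine distance (<=> is cosine distance; lower is closer).
-- $1 is the query embedding, e.g. '[0.01, 0.02, ...]', bound by your client driver.
SELECT id, 1 - (embedding <=> $1::vector) AS cosine_similarity
FROM docs
ORDER BY embedding <=> $1::vector
LIMIT 10;
```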

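ANN with a vector library (Python, FAISS)

```python
import numpy as np
import faiss  # or another ANN library

d = 768  # embedding dimensionality
M = 32   # neighbors per node in the HNSW graph

# Inner product on L2-normalized vectors equals cosine similarity
index = faiss.IndexHNSWFlat(d, M, faiss.METRIC_INNER_PRODUCT)
index.hnsw.efConstruction = 200  # build-time candidate list: better graph, slower build

# Add vectors (FAISS expects C-contiguous float32 arrays)
xb = np.ascontiguousarray(np.load('embeddings.npy'), dtype='float32')  # shape: (N, 768)
faiss.normalize_L2(xb)
index.add(xb)

# Query
index.hnsw.efSearch = 64  # query-time candidate list: higher recall, more latency
q = np.ascontiguousarray(np.load('query_embedding.npy'), dtype='float32').reshape(1, d)
faiss.normalize_L2(q)
D, I = index.search(q, 10)  # I: neighbor indices, D: cosine similarities (higher is closer)
```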

Hybrid blending (conceptual)

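```python
def min_max(scores):
    # Rescale {doc_id: score} to [0, 1]; a constant list maps to 1.0
    lo, hi = min(scores.values(), default=0.0), max(scores.values(), default=0.0)
    return {d: (s - lo) / (hi - lo) if hi > lo else 1.0 for d, s in scores.items()}

def blend(bm25_scores, vector_scores, alpha=0.5):
    # Normalize scores to [0,1] then blend; a doc missing from one list scores 0 there
    b, v = min_max(bm25_scores), min_max(vector_scores)
    return {d: alpha * b.get(d, 0.0) + (1 - alpha) * v.get(d, 0.0) for d in b.keys() | v.keys()}

# Tune alpha per query type; consider RRF for simplicity and robustness
```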

RAG considerations

If you’re building retrieval‑augmented generation:

- Use domain‑specific or fine‑tuned embedding models to improve retrieval quality; more relevant context leaves the generator less room to hallucinate.

- Chunk documents with overlap; store metadata (source, section) for faithful citations.

- Retrieve top‑k (e.g., 20), re‑rank to top‑n (e.g., 5), then feed to the generator.

- Deduplicate near‑identical passages to avoid repetitive context.

- Log prompt+retrieval pairs to continuously improve your corpus and relevance.
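Overlapping chunking and near‑duplicate removal each take only a few lines. The sketch below assumes word‑based windows (the 200‑word size and 40‑word overlap are illustrative, not recommendations), attaches a hypothetical `source` field to every chunk for citations, and greedily drops a retrieved passage whose cosine similarity to an already‑kept passage exceeds a hypothetical 0.95 threshold.

```python
import numpy as np


def chunk_words(text, source, size=200, overlap=40):
    """Split text into overlapping word windows, each tagged with metadata."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be in [0, size)")
    words = text.split()
    if not words:
        return []
    step = size - overlap
    return [
        {
            "text": " ".join(words[start:start + size]),
            "source": source,      # hypothetical field, kept for faithful citations
            "word_offset": start,
        }
        # Stop once the previous window already reaches the end of the text
        for start in range(0, max(len(words) - overlap, 1), step)
    ]


def dedup(passages, vectors, threshold=0.95):
    """Greedy near-duplicate removal over ranked passages (best first)."""
    unit = vectors / np.linalg.norm(vectors, axis=1, keepdims=True)
    kept = []
    for i in range(len(passages)):
        if all(unit[i] @ unit[j] < threshold for j in kept):
            kept.append(i)
    return [passages[i] for i in kept]
```

Running `dedup` after re‑ranking, rather than before, means the highest‑ranked copy of each near‑duplicate is the one that survives.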

Tuning tips and common pitfalls

- Don’t skip a keyword baseline. Establish BM25 nDCG@10 and latency; prove improvements.

- Chunks that are too small lose surrounding context; chunks that are too large dilute the signal. Aim for semantically coherent segments (e.g., 100–300 words) and validate empirically.

- Beware stopword removal in keyword search if phrase intent matters; consider proximity queries.

- In vector search, start with float32, then move to FP16 or PQ once quality margins are known.

- Always cap candidate set sizes before expensive re‑rankers.

- Evaluate per segment: head, torso, and long‑tail queries behave differently.
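Per‑segment evaluation is mostly bookkeeping: bucket queries by traffic, then average your metric within each bucket. This sketch assumes you already have a per‑query metric value (for example nDCG@10) and a query frequency from logs; the head/torso cutoffs and the sample queries are hypothetical and should come from your own traffic distribution.

```python
from collections import defaultdict
from statistics import mean


def segment(freq, head_min=1000, torso_min=50):
    # Hypothetical cutoffs on query frequency (e.g., searches per month)
    if freq >= head_min:
        return "head"
    return "torso" if freq >= torso_min else "tail"


def per_segment_mean(results):
    """results: iterable of (query, frequency, metric_value) tuples."""
    buckets = defaultdict(list)
    for _query, freq, value in results:
        buckets[segment(freq)].append(value)
    return {seg: mean(vals) for seg, vals in buckets.items()}


runs = [("wifi", 5000, 0.9), ("router reset", 1200, 0.8),
        ("err 4012 firmware", 60, 0.6), ("blinking amber light after update", 3, 0.3)]
print(per_segment_mean(runs))
```

A single blended average would hide the weak tail score here, which is the failure mode per‑segment reporting is meant to surface.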

Decision guide (quick)

- Need exact terms, filters, and fast explainability? Start with keyword.

- Need synonym/intent matching, multilingual robustness, or RAG? Add vector.

- Can’t decide? Ship a hybrid with RRF or score blending, plus cross‑encoder re‑ranking for the top 50 candidates.

Checklist for launch

- Baselines: BM25 metrics captured and reproducible.

- Offline eval: nDCG@10, Recall@50 vs. gold labels; sensitivity tests across query types.

- Online eval: A/B with guardrails (latency, error budgets).

- Operations: embedding versioning, re‑index strategy, deletion pipeline, PII controls.

- Documentation: explainability artifacts (why‑did‑this‑rank), monitoring dashboards.

Conclusion

Keyword and vector search aren’t adversaries—they’re complementary tools. Keyword search offers controllability and speed; vector search adds semantic reach. Most high‑stakes applications (RAG, customer support, product search) benefit from hybrid architectures: retrieve broadly with dense and/or sparse signals, apply business rules and filters, then re‑rank. Start simple, measure relentlessly, and evolve toward a balanced system that meets your users’ intent within your latency and cost budgets.

Related Posts

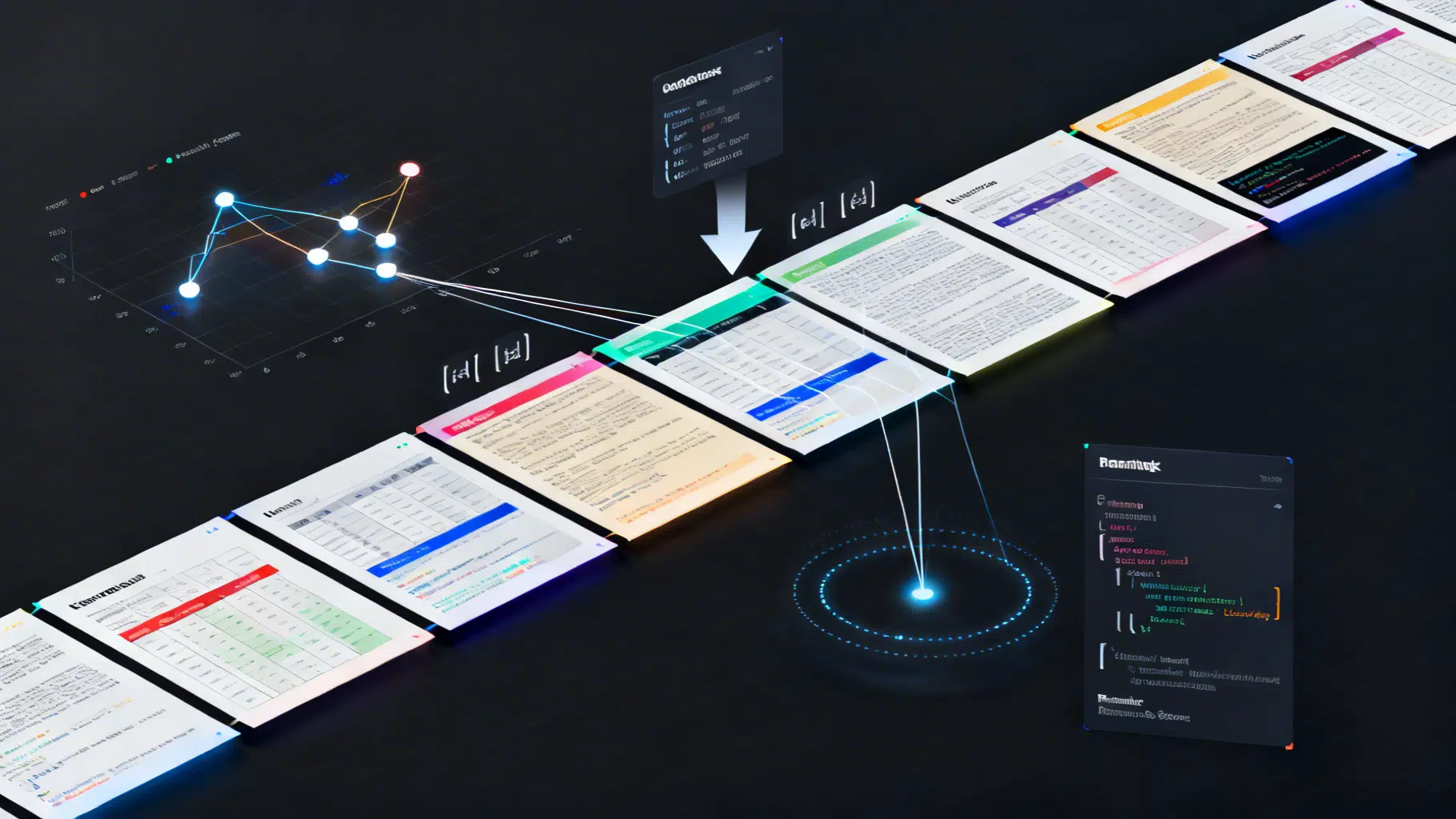

Advanced Chunking Strategies for Retrieval‑Augmented Generation

A practical guide to advanced chunking in RAG: semantic and structure-aware methods, parent–child indexing, query-driven expansion, and evaluation tips.

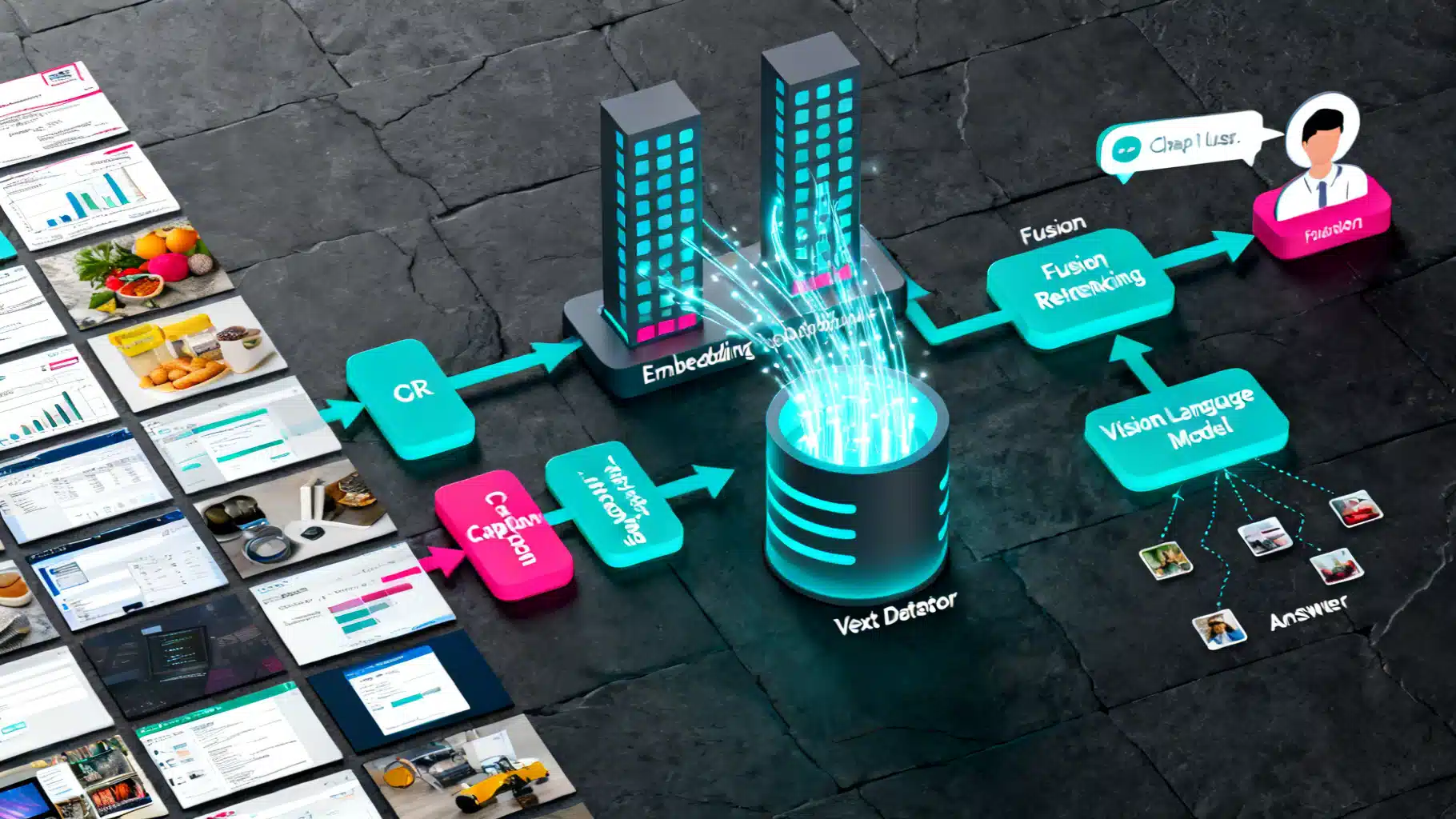

A Practical Guide to Multi‑Modal RAG: Images Plus Text, End‑to‑End Tutorial

Build a practical multi‑modal RAG system that retrieves from images and text using OCR, captions, CLIP embeddings, and vector search.

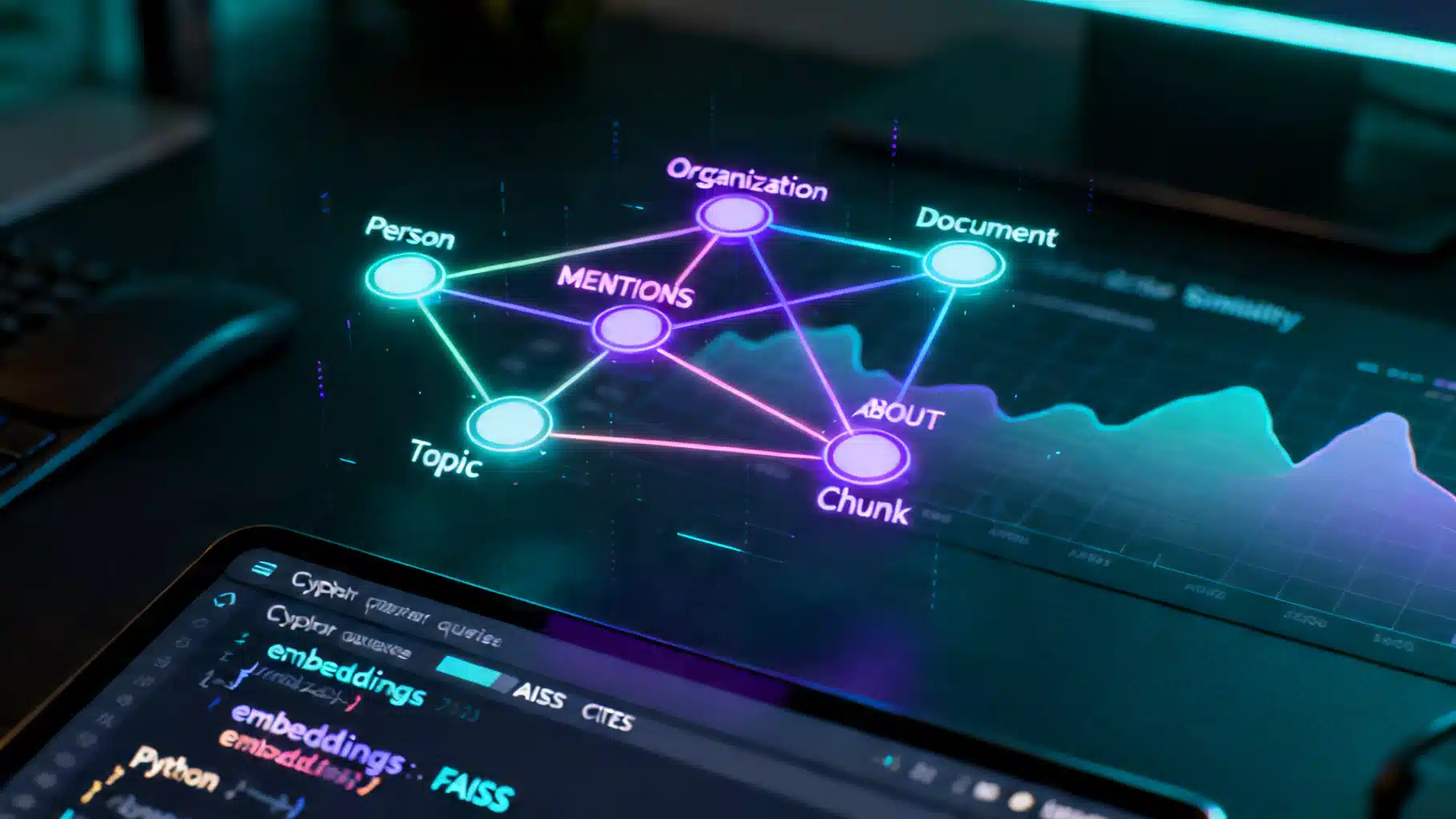

A Practical Tutorial on Knowledge Graph–Enhanced AI Retrieval (GraphRAG)

Build a production-ready tutorial for knowledge graph–enhanced AI retrieval: schema, ingestion, Cypher, hybrid search, and evaluation.