Function Calling vs. Tool Use in LLMs: Architecture, Trade-offs, and Patterns

A practical guide to function calling vs. tool use in LLMs: architectures, trade-offs, design patterns, reliability, security, and evaluation.

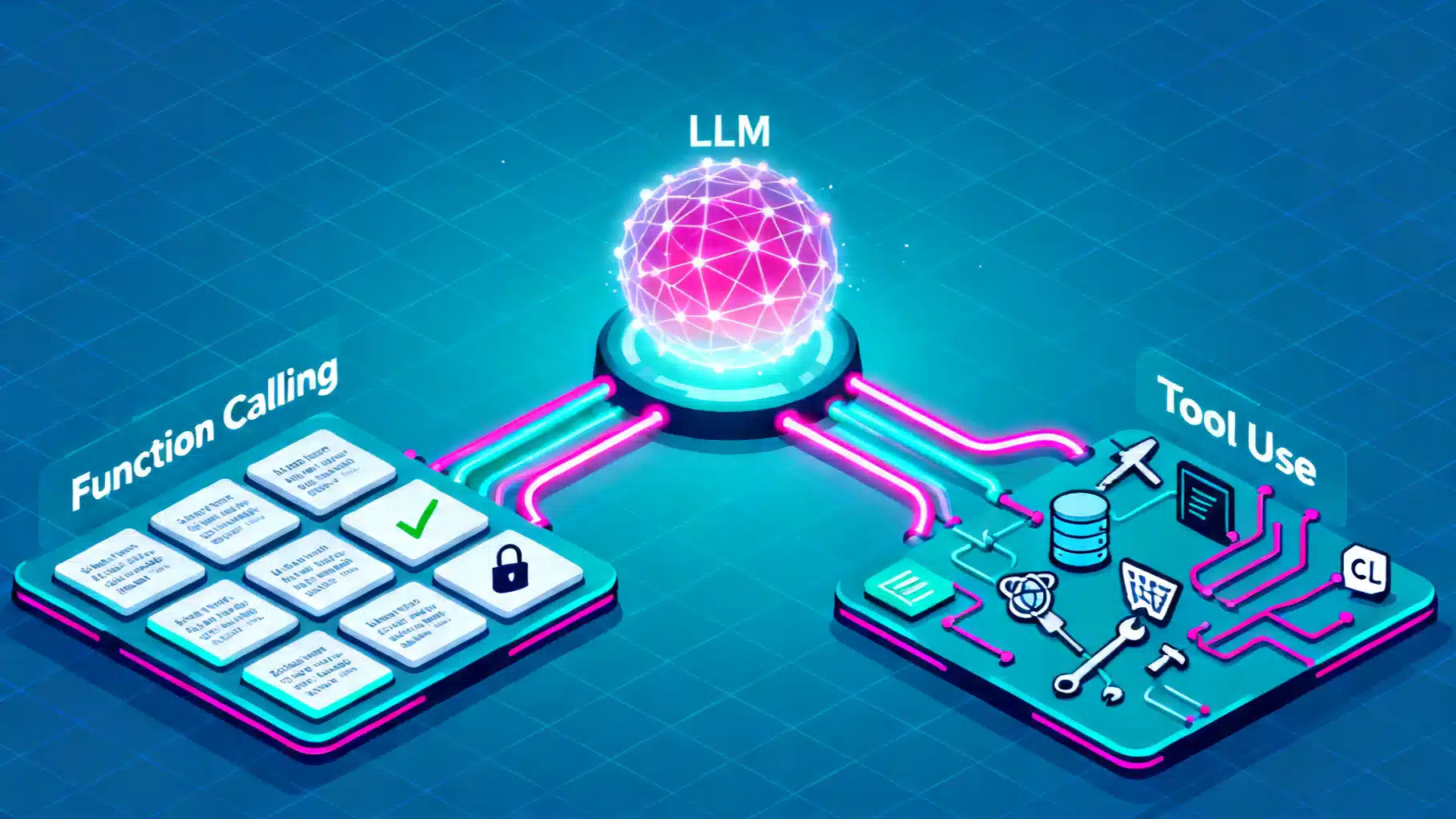

Image used for representation purposes only.

Overview

Function calling and tool use are two closely related techniques that let large language models (LLMs) take actions, not just generate text. They differ mainly in contract strictness and orchestration responsibility: function calling emphasizes a typed, structured interface (the model emits a function name and arguments), while tool use is the broader practice of letting models invoke external capabilities (search, databases, code execution, APIs) through any mechanism you design. In practice, function calling is a special case of tool use that trades model freedom for reliability and observability.

This article explains how each approach works, where they shine, how to design robust schemas and handlers, and what to watch for in production.

Mental Model

- LLM core: produces tokens conditioned on prompts.

- Planner: the LLM decides whether it needs outside information or actions.

- Actuator: your runtime executes the requested tool/function and returns results.

- Feedback: results are fed back to the LLM for further steps or a final answer.

Function calling narrows the plan→act boundary to a structured call: the model must pick from a declared set of functions and emit arguments matching a schema. Tool use allows looser patterns (e.g., emitting JSON snippets, XML tags, or delimiter-based commands) that your orchestrator interprets.
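
The four roles above can be sketched as a small loop. This is an illustration under stated assumptions, not any provider's API: `fake_model`, `run_tool`, and the message dictionaries are hypothetical stand-ins, and the weather result is stubbed.

```python
import json

def fake_model(messages):
    """Hypothetical stand-in for an LLM: requests a tool once, then answers."""
    last = messages[-1]
    if last["role"] == "tool":
        data = json.loads(last["content"])
        return {"type": "final", "text": f"It is {data['temp']} degrees in {data['city']}."}
    return {"type": "call", "name": "get_weather", "arguments": {"city": "Paris"}}

def run_tool(name, arguments):
    """Actuator: the runtime, not the model, executes the call."""
    if name == "get_weather":
        return {"city": arguments["city"], "temp": 18.2}  # stubbed result
    return {"error": "unknown_tool"}

def answer(user_text, max_steps=4):
    messages = [{"role": "user", "content": user_text}]
    for _ in range(max_steps):
        decision = fake_model(messages)  # planner
        if decision["type"] == "final":
            return decision["text"]
        result = run_tool(decision["name"], decision["arguments"])  # actuator
        messages.append({"role": "tool", "content": json.dumps(result)})  # feedback
    return "Stopped: step limit reached."

print(answer("What's the weather in Paris?"))
```

The step limit is the simplest termination condition; without one, a model that keeps requesting tools would loop indefinitely.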

When to Use Which

Choose function calling when you need:

- High reliability: typed arguments and validation reduce parsing errors.

- Clear governance: a fixed tool registry with least-privilege access.

- Strong observability: every call has a name, args, and result you can log and trace.

Prefer general tool use when you need:

- Rapid experimentation: try novel tool protocols or emergent behaviors.

- Unusual data shapes: multi-part outputs, binary attachments, or streaming payloads.

- Cross-model portability: keep a vendor-agnostic protocol or custom markup.

Many production systems blend both: function calling for critical paths; flexible tool prompts for R&D or edge tools.

Designing the Contract: Schemas, Defaults, and Guardrails

A good function/tool contract is a data product. Design it intentionally:

- Types and enums: constrain what the model can request.

- Required vs optional fields: reduce ambiguity and retries.

- Defaults and examples: give the model strong priors.

- Descriptive names: function and field names should be self-explanatory.

- Deterministic flags: provide switches to force or forbid tool use in certain turns.

Example JSON schema for a weather lookup function:

    {
      "name": "get_weather",
      "description": "Retrieve current weather for a city.",
      "parameters": {
        "type": "object",
        "properties": {
          "city": {"type": "string", "description": "City name, e.g., 'Paris'"},
          "units": {"type": "string", "enum": ["metric", "imperial"], "default": "metric"}
        },
        "required": ["city"]
      }
    }
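
A runtime still has to check the model's arguments against this contract before executing anything. The sketch below hand-rolls a validator for only the keywords used above (`required`, string `type`, `enum`, `default`); `validate_args` is a hypothetical helper, not a full JSON Schema implementation. Note that `default` is only an annotation in JSON Schema, so the runtime has to apply it itself.

```python
WEATHER_PARAMS = {
    "type": "object",
    "properties": {
        "city": {"type": "string"},
        "units": {"type": "string", "enum": ["metric", "imperial"], "default": "metric"},
    },
    "required": ["city"],
}

def validate_args(schema, args):
    """Return (clean_args, errors) for a small subset of JSON Schema."""
    errors, clean = [], {}
    props = schema["properties"]
    for key in schema.get("required", []):
        if key not in args:
            errors.append(f"missing required field: {key}")
    for key, value in args.items():
        spec = props.get(key)
        if spec is None:
            errors.append(f"unexpected field: {key}")
        elif spec["type"] == "string" and not isinstance(value, str):
            errors.append(f"{key} must be a string")
        elif "enum" in spec and value not in spec["enum"]:
            errors.append(f"{key} must be one of {spec['enum']}")
        else:
            clean[key] = value
    # Fill in defaults only for fields the model did not send at all.
    for key, spec in props.items():
        if key not in args and "default" in spec:
            clean[key] = spec["default"]
    return clean, errors

print(validate_args(WEATHER_PARAMS, {"city": "Paris"}))
```

In production a maintained validator is usually the better choice; the point here is that the short error strings double as retry hints for the model.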

Orchestration Patterns

- Single-step call: The LLM decides on one function, you execute it, return the result to the model, and finalize.

- Multi-step agent loop: The LLM plans, calls multiple tools, synthesizes, and stops on a termination condition.

- Router + specialists: A small, fast model routes to one of several specialized tools or chains; the large model is only invoked as needed.

- Human-in-the-loop: Tool outputs exceeding risk thresholds trigger review.
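
Two of these patterns, routing and human review, fit in a few lines. In this sketch a keyword matcher stands in for the small routing model, and the `amount` field and threshold of 100 are hypothetical choices made only for illustration.

```python
def route(user_text: str) -> str:
    """Cheap keyword router standing in for a small, fast model."""
    text = user_text.lower()
    if "refund" in text:
        return "billing"
    if "weather" in text:
        return "weather"
    return "general_llm"  # fall back to the large model

def gate(output: dict, review_queue: list, threshold: float = 100.0) -> dict:
    """Hold tool outputs whose (hypothetical) 'amount' exceeds the threshold."""
    if output.get("amount", 0) > threshold:
        review_queue.append(output)
        return {"status": "pending_review"}
    return {"status": "approved", "output": output}

queue = []
print(route("I want a refund"), gate({"amount": 250}, queue))
```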

Reliability Engineering

- Validation: enforce JSON Schema or typed classes (e.g., Pydantic) before executing.

- Retries with hints: on validation failure, return a compact, non-leaky error message and allow a single retry.

- Idempotency: include request IDs so duplicated calls don’t double-charge users or mutate state twice.

- Timeouts and cancellation: abort slow tools and tell the model which calls finished and which did not, so it can answer from partial results.

- Caching: cache deterministic tool results (e.g., product metadata) to cut latency and cost.

- Prompt isolation: keep tool descriptions in a fixed section of the prompt, separate from user content, to reduce injection risk.
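
Idempotency and retry-with-hint are the two items here that are easiest to get subtly wrong, so a sketch may help. Everything named below (`charge`, `call_with_retry`, the in-memory `_completed` store) is hypothetical; a real system would keep request IDs in a durable store.

```python
import uuid

_completed = {}  # request_id -> result; in-memory only for this sketch

def charge(request_id: str, amount_cents: int) -> dict:
    """Idempotent handler: a replayed request_id returns the first result."""
    if request_id in _completed:
        return _completed[request_id]
    result = {"charged": amount_cents, "receipt": str(uuid.uuid4())}
    _completed[request_id] = result
    return result

def call_with_retry(propose, validate, max_retries=1):
    """Ask for arguments; on validation failure, send back a short hint and retry."""
    hint = None
    for _ in range(max_retries + 1):
        args = propose(hint)   # in practice: a model call that sees the hint
        error = validate(args)
        if error is None:
            return {"ok": True, "args": args}
        hint = error           # compact message only; no stack traces or internals
    return {"ok": False, "error": hint}
```

Here `propose` is whatever function asks the model for arguments; passing the previous error as `hint` is what lets a single retry succeed.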

Security and Safety

- Least privilege: expose only the minimal tool surface and scopes.

- Parameter whitelisting: strict enums and patterns for high-risk fields (URLs, file paths, shell args).

- Output filtering: sanitize tool outputs before re-feeding to the model (especially HTML/JS).

- Prompt injection defense: never pass untrusted text directly into a shell, SQL, or code interpreter. Add provenance tags to clarify “this text is untrusted.”

- Audit logging: record who/what triggered each tool with timestamps and hashed payloads.
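
The checks on high-risk parameters can be sketched with the standard library alone. The allowlisted host, the sandbox path, and the `<untrusted>` tag format are assumptions made for this example; the tag is a labeling convention for the prompt, not a security boundary by itself.

```python
from pathlib import Path
from urllib.parse import urlparse

ALLOWED_HOSTS = {"api.example.com"}   # hypothetical allowlist
SANDBOX = Path("/srv/agent-files")    # hypothetical sandbox root

def check_url(url: str) -> bool:
    """Accept only HTTPS URLs whose host is explicitly allowlisted."""
    parsed = urlparse(url)
    return parsed.scheme == "https" and parsed.hostname in ALLOWED_HOSTS

def check_path(user_path: str) -> bool:
    """Reject paths that escape the sandbox, including via '..' or absolute paths."""
    target = (SANDBOX / user_path).resolve()
    return target.is_relative_to(SANDBOX.resolve())  # Python 3.9+

def tag_untrusted(text: str, source: str) -> str:
    """Wrap tool output so the prompt marks it as data, not instructions."""
    return f'<untrusted source="{source}">\n{text}\n</untrusted>'
```

Comparing the parsed hostname, rather than searching the raw string, is what defeats inputs like `https://api.example.com@evil.test/`.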

Observability and Tracing

Capture, correlate, and visualize:

- Invocation spans: model → function/tool → external API.

- Inputs/outputs (redacted): structured logs with PII scrubbing.

- Model tokens: per-invocation token counts and latency.

- Outcome labels: success, validation error, provider error, timeout, human review.

With this data you can compute success rates, p95 latency, and per-tool cost; you’ll also spot brittle schemas and injection attempts.
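
As a rough illustration of those computations, the sketch below aggregates a handful of made-up span records. The field names (`tool`, `outcome`, `latency_ms`, `cost_usd`) are assumptions, and p95 uses the nearest-rank definition.

```python
import math
from collections import defaultdict

spans = [  # hypothetical trace records
    {"tool": "search", "outcome": "success", "latency_ms": 100, "cost_usd": 0.002},
    {"tool": "search", "outcome": "success", "latency_ms": 120, "cost_usd": 0.002},
    {"tool": "search", "outcome": "timeout", "latency_ms": 900, "cost_usd": 0.002},
    {"tool": "search", "outcome": "success", "latency_ms": 110, "cost_usd": 0.002},
    {"tool": "get_weather", "outcome": "success", "latency_ms": 40, "cost_usd": 0.001},
]

def p95(values):
    """Nearest-rank 95th percentile."""
    ordered = sorted(values)
    rank = math.ceil(0.95 * len(ordered))
    return ordered[rank - 1]

def summarize(spans):
    """Group spans by tool and compute per-tool health metrics."""
    by_tool = defaultdict(list)
    for span in spans:
        by_tool[span["tool"]].append(span)
    report = {}
    for tool, rows in by_tool.items():
        ok = sum(r["outcome"] == "success" for r in rows)
        report[tool] = {
            "calls": len(rows),
            "success_rate": ok / len(rows),
            "p95_latency_ms": p95([r["latency_ms"] for r in rows]),
            "cost_usd": round(sum(r["cost_usd"] for r in rows), 6),
        }
    return report

report = summarize(spans)
```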

Latency and Cost Tuning

- Parallelization: allow the model to request a set of independent tool calls, then execute in parallel.

- Early-return: if a direct answer is present in context, forbid tool use for that turn.

- Tool prefetch: precompute cheap lookups likely needed by most prompts.

- Streaming: return partial tool results if the consuming model can benefit.

- Smaller models for tool-heavy tasks: if tools do most of the work, a compact planner often suffices.
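
Parallelization is the item with the most direct code shape. A minimal sketch with `asyncio`, assuming the model has already returned a batch of independent calls; the two tools are stubs that simulate latency with `asyncio.sleep`.

```python
import asyncio

async def get_weather(city: str) -> dict:
    await asyncio.sleep(0.05)  # simulated network latency
    return {"city": city, "temp": 18.2}

async def get_time(city: str) -> dict:
    await asyncio.sleep(0.05)
    return {"city": city, "time": "12:00"}

TOOLS = {"get_weather": get_weather, "get_time": get_time}

async def run_parallel(calls, timeout_s=2.0):
    """Run independent tool calls concurrently; one failure does not sink the batch."""
    async def run_one(call):
        try:
            coro = TOOLS[call["name"]](**call["arguments"])
            return await asyncio.wait_for(coro, timeout=timeout_s)
        except Exception as exc:  # timeouts, unknown tools (KeyError), bad arguments
            return {"error": type(exc).__name__}
    # gather returns results in the same order as the input calls.
    return await asyncio.gather(*(run_one(c) for c in calls))

calls = [
    {"name": "get_weather", "arguments": {"city": "Paris"}},
    {"name": "get_time", "arguments": {"city": "Paris"}},
]
results = asyncio.run(run_parallel(calls))
```

Both stubs sleep concurrently, so the batch takes roughly as long as the slowest call rather than the sum of all calls.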

Evaluation Strategies

- Golden datasets: prompts with known correct tool invocations and outputs.

- Fault injection: simulate API 500s, schema mismatches, and timeouts; verify graceful degradation.

- Security drills: inject malicious tool descriptions and poisoned user content to test defenses.

- Shadow mode: run the new tool/contract alongside the old one to compare traces before cutover.
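
Golden datasets and fault injection both reduce to small harnesses. In the sketch below, `toy_planner` is a stand-in for the model under test and the two golden cases are invented for illustration; `flaky` injects timeouts so you can check that `safe_call` degrades gracefully.

```python
GOLDEN = [  # hypothetical cases: prompt -> expected tool call (None = no tool)
    {"prompt": "Weather in Paris?", "expect": ("get_weather", {"city": "Paris"})},
    {"prompt": "Tell me a joke", "expect": None},
]

def toy_planner(prompt: str):
    """Stand-in for the model under test."""
    if "weather" in prompt.lower():
        city = prompt.rstrip("?").split()[-1]
        return ("get_weather", {"city": city})
    return None

def tool_call_accuracy(planner, cases):
    """Fraction of cases where the planner's call exactly matches the expected one."""
    hits = sum(planner(c["prompt"]) == c["expect"] for c in cases)
    return hits / len(cases)

def flaky(handler, fail_times):
    """Fault injection: raise on the first `fail_times` calls, then delegate."""
    state = {"left": fail_times}
    def wrapped(*args, **kwargs):
        if state["left"] > 0:
            state["left"] -= 1
            raise TimeoutError("injected timeout")
        return handler(*args, **kwargs)
    return wrapped

def safe_call(handler, *args):
    """Graceful degradation: turn exceptions into a structured error."""
    try:
        return {"ok": True, "data": handler(*args)}
    except Exception as exc:
        return {"ok": False, "error": type(exc).__name__}
```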

Practical Examples

Python: Function Calling Runtime

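    import json
    from dataclasses import dataclass
    from typing import Literal, get_args

    Units = Literal["metric", "imperial"]

    @dataclass(frozen=True)
    class WeatherArgs:
        city: str
        units: Units = "metric"

        def __post_init__(self):
            # Type hints are not enforced at runtime, so check values explicitly.
            if not isinstance(self.city, str) or not self.city.strip():
                raise ValueError("city must be a non-empty string")
            if self.units not in get_args(Units):
                raise ValueError("units must be 'metric' or 'imperial'")

    def get_weather(args: WeatherArgs) -> dict:
        # Stub handler; a real one would query a weather service.
        return {"temp": 18.2, "units": args.units}

    TOOLS = {"get_weather": {"schema": WeatherArgs, "handler": get_weather}}

    def call_tool(name: str, args: dict) -> dict:
        if name not in TOOLS:
            return {"error": "unknown_tool"}
        tool = TOOLS[name]
        try:
            # TypeError: missing or unexpected fields; ValueError: invalid values.
            typed = tool["schema"](**args)
        except (TypeError, ValueError) as e:
            return {"error": "validation_error", "message": str(e)}
        return tool["handler"](typed)

    # The LLM emits a structured call as JSON; parse it, then dispatch.
    emitted = '{"tool": "get_weather", "arguments": {"city": "Paris", "units": "metric"}}'
    call = json.loads(emitted)
    response = call_tool(call["tool"], call["arguments"])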

TypeScript: Flexible Tool Use via Tags

    type ToolResult = { ok: true; data: unknown } | { ok: false; error: string };
    type Tool = (arg: string) => Promise<ToolResult>;

    // A Map only matches registered names; `name in obj` on a plain object
    // would also accept inherited keys such as "toString".
    const tools = new Map<string, Tool>([
      ["search", async (q: string): Promise<ToolResult> => {
        if (!q || q.length < 3) return { ok: false, error: "query_too_short" };
        return { ok: true, data: [/* results */] };
      }],
    ]);

    // Model is prompted to emit <tool name="search">query</tool>
    function parseToolTag(text: string): { name: string; arg: string } | null {
      const m = text.match(/<tool name="([^"]*)">([\s\S]*?)<\/tool>/);
      if (!m) return null;
      return { name: m[1], arg: m[2].trim() };
    }

    async function orchestrate(modelOutput: string) {
      const call = parseToolTag(modelOutput);
      const tool = call ? tools.get(call.name) : undefined;
      // No tag, or an unregistered tool name: treat the output as the final answer.
      if (!call || !tool) return { final: modelOutput };
      const result = await tool(call.arg);
      return { tool: call.name, result };
    }

The first example shows strict, typed function calling; the second illustrates a more flexible tool-use protocol that a variety of models can follow.

Forcing, Suggesting, and Forbidding Tool Use

- Force: when inputs match clear predicates (e.g., “What’s the weather in X?”), set a system rule or router to force the call.

- Suggest: include high-quality, compact tool descriptions and examples so the model autonomously chooses well.

- Forbid: when the user asks for opinions or free-form generative text, disable tools for that turn, since they add latency without value.
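
One way to wire these three modes is a small policy function that runs before the model call. The regular expressions and the `ToolPolicy` enum below are illustrative assumptions; many provider APIs expose a comparable tool-choice setting, and the mapping to it is left out because it is provider-specific.

```python
import re
from enum import Enum

class ToolPolicy(Enum):
    FORCE = "force"
    SUGGEST = "suggest"
    FORBID = "forbid"

# Hypothetical predicates; real systems would use a router model or richer rules.
WEATHER_RE = re.compile(r"\bweather in \w+", re.IGNORECASE)
OPINION_RE = re.compile(r"\b(what do you think|your opinion|write (me )?a (poem|story))\b",
                        re.IGNORECASE)

def choose_policy(user_text: str):
    """Return (policy, forced_tool_name_or_None) for this turn."""
    if WEATHER_RE.search(user_text):
        return ToolPolicy.FORCE, "get_weather"
    if OPINION_RE.search(user_text):
        return ToolPolicy.FORBID, None
    return ToolPolicy.SUGGEST, None

print(choose_policy("What's the weather in Paris?"))
```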

Designing for Multi-Tool Plans

- Decomposition: let the model produce a short plan sketch before calling anything.

- Parallel steps: mark independent subgoals so the orchestrator can fan out.

- Budgeting: attach a cost/time budget to the plan; halt if exceeded.

- Checkpoints: persist intermediate results for explainability and retries.
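
Budgeting and checkpoints can be sketched as a thin executor around the plan. The step format (`id`, `tool`, `args`, `est_cost`) and the default budgets are assumptions for this example, and the checkpoints live in a list where a real system would persist them.

```python
import time

def run_plan(steps, handlers, max_cost=1.0, max_seconds=10.0):
    """Execute steps in order, checkpointing results and halting on budget overrun."""
    started = time.monotonic()
    spent, checkpoints = 0.0, []
    for step in steps:
        over_cost = spent + step["est_cost"] > max_cost
        over_time = time.monotonic() - started > max_seconds
        if over_cost or over_time:
            return {"status": "halted",
                    "reason": "cost" if over_cost else "time",
                    "checkpoints": checkpoints}
        result = handlers[step["tool"]](**step["args"])
        spent += step["est_cost"]
        checkpoints.append({"step": step["id"], "result": result})
    return {"status": "done", "checkpoints": checkpoints}
```

Because a halted run still returns its checkpoints, a retry can resume from the last completed step instead of repeating earlier calls.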

Common Pitfalls

- Overbroad tools: a single “do_everything” function leads to ambiguity and weak priors.

- Unvalidated free text: passing raw model output into shells, SQL, or HTTP without checks.

- Silent failures: swallowing tool errors and returning confident but wrong answers.

- Leaky retries: retrying non-idempotent operations (payments, writes) without safeguards.

- Underspecified enums: models guess arbitrary strings that don’t map to your backend.

Choosing the Right Abstraction

Ask:

- What is the risk if the model fabricates arguments?

- Do I need vendor portability or am I fine with a provider-specific function API?

- Are my tools deterministic and cacheable, or slow and stochastic?

- Who debugs incidents at 2 a.m.? Favor structures that make traces self-explanatory.

A pragmatic path is to start with function calling for core capabilities (search, retrieval, CRUD on internal systems) and add flexible tool-use protocols around it for exploratory or UI-integrated features.

Conclusion

Function calling gives you strong contracts, safety, and observability. General tool use gives you flexibility and portability. Most real systems combine both: strict functions for the backbone and flexible tools for edge creativity. Treat tool contracts like APIs: version them, test them, monitor them, and secure them. With solid schemas, careful orchestration, and robust evaluation, LLMs become dependable operators—not just eloquent narrators.
