From Dataset to Endpoint: A Practical AI Text Classification API Tutorial

Build, deploy, and scale a production-ready AI text classification API with Python and FastAPI: training, serving, security, metrics, and monitoring.

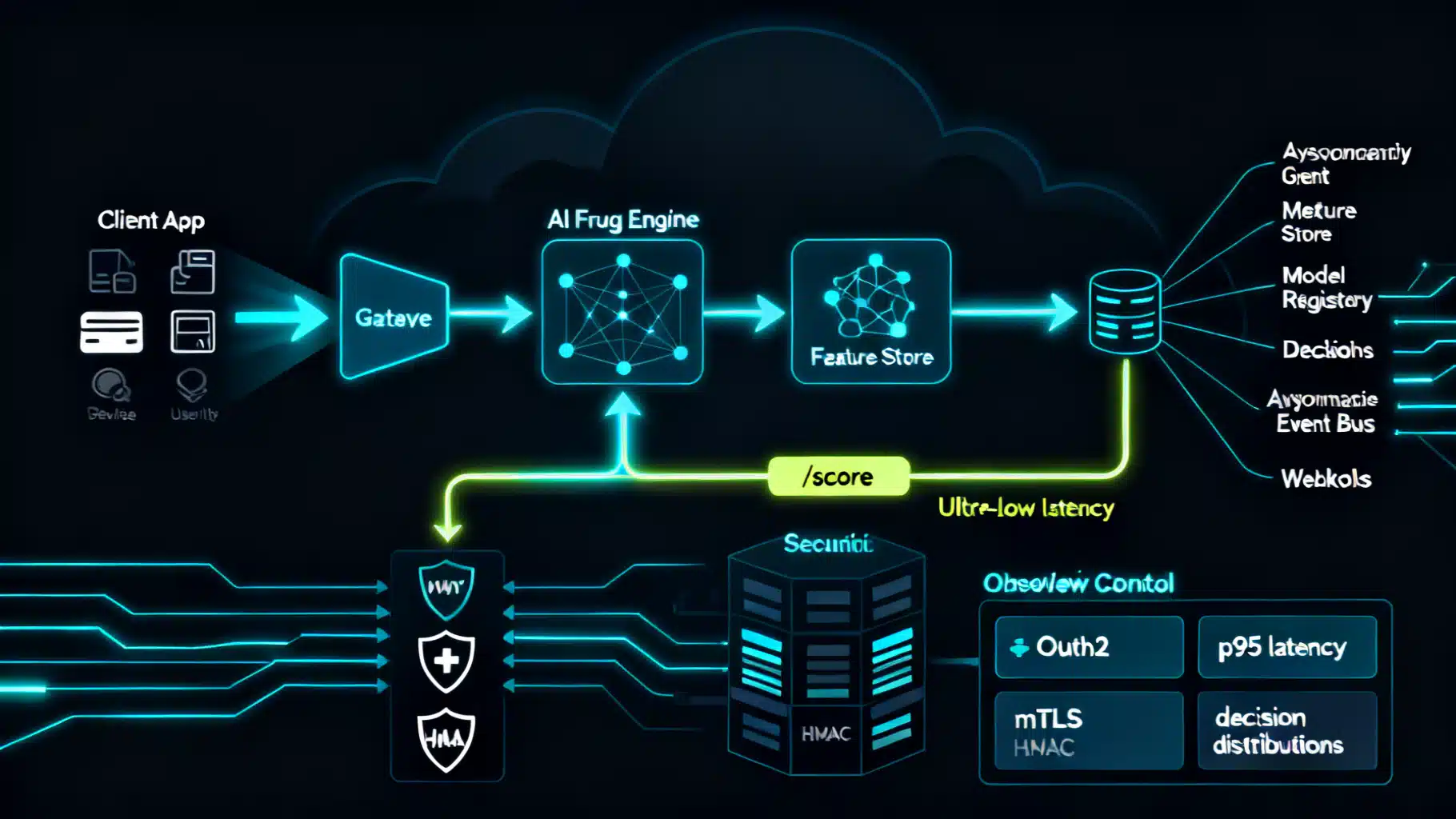

Image used for representation purposes only.

Overview

Building a text classification API is one of the fastest ways to bring NLP into a product. In this tutorial, you will design, train, and deploy a production-ready API that labels text (e.g., sentiment, topic, intent). You will get:

- A clear system architecture

- Training code for a strong baseline model

- A FastAPI microservice with secure endpoints

- Client examples (curl, Python, JavaScript)

- Guidance on evaluation, monitoring, and scaling

What you will build

We will ship a /v1/classify endpoint that:

- Supports single-label classification out of the box, with a documented path to multi-label

- Returns top_k labels with confidence scores (calibrated once you add the calibration step)

- Enforces API-key authentication

- Logs structured events for monitoring

- Scales horizontally (container-friendly)

Prerequisites

- Python 3.10+

- Basic familiarity with virtualenv or uv

- A labeled dataset (CSV or JSONL)

Recommended packages:

- scikit-learn (baseline modeling)

- pandas, numpy (data wrangling)

- fastapi, uvicorn (serving)

- pydantic (validation)

- joblib (model persistence)

Choosing your classification setup

Before writing code, lock down these design decisions:

- Label cardinality

  - Single-label: exactly one label per text (e.g., topic).

  - Multi-label: zero or more labels per text (e.g., safety categories).

- Data size and budget

  - Under 50k examples: start with TF-IDF + linear classifier; cheap and fast.

  - Over 50k examples or nuanced language: consider transformer-based fine-tuning.

- Latency vs. accuracy

  - On-CPU, sub-50 ms: linear models.

  - On-GPU, 50–200 ms: small transformers (e.g., DistilBERT-class).

- Adaptability

  - Zero-shot (prompt or entailment) to start quickly.

  - Fine-tune for stable, repeatable performance.

Tip: launch with a linear baseline, then A/B test a transformer upgrade.

Data preparation and labeling

- Normalize text: lowercase, strip excessive whitespace; preserve emojis if informative.

- De-duplicate; remove near-duplicates to avoid leakage.

- Handle class imbalance: stratify splits; consider class weights.

- Split: train/validation/test (e.g., 80/10/10) with stratification.

- For multi-label: store labels as lists.

Example CSV schema:

- text: the raw input

- labels: comma-separated list (for multi-label) or single value
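The preparation steps above can be sketched with the standard library alone. This is a minimal illustration rather than the tutorial's own pipeline: `normalize`, `dedupe`, and `stratified_split` are hypothetical helper names, the 80/10/10 ratio is taken from the example above, and de-duplication here is exact-match only (near-duplicate detection would need a similarity method on top).

```python
import random
import re
from collections import defaultdict


def normalize(text: str) -> str:
    """Lowercase and collapse runs of whitespace; emojis are left untouched."""
    return re.sub(r"\s+", " ", text.strip().lower())


def dedupe(rows):
    """Drop rows whose normalized text was already seen (exact match only)."""
    seen, out = set(), []
    for text, label in rows:
        key = normalize(text)
        if key not in seen:
            seen.add(key)
            out.append((key, label))
    return out


def stratified_split(rows, ratios=(0.8, 0.1, 0.1), seed=42):
    """Split (text, label) rows into train/val/test, label by label."""
    by_label = defaultdict(list)
    for row in rows:
        by_label[row[1]].append(row)
    rng = random.Random(seed)
    train, val, test = [], [], []
    for label in sorted(by_label):
        group = by_label[label]
        rng.shuffle(group)
        n_train = int(len(group) * ratios[0])
        n_val = int(len(group) * ratios[1])
        train += group[:n_train]
        val += group[n_train:n_train + n_val]
        test += group[n_train + n_val:]  # remainder goes to the test set
    return train, val, test
```

Splitting per label keeps rare classes represented in every split, which is the point of stratification; very small classes may still end up with no validation rows.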

Train a lightweight baseline (scikit-learn)

The following code trains a TF-IDF + logistic regression classifier and persists the fitted pipeline (vectorizer and model together) along with the class list. It supports single-label classification; for multi-label, wrap the classifier in OneVsRestClassifier.

# train.py
import os

import joblib
import numpy as np
import pandas as pd
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import classification_report
from sklearn.model_selection import train_test_split
from sklearn.pipeline import Pipeline
from sklearn.utils.class_weight import compute_class_weight

# 1) Load data
# CSV columns: 'text', 'label'
df = pd.read_csv('data/train.csv')
X = df['text'].astype(str)
y = df['label'].astype(str)

# 2) Split (stratified so each class keeps its share in both sets)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)

# 3) Compute class weights (helps on imbalance)
classes = np.unique(y_train)
class_weights = compute_class_weight('balanced', classes=classes, y=y_train)
class_weight_map = {c: w for c, w in zip(classes, class_weights)}

# 4) Build pipeline
pipe = Pipeline([
    ('tfidf', TfidfVectorizer(
        ngram_range=(1, 2),
        min_df=2,
        max_features=100_000,
        sublinear_tf=True
    )),
    ('clf', LogisticRegression(
        max_iter=200,
        n_jobs=-1,
        class_weight=class_weight_map
    ))
])

# 5) Train
pipe.fit(X_train, y_train)

# 6) Evaluate
pred = pipe.predict(X_test)
print(classification_report(y_test, pred, digits=3))

# 7) Persist
os.makedirs('artifacts', exist_ok=True)  # joblib.dump fails if the folder is missing
joblib.dump(pipe, 'artifacts/model.joblib')
# Save the classes in the order predict_proba uses for its columns
joblib.dump(pipe.classes_, 'artifacts/classes.joblib')

Notes:

- For multi-label: use OneVsRestClassifier(LogisticRegression(…)) and store a binarizer for labels.

- For calibration: wrap with CalibratedClassifierCV to improve probability quality.

Serving the model as an API (FastAPI)

We will implement:

- POST /v1/classify: single text classification

- POST /v1/batch: batch classification

- GET /health: health check

Security: require an API key via the header x-api-key.

# app.py
import os
import secrets
import time
from typing import List, Optional

import joblib
import numpy as np
from fastapi import FastAPI, HTTPException, Header
from pydantic import BaseModel, Field, conint, confloat

MODEL_PATH = 'artifacts/model.joblib'
CLASSES_PATH = 'artifacts/classes.joblib'
# Development default only; set API_KEY in every real deployment.
API_KEY = os.getenv('API_KEY', 'dev-key-change-me')

# Load once at import time so all requests share the same objects.
pipe = joblib.load(MODEL_PATH)
classes = joblib.load(CLASSES_PATH)

app = FastAPI(title='Text Classification API', version='1.0.0')


class ClassifyRequest(BaseModel):
    text: str = Field(..., min_length=1, max_length=10000)
    top_k: conint(ge=1, le=10) = 3
    threshold: confloat(ge=0.0, le=1.0) = 0.0


class LabelScore(BaseModel):
    label: str
    score: float


class ClassifyResponse(BaseModel):
    labels: List[LabelScore]
    top_k: int
    model_version: str
    latency_ms: int


class BatchRequest(BaseModel):
    # min_items/max_items are the Pydantic v1 names; v2 renames them to
    # min_length/max_length.
    texts: List[str] = Field(..., min_items=1, max_items=512)
    top_k: conint(ge=1, le=10) = 3
    threshold: confloat(ge=0.0, le=1.0) = 0.0


class BatchResponseItem(BaseModel):
    index: int
    labels: List[LabelScore]


class BatchResponse(BaseModel):
    results: List[BatchResponseItem]
    model_version: str


def auth_guard(key: Optional[str]):
    # Constant-time comparison avoids leaking the key through response timing.
    if key is None or not secrets.compare_digest(key.encode(), API_KEY.encode()):
        raise HTTPException(status_code=401, detail='Invalid or missing API key')


def score_texts(texts):
    """Return an (n_texts, n_classes) matrix of scores in [0, 1]."""
    if hasattr(pipe, 'predict_proba'):
        return pipe.predict_proba(texts)
    # Fallback via decision_function -> min-max scale per row.
    # These values rank labels but are not calibrated probabilities.
    scores = np.asarray(pipe.decision_function(texts))
    if scores.ndim == 1:  # binary models return a single score per text
        scores = np.column_stack([-scores, scores])
    row_min = scores.min(axis=1, keepdims=True)
    row_max = scores.max(axis=1, keepdims=True)
    return (scores - row_min) / (row_max - row_min + 1e-8)


def top_labels(probs, top_k, threshold):
    # Rank labels, then apply threshold and top_k
    ranked = sorted(zip(classes, probs), key=lambda x: x[1], reverse=True)
    return [
        LabelScore(label=str(lab), score=float(sc))
        for lab, sc in ranked if sc >= threshold
    ][:top_k]


@app.get('/health')
def health():
    return {'ok': True, 'model_loaded': True}


@app.post('/v1/classify', response_model=ClassifyResponse)
def classify(req: ClassifyRequest, x_api_key: Optional[str] = Header(None)):
    auth_guard(x_api_key)
    start = time.perf_counter()
    probs = score_texts([req.text])[0]
    labels = top_labels(probs, req.top_k, req.threshold)
    latency = int((time.perf_counter() - start) * 1000)
    return ClassifyResponse(
        labels=labels,
        top_k=req.top_k,
        model_version=app.version,
        latency_ms=latency
    )


@app.post('/v1/batch', response_model=BatchResponse)
def batch(req: BatchRequest, x_api_key: Optional[str] = Header(None)):
    auth_guard(x_api_key)
    matrix = score_texts(req.texts)
    results = [
        BatchResponseItem(index=i, labels=top_labels(probs, req.top_k, req.threshold))
        for i, probs in enumerate(matrix)
    ]
    return BatchResponse(results=results, model_version=app.version)

Run locally:

export API_KEY='your-secret-key'
uvicorn app:app --host 0.0.0.0 --port 8000 --workers 2

Try it out

- Single request (curl):

curl -X POST 'http://localhost:8000/v1/classify' \
  -H 'Content-Type: application/json' \
  -H 'x-api-key: your-secret-key' \
  -d '{"text": "Love the product, shipping was fast!", "top_k": 3, "threshold": 0.05}'

- Python client:

import requests

payload = {
    'text': 'Love the product, shipping was fast!',
    'top_k': 3,
    'threshold': 0.05
}

resp = requests.post(
    'http://localhost:8000/v1/classify',
    headers={'x-api-key': 'your-secret-key'},
    json=payload
)
print(resp.json())

- JavaScript (fetch):

const resp = await fetch('http://localhost:8000/v1/classify', {
  method: 'POST',
  headers: {
    'Content-Type': 'application/json',
    'x-api-key': 'your-secret-key'
  },
  body: JSON.stringify({ text: 'Need help with my order', top_k: 2, threshold: 0.1 })
});

const data = await resp.json();
console.log(data);

Response schema (example)

{
  "labels": [
    { "label": "positive", "score": 0.92 },
    { "label": "shipping", "score": 0.11 }
  ],
  "top_k": 3,
  "model_version": "1.0.0",
  "latency_ms": 12
}

Confidence and thresholds

- Calibrate scores: apply Platt scaling or isotonic regression (CalibratedClassifierCV) using validation data.

- Choose threshold: optimize for business metric (e.g., F1, precision at K, or alert cost).

- For multi-label: set per-label thresholds based on PR curves; rare labels often need a cutoff other than 0.5 (higher when class weighting inflates scores, lower when recall matters most).
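One way to choose a cutoff is to scan candidate thresholds on validation data and keep the one that maximizes the target metric. The sketch below does this for F1 on a single label, using only the standard library; `f1_at_threshold` and `best_threshold` are hypothetical helper names, and the validation scores are made up for illustration.

```python
def f1_at_threshold(scores, truths, threshold):
    """F1 for one label when predicting positive where score >= threshold."""
    tp = sum(1 for s, t in zip(scores, truths) if s >= threshold and t)
    fp = sum(1 for s, t in zip(scores, truths) if s >= threshold and not t)
    fn = sum(1 for s, t in zip(scores, truths) if s < threshold and t)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def best_threshold(scores, truths):
    """Try each observed score as a cutoff and keep the one with the highest F1."""
    candidates = sorted(set(scores))
    return max(candidates, key=lambda th: f1_at_threshold(scores, truths, th))


# Illustrative validation scores and ground truth for one label
val_scores = [0.10, 0.40, 0.35, 0.80, 0.65, 0.90]
val_truths = [0, 0, 1, 1, 1, 1]
cutoff = best_threshold(val_scores, val_truths)
print(cutoff, round(f1_at_threshold(val_scores, val_truths, cutoff), 3))
```

For multi-label models, the same scan can be run once per label to build a threshold per class. Swapping F1 for precision at a fixed recall, or for a cost function, only changes the scoring function.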

Evaluation: metrics that matter

- Accuracy (single-label, balanced classes)

- Precision, recall, F1 (macro and weighted)

- ROC-AUC (per class) and PR-AUC (preferred on imbalance)

- Confusion matrix to spot systematic errors

Example evaluation snippet:

# Continues from train.py: reuses y_test, pred, and classes
from sklearn.metrics import classification_report, confusion_matrix
import matplotlib.pyplot as plt
import seaborn as sns

print(classification_report(y_test, pred, digits=3))

cm = confusion_matrix(y_test, pred, labels=classes)
sns.heatmap(cm, annot=False, cmap='Blues', xticklabels=classes, yticklabels=classes)
plt.xlabel('Predicted')
plt.ylabel('True')
plt.tight_layout()
plt.show()

Monitoring in production

Log structured events for each request. Store minimal PII; hash user IDs if needed.

Suggested event fields:

- timestamp, request_id

- model_version, route

- text_hash (not raw text), text_length

- top_k, threshold

- predicted_labels, scores

- latency_ms, status_code

Example log (JSON):

{
  "ts": "2026-05-15T17:22:01Z",
  "route": "/v1/classify",
  "model_version": "1.0.0",
  "text_len": 46,
  "predicted": [
    { "label": "positive", "score": 0.92 }
  ],
  "latency_ms": 14,
  "status_code": 200
}
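A log record like the one above could be assembled as follows. This is a sketch under assumptions: `build_event` is a hypothetical helper, SHA-256 is one reasonable choice for `text_hash`, and the field names follow the suggested list rather than any fixed standard.

```python
import hashlib
import json
import time
import uuid


def build_event(text, route, model_version, labels, latency_ms, status_code,
                top_k=3, threshold=0.0):
    """Assemble one log record; the raw text is replaced by a SHA-256 digest."""
    return {
        "ts": time.strftime("%Y-%m-%dT%H:%M:%SZ", time.gmtime()),
        "request_id": str(uuid.uuid4()),
        "route": route,
        "model_version": model_version,
        "text_hash": hashlib.sha256(text.encode("utf-8")).hexdigest(),
        "text_len": len(text),
        "top_k": top_k,
        "threshold": threshold,
        "predicted": labels,
        "latency_ms": latency_ms,
        "status_code": status_code,
    }


event = build_event(
    text="Love the product, shipping was fast!",
    route="/v1/classify",
    model_version="1.0.0",
    labels=[{"label": "positive", "score": 0.92}],
    latency_ms=14,
    status_code=200,
)
print(json.dumps(event))  # one JSON object per line is easy to ship to a log pipeline
```

A plain hash of a short, common input can be reversed by hashing candidate texts, so a keyed hash (for example `hmac` with a secret) may be a better fit where inputs are sensitive.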

Drift detection:

- Track label distribution over time vs. baseline (KL divergence or PSI).

- Track average confidence; sudden drops can signal drift.

- Shadow deploy new models and compare offline before switching traffic.
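The label-distribution check can be sketched with the Population Stability Index, computed as the sum over labels of (current share minus baseline share) times the log of their ratio. The helper names below are hypothetical, and the small floor `eps` is an assumption to avoid taking the log of zero when a label is absent from one window.

```python
import math
from collections import Counter


def label_shares(labels, all_labels, eps=1e-6):
    """Fraction of predictions per label, floored at eps to avoid log(0)."""
    counts = Counter(labels)
    total = max(len(labels), 1)
    return {lab: max(counts[lab] / total, eps) for lab in all_labels}


def psi(baseline_labels, current_labels):
    """Population Stability Index between two lists of predicted labels."""
    all_labels = sorted(set(baseline_labels) | set(current_labels))
    base = label_shares(baseline_labels, all_labels)
    cur = label_shares(current_labels, all_labels)
    return sum(
        (cur[lab] - base[lab]) * math.log(cur[lab] / base[lab])
        for lab in all_labels
    )


baseline = ["positive"] * 50 + ["negative"] * 50
this_week = ["positive"] * 90 + ["negative"] * 10
print(round(psi(baseline, this_week), 3))
```

A commonly quoted rule of thumb treats values above roughly 0.25 as a significant shift, but the alert level is best set from your own history of week-to-week variation.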

Scaling and performance

- Server tuning

  - Use uvicorn workers equal to CPU cores for CPU-bound linear models.

  - Pin TF-IDF to CPU; it is fast and memory-light.

- Batching

  - For high QPS, implement micro-batching in /v1/batch; amortize vectorization cost.

- Caching

  - Cache frequent short inputs (e.g., FAQs) with an LRU cache keyed by a text hash.

- Memory

  - Limit max_features in TF-IDF; monitor RSS.

- Containerization

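# Dockerfile
FROM python:3.11-slim
WORKDIR /app

# Install dependencies first so this layer is cached across code changes
COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt

COPY artifacts/ artifacts/
COPY app.py .

# Placeholder only; pass the real key at runtime (e.g., docker run -e API_KEY=...)
ENV API_KEY=change-me
EXPOSE 8000
CMD ["uvicorn", "app:app", "--host", "0.0.0.0", "--port", "8000", "--workers", "2"]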
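The caching idea from the list above (an LRU cache keyed by a text hash) can be sketched in a few lines. `PredictionCache` is a hypothetical class, not part of app.py, and `fake_classify` merely stands in for the model call.

```python
import hashlib
from collections import OrderedDict


class PredictionCache:
    """Small in-process LRU cache keyed by a SHA-256 hash of the input text."""

    def __init__(self, max_items=1024):
        self.max_items = max_items
        self._store = OrderedDict()

    @staticmethod
    def _key(text):
        return hashlib.sha256(text.encode("utf-8")).hexdigest()

    def get_or_compute(self, text, compute):
        key = self._key(text)
        if key in self._store:
            self._store.move_to_end(key)  # mark as most recently used
            return self._store[key]
        value = compute(text)
        self._store[key] = value
        if len(self._store) > self.max_items:
            self._store.popitem(last=False)  # evict the least recently used entry
        return value


calls = []


def fake_classify(text):
    """Stand-in for the model call; records how often it actually runs."""
    calls.append(text)
    return [("positive", 0.9)]


cache = PredictionCache(max_items=2)
cache.get_or_compute("where is my order?", fake_classify)
cache.get_or_compute("where is my order?", fake_classify)  # served from cache
print(len(calls))
```

Caching the full score vector and applying top_k and threshold afterwards keeps one entry valid for every combination of request parameters. Each uvicorn worker holds its own copy of this cache, and entries should be dropped when the model version changes.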

Versioning and backward compatibility

- Route-version your API (e.g., /v1/…).

- Semantic version your model (major.minor.patch) and return the version in responses.

- When adding labels, consider a mapping layer so client code does not break.
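A mapping layer for newly added labels might look like the sketch below. The label names and `V1_LABEL_MAP` are invented for illustration, and keeping the highest score per merged label is one possible policy (summing scores is another).

```python
# Hypothetical mapping from labels introduced by a newer model to the
# labels that v1 clients already understand.
V1_LABEL_MAP = {
    "shipping_delay": "shipping",
    "shipping_damage": "shipping",
}


def to_v1_labels(labels):
    """Collapse new labels onto v1 labels, keeping the highest score per label."""
    merged = {}
    for item in labels:
        name = V1_LABEL_MAP.get(item["label"], item["label"])
        merged[name] = max(merged.get(name, 0.0), item["score"])
    return sorted(
        ({"label": name, "score": score} for name, score in merged.items()),
        key=lambda entry: entry["score"],
        reverse=True,
    )


new_model_output = [
    {"label": "shipping_delay", "score": 0.6},
    {"label": "positive", "score": 0.3},
    {"label": "shipping_damage", "score": 0.1},
]
print(to_v1_labels(new_model_output))
```

Applying the mapping only on the /v1 route lets a later route version expose the finer-grained labels without breaking existing clients.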

Security and governance

- Authentication: API key in x-api-key; rotate keys periodically.

- Authorization: per-key rate limits and quotas.

- Privacy: redact PII pre-ingest when possible; log hashes, not payloads.

- Compliance: document training data sources and retention policies.
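Per-key rate limiting is often implemented as a token bucket. The following is a minimal in-process sketch, not part of app.py: `TokenBucket` and `check_rate_limit` are hypothetical names, and the default of 5 requests per second with bursts of 10 is an arbitrary assumption.

```python
import time


class TokenBucket:
    """Allow `rate` requests per second on average, with bursts up to `capacity`."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self):
        now = self.clock()
        # Refill in proportion to elapsed time, capped at the bucket size.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


buckets = {}  # one bucket per API key, held in process memory


def check_rate_limit(api_key, rate=5.0, capacity=10):
    """Return True if this key may proceed; the caller would answer 429 otherwise."""
    if api_key not in buckets:
        buckets[api_key] = TokenBucket(rate, capacity)
    return buckets[api_key].allow()
```

Because the buckets live in one process, each uvicorn worker enforces its own limit; a shared store would be needed for a limit that holds across workers or containers.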

Testing and CI/CD

- Unit tests for preprocessing and label mapping.

- Contract tests: validate response schema, error codes, and headers.

- Load tests: measure p50/p95/p99 latencies and throughput.

- Canary releases: ship new model to a small slice of traffic first.
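A contract test for /v1/classify can be as simple as a function that checks a decoded response against the documented schema. The sketch below uses only the standard library; `check_classify_response` is a hypothetical helper, and the rules it enforces are inferred from the response example earlier in this tutorial.

```python
def check_classify_response(payload):
    """Return a list of contract violations; an empty list means the payload passes."""
    errors = []
    for field, kind in (("labels", list), ("top_k", int),
                        ("model_version", str), ("latency_ms", int)):
        if not isinstance(payload.get(field), kind):
            errors.append(f"{field} missing or not {kind.__name__}")
    labels = payload.get("labels")
    if isinstance(labels, list):
        if isinstance(payload.get("top_k"), int) and len(labels) > payload["top_k"]:
            errors.append("more labels than top_k")
        scores = []
        for item in labels:
            if not isinstance(item, dict) or not isinstance(item.get("label"), str):
                errors.append("label entry malformed")
                continue
            score = item.get("score")
            if not isinstance(score, (int, float)) or not 0.0 <= score <= 1.0:
                errors.append(f"score out of range for {item['label']}")
            else:
                scores.append(score)
        if scores != sorted(scores, reverse=True):
            errors.append("labels not sorted by descending score")
    return errors


good = {
    "labels": [
        {"label": "positive", "score": 0.92},
        {"label": "shipping", "score": 0.11},
    ],
    "top_k": 3,
    "model_version": "1.0.0",
    "latency_ms": 12,
}
print(check_classify_response(good))
```

In a test suite, the same function can run against a live response fetched with an HTTP client, alongside assertions on status codes such as 401 for a missing key.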

Extending to multi-label

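- Replace the classifier with OneVsRestClassifier(LogisticRegression(…)) or OneVsRestClassifier(LinearSVC(…)). LinearSVC has no predict_proba, so the API falls back to decision_function scores.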

- Use MultiLabelBinarizer to encode labels.

- Threshold per class (vector) instead of a single global cutoff.
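Applying a threshold per class, rather than one global cutoff, could look like this. The label names, cutoffs, and the `apply_thresholds` helper are illustrative assumptions; in practice the thresholds would come from validation data.

```python
def apply_thresholds(scores, thresholds, default=0.5):
    """Keep every label whose score clears its own cutoff (zero or more labels)."""
    kept = [
        (label, score)
        for label, score in scores.items()
        if score >= thresholds.get(label, default)
    ]
    return sorted(kept, key=lambda pair: pair[1], reverse=True)


# Hypothetical per-label cutoffs and one text's scores
thresholds = {"toxicity": 0.30, "spam": 0.70}
scores = {"toxicity": 0.42, "spam": 0.55, "self_harm": 0.10}
print(apply_thresholds(scores, thresholds))
```

Unlike the single-label path, the result may be empty or contain several labels, so clients should not assume exactly one label comes back.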

Troubleshooting checklist

- Low recall on minority classes: increase class_weight, collect more data, tune thresholds.

- Overfitting: reduce max_features, add regularization (C parameter), use stratified CV.

- Unstable probabilities: apply calibration and use larger validation sets.

- Unexpected latency spikes: cap text length server-side; drop huge payloads with 413.

Next steps

- Swap the baseline with a distilled transformer for a quality boost.

- Add explanation hooks: return top features (for linear models) or attention-based rationale.

- Build an annotation loop: feed low-confidence cases back to labeling.

With this foundation, you have a reliable, secure, and observable text classification API that can evolve as your data and requirements grow.
