Designing API Throttling and User Tiers: A Practical Guide for Scalable Platforms

Design robust API throttling and user tier management with algorithms, policies, headers, and billing integration.

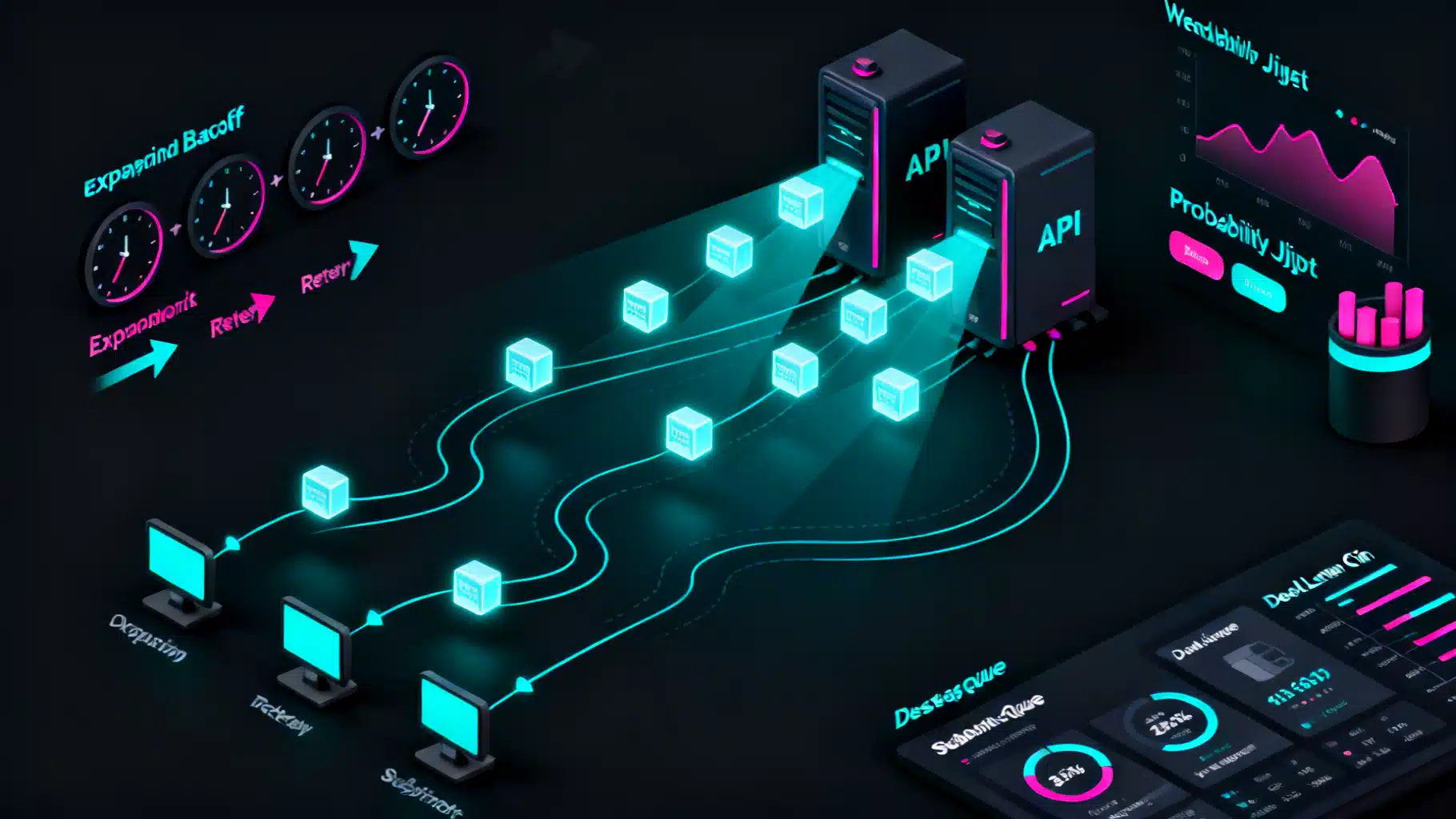


Overview

API throttling and user tier management are two sides of the same coin: protecting your platform while delivering predictable performance and a sustainable business model. Throttling controls the rate of requests and resource usage; tiers define who gets how much, under what conditions, and at what price. When these systems are designed together, you get fairness for everyone, safety for your backend, and clear value for paying customers.

This guide walks through design goals, algorithms, policy modeling, implementation patterns, observability, and edge cases—plus practical code/config snippets you can adapt today.

Goals and guardrails

- Protect core resources from traffic spikes and abuse.

- Deliver consistent latency and error rates (SLOs) during peak times.

- Create clear upgrade paths mapped to business value.

- Offer transparent, developer-friendly limits that are easy to reason about.

- Remain cost-aware: limit what is expensive (CPU, DB, bandwidth) rather than only request counts.

What to throttle: dimensions and meters

Different workloads require different meters. Mix and match:

- Request rate: requests per second/minute (RPS/RPM).

- Concurrency: concurrent requests, connections, or tasks.

- Data volume: bytes per second or daily GB.

- Operation cost: weighted points per endpoint (e.g., 1 point for GET /users, 10 for POST /reports).

- Special cases: long polling, server-sent events, WebSockets, GraphQL cost, batch endpoints.

Tip: use operation-cost points for “expensive” endpoints so Free tier users can still explore without creating hotspots.
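A minimal sketch of operation-cost metering, assuming a hypothetical static cost table keyed by method and route. The 1- and 10-point weights mirror the examples above. The default cost and the naive route normalization are illustrative assumptions.

```javascript
// Hypothetical cost table: points charged per call, keyed by "METHOD route".
const COSTS = {
  "GET /users": 1,
  "GET /users/:id": 1,
  "POST /reports": 10,
};
const DEFAULT_COST = 1; // assumption: unlisted routes cost 1 point

// Naive normalization: /users/42 -> /users/:id (numeric path segments only).
function routeOf(path) {
  return path.replace(/\/\d+(?=\/|$)/g, "/:id");
}

function costOf(method, path) {
  const key = `${method.toUpperCase()} ${routeOf(path)}`;
  return COSTS[key] ?? DEFAULT_COST;
}

// A tenant's spend is the sum of its calls' costs, not the number of calls.
function totalCost(calls) {
  return calls.reduce((sum, c) => sum + costOf(c.method, c.path), 0);
}
```

With this table, three calls (two user reads and one report) cost 12 points rather than 3. That gap is the signal a count-only limit would miss.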

Tier design: mapping value to limits

A clean tier model typically includes:

- Free: low rate + small monthly quota, burst allowed for snappy dev UX; strict concurrency caps.

- Pro: 5–10× Free limits, higher burst, access to heavier endpoints.

- Enterprise: negotiated limits per tenant; priority lanes and stricter SLOs.

Define limits on multiple axes to avoid single-metric blind spots:

- Rate: e.g., 5 RPS Free, 50 RPS Pro, 500 RPS Enterprise.

- Burst: tokens for short spikes (e.g., 20 for Free, 200 for Pro).

- Quota: monthly points or requests (e.g., 1M points Free, 30M Pro).

- Concurrency: max in-flight requests or jobs.

- Data: egress caps if bandwidth matters.
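One way to hold these multi-axis limits is a single config object per tier, with per-tenant overrides layered on top for negotiated contracts. In the sketch below, the field names and the `OVERRIDES` map are hypothetical. Free and Pro numbers follow the examples in this guide, and the Enterprise values other than 500 RPS are placeholders.

```javascript
// Per-tier limits across several axes. Enterprise values are placeholders.
const TIERS = Object.freeze({
  free: { rps: 5, burst: 20, monthlyPoints: 1_000_000, concurrency: 2, egressGBPerDay: 1 },
  pro: { rps: 50, burst: 200, monthlyPoints: 30_000_000, concurrency: 10, egressGBPerDay: 20 },
  enterprise: { rps: 500, burst: 2_000, monthlyPoints: 500_000_000, concurrency: 50, egressGBPerDay: 500 },
});

// Hypothetical per-tenant overrides, e.g. from a negotiated Enterprise contract.
const OVERRIDES = new Map([["org_123", { rps: 800, concurrency: 100 }]]);

// Effective limits = tier defaults with only the overridden axes replaced.
function limitsFor(tenantId, tier) {
  if (!Object.prototype.hasOwnProperty.call(TIERS, tier)) {
    throw new Error(`unknown tier: ${tier}`);
  }
  return { ...TIERS[tier], ...(OVERRIDES.get(tenantId) ?? {}) };
}
```

Merging per axis means a contract can raise RPS without silently dropping the tenant's burst or quota settings.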

Algorithms you actually need

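- Fixed window: simple counters per window (e.g., per minute). Pros: easy. Cons: window boundary spikes, because a client can send up to 2× the limit by bunching requests just before and just after a boundary.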

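- Sliding window: evaluates a rolling interval instead of aligned buckets. You can log each request's timestamp (exact, but memory grows with the limit) or weight the previous window's count by its remaining overlap (approximate, cheap). Either way, boundary spikes go away and fairness improves.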

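- Token bucket: tokens refill at a fixed rate up to the bucket's capacity, and each request spends one token (or several, with cost points). Clients can burst up to the capacity while the long-run average stays at the refill rate. Great for short spikes.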

- Leaky bucket: smooths out bursts by draining at a constant rate; good for shaping traffic.
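To make the boundary problem concrete, here is a small in-memory sketch (single process, no persistence) of a fixed-window counter next to a sliding-window counter. The sliding variant weights the previous window's count by how much of it still overlaps the rolling window. That weighting is an approximation and assumes the previous window's requests were evenly spread.

```javascript
// Fixed window: one counter per aligned window.
class FixedWindow {
  constructor(limit, windowMs) {
    this.limit = limit;
    this.windowMs = windowMs;
    this.windowStart = 0;
    this.count = 0;
  }
  allow(now) {
    const start = Math.floor(now / this.windowMs) * this.windowMs;
    if (start !== this.windowStart) { // new window: reset the counter
      this.windowStart = start;
      this.count = 0;
    }
    if (this.count >= this.limit) return false;
    this.count++;
    return true;
  }
}

// Sliding window counter: estimate = current count + previous count * overlap.
class SlidingWindowCounter {
  constructor(limit, windowMs) {
    this.limit = limit;
    this.windowMs = windowMs;
    this.windowStart = 0;
    this.curr = 0;
    this.prev = 0;
  }
  allow(now) {
    const start = Math.floor(now / this.windowMs) * this.windowMs;
    if (start !== this.windowStart) {
      // Exactly one window later: current becomes previous; otherwise both are stale.
      this.prev = start - this.windowStart === this.windowMs ? this.curr : 0;
      this.curr = 0;
      this.windowStart = start;
    }
    const overlap = 1 - (now - start) / this.windowMs; // share of prev still in range
    if (this.curr + this.prev * overlap >= this.limit) return false;
    this.curr++;
    return true;
  }
}

// Usage: 100 requests just before a minute boundary, 100 just after.
const burst = [...Array(100).fill(59_000), ...Array(100).fill(60_000)];
const fixed = new FixedWindow(100, 60_000);
const sliding = new SlidingWindowCounter(100, 60_000);
console.log(burst.filter((t) => fixed.allow(t)).length);   // 200
console.log(burst.filter((t) => sliding.allow(t)).length); // 100
```

The fixed window admits 200 requests within one second, twice the nominal limit. The sliding counter still counts the earlier 100 against the new window.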

A pragmatic combo:

- Token bucket for per-second rate/bursts.

- Sliding window for per-minute fairness.

- Separate counters for monthly quotas and daily egress.
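Below is a single-process sketch of that combo, assuming in-memory state. A real deployment would keep this state in Redis or similar, and the class and parameter names are hypothetical. Each request must pass three checks:

- a per-second token bucket;
- a per-minute sliding window, kept here as a log of timestamps;
- a monthly quota.

All three checks run before anything is charged, so a request rejected by one layer does not consume budget in the others.

```javascript
// One tenant's limiter state, held in memory for illustration only.
class CombinedLimiter {
  constructor({ rps, burst, perMinute, monthlyQuota }) {
    Object.assign(this, { rps, burst, perMinute, monthlyQuota });
    this.tokens = burst;    // token bucket starts full
    this.lastRefill = null; // ms timestamp of the previous check
    this.recent = [];       // sliding-window log: accepted timestamps, last 60 s
    this.monthUsed = 0;     // reset by a monthly job (not shown)
  }

  // `cost` is the request's point weight: the bucket and the quota charge it,
  // while the per-minute window counts requests.
  check(now, cost = 1) {
    if (this.lastRefill !== null) {
      const refill = (this.rps * (now - this.lastRefill)) / 1000;
      this.tokens = Math.min(this.burst, this.tokens + refill);
    }
    this.lastRefill = now;
    while (this.recent.length > 0 && this.recent[0] <= now - 60_000) this.recent.shift();

    // Evaluate every layer before charging any, so a rejection costs nothing.
    if (this.tokens < cost) return { allowed: false, reason: "rate" };
    if (this.recent.length >= this.perMinute) return { allowed: false, reason: "minute" };
    if (this.monthUsed + cost > this.monthlyQuota) return { allowed: false, reason: "quota" };

    this.tokens -= cost;
    this.recent.push(now);
    this.monthUsed += cost;
    return { allowed: true };
  }
}
```

Returning a `reason` lets the caller choose the right response headers: a short Retry-After for "rate" and "minute", or an upgrade hint for "quota".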

Policy matrix: tie endpoints to costs and identities

Define policies by:

- Identity: API key, OAuth client, user, organization, and optionally IP for abuse scenarios.

- Endpoint class: cheap reads, moderate writes, expensive reports.

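- Region: enforce limits within each region so checks stay local and fast. Decide whether a tenant's limit is global (split across regions) or per region, since per-region limits let a tenant multiply its allowance by spreading traffic.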

- Priority: enterprise traffic may have its own lane/queue.
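A sketch of resolving which identities a request is limited by, assuming hypothetical `orgId`, `userId`, `oauthClientId`, `apiKey`, and `ip` fields set by earlier auth middleware. The request must pass a separate limiter for every subject returned: the tenant's tier budget plus a coarse IP guard.

```javascript
// Return the limiter subjects for a request; each gets its own counter.
function limitSubjects(req) {
  // Budget at the broadest tenant identity known, so an org can't multiply
  // its allowance by minting extra keys or users.
  const tenant =
    (req.orgId && `org:${req.orgId}`) ||
    (req.userId && `user:${req.userId}`) ||
    (req.oauthClientId && `client:${req.oauthClientId}`) ||
    (req.apiKey && `key:${req.apiKey}`) ||
    null;

  const subjects = [];
  if (tenant) subjects.push({ key: tenant, policy: "tier" });
  // IP guard: a generous ceiling for known tenants (NATs and mobile carriers
  // share addresses), and the only limit for anonymous traffic.
  subjects.push({ key: `ip:${req.ip}`, policy: tenant ? "ip-abuse" : "anonymous" });
  return subjects;
}
```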

Example matrix snippet:

- Free: 5 RPS, burst 20, 1 concurrent report, 1M monthly points.

- Pro: 50 RPS, burst 200, 5 concurrent reports, 30M monthly points.

- Enterprise: negotiated per-tenant, with priority queueing.

Communicating limits: headers and status codes

Always return transparent limit info in responses:

- 429 Too Many Requests when blocked.

- Retry-After: how long the client should wait before trying again, as delay-seconds or an HTTP date.

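- RateLimit-Limit, RateLimit-Remaining, and RateLimit-Reset headers for clarity. These names come from the IETF httpapi RateLimit header fields draft, which is not yet final and has changed field names and syntax between revisions, so document which revision you implement.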

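Example response headers for a 300-requests-per-60-seconds policy with 120 requests left and 24 seconds until the window resets (field syntax as in the draft revisions that used separate fields):

```http
HTTP/1.1 200 OK
RateLimit-Limit: 300
RateLimit-Remaining: 120
RateLimit-Reset: 24
RateLimit-Policy: 300;w=60
```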
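A sketch of deriving these headers from limiter state. It assumes the field set from the IETF draft revisions that used separate `RateLimit-Limit`, `-Remaining`, `-Reset`, and `-Policy` fields; later revisions changed the syntax, so check what your clients expect. The `state` shape is hypothetical.

```javascript
// Build RateLimit-* headers (and Retry-After when blocked) from limiter state.
// `state` is hypothetical: { limit, windowSec, remaining, resetAtMs }.
function rateLimitHeaders(state, nowMs, blocked = false) {
  // Delta-seconds until reset; round up so clients never retry early.
  const resetSec = Math.max(0, Math.ceil((state.resetAtMs - nowMs) / 1000));
  const headers = {
    "RateLimit-Limit": String(state.limit),
    "RateLimit-Remaining": String(Math.max(0, state.remaining)),
    "RateLimit-Reset": String(resetSec),
    "RateLimit-Policy": `${state.limit};w=${state.windowSec}`,
  };
  if (blocked) headers["Retry-After"] = String(resetSec);
  return headers;
}
```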

Implementation patterns

You can enforce limits at different layers. Most mature systems use a hybrid.

- Edge/API gateway: best for global protection and uniform policy. Low latency, centralized logging.

- Service mesh/sidecar: good for per-service shaping and resilience.

- Application layer: needed for business-aware limits (e.g., cost points, tenant-level exceptions).

- Distributed store: Redis is common for counters and token buckets. Consider sharding keys and regional isolation.

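NGINX (or gateway) rate limiting example

NGINX documents limit_req as a leaky bucket; combined with burst and nodelay it behaves much like a token bucket. One tier is shown here. Per-tier limits need a zone per tier or a key-to-tier mapping.

```nginx
# http {} context. Key by API key (per-client limits).
# Fall back to the client IP when the header is missing: NGINX does not
# account requests whose key is empty, so they would bypass the limit.
# Assumes keys are validated elsewhere; otherwise clients can rotate fake keys.
map $http_x_api_key $api_key {
    ""      $binary_remote_addr;
    default $http_x_api_key;
}

limit_req_zone $api_key zone=pro_tier:10m rate=50r/s;
limit_req_status 429;  # the default rejection status is 503

server {
    location /api/ {
        limit_req zone=pro_tier burst=200 nodelay;  # allow short bursts
        proxy_pass http://app_backend;              # upstream defined elsewhere
    }
}
```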

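Redis Lua: sliding window log (simplified)

This variant keeps one sorted-set entry per accepted request, so it is exact, but its memory per key grows with the limit.

```lua
-- KEYS[1] = rate key (e.g., rate:{api_key})
-- ARGV[1] = now (ms), ARGV[2] = window (ms), ARGV[3] = limit
-- ARGV[4] = unique request id (two hits in the same ms must not share a member)
local now = tonumber(ARGV[1])
local window = tonumber(ARGV[2])
local limit = tonumber(ARGV[3])

-- Trim entries that have slid out of the window
redis.call('ZREMRANGEBYSCORE', KEYS[1], '-inf', now - window)

local count = redis.call('ZCARD', KEYS[1])
if count >= limit then
  -- Reject without recording, so rejected retries don't extend the lockout
  return {0, count}
end

-- Record the accepted hit: score = timestamp, member = unique id
redis.call('ZADD', KEYS[1], now, ARGV[1] .. '-' .. ARGV[4])
redis.call('PEXPIRE', KEYS[1], window)
return {1, count + 1}
```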

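Node.js Express middleware: token bucket with Redis (sketch)

```javascript
const Redis = require("ioredis"); // assumes the ioredis client
const redis = new Redis();        // connection options omitted

// Refill and spend inside one Lua script so concurrent requests can't
// interleave between a separate read (HGETALL) and write (HSET).
const TOKEN_BUCKET_LUA = `
local rate, capacity, now = tonumber(ARGV[1]), tonumber(ARGV[2]), tonumber(ARGV[3])
local data = redis.call('HMGET', KEYS[1], 'tokens', 'ts')
local tokens = tonumber(data[1]) or capacity
local ts = tonumber(data[2]) or now
tokens = math.min(capacity, tokens + rate * math.max(0, now - ts) / 1000)
local allowed = 0
if tokens >= 1 then tokens = tokens - 1; allowed = 1 end
redis.call('HSET', KEYS[1], 'tokens', tokens, 'ts', now)
redis.call('EXPIRE', KEYS[1], 60)
-- Lua numbers are truncated to integers in replies, so return a string
return {allowed, tostring(tokens)}
`;

async function tokenBucket(req, res, next) {
  const apiKey = req.get("X-API-Key");
  // Without this check, every keyless request would share one "tb:undefined" bucket
  if (!apiKey) return res.status(401).send("Missing API key");

  const rate = 50;      // tokens per second
  const capacity = 200; // burst size
  try {
    const [allowed, tokens] = await redis.eval(
      TOKEN_BUCKET_LUA, 1, `tb:${apiKey}`, rate, capacity, Date.now());
    if (allowed === 1) return next();
    // Seconds until one whole token has refilled
    const retry = Math.ceil((1 - parseFloat(tokens)) / rate);
    res.set("Retry-After", String(retry));
    return res.status(429).send("Too Many Requests");
  } catch (err) {
    return next(err); // surface Redis errors; failing open instead is a policy choice
  }
}
```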

Bursting, smoothing, and fairness

- Bursts create great UX for quick onboarding and CLI scripts. Token bucket with a moderate capacity (e.g., 4–5× the per-second rate) is a solid default.

- For background jobs, consider queue-based concurrency limits. Assign each tenant a concurrency token pool; workers acquire tokens before running jobs.

- If latency SLOs slip under load, switch to leaky-bucket shaping at the edge for smoothing.
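For the background-job case, here is a single-process sketch of a per-tenant concurrency pool. It assumes jobs are async functions and that the caller supplies a hypothetical `limitFor(tenant)` lookup. Across multiple workers, the counts would have to live in a shared store with lease expiry so a crashed worker cannot leak slots; this sketch omits that.

```javascript
// Per-tenant concurrency pool: a job must hold a slot while it runs.
class TenantConcurrency {
  constructor(limitFor) {
    this.limitFor = limitFor;  // (tenant) => max concurrent jobs
    this.inFlight = new Map(); // tenant -> running count
    this.waiting = new Map();  // tenant -> queue of resolve callbacks
  }

  async acquire(tenant) {
    const running = this.inFlight.get(tenant) ?? 0;
    if (running < this.limitFor(tenant)) {
      this.inFlight.set(tenant, running + 1);
      return;
    }
    // Park until release() hands this tenant's next free slot over.
    await new Promise((resolve) => {
      const q = this.waiting.get(tenant) ?? [];
      q.push(resolve);
      this.waiting.set(tenant, q);
    });
  }

  release(tenant) {
    const q = this.waiting.get(tenant);
    if (q && q.length > 0) {
      q.shift()(); // slot passes straight to the next waiter; count unchanged
      return;
    }
    this.inFlight.set(tenant, (this.inFlight.get(tenant) ?? 1) - 1);
  }

  async run(tenant, job) {
    await this.acquire(tenant);
    try {
      return await job();
    } finally {
      this.release(tenant);
    }
  }
}
```

Because each tenant has its own count and queue, a Free tenant saturating its slots never delays another tenant's jobs.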

Quotas vs. throttles

- Throttles protect instant capacity (seconds to minutes).

- Quotas protect budget/cost (days to months).

Patterns that work well together:

- Monthly quota of “points” aligned to tier pricing.

- Daily guardrails to prevent draining the month in hours.

- Soft-limit alerts at 80/90/100% via email/webhooks; configurable auto-upgrade rules.

- Hard-stop with grace period for Free; overage billing for Pro/Enterprise as contract allows.
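A sketch of a monthly point quota with a daily guardrail and one-time soft-limit alerts at 80/90/100%. The daily cap of three times an even daily share is an assumption. `allowOverage` switches between a hard stop and billable overage. The Free-tier grace period is left out, and the alert delivery (email/webhook) is not shown.

```javascript
const ALERT_THRESHOLDS = [0.8, 0.9, 1.0];

class Quota {
  constructor(monthlyPoints, daysInMonth, { dailyMultiplier = 3, allowOverage = false } = {}) {
    this.monthly = monthlyPoints;
    // Daily guardrail: a multiple of an even daily share of the month.
    this.dailyCap = Math.ceil((monthlyPoints / daysInMonth) * dailyMultiplier);
    this.allowOverage = allowOverage; // false = hard stop, true = bill overage
    this.monthUsed = 0;
    this.dayUsed = 0;         // reset each day by a scheduler (not shown)
    this.alerted = new Set(); // thresholds already notified this month
  }

  charge(points) {
    if (this.dayUsed + points > this.dailyCap) {
      return { allowed: false, reason: "daily", alerts: [] };
    }
    if (!this.allowOverage && this.monthUsed + points > this.monthly) {
      return { allowed: false, reason: "monthly", alerts: [] };
    }
    this.dayUsed += points;
    this.monthUsed += points;
    // Report each threshold once, the first time usage crosses it.
    const alerts = ALERT_THRESHOLDS.filter(
      (t) => this.monthUsed >= t * this.monthly && !this.alerted.has(t),
    );
    alerts.forEach((t) => this.alerted.add(t));
    return { allowed: true, overage: Math.max(0, this.monthUsed - this.monthly), alerts };
  }
}
```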

Observability and operations

Track at least:

- Per-tenant: RPS, p95/p99 latency, 4xx/5xx, 429 rate, concurrency, egress, cost points.

- Global: saturation of shared resources (DB connections, queue depth, cache hit rate).

- Limit headroom: percentiles of remaining tokens and quota, showing how close tenants run to their limits.

Operational practices:

- Feature flags to change limits safely without deploys.

- Per-tenant overrides for incident response.

- Audit logs for policy changes.

- Dashboards for Top N tenants by usage and by 429s.

Client guidance: make limits usable

Document clear client behaviors:

- Use exponential backoff with jitter on 429/503.

- Respect Retry-After and RateLimit-Reset.

- Cache GET responses, use ETags/If-None-Match.

- Prefer batch endpoints where supported; avoid chatty loops.

- Use idempotency keys for writes to avoid duplicate work after retries.
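A client-side sketch of these behaviors. It honors Retry-After (delay-seconds or HTTP date) when present; otherwise it falls back to exponential backoff with "full jitter", a random delay between zero and the capped backoff. `send` is assumed to resolve to a fetch-style response with `status` and `headers.get()`. The base, cap, and attempt count are illustrative.

```javascript
// Delay before the next attempt: Retry-After wins; otherwise full-jitter backoff.
function retryDelayMs(attempt, retryAfter, { baseMs = 500, capMs = 30_000, random = Math.random } = {}) {
  if (retryAfter != null) {
    const secs = Number(retryAfter);
    if (Number.isFinite(secs) && secs >= 0) return secs * 1000;
    const date = Date.parse(retryAfter); // Retry-After may be an HTTP date
    if (!Number.isNaN(date)) return Math.max(0, date - Date.now());
  }
  const backoff = Math.min(capMs, baseMs * 2 ** attempt);
  return Math.floor(random() * backoff);
}

// Retry on 429/503 only; any other status is returned to the caller.
async function withRetries(send, { maxAttempts = 5 } = {}) {
  for (let attempt = 0; ; attempt++) {
    const res = await send();
    const retryable = res.status === 429 || res.status === 503;
    if (!retryable || attempt + 1 >= maxAttempts) return res;
    const delay = retryDelayMs(attempt, res.headers.get("retry-after"));
    await new Promise((r) => setTimeout(r, delay));
  }
}
```

For writes, generate the idempotency key once, outside `send`, so every retry carries the same key. For example, with Node 18+ `fetch` and an illustrative URL: `const key = crypto.randomUUID(); await withRetries(() => fetch(url, { method: "POST", headers: { "Idempotency-Key": key } }));`.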

Security, abuse, and multi-tenant fairness

- Combine identity-based limits (API key/org) with IP-based heuristics for obvious abuse. Beware NATs and mobile networks.

- Device fingerprinting or HMAC-signed requests can help prevent key sharing.

- Implement anomaly detection (sudden 10× usage) and automated temporary clamps.

- For premium tenants, isolate with dedicated queues/compute to guarantee fairness.
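A sketch of the "sudden 10× usage" check. It compares each tenant's per-minute request count against an exponentially weighted moving average (EWMA) baseline and records a temporary clamp when the count exceeds it by the configured factor. The smoothing weight, minimum volume, and 15-minute clamp are illustrative assumptions. Applying a reduced limit while `isClamped()` is true is left to the caller.

```javascript
class SpikeDetector {
  constructor({ alpha = 0.1, factor = 10, minVolume = 100, clampMs = 15 * 60_000 } = {}) {
    Object.assign(this, { alpha, factor, minVolume, clampMs });
    this.baseline = new Map();     // tenant -> EWMA of per-minute requests
    this.clampedUntil = new Map(); // tenant -> ms timestamp
  }

  // Call once per tenant per minute with that minute's request count.
  observe(tenant, count, now) {
    const base = this.baseline.get(tenant);
    const spike = base !== undefined && count >= this.minVolume && count > this.factor * base;
    if (spike) {
      // Clamp, and keep the spike out of the baseline so it can't normalize itself.
      this.clampedUntil.set(tenant, now + this.clampMs);
    } else {
      const next = base === undefined ? count : this.alpha * count + (1 - this.alpha) * base;
      this.baseline.set(tenant, next);
    }
    return spike;
  }

  isClamped(tenant, now) {
    return (this.clampedUntil.get(tenant) ?? 0) > now;
  }
}
```

The `minVolume` floor keeps tiny tenants from tripping the detector when they go from 2 to 30 requests a minute.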

Edge cases and special protocols

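- Clock skew: don't rely on client clocks. For shared counters, take time from one authoritative source (for example, the Redis server's clock) rather than each app server's wall clock; for in-process limiters, use a monotonic clock.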

- Long polling/SSE/WebSockets: use concurrency tokens and per-connection caps rather than request-per-second.

- Streaming uploads/downloads: throttle by bytes/sec with backpressure.

- Webhooks: apply sender-level limits; on receiver errors, back off with jitter.

- GraphQL: enforce cost-based limits per operation rather than per request.

- Batches: price by items processed; set a sane max batch size.
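A minimal sketch of batch pricing. It assumes a hypothetical `MAX_BATCH` of 500 and a base-plus-per-item formula; both are illustrative, not values from this guide.

```javascript
const MAX_BATCH = 500; // hypothetical ceiling

// Cost = a base charge for the call plus a per-item charge, so cost tracks
// work done rather than the number of HTTP calls.
function batchCost(items, pointsPerItem = 1, baseCost = 1) {
  if (!Array.isArray(items) || items.length === 0) {
    throw new RangeError("batch must be a non-empty array");
  }
  if (items.length > MAX_BATCH) {
    throw new RangeError(`batch of ${items.length} exceeds max ${MAX_BATCH}`);
  }
  return baseCost + items.length * pointsPerItem;
}
```

Rejecting oversized batches before any work starts keeps one request from monopolizing a worker, and the error can be returned as a 4xx validation error rather than a 429.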

Data model and keys

Design keys to avoid hot spots and enable sharding:

- Prefix by region + tenant: rate:us-east:org_123

- Separate keys per dimension: rps, burst, quota, concurrency.

- Use short TTLs for rate windows; longer for monthly quotas.
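A sketch of a key builder following that layout: region and tenant prefix, one key per dimension, and a TTL chosen per dimension. The tenant sits inside `{...}`, a Redis Cluster hash tag, so all of one tenant's keys hash to the same slot and can be used together in one Lua script. The trade-off is that a very large tenant still concentrates on one shard. The TTL values are assumptions.

```javascript
// Illustrative TTLs per dimension, in seconds.
const TTL_SECONDS = {
  rps: 60,               // must cover burst/rate refill time: an expired key reads as a full bucket
  minute: 120,           // sliding-window entries: window plus slack
  concurrency: 300,      // lease-style counters
  quota: 40 * 24 * 3600, // monthly quota keys outlive the billing month
};

// e.g. limitKey("rps", "us-east", "org_123") -> "rate:us-east:{org_123}:rps"
function limitKey(dimension, region, tenant, period = "") {
  if (!Object.prototype.hasOwnProperty.call(TTL_SECONDS, dimension)) {
    throw new Error(`unknown dimension: ${dimension}`);
  }
  const key = `rate:${region}:{${tenant}}:${dimension}` + (period ? `:${period}` : "");
  return { key, ttl: TTL_SECONDS[dimension] };
}
```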

Rolling out throttling without breaking users

- Instrument first: measure current usage for 2–4 weeks.

- Dry-run mode: compute decisions but don’t block; emit headers and logs.

- Announce limits with examples and upgrade paths.

- Gradual enforcement: set initial limits above the 99th percentile of observed per-tenant usage, then step them down.

- Tenant-specific exceptions for a cooling-off period.
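A sketch of dry-run (shadow) enforcement as Express-style middleware. It always computes the decision, emits headers, and logs would-be blocks. It returns 429 only for tenants in an enforced set, so enforcement can be widened gradually. `decide`, `req.tenant`, `enforcedTenants`, and the log shape are all hypothetical.

```javascript
function makeThrottleMiddleware({ decide, enforcedTenants, log = console.log }) {
  return function throttle(req, res, next) {
    const tenant = req.tenant;
    const decision = decide(tenant, req); // { allowed, limit, remaining, resetSec }
    res.set("RateLimit-Limit", String(decision.limit));
    res.set("RateLimit-Remaining", String(Math.max(0, decision.remaining)));
    res.set("RateLimit-Reset", String(decision.resetSec));
    if (decision.allowed) return next();

    const enforce = enforcedTenants.has(tenant);
    log(JSON.stringify({ event: "rate_limit", tenant, mode: enforce ? "enforce" : "dry_run" }));
    if (!enforce) return next(); // shadow mode: record the decision, don't block
    res.set("Retry-After", String(decision.resetSec));
    return res.status(429).send("Too Many Requests");
  };
}
```

The dry-run logs double as the data for choosing the step-down schedule, since they show exactly which tenants would have been blocked at each limit.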

Example tier blueprint (adapt as needed)

- Free

- 5 RPS, burst 20

- 1M monthly points

- 2 concurrent jobs, 1GB/day egress

- Pro

- 50 RPS, burst 200

- 30M monthly points

- 10 concurrent jobs, 20GB/day egress

- Enterprise

- Custom limits and dedicated queues

- Priority traffic class and stricter SLOs

Testing and game days

- Load test tiers independently and in aggregate; validate isolation.

- Chaos drills: simulate regional cache loss or Redis latency spikes.

- Verify headers and client retry behavior under 429 storms.

- Confirm dashboards, alerts, and on-call runbooks.

Quick checklist

- Choose meters: rate, quota, concurrency, data, cost points.

- Map limits to tiers with clear upgrade paths.

- Use token bucket + sliding window; add leaky shaping if needed.

- Enforce at edge + app; back with Redis and region-scoped keys.

- Emit RateLimit-* and Retry-After; document client backoff.

- Monitor 429s, headroom, and tenant SLOs; enable overrides.

- Roll out in dry-run, then enforce gradually.

Conclusion

Thoughtful throttling and tiering deliver a smoother developer experience, protect your infrastructure, and create clear business value. Start by measuring, pick algorithms that fit your workload, communicate limits transparently, and iterate with data. With the right design, your API can scale predictably and profitably.
