The Essential Guide to Evaluating Retrieval‑Augmented Generation: Metrics that Matter

A practical, end-to-end guide to RAG evaluation metrics—from retrieval and grounding to faithfulness, relevance, and online impact.

Overview

Retrieval‑augmented generation (RAG) promises grounded, up‑to‑date answers by combining information retrieval with large language models (LLMs). But RAG quality is only as good as the system’s weakest link. To improve it confidently, you need a metrics toolkit that separates retrieval issues from grounding failures and generation mistakes—and that ties model‑side progress to user impact.

This guide presents a practical, end‑to‑end evaluation framework for RAG. It covers what to measure, how to compute it, and how to use the results to debug and ship better systems.

The RAG evaluation stack at a glance

Think in layers and evaluate each layer explicitly:

- Retrieval quality: Did we fetch the right evidence? (ranking, coverage)

- Augmentation (context) quality: Is the provided context relevant, sufficient, and free of noise, so that the generator has what it needs to stay grounded?

- Generation quality: Is the answer faithful to the evidence, relevant to the question, complete, concise, and safe?

- End‑to‑end task success: Did the system solve the user’s task? (accuracy, solve rate, deflection, satisfaction)

- Online health: Latency, cost, stability, and safety in production.

Core definitions

- Query: The user question or task.

- Ground truth/label: The known‑correct answer and/or supporting sources (if available).

- Supportive passage: A retrieved passage that directly contains or entails information needed to answer.

- Faithfulness (a.k.a. groundedness): All answer claims are supported by the provided context.

- Hallucination: An unsupported or contradicted claim presented as fact.

Retrieval metrics (ranking and coverage)

Use these when you have relevance judgments (q, doc, label). If you don’t, bootstrap with weak labels or LLM‑assisted judgments, then validate on a human‑labeled slice.

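- Recall@k: Fraction of queries for which at least one supportive passage appears in the top‑k. Strictly speaking this is hit rate (success@k); when a query has several gold passages, also report the fraction of them that appear in the top‑k.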

- Precision@k: Fraction of top‑k results that are supportive.

- MRR (Mean Reciprocal Rank): Average of 1/rank of the first supportive result, counting 0 for queries where none is retrieved.

- nDCG@k: Position‑sensitive gain when you have graded relevance (e.g., strongly vs. weakly supportive).

- R‑Precision / mAP: Useful when there are multiple gold passages per query.

- Multi‑hop recall: Supportive chain recall across hops or sub‑questions.

- Reranker hit rate: Whether reranking promoted a supportive passage into the visible window.
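
The two ranking metrics above that are not covered by the recipes later in this guide can be sketched as follows. This is a minimal illustration, assuming a hypothetical `gains` dict that maps gold doc ids to graded relevance (2 = strongly supportive, 1 = weakly supportive) and a linear gain; some nDCG variants use `2**gain - 1` instead.

```python
import math

def precision_at_k(gold, ranked, k=10):
    """Share of the top-k slots filled by gold docs (divides by k)."""
    if k <= 0:
        return 0.0
    return sum(d in gold for d in ranked[:k]) / k

def ndcg_at_k(gains, ranked, k=10):
    """nDCG@k with graded, linear gains; docs missing from `gains` count as 0."""
    def dcg(values):
        # Rank i (1-based) is discounted by log2(i + 1).
        return sum(g / math.log2(i + 1) for i, g in enumerate(values, start=1))
    actual = dcg([gains.get(d, 0) for d in ranked[:k]])
    ideal = dcg(sorted(gains.values(), reverse=True)[:k])
    return actual / ideal if ideal > 0 else 0.0

# Hypothetical toy data: one strong and one weak gold passage.
gains = {"d1": 2, "d2": 1}
ranked = ["d3", "d1", "d2"]
print(precision_at_k(set(gains), ranked, k=3))
print(round(ndcg_at_k(gains, ranked, k=3), 3))
```

Average both functions over queries to get a system-level number. The sketch assumes each doc id appears at most once in `ranked`.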

Diagnostic slices:

- Query length and type (factoid, multi‑hop, procedural, opinionated)

- Domain (legal, medical, financial, code, product)

- Document length and chunking strategy

- Temporal queries (time‑sensitive vs. evergreen)

Augmentation (context) metrics

After retrieval and reranking, measure the quality of the final context provided to the LLM.

- Context precision: Proportion of provided chunks that are supportive for the query.

- Context recall: Whether at least one supportive chunk is present; optionally the fraction of known gold chunks present.

- Support density: Share of tokens in the context that contribute directly to the answer.

- Redundancy/Diversity: Near‑duplicate rate; topical coverage across distinct sources.

- Citation availability: Whether each atomic claim in the answer can be backed by at least one provided chunk.

- Compression quality: If you summarize passages before feeding them to the model, measure loss of key facts vs. token savings.

Why this matters: High retrieval recall with low context precision still confuses the generator. These metrics tell you when to focus on reranking, filtering, or summarizing before generation.
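
Two of the context metrics above, redundancy and support density, can be approximated without a model. The sketch below is one possible approach, not a standard definition: it assumes word-set Jaccard similarity as the near-duplicate test, a hypothetical threshold of 0.8, and chunk-level supportive flags as a coarse stand-in for token-level attribution.

```python
def jaccard(a, b):
    """Jaccard similarity between the lowercase word sets of two chunks."""
    sa, sb = set(a.lower().split()), set(b.lower().split())
    if not sa and not sb:
        return 1.0
    return len(sa & sb) / len(sa | sb)

def redundancy_rate(chunks, threshold=0.8):
    """Share of chunks that near-duplicate an earlier chunk in the context."""
    if not chunks:
        return 0.0
    dupes = 0
    for i, chunk in enumerate(chunks):
        if any(jaccard(chunk, earlier) >= threshold for earlier in chunks[:i]):
            dupes += 1
    return dupes / len(chunks)

def support_density(chunks, supportive_flags):
    """Share of whitespace tokens that sit in chunks flagged as supportive."""
    total = sum(len(c.split()) for c in chunks)
    if total == 0:
        return 0.0
    supported = sum(
        len(c.split()) for c, flag in zip(chunks, supportive_flags) if flag
    )
    return supported / total
```

A high redundancy rate suggests deduplicating before generation; a low support density suggests tighter filtering or summarization.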

Generation metrics (faithfulness, relevance, completeness)

Focus on whether the answer is correct, grounded in the provided context, and helpful.

- Faithfulness/groundedness: Is every factual claim in the answer stated in, or entailed by, the context? Often computed via:

  - Entailment/NLI scoring between answer claims and evidence spans.

  - Span‑level attribution: Mapping claims to specific citations with alignment scores.

- Answer relevance: Semantic alignment of the answer with the user query (LLM‑judge or embedding similarity), penalizing off‑topic content.

- Completeness/coverage: Degree to which all key aspects of the query are addressed (checklist or rubric‑based scoring).

- Conciseness/verbosity: Brevity without loss of substance (length‑normalized relevance; penalty for repetition and fluff).

- Harmlessness/safety: Toxicity, PII leakage, policy compliance.

- Calibration: Confidence/hedging appropriateness; Expected Calibration Error (ECE) over self‑reported confidence.
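
The calibration bullet mentions Expected Calibration Error; a minimal sketch of the usual equal-width binning formulation follows. It assumes you have already extracted one self-reported confidence in [0, 1] and one correctness label per answer; how you elicit the confidence is up to you.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """ECE: bin-size-weighted mean of |accuracy - mean confidence| per bin."""
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    n = len(confidences)
    if n == 0:
        return 0.0
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bin
        bins[idx].append((conf, ok))
    ece = 0.0
    for items in bins:
        if not items:
            continue
        avg_conf = sum(c for c, _ in items) / len(items)
        accuracy = sum(1 for _, ok in items if ok) / len(items)
        ece += (len(items) / n) * abs(accuracy - avg_conf)
    return ece
```

An ECE near 0 means stated confidence tracks observed accuracy. With small evaluation sets, use fewer bins so each bin holds enough answers.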

Tip: Traditional text‑similarity metrics (ROUGE, BLEU, METEOR, BERTScore) are weak proxies for RAG quality. The n‑gram metrics penalize answers that are correct but paraphrased, and all four measure similarity to a reference rather than support from the retrieved evidence. Use them as secondary signals, not primary KPIs, unless your task rewards strict text overlap (e.g., extractive QA).

End‑to‑end task metrics

When you have labeled answers:

- Exact Match (EM), token‑level F1: Standard for factoid QA with short gold answers.

- Q‑A Fact scores: Measure the factual consistency of the generated answer against the reference answers.
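
Exact Match and token-level F1 are commonly computed in the style popularized by SQuAD evaluation: normalize both strings, then compare. The sketch below follows that convention (lowercasing, stripping punctuation and English articles); the normalization rules are an assumption you may need to adapt for other languages or for numeric answers.

```python
import re
import string
from collections import Counter

def normalize(text):
    """Lowercase, drop punctuation and English articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())

def exact_match(prediction, gold):
    """1.0 if the normalized strings are identical, else 0.0."""
    return float(normalize(prediction) == normalize(gold))

def token_f1(prediction, gold):
    """Harmonic mean of token precision and recall after normalization."""
    pred_tokens = normalize(prediction).split()
    gold_tokens = normalize(gold).split()
    if not pred_tokens or not gold_tokens:
        # Both empty counts as a match; one empty counts as a miss.
        return float(pred_tokens == gold_tokens)
    overlap = sum((Counter(pred_tokens) & Counter(gold_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(gold_tokens)
    return 2 * precision * recall / (precision + recall)
```

When a query has several acceptable gold answers, score against each and keep the maximum.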

When you do not have labels:

- LLM‑as‑judge rubric: Pointwise (1–5) or pairwise (A vs. B) grading on faithfulness, correctness, and usefulness with cited evidence requirements.

- Outcome proxies: Solve rate, escalation/hand‑off rate, return visits, edit distance between suggested and final user action (for agentic tasks).

LLM‑as‑judge, done carefully

LLM judges are powerful but can be biased. Improve reliability by:

- Using multi‑criteria rubrics with definitions and examples.

- Forcing judges to check citations and quote supporting spans.

- Running blind pairwise comparisons (candidate A vs. B), with the presentation order randomized, to limit verbosity and position bias.

- Aggregating multiple judges by majority vote or median.

- Checking calibration against a human‑labeled gold slice and monitoring drift.
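
Aggregating several judges can be as simple as a median for pointwise scores and a majority vote for pairwise verdicts. The sketch below is one way to do it; the verdict labels `"A"`, `"B"`, and `"tie"` and the `unswap` helper are illustrative assumptions. `unswap` is only needed if you randomize which candidate the judge sees first and must map verdicts back to the original labels.

```python
from collections import Counter
from statistics import median

def aggregate_pointwise(scores):
    """Median of several judges' scores for one answer."""
    if not scores:
        raise ValueError("need at least one judge score")
    return median(scores)

def aggregate_pairwise(votes):
    """Majority vote over 'A' / 'B' / 'tie' verdicts; no strict majority gives 'tie'."""
    if not votes:
        return "tie"
    winner, count = Counter(votes).most_common(1)[0]
    return winner if count > len(votes) / 2 else "tie"

def unswap(verdict, swapped):
    """Map a verdict back to the original labels when A and B were shown swapped."""
    if swapped and verdict in ("A", "B"):
        return "B" if verdict == "A" else "A"
    return verdict
```

For example, three judges voting `["A", "A", "B"]` yield `"A"`, while a split `["A", "B"]` is treated as a tie rather than forced into a winner.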

Example judge prompt skeleton:

You are grading a RAG answer.

Criteria (0–1): Faithfulness, Relevance, Completeness, Conciseness.

Instructions:

1) Only credit claims supported by the provided Context.

2) Cite line numbers or quotes from Context for each credited claim.

3) Return JSON: {faithfulness, relevance, completeness, conciseness, final_score}.

Query: <Q>

Context: <ranked passages with line numbers>

Answer: <A>
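
Judge replies should be validated before they reach a dashboard. The following sketch parses the JSON that the skeleton asks for and checks that each criterion is a number in [0, 1]. Recomputing `final_score` as an unweighted mean is an assumption made here for illustration; the prompt skeleton does not define how the final score is derived.

```python
import json

CRITERIA = ("faithfulness", "relevance", "completeness", "conciseness")

def parse_judge_output(raw):
    """Parse the judge's JSON reply and validate the four criterion scores."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("judge output must be a JSON object")
    scores = {}
    for name in CRITERIA:
        value = data.get(name)
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            raise ValueError(f"missing or non-numeric score: {name}")
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"score out of range for {name}: {value}")
        scores[name] = float(value)
    # Assumption: recompute the final score instead of trusting the judge's arithmetic.
    scores["final_score"] = sum(scores[c] for c in CRITERIA) / len(CRITERIA)
    return scores
```

Malformed JSON also surfaces as a `ValueError`, since `json.JSONDecodeError` subclasses it, so one `except ValueError` can route every bad reply to a retry or a manual review queue.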

Putting it together: a diagnostic workflow

- Establish baselines:

  - No‑context baseline (LLM without retrieval)

  - Oracle retrieval (inject known‑gold passages) to estimate headroom for generation

  - Current RAG pipeline

- Attribute errors:

  - If oracle ≫ current: retrieval/reranking/context issues dominate.

  - If current ≈ no‑context: retrieval likely failing; investigate recall@k, MRR.

  - If oracle ≈ current and both are weak: generation/faithfulness issues.

- Iterate with targeted changes: chunking strategy, cross‑encoder reranker, context summarization, citation‑aware decoding, system prompts, or post‑editors.

- Lock in with A/B tests on live traffic, watching solve rate, deflection, latency, and safety.
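
The attribution rules in this workflow can be encoded as a small helper that turns three end-to-end scores into debugging hints. This is a sketch under stated assumptions: all three scores use the same metric on the same test set, and the `margin` (what counts as "approximately equal") and `weak` (what counts as a weak score) thresholds are hypothetical defaults to tune for your task.

```python
def attribute_errors(no_context, current, oracle, margin=0.05, weak=0.7):
    """Map no-context, current, and oracle-retrieval scores to likely culprits."""
    findings = []
    # Oracle far ahead of current: better evidence would fix most errors.
    if oracle - current > margin:
        findings.append("retrieval/reranking/context issues dominate")
    # Retrieval adds nothing over the bare LLM.
    if abs(current - no_context) <= margin:
        findings.append("retrieval likely failing: check recall@k and MRR")
    # Even perfect evidence does not help, and both scores are low.
    if abs(oracle - current) <= margin and max(oracle, current) < weak:
        findings.append("generation/faithfulness issues")
    return findings

print(attribute_errors(no_context=0.42, current=0.45, oracle=0.80))
```

More than one finding can fire at once, which is intended: a pipeline whose retrieval adds nothing will usually also trail the oracle.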

Metric recipes

Below are minimal, practical computations you can adopt quickly.

Retrieval: Recall@k and MRR

# qrels: {query_id: set(gold_doc_ids)}
# runs: {query_id: [doc_id1, doc_id2, ...]}  # ranked list

def recall_at_k(qrels, runs, k=10):
    """Fraction of queries with at least one gold doc in the top-k (hit rate)."""
    hits = 0
    for q, gold in qrels.items():
        topk = runs.get(q, [])[:k]
        hits += int(any(d in gold for d in topk))
    return hits / max(1, len(qrels))

def mrr(qrels, runs, k=10):
    """Mean reciprocal rank of the first gold doc within the top-k (0 if none)."""
    total = 0.0
    for q, gold in qrels.items():
        rr = 0.0
        for i, d in enumerate(runs.get(q, [])[:k], start=1):
            if d in gold:
                rr = 1.0 / i
                break
        total += rr
    return total / max(1, len(qrels))

Context quality: precision/recall

# labels: {(query_id, doc_id): 1 if supportive else 0}
# context: {query_id: [doc_id1, doc_id2, ...]}  # final provided chunks

def context_precision(query_id, context, labels):
    """Share of the provided chunks that are labeled supportive."""
    docs = context.get(query_id, [])
    if not docs:
        return 0.0
    sup = sum(labels.get((query_id, d), 0) for d in docs)
    return sup / len(docs)

def context_recall(query_id, context, labels):
    """Binary recall: did at least one supportive chunk make it in?"""
    return float(any(labels.get((query_id, d), 0) for d in context.get(query_id, [])))

Faithfulness via entailment (claim‑level)

- Split the answer into atomic claims (sentence or clause level).

- For each claim, compute max entailment score against the provided passages.

- Faithfulness = fraction of claims with entailment ≥ threshold.

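# entail(claim, passage) -> score in [0, 1]; supply your own NLI model or LLM judge.

def faithfulness(claims, passages, entail, tau=0.8):
    """Fraction of claims whose best entailment score reaches tau."""
    supported = 0
    for c in claims:
        # Best score over all passages; 0.0 when no passages were provided.
        s = max((entail(c, p) for p in passages), default=0.0)
        supported += int(s >= tau)
    return supported / max(1, len(claims))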
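
To smoke-test this recipe before wiring in a model, a naive claim splitter and a placeholder scorer are enough. Both functions below are hypothetical stand-ins: `split_claims` splits on sentence punctuation (and will mis-split abbreviations such as "e.g."), and `overlap_entail` measures lexical overlap, which is not entailment and should be replaced by an NLI model or LLM judge for real evaluation.

```python
import re

def split_claims(answer):
    """Naive claim splitter: one claim per sentence, split after ., ! or ?"""
    parts = re.split(r"(?<=[.!?])\s+", answer.strip())
    return [p for p in parts if p]

def overlap_entail(claim, passage):
    """Placeholder scorer: share of the claim's words that occur in the passage.

    This is lexical overlap, NOT entailment; swap in an NLI model for real use.
    """
    claim_words = set(re.findall(r"\w+", claim.lower()))
    passage_words = set(re.findall(r"\w+", passage.lower()))
    if not claim_words:
        return 0.0
    return len(claim_words & passage_words) / len(claim_words)

# Hypothetical toy example.
answer = "The tower is in Paris. It opened in 1889. It is made of gold."
passages = ["The Eiffel Tower is in Paris and opened in 1889."]
claims = split_claims(answer)
scores = [max(overlap_entail(c, p) for p in passages) for c in claims]
for claim, score in zip(claims, scores):
    print(f"{score:.2f}  {claim}")
```

The unsupported third claim scores far below the first two, which is the behavior a real entailment scorer should reproduce more reliably.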

Constructing reliable test sets

- Seed with real user queries from logs (with consent and privacy safeguards).

- Add adversarial and long‑tail cases: multi‑hop, numeric, temporal, and ambiguous queries.

- Create gold sources: identify one or more authoritative passages per query.

- Build negative controls: queries with no answer in the corpus; verify that the system abstains instead of fabricating an answer.

- Refresh periodically to detect data and model drift.
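
Negative controls are only useful if abstention is scored on both sides: abstaining when no answer exists, and not abstaining when one does. A minimal sketch, assuming each test record is a hypothetical `(answerable, abstained)` pair of booleans:

```python
def abstention_report(records):
    """records: iterable of (answerable: bool, abstained: bool) pairs."""
    records = list(records)
    on_unanswerable = [abstained for answerable, abstained in records if not answerable]
    on_answerable = [abstained for answerable, abstained in records if answerable]
    return {
        # Share of no-answer queries where the system correctly abstained.
        "abstain_recall": (
            sum(on_unanswerable) / len(on_unanswerable) if on_unanswerable else 0.0
        ),
        # Share of answerable queries where the system abstained anyway.
        "false_abstain_rate": (
            sum(on_answerable) / len(on_answerable) if on_answerable else 0.0
        ),
    }
```

A system can trivially maximize the first number by always abstaining, so read the two values together.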

Annotation tips:

- Use clear rubrics with positive/negative examples.

- Double‑annotate a subset; compute inter‑annotator agreement (e.g., Cohen’s κ) and resolve conflicts.

- Capture rationales (spans) to enable span‑level citation checks downstream.
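
Cohen's κ for two annotators compares observed agreement with the agreement expected by chance from each annotator's label frequencies. A minimal sketch, assuming two equal-length lists of categorical labels:

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa: (observed - expected agreement) / (1 - expected agreement)."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: product of the annotators' marginal label frequencies.
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)

print(cohens_kappa([1, 1, 0, 0], [1, 0, 0, 0]))
```

Values near 0 indicate chance-level agreement, which usually means the rubric needs sharper definitions before more data is labeled.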

Online evaluation and product metrics

Even the best offline suite can miss product fit. Track:

- Solve/deflection rate: Did users avoid escalation to human support or external search?

- Time to useful answer: Time‑to‑first‑token and time‑to‑final.

- Edit distance: For agentic tasks, distance between suggested and accepted action.

- Abstain rate: Share of queries where the system says “not enough evidence”, split by whether abstaining was appropriate.

- Safety incidents: Toxicity, PII leakage, policy flags per 1k interactions.

- Cost per successful task: Tokens and retrieval compute normalized by success.

Run controlled experiments (A/B or interleaving) with guardrails for safety and latency. Keep rollbacks easy.
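
For an A/B test on a binary outcome such as solve rate, a two-proportion z-test is a common first check of whether the difference is more than noise. The sketch below uses the pooled-variance form and a normal approximation, so it assumes reasonably large samples in both arms; it does not cover interleaving experiments or sequential peeking.

```python
import math

def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Two-sided z-test for a difference in success rate between arms A and B."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("both arms need at least one observation")
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # every outcome identical: no evidence of a difference
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail probability
    return z, p_value

# Hypothetical counts: 400/1000 solved in control, 460/1000 in treatment.
z, p = two_proportion_z_test(400, 1000, 460, 1000)
print(f"z={z:.2f}, p={p:.4f}")
```

Decide the sample size and significance threshold before the experiment starts, and apply the same test to guardrail metrics such as safety incidents.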

Choosing KPIs for different RAG tasks

- Short‑form factoid QA: Recall@k, MRR, EM/F1, faithfulness.

- Long‑form answers (reports, briefs): Context precision/recall, faithfulness, completeness, citation correctness, conciseness.

- Procedural/How‑to: Faithfulness, step coverage (completeness), safety.

- Multi‑hop reasoning: Multi‑hop recall, chain support coverage, faithfulness.

- Code and API Q&A: Recall@k, pass@k (for snippets), test execution success, citation correctness.
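
For the code and API row, pass@k is usually estimated from n sampled snippets per problem, of which c pass the tests, using the unbiased estimator 1 - C(n-c, k) / C(n, k). A minimal sketch of that estimator:

```python
from math import comb

def pass_at_k(n, c, k):
    """Unbiased pass@k estimate from n samples of which c pass the tests."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        return 1.0  # every size-k subset contains at least one passing sample
    return 1.0 - comb(n - c, k) / comb(n, k)

print(pass_at_k(n=10, c=3, k=1))
```

Average the per-problem estimates across the test set to report a single pass@k.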

Common pitfalls and how to avoid them

- Over‑relying on ROUGE/BLEU: They reward lexical overlap, not truth. Pair with faithfulness and citation checks.

- Ignoring augmentation quality: High retrieval recall with noisy context degrades generation.

- Single‑score complacency: Always decompose; your top‑line metric should never be your only metric.

- Uncalibrated LLM judges: Validate against human gold; use pairwise and multi‑judge aggregation.

- Static test sets: Refresh regularly; include time‑sensitive and out‑of‑distribution slices.

A practical metric set to start with

For most teams, a balanced, low‑friction starter suite is:

- Retrieval: Recall@10, MRR@10, nDCG@10

- Augmentation: Context precision, context recall, redundancy rate

- Generation: Faithfulness (entailment‑based), answer relevance (LLM‑judge), completeness (rubric), citation correctness

- End‑to‑end: EM/F1 (if gold answers), solve rate, abstain rate, latency, cost‑per‑solve

Automate dashboards and tie each experiment to this suite. Use ablations (oracle/no‑context) to localize gains.

Conclusion

Great RAG systems are engineered, not wished into existence. Evaluating them well means separating concerns—measuring retrieval, augmentation, and generation with metrics that reflect real user value, then validating those metrics online. Start simple, decompose errors, and iterate with targeted changes. With the right metrics, progress becomes unmistakable—and repeatable.

Related Posts

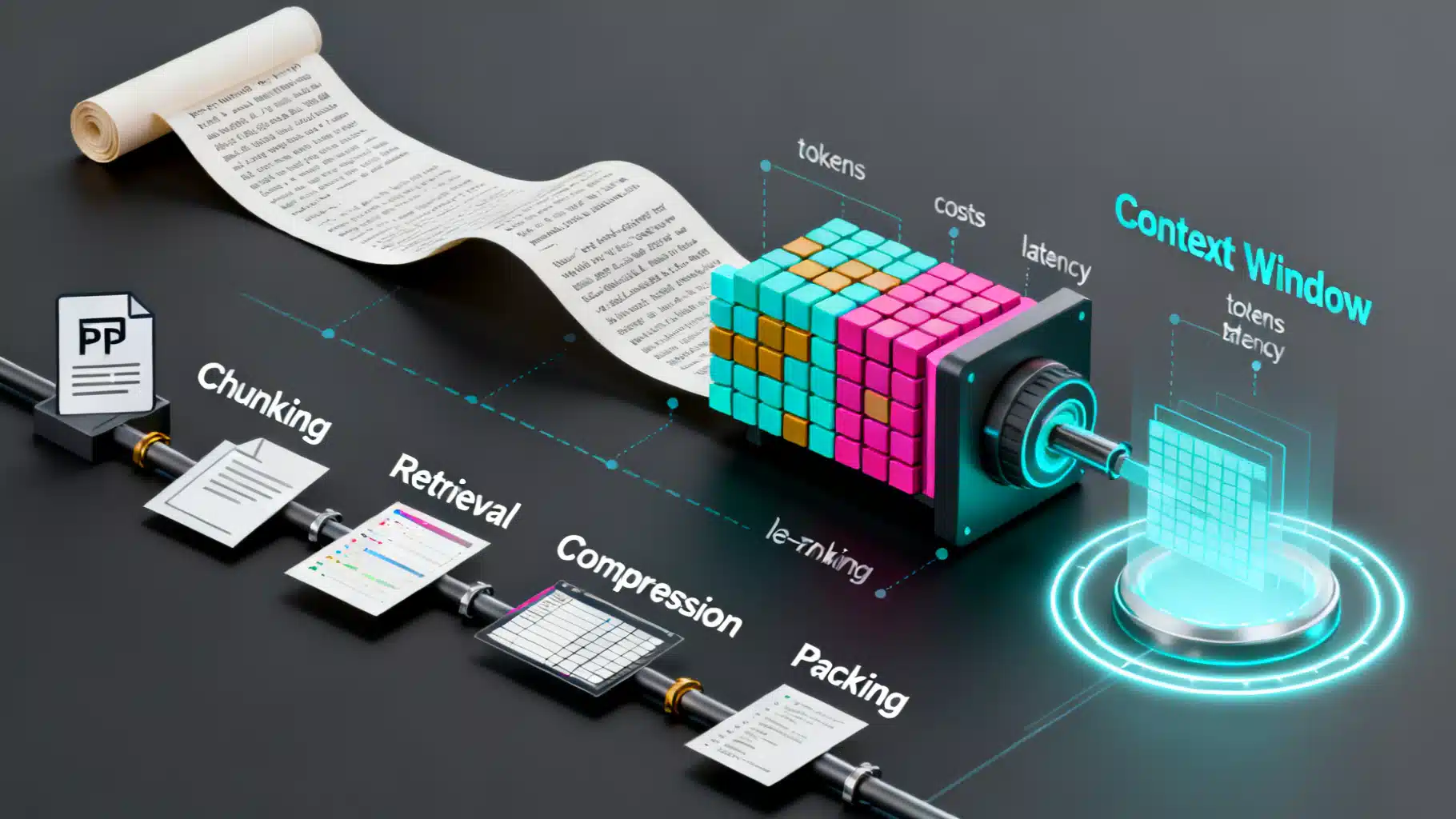

LLM Context Window Optimization: Strategies for Speed, Cost, and Accuracy

Practical strategies to optimize LLM context windows—reduce cost and latency while preserving accuracy with RAG, chunking, compression, caching, and evaluation.

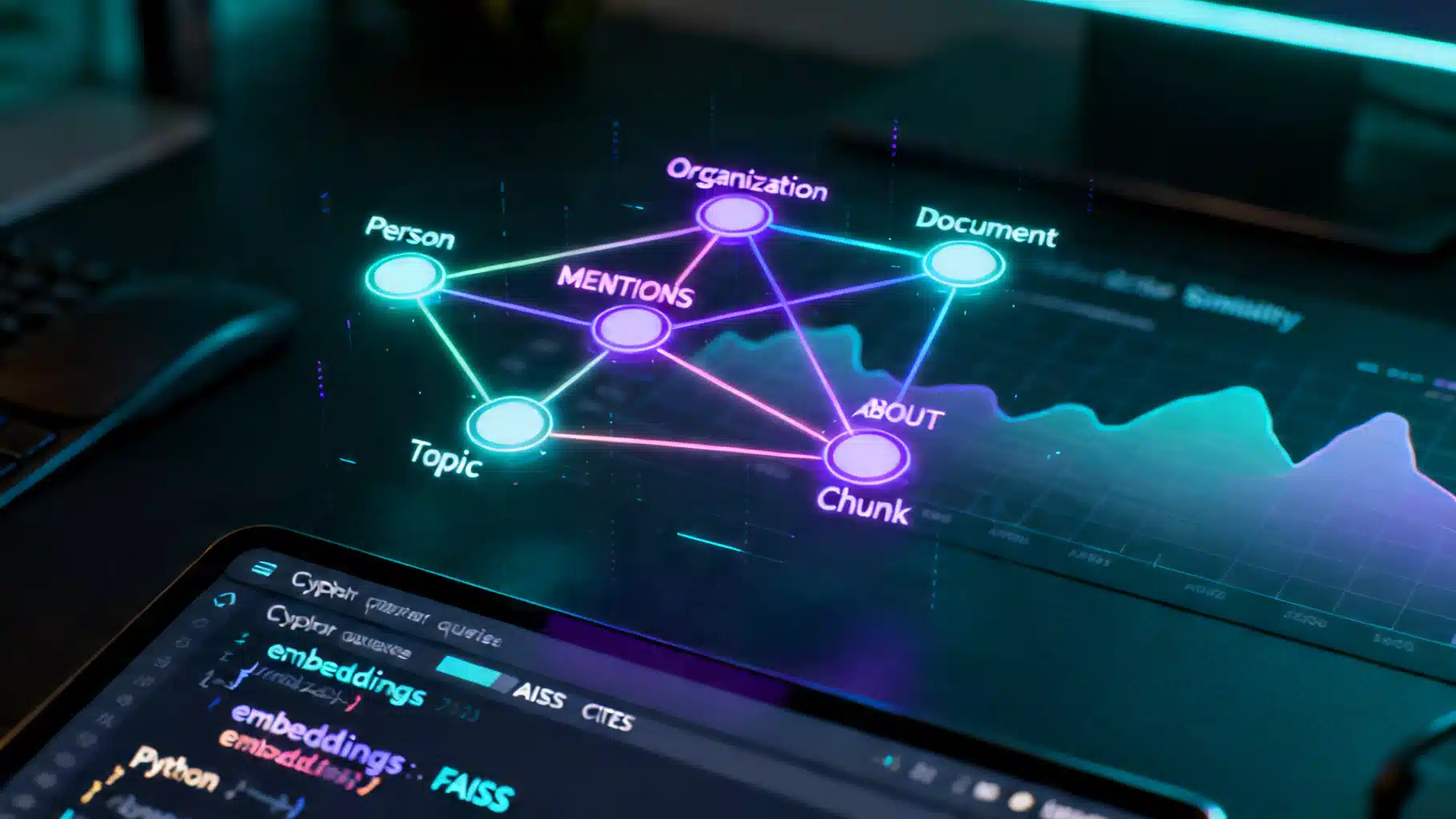

A Practical Tutorial on Knowledge Graph–Enhanced AI Retrieval (GraphRAG)

Build a production-ready tutorial for knowledge graph–enhanced AI retrieval: schema, ingestion, Cypher, hybrid search, and evaluation.

LLM Fine-Tuning Dataset Preparation: An End-to-End Guide

A step-by-step guide to preparing high-quality datasets for LLM fine-tuning, from sourcing and cleaning to formats, safety, splits, and evaluation.