Agentic AI Guardrails: Proven Safety Patterns for Real-World Autonomy

A practical blueprint of safety patterns, architecture, and policies to keep agentic AI reliable, secure, and aligned in production.

Image used for representation purposes only.

Why agentic AI demands stronger guardrails

Agentic AI systems can perceive, plan, and act through tools and APIs with minimal supervision. That power makes them useful—and risky. Unlike a single-turn chatbot, an agent can chain actions, spend money, modify data, or publish content. As autonomy grows, so does the blast radius of a mistake. This article distills proven safety patterns you can apply today to keep agentic AI reliable, secure, and aligned with organizational policy.

A concise threat model: what can go wrong

Before designing controls, map the dominant failure modes:

- Harmful or noncompliant content: toxicity, harassment, medical/financial claims, copyright violations.

- Prompt injection and jailbreaks: untrusted inputs subvert system instructions or exfiltrate secrets.

- Tool misuse and overreach: unauthorized API calls, file system writes, or financial transactions.

- Data leakage and privacy breaches: PII exfiltration from memory, logs, or retrieved documents.

- Hallucinated actions: the agent asserts success but never performed the operation, or fabricates references.

- Runaway autonomy: infinite loops, budget overruns, or cascading side effects.

- Distribution shifts: model updates, new data sources, or novel user intents invalidate safety assumptions.

Guardrails address these by creating explicit contracts between the agent and its environment, then enforcing those contracts at multiple layers.

Principles for safety-by-design

- Defense in depth: combine deterministic rules, statistical filters, and human oversight. No single control is sufficient.

- Least privilege: grant the minimum tool scopes, data access, and time/money budgets required per task.

- Deterministic boundaries: use schemas, type checks, and allowlists at I/O boundaries.

- Explicit state: persist plans, tools invoked, and decisions made. Make state inspectable and replayable.

- Fail-safe defaults: on uncertainty, block or escalate rather than allow.

- Observability first: logging, tracing, and attribution must be built in, not bolted on.

- Privacy by design: minimize data collected, mask sensitive fields, and segregate secrets from prompts.

- Human-in-the-loop where it counts: risk-tiered approvals for sensitive actions.

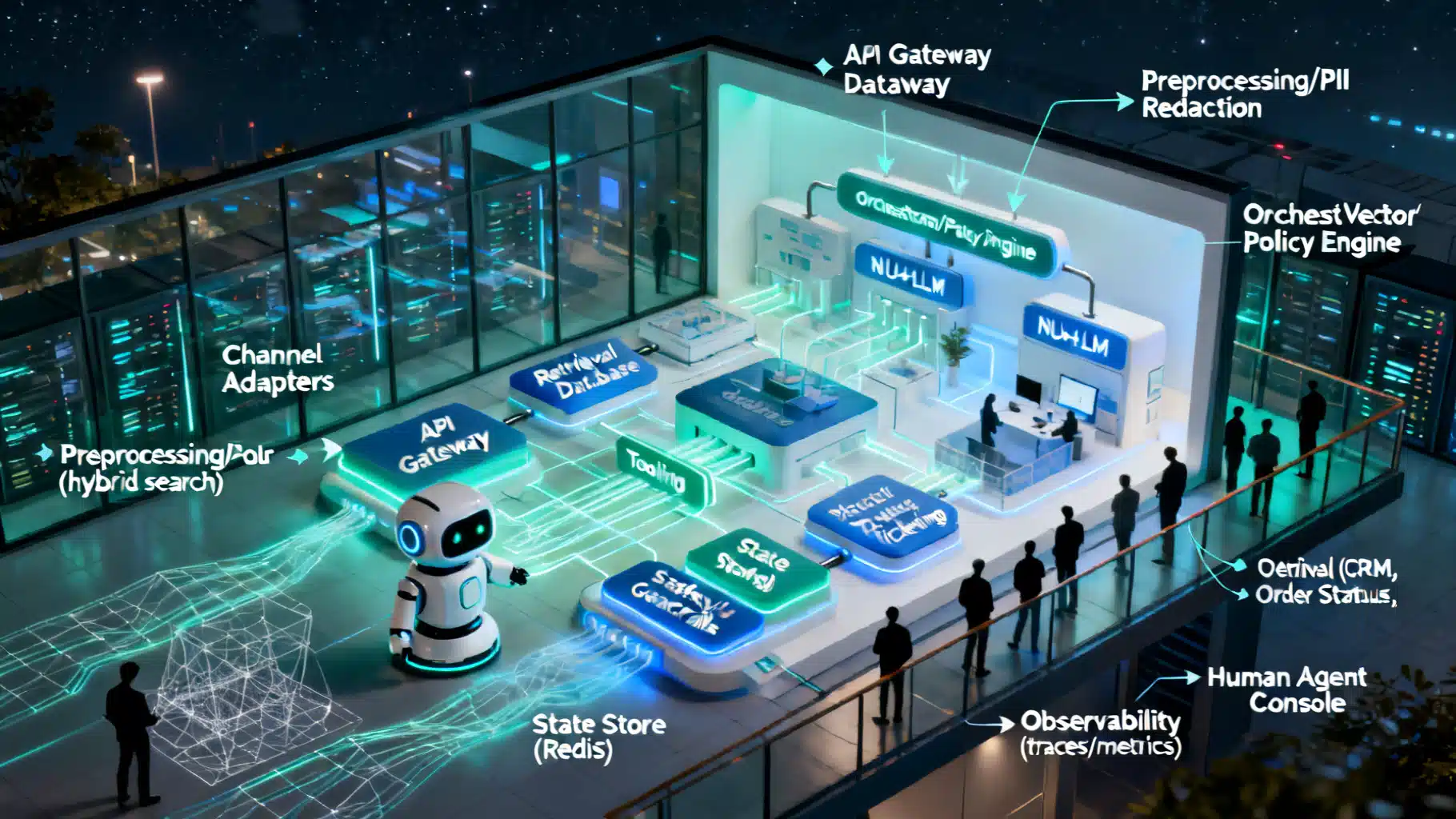

Reference architecture: a safety control plane for agents

Think of your agent like an application running inside a governance envelope. A practical control plane contains:

- Policy engine (policy-as-code): centralized authorization and safety rules evaluated on each step.

- Tool broker: a proxy that mediates all tool/API usage with scopes, argument validation, and rate limits.

- Input/Output firewalls: preprocess user and retrieved content; postprocess model outputs.

- Planning governor: enforces step caps, spending limits, and timeouts; blocks recursive loops.

- Memory firewall: tags artifacts by sensitivity; controls read/write/forget operations.

- Risk router: classifies each task/step; routes high-risk paths to stronger checks or human review.

- Safety model ensemble: specialized classifiers (toxicity, PII, jailbreak) and deterministic filters.

- Audit and telemetry: structured event logs for every decision, call, and escalation.

Patterns across the agent lifecycle

1) Intake and prompt construction

- System prompt pinning: isolate unchangeable core instructions from user-provided text.

- Content normalization: strip control characters, HTML/JS, and embedded prompts from untrusted inputs.

- PII scrubbing: redact or tokenize sensitive data before it reaches the model; keep a separable secure map.

- Retrieval hygiene: prefer signed, allowlisted sources; filter retrieved passages for injections before fusion.

- Context segmentation: separate roles (task, constraints, examples, tools, memory) into structured blocks.

2) Planning and reasoning

- Constrained planning: require the agent to propose a plan with typed steps and expected outcomes.

- Self-critique loop: a lightweight critic checks the plan for policy violations and feasibility.

- Budgeting: attach execution budgets (steps, time, spend) to the plan; enforce at runtime.

- Goal grounding: bind abstract goals to concrete tool actions via schemas, not free text.

3) Tool use and environment interaction

- Tool permissioning: scopes like read-only vs. write; per-argument allowlists and numeric bounds.

- Dry-runs and simulations: preview API effects; show diffs for filesystem or document writes.

- Confirmation gates: require user approval for high-risk operations (money transfer, public post).

- Timeouts and retries: prevent indefinite waits; backoff with caps; circuit-breaker on repeated failures.

- State machine execution: each step must be in an allowed state transition; no ad-hoc jumps.

4) Output validation and delivery

- Structured output validation: enforce JSON/grammar schemas; reject or repair malformed outputs.

- Harm/PII checks: scan final text and attachments; block or redact; attach explanations.

- Evidence binding: require citations or tool-derived evidence for critical claims; verify link reachability.

- Safe fallbacks: if blocked, provide a minimal safe response plus remediation suggestions.

5) Feedback, memory, and learning

- Memory write policies: only persist facts that pass confidence and sensitivity thresholds.

- Forgetting and retention: TTLs for volatile memory; legal holds for audits; privacy-driven deletions.

- Moderated reflection: allow the agent to learn patterns, but route updates through review pipelines.

Policy-as-code: example rules

Implement safety as executable policy. Declarative policies are auditable and testable.

package agent.policy

# Block untrusted URLs unless allowlisted

allow_url["https://safe.example.com"]

allow_url["https://docs.example.org"]

deny_tool_use[reason] {

input.tool == "http.get"

not allow_url[input.args.url]

reason := "URL not allowlisted"

}

# Spending caps per task

max_spend := 25.00

deny_spend[reason] {

input.cumulative_spend > max_spend

reason := sprintf("budget exceeded: $%v", [input.cumulative_spend])

}

# Escalate if action is public-facing

escalate[action] {

input.tool == "post_to_social"

action := "human_approval"

}

Tool broker: mediated capability access

Route all tool calls through a broker that enforces contracts before your environment is touched.

# Pseudocode

class ToolBroker:

def call(self, tool, args, context):

self.enforce_schema(tool, args)

self.check_rate_limits(tool, context.actor)

self.check_scopes(tool, context.scopes)

self.run_policies(tool, args, context) # deny or escalate

if self.needs_dry_run(tool):

return self.simulate(tool, args)

return self.execute(tool, args)

Structured output: schemas and constrained decoding

Unstructured generations are brittle. Constrain outputs with grammars or JSON schemas and reject anything else.

{

"type": "object",

"properties": {

"plan": {

"type": "array",

"items": {

"type": "object",

"properties": {

"step": {"type": "string"},

"tool": {"type": "string"},

"args": {"type": "object"},

"expected_result": {"type": "string"}

},

"required": ["step", "tool", "args"]

}

},

"budget": {"type": "number", "maximum": 25}

},

"required": ["plan", "budget"]

}

Pair schemas with constrained decoding (JSON-only, allowed fields, stop tokens) to reduce off-spec outputs.

Prompt-injection resistance that works

- Isolation: keep system and tool instructions in immutable channels the model cannot edit.

- Input labeling: mark retrieved or user-provided text as untrusted; never grant it authority.

- Content signing and allowlists: only fetch from signed/verified sources; strip embedded instructions.

- Tool indirection: the agent requests actions; the broker decides. Never let raw model text hit an API directly.

- Heuristic checks: detect override phrases (“ignore previous instructions”), credential requests, and data-exfil patterns.

Risk-based routing and dynamic oversight

Treat every step as a small risk assessment:

- Low risk: auto-approve with lightweight filters and post-hoc monitoring.

- Medium risk: require stricter classifiers, tighter budgets, and partial human review.

- High risk: block by default; allow only via explicit human approval and signed off plans.

This keeps latency low for safe paths while preserving strong governance where it matters.

Safety techniques that punch above their weight

- Constrained tool schemas: typed arguments and bounded numerics eliminate many classes of error.

- Canary actions: test in a sandbox or shadow environment before production-side effects.

- Dual models for safety: a fast filter for most traffic; a slower, stronger judge for borderline cases.

- State caps and heartbeats: maximum steps per run; heartbeat pings to detect and kill stuck loops.

- Spend and side-effect budgets: explicit counters for dollars, writes, and external posts.

- Deterministic templating: render prompts from templates plus structured variables to prevent injection drift.

Evals, red teaming, and continuous verification

- Spec-driven evals: derive test suites from your policy spec (what must never happen; what must always happen).

- Scenario fuzzing: mutate inputs, tools, and retrieved text to simulate adversarial conditions.

- Offline and online checks: pre-deployment red teaming; post-deployment shadow runs and canaries.

- Regression gates: no model or policy change ships unless safety and quality baselines hold.

- Incident drills: rehearse rollback, kill-switch activation, and communication playbooks.

Monitoring and auditability

Install fine-grained telemetry and make it easy to query:

- Structured step logs: input hash, prompt template version, model version, tools called, arguments, results, costs.

- Safety outcomes: blocks, escalations, overrides, classifier scores.

- Attribution: who approved what, when, and why.

- Replay: rebuild a run from logs for investigations and training improvements.

Common pitfalls and how to avoid them

- Overreliance on LLM judges: pair them with deterministic filters; keep humans for high-risk calls.

- Silent prompt drift: pin templates; hash and log prompts to detect changes.

- Leaky memory: treat memory like a database with RBAC, TTLs, and sensitivity labels, not a free-form scratchpad.

- Unbounded retries: set caps and circuit breakers; otherwise the agent may loop or amplify spend.

- Mixed-trust retrieval: if you must use open-web content, insert a strong untrusted-content firewall and high scrutiny.

- Model updates without safety review: always run safety regression suites on each model change.

Implementation checklist

- Define a written safety policy: prohibited actions, sensitive operations, escalation paths.

- Build a tool broker: all external effects mediated with scopes, schemas, and logging.

- Add input/output firewalls: PII redaction, toxicity filters, schema validation, constrained decoding.

- Enforce budgets: steps, time, spend, and side-effect counters with hard stops.

- Establish risk routing: classifiers that tier oversight; human-in-the-loop for high-risk flows.

- Instrument deeply: structured telemetry, searchable logs, and replay capability.

- Test continuously: adversarial evals, canary releases, and incident drills.

Closing thoughts

Agentic AI can deliver outsized value when paired with rigorous guardrails. The most robust systems do three things well: they define explicit contracts, enforce them at multiple layers, and make every decision observable. Start small with deterministic boundaries and a tool broker, then layer in risk routing, memory controls, and policy-as-code. With a disciplined control plane, you can scale autonomy without surrendering safety.

Related Posts

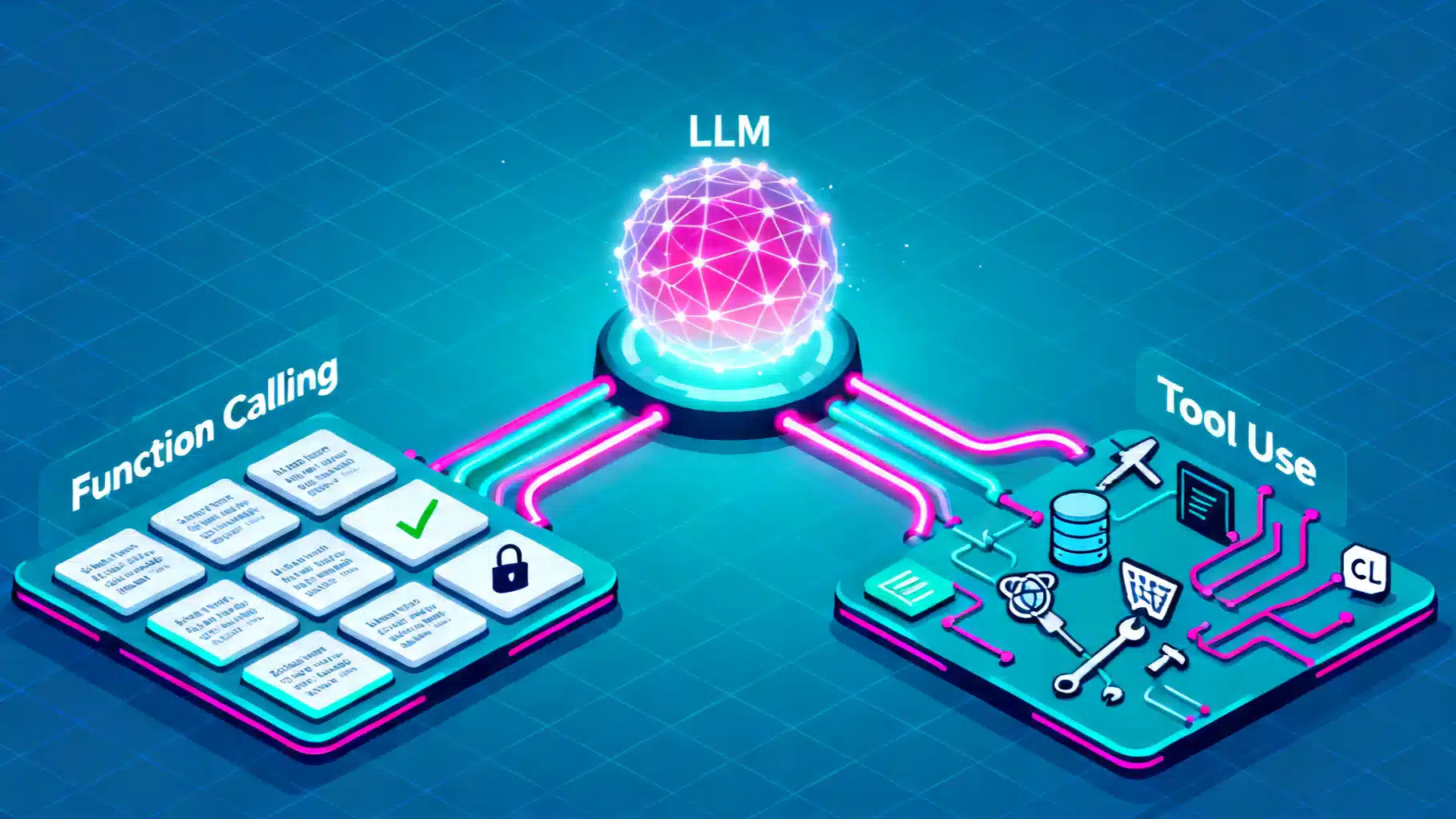

Function Calling vs. Tool Use in LLMs: Architecture, Trade-offs, and Patterns

A practical guide to function calling vs. tool use in LLMs: architectures, trade-offs, design patterns, reliability, security, and evaluation.

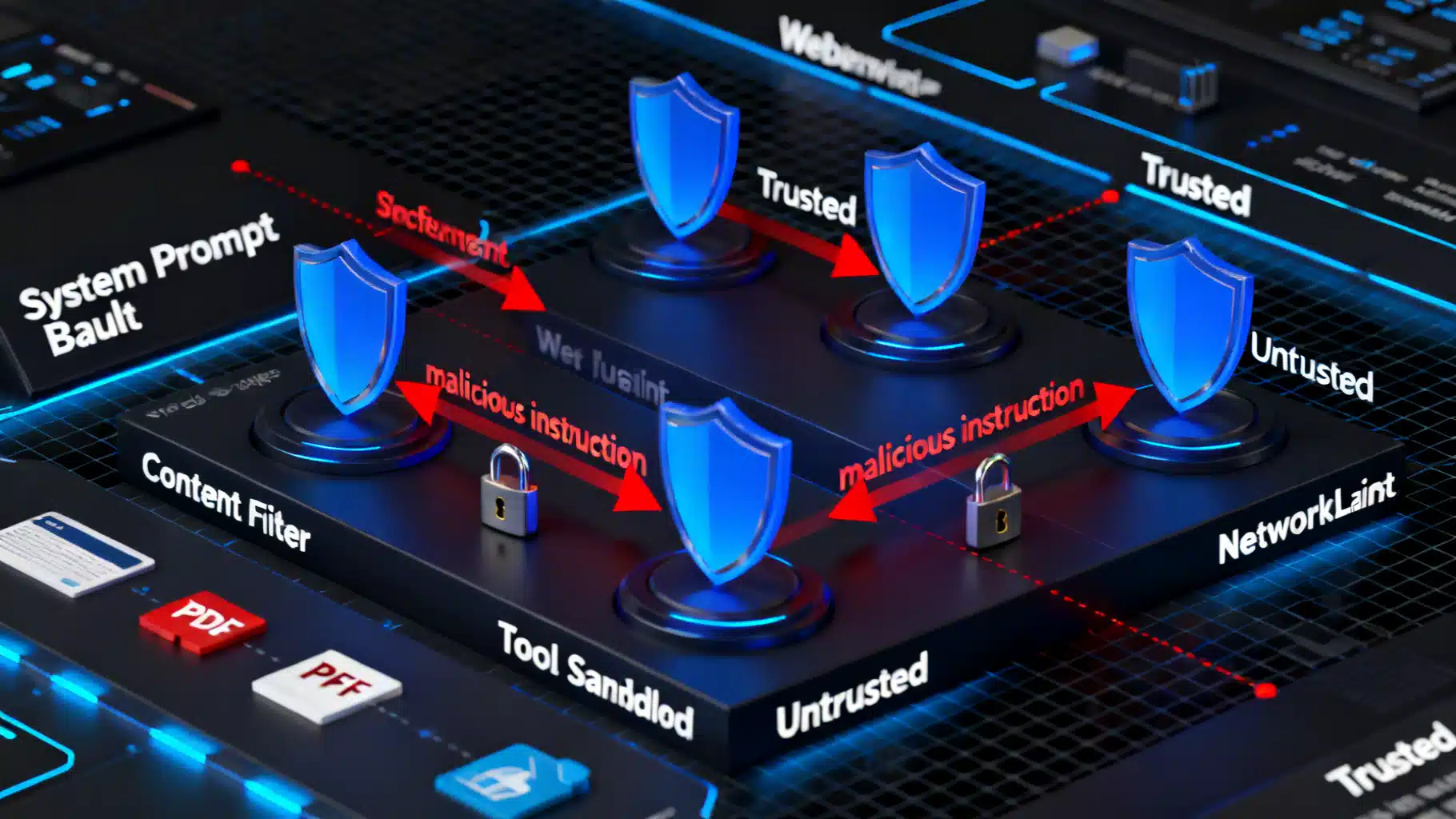

Prompt Injection Prevention Techniques: A Practical Blueprint for Secure LLM Applications

A practical blueprint for preventing prompt injection in LLM apps: threat models, mitigations, code patterns, testing, and operations.

Designing a Robust AI Customer Support Chatbot Architecture

A practical blueprint for building scalable, safe AI support chatbots—from NLU and RAG to orchestration, guardrails, and observability.