Build a Production‑Ready Predictive Analytics API: A Step‑by‑Step Tutorial

Build a production-ready predictive analytics API with Python and FastAPI: training, serving, security, testing, and MLOps in one tutorial.

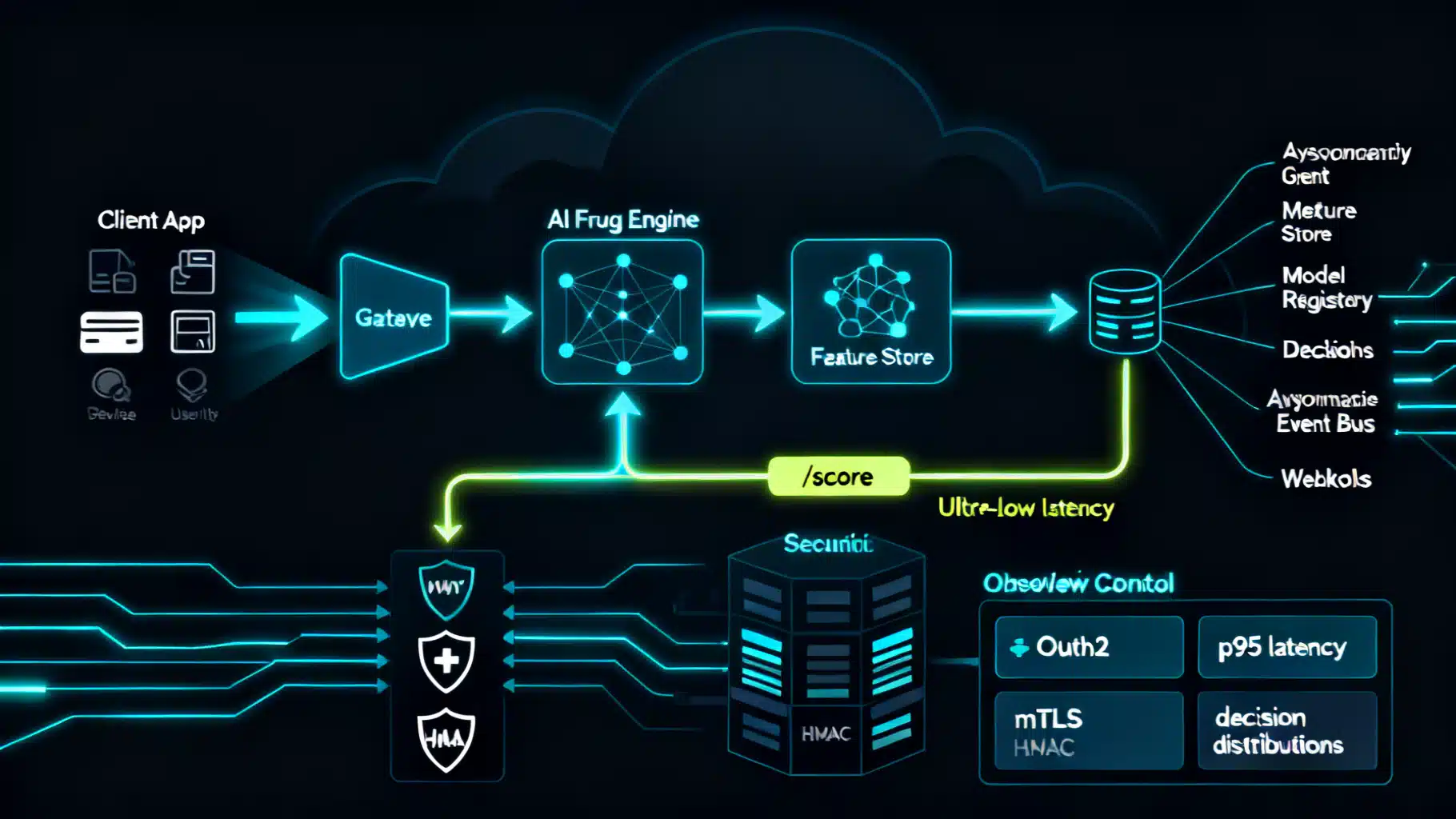

Image used for representation purposes only.

Overview

Predictive analytics turns historical data into foresight. In this tutorial, you’ll build a production‑ready predictive analytics API that scores requests in real time, returns a churn probability alongside a class label, and is secure, observable, and easy to evolve. We’ll use Python, scikit‑learn, and FastAPI, but the design patterns apply to any stack.

What you’ll learn:

- Frame a prediction problem and define an API contract.

- Train, evaluate, and persist a model pipeline.

- Serve low‑latency predictions via FastAPI.

- Add authentication, rate limiting, logging, and health/metadata endpoints.

- Containerize for deployment and set up monitoring and retraining hooks.

Example use case: customer churn prediction for a subscription business.

Architecture at a Glance

Data -> Feature Engineering -> Trained Model -> Model Artifact (versioned) -> REST API (FastAPI) -> Gateway/Clients

Supporting layers:

- Auth (API key or OAuth 2.0)

- Observability (structured logs, metrics, traces)

- CI/CD and Containerization (Docker)

- Model Registry + Feature Definitions

- Monitoring (latency, error rate, data drift) and retraining pipeline

Prerequisites

- Python 3.10+

- Basic understanding of pandas and scikit‑learn

- Docker (for containerization)

- A CSV dataset (we’ll simulate structure below)

Step 1: Frame the Prediction Problem and API Contract

Problem: Predict the probability that an active user churns within the next 30 days.

Target: churned (1 = churned, 0 = retained)

Core features (example):

- tenure_days (numeric)

- monthly_spend (numeric)

- support_tickets_30d (numeric)

- plan_tier (categorical: basic, pro, business)

- country (categorical)

- last_login_hours (numeric)

API contract (request):

{
  "tenure_days": 214,
  "monthly_spend": 49.0,
  "support_tickets_30d": 1,
  "plan_tier": "pro",
  "country": "US",
  "last_login_hours": 5
}

API contract (response):

{
  "model_version": "2026-05-17_01",
  "churn_probability": 0.18,
  "prediction": "retain",
  "explanations": {"top_features": ["last_login_hours", "tenure_days", "plan_tier=pro"]}
}

Step 2: Train and Package a Model Pipeline

We’ll build a robust scikit‑learn pipeline with preprocessing baked in, then persist it.

# train.py
import json
from datetime import date

import joblib
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import brier_score_loss, roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# 1) Load data
# Expect columns: features listed below + 'churned' target
df = pd.read_csv("churn_training.csv")

numeric = ["tenure_days", "monthly_spend", "support_tickets_30d", "last_login_hours"]
categorical = ["plan_tier", "country"]

X = df[numeric + categorical]
y = df["churned"].astype(int)

# Stratify so train and test keep the same churn rate
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=42
)

# 2) Preprocess + Model
pre = ColumnTransformer([
    ("num", StandardScaler(), numeric),
    # Unseen categories at serving time encode as all zeros instead of failing
    ("cat", OneHotEncoder(handle_unknown="ignore"), categorical),
])

# class_weight="balanced" helps with class imbalance but pushes predicted
# probabilities toward the minority class; check the Brier score below and
# see the calibration note in Step 10.
clf = Pipeline([
    ("pre", pre),
    ("model", LogisticRegression(max_iter=200, class_weight="balanced")),
])
clf.fit(X_train, y_train)

# 3) Evaluate
probs = clf.predict_proba(X_test)[:, 1]
auc = roc_auc_score(y_test, probs)
bs = brier_score_loss(y_test, probs)
print({"roc_auc": round(auc, 4), "brier": round(bs, 4)})

# 4) Persist artifact + metadata
version = date.today().strftime("%Y-%m-%d") + "_01"
joblib.dump(clf, f"model_{version}.joblib")

meta = {
    "version": version,
    "created_at": date.today().isoformat(),
    "features": numeric + categorical,
    "target": "churned",
    "metrics": {"roc_auc": float(auc), "brier": float(bs)},
}
with open(f"model_{version}.json", "w") as f:
    json.dump(meta, f, indent=2)

print("Saved", version)

Tips:

- Keep preprocessing inside the pipeline to guarantee training/serving parity.

- Start simple (logistic regression). Swap in tree‑based models later if needed.

- Store model + metadata together; register the version string in your CI.
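The training script hard‑codes the `_01` suffix, so a second run on the same day would overwrite the first artifact. Below is a minimal sketch of picking the next free suffix, assuming the `YYYY-MM-DD_NN` scheme used above. `next_version` is a hypothetical helper introduced for illustration, not part of any library.

```python
from datetime import date


def next_version(existing: list[str], today: date) -> str:
    """Return today's date plus the next unused two-digit suffix."""
    prefix = today.strftime("%Y-%m-%d") + "_"
    used = [
        int(v[len(prefix):])
        for v in existing
        if v.startswith(prefix) and v[len(prefix):].isdigit()
    ]
    # max(..., default=0) covers the first build of the day
    return f"{prefix}{max(used, default=0) + 1:02d}"
```

In practice, `existing` could come from listing the `model_*.json` files on disk or from your model registry.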

Step 3: Serve Real‑Time Predictions with FastAPI

Expose three endpoints: health, metadata, and predict.

# app.py
import json
import os

import joblib
import pandas as pd
# Header and HTTPException are used by the snippets in later steps
from fastapi import FastAPI, Header, HTTPException, Request
from fastapi.responses import JSONResponse
from pydantic import BaseModel, Field

API_KEY = os.getenv("API_KEY", "dev-key")  # override outside local development
MODEL_PATH = os.getenv("MODEL_PATH", "model_2026-05-17_01.joblib")
META_PATH = MODEL_PATH.replace(".joblib", ".json")

app = FastAPI(title="Churn Prediction API", version="1.0.0")

# Load the pipeline and its metadata once at startup
model = joblib.load(MODEL_PATH)
with open(META_PATH) as f:
    META = json.load(f)

# An explicit MODEL_VERSION env var wins; otherwise use the artifact metadata
MODEL_VERSION = os.getenv("MODEL_VERSION") or META["version"]


class Payload(BaseModel):
    tenure_days: float = Field(..., ge=0)
    monthly_spend: float = Field(..., ge=0)
    support_tickets_30d: float = Field(..., ge=0)
    plan_tier: str
    country: str
    last_login_hours: float = Field(..., ge=0)


@app.middleware("http")
async def auth_mw(request: Request, call_next):
    # Exceptions raised in middleware bypass FastAPI's HTTPException handlers
    # and surface as 500s, so return the 401 response directly.
    if request.url.path.startswith("/v1/"):
        if request.headers.get("x-api-key") != API_KEY:
            return JSONResponse(status_code=401, content={"detail": "Invalid API key"})
    return await call_next(request)


@app.get("/health")
def health():
    return {"status": "ok"}


@app.get("/v1/metadata")
def metadata():
    return {"model_version": MODEL_VERSION, "training": META["metrics"], "features": META["features"]}


@app.post("/v1/predict")
def predict(p: Payload):
    # The ColumnTransformer selects columns by name, so pass a DataFrame with
    # the training column names (model_dump() is Pydantic v2; use dict() on v1).
    X = pd.DataFrame([p.model_dump()])[META["features"]]
    prob = float(model.predict_proba(X)[0, 1])
    return {
        "model_version": MODEL_VERSION,
        "churn_probability": round(prob, 4),
        "prediction": "churn" if prob >= 0.5 else "retain",
        "explanations": {"top_features": []},  # fill with SHAP later
    }

Run locally:

export API_KEY=dev-key

uvicorn app:app --host 0.0.0.0 --port 8000 --workers 2

Test with curl:

curl -X POST http://localhost:8000/v1/predict \
  -H 'Content-Type: application/json' \
  -H 'x-api-key: dev-key' \
  -d '{"tenure_days":214,"monthly_spend":49.0,"support_tickets_30d":1,"plan_tier":"pro","country":"US","last_login_hours":5}'

Simple JS client:

const res = await fetch("/v1/predict", {
  method: "POST",
  headers: {"Content-Type": "application/json", "x-api-key": "<key>"},
  body: JSON.stringify({
    tenure_days: 214, monthly_spend: 49, support_tickets_30d: 1,
    plan_tier: "pro", country: "US", last_login_hours: 5
  })
});
const data = await res.json();

Step 4: Add Safety, Reliability, and Performance

- Validation: Pydantic schemas already enforce types and ranges. Add custom checks for rare categories.

- Timeouts: Set server/read timeouts (via ASGI or gateway). Keep inference under 100 ms P95 when possible.

- Rate limiting: Use a reverse proxy (e.g., NGINX/Envoy) or an in‑app token bucket.

- Caching: Cache identical requests briefly if your features are stable.

- Batch predict: Offer a bulk endpoint to reduce overhead for large jobs.
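The caching bullet can be sketched with a small time‑to‑live cache keyed on the canonical JSON form of the request. This is an illustrative, standard‑library‑only sketch; `TTLCache` and its methods are hypothetical names, and the cache is unbounded and local to one worker process, so a real deployment would likely add a size cap or use a shared store.

```python
import json
import time


class TTLCache:
    """Tiny in-memory cache keyed on the canonical JSON form of a payload."""

    def __init__(self, ttl_seconds: float = 30.0, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so the expiry logic can be tested
        self._store = {}  # key -> (expires_at, value)

    @staticmethod
    def key_for(payload: dict) -> str:
        # sort_keys makes the key independent of field order in the request
        return json.dumps(payload, sort_keys=True, separators=(",", ":"))

    def get(self, payload: dict):
        key = self.key_for(payload)
        hit = self._store.get(key)
        if hit is None:
            return None
        expires_at, value = hit
        if self.clock() >= expires_at:
            self._store.pop(key, None)  # expired: evict and report a miss
            return None
        return value

    def put(self, payload: dict, value) -> None:
        self._store[self.key_for(payload)] = (self.clock() + self.ttl, value)
```

Inside a predict handler, one option is to call `get` with the request dict first and fall back to the model only on a miss, then `put` the response.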

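Minimal in‑app rate limit example (in‑memory, per‑key, fixed window). Counters live inside each worker process, so with --workers 2 each worker enforces its own limit:

import time

from fastapi import Depends, Header, HTTPException

WINDOW, LIMIT = 60, 120  # 120 requests per 60-second window
_BUCKETS = {}  # (api_key, window_index) -> request count


def check_rate(x_api_key: str | None = Header(None)):
    w = int(time.time()) // WINDOW
    # Drop counters from earlier windows so the dict does not grow forever
    for old in [k for k in list(_BUCKETS) if k[1] < w]:
        _BUCKETS.pop(old, None)
    k = (x_api_key or "anon", w)
    _BUCKETS[k] = _BUCKETS.get(k, 0) + 1
    if _BUCKETS[k] > LIMIT:
        raise HTTPException(status_code=429, detail="Rate limit exceeded")


@app.post("/v1/predict-rl")
def predict_rl(p: Payload, _=Depends(check_rate)):
    return predict(p)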
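The example above counts requests per fixed window. The token bucket mentioned earlier behaves differently: it allows short bursts and refills continuously. Here is a minimal sketch of that alternative, with a hypothetical `TokenBucket` class and an injectable clock (an assumption added to make the logic easy to test):

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: float, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity  # start full so the first burst is allowed
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, capped at capacity
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

One bucket per API key (for example, in a dict keyed by the key string) would give per‑client limits. Like the fixed‑window version, this state is per process.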

Step 5: Containerize and Document

Dockerfile:

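# Dockerfile
FROM python:3.11-slim

WORKDIR /app

# Install dependencies first so this layer is cached across code changes
COPY requirements.txt ./
RUN pip install --no-cache-dir -r requirements.txt

COPY model_*.joblib model_*.json ./
COPY app.py ./

# Placeholder only; inject the real key at runtime (e.g., docker run -e API_KEY=...)
ENV API_KEY=change-me

EXPOSE 8000

CMD ["uvicorn", "app:app", "--host", "0.0.0.0", "--port", "8000", "--workers", "2"]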

requirements.txt (example):

fastapi==0.115.*
uvicorn[standard]==0.30.*
scikit-learn==1.5.*
joblib==1.4.*
pandas==2.2.*

OpenAPI docs are autogenerated at /docs. Export a static schema for clients if needed:

curl http://localhost:8000/openapi.json > openapi.json

Step 6: Observability and Quality Gates

- Logging: Emit structured JSON logs with request_id, status_code, latency_ms, model_version, and feature summary (never log raw PII).

- Metrics: Track p50/p95 latency, throughput, 2xx/4xx/5xx, cache hit rate, and input missing‑rate per feature.

- Tracing: Add trace IDs to correlate feature fetch latency vs inference.

- Quality: Monitor calibration drift: track average predicted probability vs realized rates over time.
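To make the calibration check concrete, the sketch below groups predictions into equal‑width bins and compares the mean predicted probability with the observed churn rate in each bin. It assumes you have joined logged probabilities with later outcome labels; `calibration_table` is a hypothetical helper name.

```python
def calibration_table(probs, labels, n_bins=5):
    """Compare mean predicted probability with the observed positive rate
    in each equal-width probability bin."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 goes in the last bin
        bins[idx].append((p, y))
    rows = []
    for i, items in enumerate(bins):
        if not items:
            continue  # skip empty bins rather than dividing by zero
        mean_pred = sum(p for p, _ in items) / len(items)
        observed = sum(y for _, y in items) / len(items)
        rows.append({
            "bin": i,
            "count": len(items),
            "mean_predicted": mean_pred,
            "observed_rate": observed,
            "gap": mean_pred - observed,
        })
    return rows
```

A gap that grows over successive time windows is one signal that the model's probabilities are drifting away from realized rates.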

Example structured log (Python):

import json
import logging
import uuid
from time import perf_counter

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("churn_api")


@app.post("/v1/predict-logged")
def predict_logged(p: Payload, request: Request):
    t0 = perf_counter()
    rid = str(uuid.uuid4())
    out = predict(p)
    logger.info(json.dumps({
        "rid": rid,
        "route": request.url.path,
        "status": 200,
        "latency_ms": int((perf_counter() - t0) * 1000),
        "model_version": out["model_version"],
        # Coarse, non-identifying fields only; never log raw PII
        "country": p.country,
        "plan_tier": p.plan_tier,
    }))
    return out
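Once `latency_ms` values are being logged, the p50/p95 figures from the metrics bullet can be derived from them. A metrics backend would normally do this for you; the sketch below only illustrates the arithmetic, using the nearest‑rank definition of a percentile. The function names are hypothetical.

```python
import math


def percentile(values, q):
    """Nearest-rank percentile: the smallest value with at least q percent
    of observations at or below it (q is an integer from 1 to 100)."""
    if not values:
        raise ValueError("no observations")
    ordered = sorted(values)
    rank = max(1, math.ceil(q * len(ordered) / 100))
    return ordered[rank - 1]


def latency_summary(latencies_ms):
    """Summarize a batch of request latencies in milliseconds."""
    return {"p50": percentile(latencies_ms, 50), "p95": percentile(latencies_ms, 95)}
```

With only a handful of samples, p95 is effectively the slowest request, so summaries are more meaningful over windows with many observations.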

Step 7: Data and Model Governance

- Versioning: Include model_version in responses and store artifacts immutably (e.g., s3://…/models/{version}).

- Reproducibility: Pin library versions and persist training code, seed, and preprocessing.

- Backward compatibility: Avoid breaking changes in request schema; use new endpoints or versioned fields.

- Security: Validate inputs, sanitize logs, rotate API keys, and keep models private.

Step 8: Deployment Patterns

- Single container behind a managed load balancer (small traffic).

- Horizontal scale: multiple replicas; use sticky caches or stateless design.

- Serverless: package as a function if cold starts are acceptable.

- GPU/accelerated: consider ONNX Runtime or TorchScript for deep models.

Canary‑style rollout idea:

- Deploy vNew alongside vOld.

- Send 5% traffic to vNew; compare metrics.

- Ramp to 100% if healthy; keep vOld for rollback.
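Traffic splitting is usually configured at the gateway or load balancer, but the idea can be sketched in a few lines. The example below assumes a stable request key (such as a customer ID) and hashes it so the same caller always reaches the same version. `route_version` is a hypothetical function name.

```python
import hashlib


def route_version(request_key: str, new_fraction: float) -> str:
    """Deterministically map a key to 'vNew' or 'vOld'."""
    digest = hashlib.sha256(request_key.encode()).digest()
    # First 4 bytes as an integer, scaled to [0, 1)
    bucket = int.from_bytes(digest[:4], "big") / 2**32
    return "vNew" if bucket < new_fraction else "vOld"
```

Raising `new_fraction` from 0.05 toward 1.0 ramps traffic up. Callers already routed to vNew stay there, because a key's bucket value never changes.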

Step 9: Testing the API

- Unit tests: validate preprocessing and edge cases (e.g., unseen categories).

- Contract tests: ensure OpenAPI schema matches implementation.

- Load tests: confirm P95 latency and error rate at target QPS.

Pytest snippet:

# Assumes the artifact at MODEL_PATH exists and API_KEY is unset or "dev-key",
# because app.py reads both at import time.
from fastapi.testclient import TestClient

from app import app


def test_predict_happy_path():
    c = TestClient(app)
    r = c.post("/v1/predict", headers={"x-api-key": "dev-key"}, json={
        "tenure_days": 214, "monthly_spend": 49.0, "support_tickets_30d": 1,
        "plan_tier": "pro", "country": "US", "last_login_hours": 5,
    })
    assert r.status_code == 200
    body = r.json()
    assert 0.0 <= body["churn_probability"] <= 1.0
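For the contract‑test bullet, one lightweight option is to compare the generated OpenAPI document against the fields promised in Step 1. The sketch below works on the parsed openapi.json as a plain dict and assumes the request schema is published under `components.schemas.Payload`, which is where FastAPI places a Pydantic model named `Payload`. `EXPECTED_FIELDS` and `check_request_contract` are hypothetical names.

```python
EXPECTED_FIELDS = {
    "tenure_days", "monthly_spend", "support_tickets_30d",
    "plan_tier", "country", "last_login_hours",
}


def check_request_contract(openapi: dict, schema_name: str = "Payload") -> list[str]:
    """Return a list of human-readable contract violations (empty if none)."""
    schema = openapi.get("components", {}).get("schemas", {}).get(schema_name)
    if schema is None:
        return [f"schema {schema_name!r} not found"]
    problems = []
    props = set(schema.get("properties", {}))
    required = set(schema.get("required", []))
    for name in sorted(EXPECTED_FIELDS - props):
        problems.append(f"missing field: {name}")
    for name in sorted(props - EXPECTED_FIELDS):
        problems.append(f"unexpected field: {name}")
    for name in sorted((EXPECTED_FIELDS & props) - required):
        problems.append(f"field not required: {name}")
    return problems
```

In a pytest test, the dict could come from the exported openapi.json file or from a GET on /openapi.json through the test client, with the test asserting that the returned list is empty.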

Step 10: Interpretability and Feedback Loops

- Feature attributions with SHAP for tabular models; return top features for transparency.

- Feedback ingestion: accept post‑event labels via a secure batch pipeline to compute live metrics and trigger retraining.

- Calibration: consider Platt scaling or isotonic regression if probabilities are miscalibrated.
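Before wiring in SHAP, a linear model such as the logistic regression from Step 2 allows a simpler stand‑in: rank features by coefficient times deviation from the training mean, which is each feature's contribution to the log‑odds relative to an average input. This is not SHAP itself and it ignores feature correlations. The sketch assumes the coefficients, values, and means are all expressed in the model's transformed feature space (after scaling and one‑hot encoding), and `top_linear_contributions` is a hypothetical helper.

```python
def top_linear_contributions(coefs, values, means, names, k=3):
    """Rank features by |coef * (value - mean)| for a linear model.

    Each contribution is the change in log-odds relative to an input
    whose features all sit at their training means.
    """
    contribs = [
        (name, c * (v - m))
        for name, c, v, m in zip(names, coefs, values, means)
    ]
    # Largest absolute effect first, regardless of direction
    contribs.sort(key=lambda item: abs(item[1]), reverse=True)
    return contribs[:k]
```

The names of the returned pairs could populate the `top_features` list in the response, with the sign indicating whether a feature pushed the score toward churn or retention.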

Handling Data Drift and Retraining

- Track population stats (means, categorical frequencies) at inference time.

- Alert if PSI (Population Stability Index) exceeds your threshold; a common rule of thumb treats values above 0.25 as a significant shift.

- Retraining cadence: schedule weekly/monthly depending on drift and business change.

- Always validate new models against a holdout set and a shadow deployment before promotion.
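PSI compares the share of observations per bin between a reference sample (typically training data) and recent traffic. Below is a minimal standard‑library sketch, assuming both samples have already been counted into the same bins. The `eps` floor for empty bins is an assumption of this sketch, and `psi` is a hypothetical function name.

```python
import math


def psi(expected_counts, actual_counts, eps=1e-6):
    """Population Stability Index over matching bins:
    sum((a - e) * ln(a / e)), where a and e are per-bin proportions."""
    if len(expected_counts) != len(actual_counts):
        raise ValueError("bin counts must have the same length")
    e_total = sum(expected_counts)
    a_total = sum(actual_counts)
    total = 0.0
    for e_n, a_n in zip(expected_counts, actual_counts):
        e = max(e_n / e_total, eps)  # floor at eps to avoid log(0)
        a = max(a_n / a_total, eps)
        total += (a - e) * math.log(a / e)
    return total
```

For a categorical feature such as plan_tier, the bins are simply the categories. For numeric features, bin edges are usually fixed from the training data so that later comparisons use the same buckets.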

Common Pitfalls to Avoid

- Training/serving skew from separate preprocessing code paths.

- Silent failures due to missing or renamed input fields.

- Logging PII or full payloads to third‑party systems.

- One‑off scripts without versioning or metadata.

- Returning hard classifications only; always include probabilities.

Extending This Tutorial

- Swap LogisticRegression for Gradient Boosting or XGBoost.

- Add batch endpoint and async processing for large files.

- Integrate a feature store for consistent offline/online features.

- Implement OAuth 2.0 or mTLS for higher security.

- Export Prometheus metrics and OpenTelemetry traces.

Conclusion

You now have a complete path from problem framing to a secure, observable, versioned predictive analytics API. Start simple, measure relentlessly, and evolve the model and platform with disciplined MLOps practices. With a clear contract, rigorous monitoring, and safe rollout strategies, your API can deliver trustworthy predictions at scale.
