Structured Observability for APIs: From JSON Logs to SLOs

A practical guide to structured API logging and observability: schemas, tracing, metrics, correlation IDs, pipelines, and cost-efficient practices.

Why Structured Observability Matters for APIs

APIs are the connective tissue of modern systems. When they slow down or fail, everything above them wobbles. Traditional, ad‑hoc logs make root-cause analysis painful: unstructured strings, missing context, and no way to tie one request’s journey across services. Structured observability—combining structured logs, metrics, and traces—turns raw events into reliable insight you can query, aggregate, and alert on with precision.

This article is a practical playbook for API teams to design, emit, collect, and use structured data that answers the questions you actually ask during incidents and performance work.

Logs, Metrics, Traces: The Contract

- Logs: Immutable facts about events. They should be structured, low-ambiguity, and queryable. Great for forensics and debugging.

- Metrics: Compact numeric time series derived from events, ideal for SLOs, dashboards, and alerts.

- Traces: Causal context across services and components. Ideal for pinpointing where time is spent and how requests propagate.

Key principle: logs, metrics, and traces must correlate via shared identifiers (trace_id, span_id, request_id). If you cannot click from an alert to a trace to rich logs for the affected spans, your observability is incomplete.

Design a Durable API Log Schema

Define a versioned schema before shipping logs. Consistency beats completeness; you can add fields, but you can’t fix chaos after the fact.

Required fields (recommendation):

- timestamp (RFC3339/ISO‑8601, UTC)

- level (DEBUG/INFO/WARN/ERROR)

- service (component name) and service_version

- environment (prod/stage/dev)

- http.method, http.route (template, not raw path), http.status_code

- duration_ms (end-to-end request latency within the service)

- request_id (gateway-generated, stable per request)

- trace_id, span_id (W3C Trace Context)

- user.id_hash (non-PII surrogate), session.id (if applicable)

- client.ip (normalized), user_agent

- outcome (success/error/time_out/cancelled)

- error.type, error.message, error.stack (for errors)

- sample_rate (percentage of events kept, 1–100; if you prefer a 0–1 fraction, use it everywhere and never mix the two units)

- infra.zone, infra.node (for placement/affinity debugging)

- cost.hint (optional, tiering/sampling hint)

- schema.version

Example event (minimized):

{
  "timestamp": "2026-04-26T12:34:56.789Z",
  "level": "INFO",
  "service": "payments-api",
  "service_version": "2.7.3",
  "environment": "prod",
  "http.method": "POST",
  "http.route": "/v1/charges/{id}",
  "http.status_code": 201,
  "duration_ms": 123,
  "request_id": "req_abc123",
  "trace_id": "4bf92f3577b34da6a3ce929d0e0e4736",
  "span_id": "00f067aa0ba902b7",
  "user.id_hash": "u_9f2c7e",
  "client.ip": "203.0.113.5",
  "user_agent": "MobileApp/5.2",
  "outcome": "success",
  "sample_rate": 10,
  "schema.version": 1
}

Notes:

- Use http.route as a template (e.g., /orders/{id}) to avoid cardinality explosions from raw paths.

- Hash or tokenize user identifiers to avoid PII leakage while preserving join-ability.

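- Apply naming rules consistently: dot.notation groups fields by domain (http.*, error.*, infra.*), and snake_case names standalone fields (duration_ms, request_id). Don't let the same field appear under two spellings.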
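To make the schema enforceable rather than aspirational, a small validator can run in tests or in the pipeline. The following is a minimal sketch, not a full JSON Schema: the REQUIRED_FIELDS table and the validateEvent function are hypothetical names chosen for illustration, and the raw-path check is a simple heuristic (a purely numeric path segment).

```javascript
// Hypothetical required-field table for schema version 1 (field -> expected typeof).
const REQUIRED_FIELDS = {
  timestamp: 'string',
  level: 'string',
  service: 'string',
  environment: 'string',
  'http.method': 'string',
  'http.route': 'string',
  'http.status_code': 'number',
  duration_ms: 'number',
  request_id: 'string',
  trace_id: 'string',
  outcome: 'string',
  'schema.version': 'number',
};

const LEVELS = new Set(['DEBUG', 'INFO', 'WARN', 'ERROR']);

// Returns a list of human-readable problems; an empty list means the event passes.
function validateEvent(event) {
  const problems = [];
  for (const [field, type] of Object.entries(REQUIRED_FIELDS)) {
    if (!(field in event)) problems.push(`missing ${field}`);
    else if (typeof event[field] !== type) problems.push(`${field} should be ${type}`);
  }
  if (typeof event.level === 'string' && !LEVELS.has(event.level)) {
    problems.push(`unknown level ${event.level}`);
  }
  // W3C trace ids are 32 lowercase hex characters.
  if (typeof event.trace_id === 'string' && !/^[0-9a-f]{32}$/.test(event.trace_id)) {
    problems.push('trace_id is not 32 lowercase hex characters');
  }
  // Heuristic: a purely numeric path segment suggests a raw path, not a template.
  if (typeof event['http.route'] === 'string' && /\/\d+(\/|$)/.test(event['http.route'])) {
    problems.push('http.route looks like a raw path, expected a template');
  }
  return problems;
}
```

Running validateEvent over a sample of production events in CI is one way to catch drift before it reaches dashboards.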

PII, Security, and Compliance by Construction

- Classify fields: public, internal, sensitive, restricted. Keep a registry.

- Avoid raw payload logging. If you must, log summaries: lengths, counts, or sample redacted exemplars.

- Redact at source with allowlists (safer than deny lists). Example: log only credit_card.last4, never the full card number.

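- Hashing strategy: derive user.id_hash with a keyed hash (e.g., HMAC with a secret key). An unkeyed hash of a low-entropy id can be reversed by enumeration, and reversible encodings such as Base64 are not hashing at all.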

- Access control: route sensitive streams to restricted indexes/namespaces; enable field-level ACLs.

- Retention tiers: short hot (7–14 days), warm (30–90 days), cold/archive (months+) with restore workflows.
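The allowlist and keyed-hash rules above can be sketched in a few lines of Node.js. This is an illustration under assumptions: ALLOWED_FIELDS, hashUserId, redact, and summarizePayload are hypothetical names, the allowlist contents are examples only, and the key would come from a secret store rather than source code.

```javascript
const crypto = require('node:crypto');

// Hypothetical allowlist: only these fields may leave the process.
const ALLOWED_FIELDS = new Set([
  'http.method', 'http.route', 'http.status_code',
  'duration_ms', 'user.id_hash', 'payload.bytes',
]);

// Keyed hash (HMAC-SHA256), truncated to 16 hex characters for readability.
// Truncation trades collision resistance for shorter values; keep more if needed.
function hashUserId(userId, key) {
  const digest = crypto.createHmac('sha256', key).update(String(userId)).digest('hex');
  return 'u_' + digest.slice(0, 16);
}

// Keeps allowlisted fields and drops everything else.
function redact(fields) {
  const safe = {};
  for (const [name, value] of Object.entries(fields)) {
    if (ALLOWED_FIELDS.has(name)) safe[name] = value;
  }
  return safe;
}

// Summarizes a request body (its serialized size) instead of logging it.
function summarizePayload(body) {
  return { 'payload.bytes': Buffer.byteLength(JSON.stringify(body)) };
}
```

Because the hash is keyed, the same user maps to the same user.id_hash across services that share the key, which preserves joins without exposing the raw id.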

Emit Logs Where They Happen: Middlewares and Interceptors

Instrument API edges uniformly. Centralize common context injection (trace, request, user) in middleware so handlers stay clean.

Example: Node.js (Express) request logger with W3C trace context propagation:

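const crypto = require('node:crypto');
const express = require('express');
const { v4: uuidv4 } = require('uuid');

const app = express();

// W3C traceparent layout: version-traceid-parentid-flags, all lowercase hex.
const TRACEPARENT_RE = /^[0-9a-f]{2}-([0-9a-f]{32})-[0-9a-f]{16}-[0-9a-f]{2}$/;

app.use((req, res, next) => {
  const start = process.hrtime.bigint();

  // Reuse the gateway's request id when present; otherwise mint one.
  req.request_id = req.headers['x-request-id'] || uuidv4();

  // Continue the caller's trace only if the header is well-formed; otherwise start a new trace.
  const match = TRACEPARENT_RE.exec(req.headers['traceparent'] || '');
  req.trace_id = match ? match[1] : uuidv4().replace(/-/g, '');
  // Each service handling the request gets its own span id (16 hex characters).
  req.span_id = crypto.randomBytes(8).toString('hex');

  // 'finish' fires after the response is fully written, so status and duration are final.
  // It does not fire if the client disconnects first; handle 'close' if you need outcome=cancelled.
  res.on('finish', () => {
    const duration_ms = Number(process.hrtime.bigint() - start) / 1e6;
    const event = {
      timestamp: new Date().toISOString(),
      level: res.statusCode >= 500 ? 'ERROR' : 'INFO',
      service: 'orders-api',
      environment: process.env.NODE_ENV || 'dev',
      'http.method': req.method,
      // Route template (e.g. /orders/:id), never the raw path; unmatched requests share one label.
      'http.route': req.route ? req.baseUrl + req.route.path : 'unmatched',
      'http.status_code': res.statusCode,
      duration_ms,
      request_id: req.request_id,
      trace_id: req.trace_id,
      span_id: req.span_id,
      outcome: res.statusCode >= 500 ? 'error' : 'success'
    };
    console.log(JSON.stringify(event));
  });

  next();
});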

Tips:

- Always log at response finish to capture actual status and duration.

- Normalize http.route at the router layer to avoid raw paths.

- Don’t let libraries auto-log payloads; explicitly control fields.
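A request log is only half of the story: log lines written deeper in the call stack need the same ids. One way to do that in Node.js without threading ids through every function is AsyncLocalStorage. The sketch below assumes an earlier middleware has already set req.request_id and req.trace_id, as in the example above; requestContext, buildEvent, log, and contextMiddleware are hypothetical names.

```javascript
const { AsyncLocalStorage } = require('node:async_hooks');

// Holds the correlation ids for whichever request is currently being handled.
const requestContext = new AsyncLocalStorage();

// Builds a structured event. Context ids are spread last so callers cannot overwrite them.
function buildEvent(level, message, fields = {}) {
  const ctx = requestContext.getStore() || {};
  return { timestamp: new Date().toISOString(), level, message, ...fields, ...ctx };
}

// Writes one JSON line to stdout.
function log(level, message, fields) {
  console.log(JSON.stringify(buildEvent(level, message, fields)));
}

// Express-style middleware: everything reached through next() sees the same context,
// including code that runs later in promises and timers started by the handler.
function contextMiddleware(req, res, next) {
  const ctx = { request_id: req.request_id, trace_id: req.trace_id };
  requestContext.run(ctx, next);
}
```

With app.use(contextMiddleware) registered after the id-assigning middleware, a handler can call log('WARN', 'retrying upstream', { attempt: 2 }) and the emitted line carries request_id and trace_id automatically.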

Correlation: The W3C Trace Context Is Your Backbone

Adopt W3C Trace Context across all services (traceparent, tracestate). Gateways should create request_id and start a root span; downstream services must propagate ids through HTTP headers and messaging metadata.

Essentials:

- Generate and propagate trace_id/span_id for every request.

- Attach trace_id, span_id, and request_id to every log event.

- For async flows (queues, streams), copy the trace context into message headers; continue the trace when consuming.

- Use span links for fan-out/fan-in patterns, where one span relates to several traces or parents.
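The propagation step can be sketched as two small functions: parse the incoming traceparent, then build the header for the next hop with a fresh span id. This is a minimal illustration that handles only the four-field layout (version, trace id, parent id, flags) and ignores tracestate; parseTraceparent and nextHop are hypothetical names, and marking brand-new traces as sampled is an assumption.

```javascript
const crypto = require('node:crypto');

const TRACEPARENT_RE = /^([0-9a-f]{2})-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$/;

// Parses a traceparent header; returns null if it is absent or malformed.
function parseTraceparent(header) {
  const m = TRACEPARENT_RE.exec(header || '');
  if (!m) return null;
  const [, version, traceId, parentSpanId, flags] = m;
  // Version ff and all-zero ids are invalid.
  if (version === 'ff' || /^0+$/.test(traceId) || /^0+$/.test(parentSpanId)) return null;
  return { traceId, parentSpanId, sampled: (parseInt(flags, 16) & 1) === 1 };
}

// Continues the incoming trace (or starts a new one) and returns the ids for this
// service's span plus the header value to send downstream.
function nextHop(incomingHeader) {
  const parent = parseTraceparent(incomingHeader);
  const traceId = parent ? parent.traceId : crypto.randomBytes(16).toString('hex');
  const spanId = crypto.randomBytes(8).toString('hex');
  // Assumption: new traces start as sampled; existing traces keep the caller's decision.
  const flags = parent && !parent.sampled ? '00' : '01';
  return {
    traceId,
    spanId,
    parentSpanId: parent ? parent.parentSpanId : null,
    traceparent: `00-${traceId}-${spanId}-${flags}`,
  };
}
```

The same traceparent string can be copied into message headers for queues and streams, so a consumer calling nextHop on it continues the producer's trace. In practice an OpenTelemetry SDK would handle this; the sketch only shows what travels on the wire.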

Traces and Logs: Two Sides of the Same Coin

- Write critical log messages as span events or link logs to spans via trace_id/span_id.

- Include exemplars in metrics (a sample trace_id for a high-latency bucket) to jump from charts to traces instantly.

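- Standard span attributes to always set at API boundaries: http.method, http.route, http.status_code, client.address, user_agent.original. Newer OpenTelemetry semantic conventions rename some of these (http.request.method, http.response.status_code) and no longer use net.peer.ip; pick one convention version and use it in both spans and logs.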

Sampling That Preserves Insight

Collect everything in development and staging. In production:

- Head sampling for volume protection (e.g., 10–20%).

- Tail or dynamic sampling to keep interesting requests at higher rates: errors, high latency, rare routes, high-value tenants.

- Per-endpoint budgets: sample common 200 OKs lower than 5xx or slow 2xx.

- Document sampling in the log schema (sample_rate) so queries can weight counts back to estimates when appropriate.
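A per-service version of this policy can be sketched as follows. It decides after the response is known, keeps all errors and slow requests, keeps a fixed percentage of everything else, and records sample_rate as a percentage so counts can be weighted back. The names KEEP_PERCENT, SLOW_MS, sample, and estimateTotal and the thresholds are assumptions for illustration; this is log-level sampling inside one service, not distributed tail sampling in a collector.

```javascript
// Hypothetical keep-rates, in percent. Tune per endpoint.
const KEEP_PERCENT = { error: 100, slow: 100, default: 10 };
const SLOW_MS = 1000;

function keepPercent(event) {
  if (event['http.status_code'] >= 500) return KEEP_PERCENT.error;
  if (event.duration_ms >= SLOW_MS) return KEEP_PERCENT.slow;
  return KEEP_PERCENT.default;
}

// Returns the event annotated with sample_rate, or null if it should be dropped.
// The decision is deterministic per trace: the first 8 hex characters of trace_id
// are mapped to a number in [0, 100), so every log line of a trace gets the same
// verdict. This assumes trace ids are uniformly random.
function sample(event) {
  const percent = keepPercent(event);
  const bucket = (parseInt(event.trace_id.slice(0, 8), 16) / 0x100000000) * 100;
  if (bucket >= percent) return null;
  return { ...event, sample_rate: percent };
}

// Estimates how many requests the kept events represent (each stands for 100 / sample_rate).
function estimateTotal(keptEvents) {
  return keptEvents.reduce((sum, e) => sum + 100 / e.sample_rate, 0);
}
```

For example, one kept event with sample_rate 10 and one with sample_rate 100 together represent an estimated 11 requests.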

From Logs to Metrics: Derive What You Alert On

Define log-based metrics that reflect your user experience (golden signals):

- Request rate (RPS): count by service and route.

- Error rate: proportion of 5xx (and relevant 4xx) responses.

- Latency: p50/p90/p95/p99 by route and method.

- Saturation: queue length, concurrency, backpressure signals from infra.

Use derived metrics for SLOs and alerts; use traces and logs for diagnosis.
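To make the derivation concrete, here is a small sketch that folds a window of request events into rate, error rate, and p95 per route. It assumes unsampled events (or events already weighted back) and uses the nearest-rank percentile definition; goldenSignals and percentile are hypothetical names. A real pipeline would compute these incrementally in a stream processor or TSDB rather than in application code.

```javascript
// Nearest-rank percentile: the smallest value with at least p% of samples at or below it.
function percentile(values, p) {
  if (values.length === 0) return null;
  const sorted = [...values].sort((a, b) => a - b);
  const rank = Math.ceil((p * sorted.length) / 100);
  return sorted[Math.max(rank, 1) - 1];
}

// Aggregates request events from one time window into per-route golden signals.
function goldenSignals(events, windowSeconds) {
  const byRoute = new Map();
  for (const e of events) {
    const route = e['http.route'];
    const stats = byRoute.get(route) || { count: 0, errors: 0, durations: [] };
    stats.count += 1;
    if (e['http.status_code'] >= 500) stats.errors += 1;
    stats.durations.push(e.duration_ms);
    byRoute.set(route, stats);
  }
  const out = {};
  for (const [route, stats] of byRoute) {
    out[route] = {
      rps: stats.count / windowSeconds,
      error_rate: stats.errors / stats.count,
      p95_ms: percentile(stats.durations, 95),
    };
  }
  return out;
}
```

Keying the aggregation on http.route only works because the route is a template; with raw paths the map would grow with every distinct id.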

High-Cardinality Without the Bill Shock

- Use route templates; never raw URLs.

- Quantize numeric ids (e.g., bucket by powers of 10) if they must appear.

- Hash user ids; never store emails or phone numbers as labels.

- Keep user_agent raw only in logs; classify into families for metrics.

- Consider cardinality budgets per team, enforced in CI via a schema linter.
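Two of these reductions are easy to show in code: collapsing raw user agents into a handful of families for metric labels, and bucketing a numeric value by power of ten. The patterns below are illustrative assumptions rather than a complete classifier, and userAgentFamily and magnitudeBucket are hypothetical names; the MobileApp prefix matches the sample event earlier in this article.

```javascript
// Maps a raw user agent to a small, fixed set of label values.
function userAgentFamily(ua) {
  if (!ua) return 'unknown';
  if (/bot|crawler|spider/i.test(ua)) return 'bot';
  if (/^(curl|wget|python-requests|okhttp|go-http-client)/i.test(ua)) return 'http-library';
  if (/^MobileApp\//.test(ua)) return 'mobile-app'; // assumed first-party app prefix
  if (/Mozilla\//.test(ua)) return 'browser';
  return 'other';
}

// Buckets a positive number by power of ten: 7 -> '1-9', 42 -> '10-99', 1000 -> '1000-9999'.
function magnitudeBucket(n) {
  if (!Number.isFinite(n) || n < 1) return '<1';
  let low = 1;
  while (low * 10 <= n) low *= 10;
  return `${low}-${low * 10 - 1}`;
}
```

The raw user_agent still goes into the log event; only the family is used as a metric label, which caps that label at six values.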

Storage and Cost Control Architecture

A pragmatic, tool-agnostic pipeline:

- Ingest: OpenTelemetry SDKs emit traces/metrics; app logs to stdout as JSON.

- Collection: Sidecars/agents (e.g., Fluent Bit, Vector) tail stdout; OpenTelemetry Collector receives OTLP.

- Enrichment: Add environment, node, and zone; normalize http.route; redact fields.

- Routing:

  - Logs → hot store (e.g., Elasticsearch/OpenSearch/ClickHouse/Loki) with index templates per service.

  - Traces → trace store (e.g., Tempo/Jaeger/Elastic APM/Cloud vendor).

  - Metrics → TSDB (e.g., Prometheus/Mimir/Cortex/VictoriaMetrics).

- Retention tiers and compaction: Move old/low-value data to warm/cold tiers.

- Visualization and alerting: Grafana/Kibana/Cloud UIs; integrate paging.

Cost levers:

- Reduce log verbosity in hot path; prefer structured events that summarize work (one request log, not ten debug lines).

- Drop debug in prod except behind dynamic sampling toggles.

- Index only searchable fields; store the rest unindexed for retrieval.

- Use compression and chunking optimized for your backend (e.g., Parquet/Delta for data lake archival).

Query Patterns That Matter

- Top erroring routes in the last 15 minutes.

- Routes contributing most to p95 latency.

- Sudden error-rate increase correlated with a deployment.

- Tenant or region-specific degradations.

Example pseudo-queries:

- Top routes by p95 latency:

SELECT http.route, APPROX_PERCENTILE(duration_ms, 0.95) AS p95
FROM api_logs
WHERE timestamp > now() - INTERVAL 15 MINUTE
  AND environment = 'prod'
GROUP BY http.route
ORDER BY p95 DESC
LIMIT 10;

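- Errors correlated with deploy (grouped by version, so old and new versions appear side by side during a rollout; MAX(service_version) would compare version strings lexicographically and hide the mix):

SELECT window_start, service_version,
       COUNT(*) AS requests, SUM(is_error) AS errors
FROM (
  SELECT TUMBLE_START(timestamp, INTERVAL 5 MINUTE) AS window_start,
         CASE WHEN http.status_code >= 500 THEN 1 ELSE 0 END AS is_error,
         service_version
  FROM api_logs
  WHERE timestamp > now() - INTERVAL 2 HOUR
    AND environment = 'prod'
)
GROUP BY window_start, service_version
ORDER BY window_start, service_version;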

SLOs, Alerts, and On-Call Ergonomics

- SLIs: availability (non-5xx / total), latency (p95 under threshold), correctness (business validations), and freshness (for async APIs).

- SLOs (example targets): 99.9% availability over 30 days; p95 < 300 ms for read routes over 7 days.

- Error budgets: drive release pace and incident severity.

- Alerting: use multi-window burn-rate alerts on SLOs, pairing a longer window with a shorter one (e.g., 6h/2h) so pages fire on sustained budget burn and clear soon after recovery. Alert on symptoms (latency, errors), not causes.

- Runbooks: every alert links to a trace explorer with filters pre-applied (service, route, version, zone).
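The arithmetic behind error budgets and burn rates is short enough to write down. A 99.9% availability SLO over 30 days leaves 0.1% of 43,200 minutes, about 43.2 minutes of budget. Burn rate is the observed error ratio divided by the allowed error ratio, so a burn rate of 1 spends the budget exactly over the SLO window. The sketch below uses hypothetical function names, and the threshold passed to shouldPage is a policy choice, not a standard.

```javascript
// Total error budget, in minutes, for an availability target over a window.
function errorBudgetMinutes(sloTarget, windowDays) {
  return (1 - sloTarget) * windowDays * 24 * 60;
}

// How fast the budget is being spent: 1 means "exactly on budget",
// 10 means the budget would be gone in a tenth of the SLO window.
function burnRate(errorRatio, sloTarget) {
  return errorRatio / (1 - sloTarget);
}

// Multi-window check: page only if both the long and the short window exceed the
// threshold. The long window shows the burn is sustained; the short window shows
// it is still happening, so the alert clears soon after recovery.
function shouldPage(longWindowErrorRatio, shortWindowErrorRatio, sloTarget, threshold) {
  return (
    burnRate(longWindowErrorRatio, sloTarget) >= threshold &&
    burnRate(shortWindowErrorRatio, sloTarget) >= threshold
  );
}
```

For instance, with a 99.9% target a 2% error ratio is a burn rate of about 20; if the short window has already dropped back to 0.1% errors (burn rate about 1), shouldPage returns false and the page does not fire.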

Testing Observability Like You Test Code

- Instrumentation tests: ensure every request produces a request log with trace_id and normalized http.route.

- Redaction tests: send seeded PII through staging and assert it never appears in stored events.

- Load tests: verify p95/p99 under target and that collectors don’t become bottlenecks.

- Chaos drills: kill pods, throttle networks, rotate certificates—observe signals while inducing failure.
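The instrumentation and redaction tests can share one checker that runs over captured log lines, for example stdout collected during an integration test in staging. This is a sketch under assumptions: checkLogLines is a hypothetical helper, the raw-path check is a heuristic for numeric segments, and seededSecrets stands for whatever marker values your test deliberately sends through the API.

```javascript
// Returns a list of failures found in captured log lines; empty means all checks passed.
function checkLogLines(lines, seededSecrets = []) {
  const failures = [];
  lines.forEach((line, i) => {
    let event;
    try {
      event = JSON.parse(line);
    } catch {
      failures.push(`line ${i}: not valid JSON`);
      return;
    }
    if (!/^[0-9a-f]{32}$/.test(event.trace_id || '')) {
      failures.push(`line ${i}: missing or malformed trace_id`);
    }
    const route = event['http.route'];
    if (!route || /\/\d+(\/|$)/.test(route)) {
      failures.push(`line ${i}: http.route missing or not a template`);
    }
    // Redaction check: seeded marker values must never appear anywhere in the line.
    for (const secret of seededSecrets) {
      if (line.includes(secret)) failures.push(`line ${i}: contains seeded value`);
    }
  });
  return failures;
}
```

A test would send requests containing a marker such as a fake email address, collect the emitted lines, and assert that checkLogLines(lines, [marker]) returns an empty array.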

Governance: Keep It Boring and Documented

- Schema registry: version fields; publish JSON schema and examples.

- Breaking changes policy: within a schema version, allow only additive field changes; roll schema changes out during business hours; deprecate fields with a grace period before removing them.

- Ownership: each service owns its dashboards, alerts, and runbooks; platform team owns shared pipeline and guardrails.

- Backfill strategy: when adding new fields, plan partial backfills for comparability.

A 30‑Day Adoption Plan

Week 1:

- Define schema v1 and redaction rules.

- Enable W3C Trace Context in gateway and two services.

Week 2:

- Add middleware-based structured logging to all API edges.

- Stand up collector(s) and route logs/traces to target stores.

Week 3:

- Create golden-signal dashboards and two SLOs per service.

- Implement head sampling (e.g., 20%) and dynamic tail sampling for errors/slow paths.

Week 4:

- Add log-based metrics and burn-rate alerts.

- Write incident runbooks and implement ownership.

- Run a chaos drill and refine dashboards.

Common Failure Modes (and Fixes)

- High-cardinality explosion: use http.route templates; remove raw ids from labels; hash identifiers.

- Noisy debug logs: introduce dynamic log levels and sampling toggles; drop debug in prod by default.

- Orphan logs with no trace_id: fix propagation; instrument middleware to attach ids to all logs.

- Payload PII leaks: replace deny-list regexes with allowlists; write redaction tests.

- Expensive queries during incidents: pre-build saved queries and use derived metrics for alert pivots.

Quick Operational Checklist

- Logs are JSON and validate against schema.version.

- Every log has request_id, trace_id, http.route, and duration_ms.

- PII policy enforced at source; sensitive fields never indexed.

- Sampling configured (head + dynamic/tail) with sample_rate recorded.

- Dashboards show rate, error, latency by route and version.

- SLOs defined with burn-rate alerts; links jump to traces and logs.

- Storage tiers and retention documented; index templates applied.

- Runbooks exist; on-call can reproduce the top 5 queries quickly.

Conclusion

Structured observability for APIs is about making the right data easy to produce and easier to use. A small number of well-chosen fields, consistent correlation across services, and deliberate pipelines let you answer the only two questions that matter during an incident: what changed, and where is the time going? Put structure first, then scale with sampling, tiering, and governance. Your mean-time-to-why will thank you.

Related Posts

GraphQL Error Handling Best Practices: Clear, Secure, and Resilient APIs

A practical guide to GraphQL error handling: schema design, HTTP codes, partial data, masking, client patterns, observability, and examples.

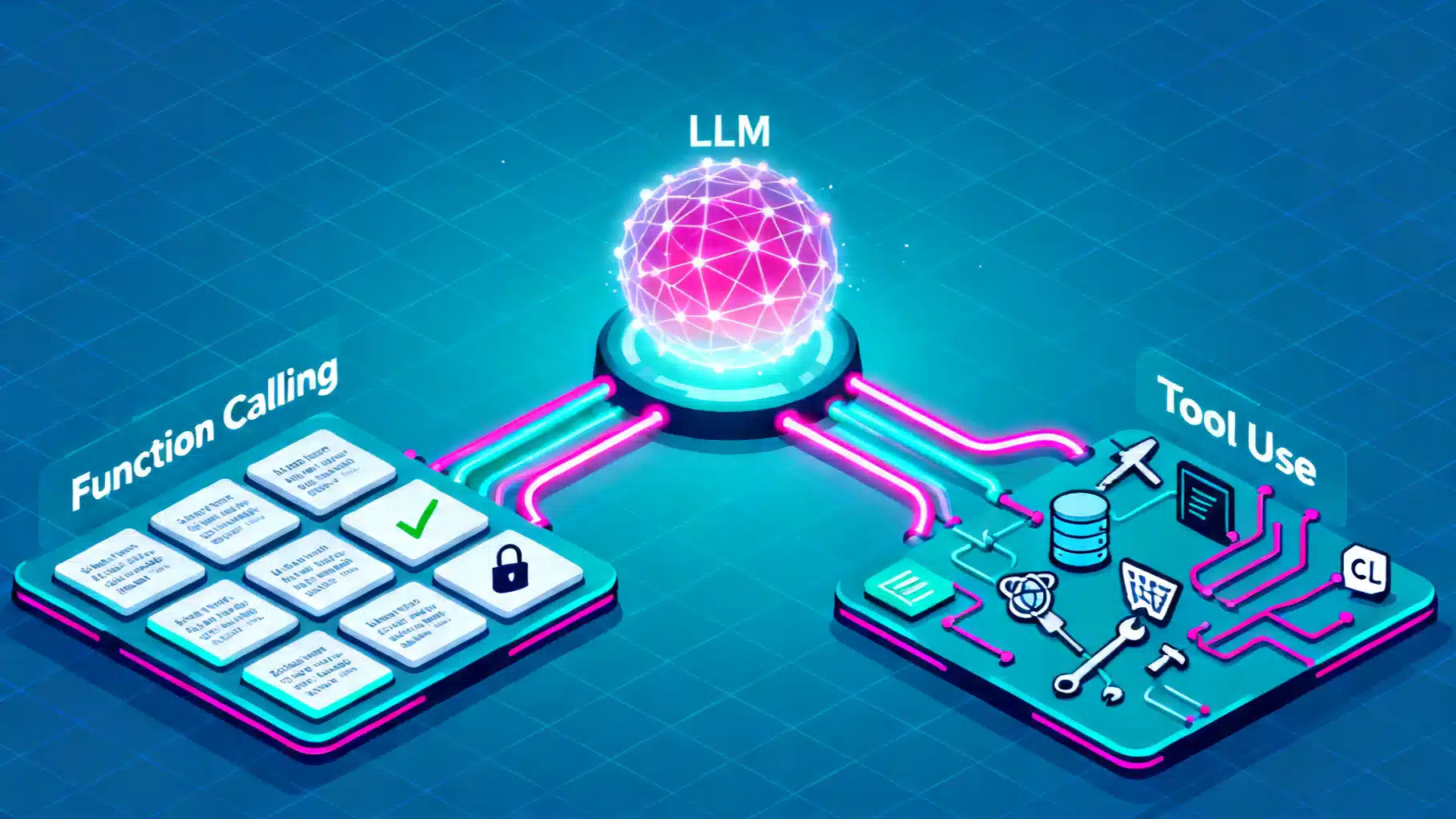

Function Calling vs. Tool Use in LLMs: Architecture, Trade-offs, and Patterns

A practical guide to function calling vs. tool use in LLMs: architectures, trade-offs, design patterns, reliability, security, and evaluation.

Designing API Throttling and User Tiers: A Practical Guide for Scalable Platforms

Design robust API throttling and user tier management with algorithms, policies, headers, and billing integration.