Building an AI Marketing Copy Generation API: Architecture, Control, and ROI

Design a production-grade AI marketing copy generation API: architecture, prompts, guardrails, evaluation, and code examples.


Overview

AI-powered copy generation has matured from a marketing experiment into a core capability for growth teams. A well-designed API lets product, lifecycle, and performance marketers generate on-brand copy—headlines, ad variants, email subject lines, product descriptions—programmatically and safely. This article walks through the architecture, data modeling, prompts, guardrails, evaluation, and operations needed to run a production-grade AI marketing copy generation API.

Core capabilities to support

- Channels: ads (search, social, display), email, push, in-app, landing pages, app store listings.

- Content types: headlines, body copy, CTAs, captions, descriptions, snippets, meta tags.

- Controls: tone, voice, length, keywords, reading level, target audience, region/language.

- Guardrails: brand rules, legal constraints, banned terms, factual verification, claims restricted to referenced product data.

- Operations: A/B variant generation, streaming previews, structured outputs, analytics hooks.

Build vs. buy: a pragmatic lens

- Buy if you need quick time-to-value, a managed moderation stack, and non-differentiated features.

- Build if your brand voice, compliance checks, or product knowledge integration (catalogs, pricing, availability) are competitive advantages.

- Hybrid is common: vendor LLM + your orchestration, retrieval, guardrails, and evaluation.

Reference architecture

A typical pipeline involves:

- Request normalization: validate inputs, expand templates, attach defaults (tone, region, campaign).

- Retrieval: assemble context from brand voice, product catalog, past winners, and style guides.

- Prompt assembly: deterministic template with variables and constraints, plus examples.

- Generation: call the model (with temperature controls) and request structured JSON output.

- Post-processing: lint for length, banned terms, reading level, and keyword coverage.

- Verification: fact-check against product data and policy rules; block or revise if needed.

- Scoring: heuristic and model-based quality scoring; optional toxicity/PII checks.

- Delivery: return variants, rationale, and metadata; log for analytics.
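The stages above map naturally onto a chain of functions that share one context object. The sketch below is a minimal illustration under stated assumptions: every stage name and body is hypothetical, retrieval and generation are canned stand-ins rather than real model calls, and scoring and delivery are omitted. It shows the control flow: stages run in order, each one is recorded in a trace for lineage, and a blocked result short-circuits the rest.

```python
from typing import Callable

# Each stage receives the shared context dict and returns it, possibly updated.
Stage = Callable[[dict], dict]


def normalize(ctx: dict) -> dict:
    # Attach defaults without overwriting values the caller supplied.
    ctx["request"] = {"tone": "neutral", "region": "US", **ctx["request"]}
    return ctx


def retrieve(ctx: dict) -> dict:
    # Hypothetical stand-in for brand-voice, catalog, and past-winner retrieval.
    ctx["facts"] = [f"{ctx['request']['product']} uses breathable mesh"]
    return ctx


def assemble_prompt(ctx: dict) -> dict:
    ctx["prompt"] = f"Write copy for {ctx['request']['product']}. Facts: {ctx['facts']}"
    return ctx


def generate(ctx: dict) -> dict:
    # Hypothetical stand-in for the model call; a real stage would parse structured JSON.
    ctx["variants"] = [f"{ctx['request']['product']}: run lighter."]
    return ctx


def post_process(ctx: dict) -> dict:
    # Lint step: drop variants over the character limit.
    limit = ctx["request"].get("max_chars", 60)
    ctx["variants"] = [v for v in ctx["variants"] if len(v) <= limit]
    return ctx


def verify(ctx: dict) -> dict:
    # Block delivery when nothing survives the earlier checks.
    ctx["blocked"] = not ctx["variants"]
    return ctx


PIPELINE: list[Stage] = [normalize, retrieve, assemble_prompt, generate, post_process, verify]


def run(request: dict) -> dict:
    ctx = {"request": request, "trace": []}
    for stage in PIPELINE:
        ctx = stage(ctx)
        ctx["trace"].append(stage.__name__)  # lineage for logs and audits
        if ctx.get("blocked"):
            break  # stop before any later stage runs
    return ctx
```

Keeping stages as plain functions makes it easy to swap one (for example, a different retriever) without touching the others.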

Data modeling: a minimal schema

Use structured inputs and outputs to reduce ambiguity.

Example input payload:

```json
{
  "channel": "paid_social",
  "objective": "clicks",
  "audience": {"persona": "value_shopper", "region": "US", "language": "en"},
  "product": {
    "id": "SKU-123",
    "name": "Nimbus Running Shoes",
    "features": ["breathable mesh", "lightweight foam", "recycled materials"],
    "price": 89.99
  },
  "constraints": {
    "tone": "upbeat, trustworthy",
    "keywords": ["lightweight", "breathable"],
    "reading_level": "Grade 7",
    "max_chars_headline": 60,
    "max_chars_body": 120
  },
  "variants": 4,
  "brand_rules_ref": "brand-v4"
}
```
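Request normalization is where this schema gets enforced. A minimal sketch, assuming the payload arrives as a parsed dict: the allowed channel list, the default values, and the 1 to 10 variant range below are illustrative assumptions, not part of any fixed contract. Errors are collected and reported together so a client can fix everything in one round trip.

```python
# Hypothetical allow-list and defaults; adjust to your own channel taxonomy.
ALLOWED_CHANNELS = {"paid_social", "search", "display", "email_subject", "push", "in_app"}
DEFAULT_CONSTRAINTS = {"tone": "neutral", "max_chars_headline": 60, "max_chars_body": 120}


class ValidationError(ValueError):
    pass


def normalize_request(payload: dict) -> dict:
    """Validate a generate request and fill in defaults for omitted fields."""
    errors = []
    channel = payload.get("channel")
    if channel not in ALLOWED_CHANNELS:
        errors.append(f"unknown channel: {channel!r}")
    product = payload.get("product") or {}
    if not product.get("id"):
        errors.append("product.id is required")
    variants = payload.get("variants", 3)
    # type() check rejects bools, which isinstance(x, int) would accept.
    if type(variants) is not int or not 1 <= variants <= 10:
        errors.append("variants must be an integer between 1 and 10")
    if errors:
        raise ValidationError("; ".join(errors))
    # Caller-supplied values override defaults.
    audience = {"region": "US", "language": "en", **payload.get("audience", {})}
    constraints = {**DEFAULT_CONSTRAINTS, **payload.get("constraints", {})}
    return {**payload, "audience": audience, "constraints": constraints, "variants": variants}
```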

Example output schema (structured JSON “copySpec”):

```json
{
  "copy": [
    {
      "headline": "Run lighter. Breathe easier.",
      "body": "Meet Nimbus: lightweight comfort with breathable mesh and planet-friendly design.",
      "cta": "Shop now",
      "keywords_covered": ["lightweight", "breathable"],
      "est_reading_level": 6,
      "char_counts": {"headline": 28, "body": 81},
      "compliance": {"banned_terms": [], "claims_verified": true}
    }
  ],
  "rationale": "Optimized for clicks: punchy verbs, benefits up front, clear CTA.",
  "diagnostics": {"model": "llm-x", "temperature": 0.7, "seed": 42}
}
```
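Because the model produces this JSON, the server should validate it rather than trust it. The sketch below (function and constant names are hypothetical) parses the raw model text, checks that each variant has the required fields, and recomputes `char_counts` server-side, since models are unreliable at counting characters. Python's `len` counts code points; a platform that measures length differently would need its own counter.

```python
import json

REQUIRED_VARIANT_KEYS = {"headline", "body", "cta"}


def parse_copy_spec(raw: str, max_headline: int, max_body: int) -> dict:
    """Parse model output as copySpec JSON and recompute server-owned fields."""
    try:
        spec = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model did not return valid JSON: {exc}") from exc
    if not isinstance(spec.get("copy"), list) or not spec["copy"]:
        raise ValueError("copySpec.copy must be a non-empty list")
    for i, variant in enumerate(spec["copy"]):
        missing = REQUIRED_VARIANT_KEYS - set(variant)
        if missing:
            raise ValueError(f"variant {i} missing {sorted(missing)}")
        # Never trust model-reported counts; overwrite them with measured values.
        counts = {"headline": len(variant["headline"]), "body": len(variant["body"])}
        variant["char_counts"] = counts
        variant["within_limits"] = counts["headline"] <= max_headline and counts["body"] <= max_body
    return spec
```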

Prompt engineering and control

Design prompts as deterministic templates that incorporate your schema and constraints. Keep few-shot examples fresh by rotating in recent top performers.

Example system + user prompt (pseudo):

```text
System: You are a senior performance copywriter. Output strictly as JSON matching the copySpec schema. Follow brand rules: {brand_rules}.

User: Create {variants} ad variants for {channel} with objective {objective}.
Audience: {audience}. Product: {product}.
Constraints: tone={tone}; max chars headline={max_h}; max chars body={max_b}; keywords={keywords}.
No medical or absolute claims. If uncertain, prefer benefit-led phrasing.
Return only JSON.
```
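One way to keep assembly deterministic is to render the template with Python's standard `string.Template` (using `$` placeholders in place of the braces) and to serialize structured fields with sorted keys, so the same request always produces byte-identical prompts, which also makes them cacheable and diffable. This is a sketch: the `{"role": ..., "content": ...}` message shape is a common chat-API convention assumed here, and `assemble_messages` is a hypothetical helper that expects an already-normalized request.

```python
import json
from string import Template

SYSTEM_TEMPLATE = Template(
    "You are a senior performance copywriter. Output strictly as JSON matching "
    "the copySpec schema. Follow brand rules: $brand_rules."
)
USER_TEMPLATE = Template(
    "Create $variants ad variants for $channel with objective $objective.\n"
    "Audience: $audience. Product: $product.\n"
    "Constraints: tone=$tone; max chars headline=$max_h; max chars body=$max_b; keywords=$keywords.\n"
    "No medical or absolute claims. If uncertain, prefer benefit-led phrasing.\n"
    "Return only JSON."
)


def canonical(value) -> str:
    # Sorted keys and fixed separators: equal inputs always render identically.
    return json.dumps(value, sort_keys=True, separators=(",", ":"), ensure_ascii=False)


def assemble_messages(req: dict, brand_rules: dict) -> list[dict]:
    c = req["constraints"]
    user = USER_TEMPLATE.substitute(
        variants=req["variants"], channel=req["channel"], objective=req["objective"],
        audience=canonical(req["audience"]), product=canonical(req["product"]),
        tone=c["tone"], max_h=c["max_chars_headline"], max_b=c["max_chars_body"],
        keywords=", ".join(c.get("keywords", [])),
    )
    system = SYSTEM_TEMPLATE.substitute(brand_rules=canonical(brand_rules))
    return [{"role": "system", "content": system}, {"role": "user", "content": user}]
```

`Template.substitute` raises `KeyError` on a missing variable, which surfaces template/schema drift immediately instead of silently sending a half-filled prompt.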

Controls to expose:

- Creativity: temperature, top_p, and a deterministic seed for reproducibility when desired.

- Diversity: nucleus (top_p) or top-k sampling, plus “anti-plagiarism” penalties that discourage variants from repeating one another.

- Length: hard character limits with server-side truncation fallback.

- Safety: stop sequences that halt generation when a risky phrase appears; brand rule DSL checks after generation.
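Two of these controls can also be enforced after generation. The sketch below shows a word-boundary truncation fallback for hard length limits, and a post-hoc near-duplicate filter that stands in for the “anti-plagiarism” idea: instead of penalizing during sampling, it drops any variant whose word-set Jaccard similarity to an already-kept variant reaches a threshold. The 0.6 threshold and all function names are assumptions to tune against your own data.

```python
import re


def truncate_at_word(text: str, limit: int, suffix: str = "") -> str:
    """Fallback for hard limits: cut to at most `limit` chars on a word boundary.

    Assumes `limit` is larger than len(suffix).
    """
    if len(text) <= limit:
        return text
    budget = limit - len(suffix)
    cut = text[:budget]
    # If the cut lands mid-word, back up to the previous space (when there is one).
    if text[budget] != " " and " " in cut:
        cut = cut[: cut.rfind(" ")]
    return cut.rstrip(" ,;:-") + suffix


def _words(text: str) -> set[str]:
    # Lowercase word tokens, ignoring punctuation such as "!" or ".".
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def jaccard(a: str, b: str) -> float:
    wa, wb = _words(a), _words(b)
    union = wa | wb
    return len(wa & wb) / len(union) if union else 1.0


def drop_near_duplicates(variants: list[str], threshold: float = 0.6) -> list[str]:
    """Keep variants in order, skipping any too similar to one already kept."""
    kept: list[str] = []
    for v in variants:
        if all(jaccard(v, k) < threshold for k in kept):
            kept.append(v)
    return kept
```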

API design: endpoints that matter

A simple REST surface works well; gRPC or GraphQL can come later.

- POST /v1/generate
  - Body: input schema above plus generation params (temperature, max_tokens, seed).
  - Query: stream=true to stream tokens for instant previews.
  - Response: copySpec JSON with diagnostics and moderation flags.

- POST /v1/variations
  - Body: existing creative ID + constraints to remix within brand boundaries.

- POST /v1/templates
  - Manage prompt templates and style-guide snippets; support versioning and canary releases.

- POST /v1/evaluate
  - Pairwise or listwise ranking of variants with rubrics (clarity, brand fit, compliance, novelty).

- GET /v1/brand-rules/{id}
  - Resolve rules used at generation time for auditability.

Example: cURL

```bash
# Double quotes let the shell expand $TOKEN; inside single quotes it would be sent literally.
curl -X POST https://api.yourdomain.com/v1/generate \
  -H "Authorization: Bearer $TOKEN" \
  -H 'Content-Type: application/json' \
  -d '{
    "channel": "email_subject",
    "objective": "open_rate",
    "audience": {"persona": "frequent_buyer", "language": "en"},
    "product": {"id": "SKU-123", "name": "Nimbus Running Shoes"},
    "constraints": {"tone": "friendly, concise", "max_chars_headline": 45},
    "variants": 5,
    "generation": {"temperature": 0.6, "seed": 101}
  }'
```

Example: Node.js (fetch with retries)

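```javascript
// Node.js 18+: global fetch and AbortSignal.timeout replace node-fetch's non-standard `timeout` option.
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

async function generateCopy(payload, { retries = 3, timeoutMs = 10000 } = {}) {
  for (let attempt = 0; ; attempt++) {
    let res;
    try {
      res = await fetch('https://api.yourdomain.com/v1/generate?stream=false', {
        method: 'POST',
        headers: {
          'Authorization': `Bearer ${process.env.API_TOKEN}`,
          'Content-Type': 'application/json'
        },
        body: JSON.stringify(payload),
        // Network resilience: abort any attempt that exceeds timeoutMs.
        signal: AbortSignal.timeout(timeoutMs)
      });
    } catch (err) {
      // Timeouts and network errors are retried until attempts run out.
      if (attempt >= retries) throw err;
      await sleep(500 * 2 ** attempt);
      continue;
    }
    if (res.ok) return res.json();
    // Retry rate limits (429) and server errors (5xx); other statuses fail fast.
    if ((res.status !== 429 && res.status < 500) || attempt >= retries) {
      throw new Error(`HTTP ${res.status}`);
    }
    await sleep(500 * 2 ** attempt); // exponential backoff: 0.5 s, 1 s, 2 s
  }
}

const payload = { /* ...as above... */ };

generateCopy(payload).then(console.log).catch(console.error);
```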

Guardrails: brand, legal, and safety

- Brand voice: define a rule set (allowed tones, banned phrases, capitalization, emoji policy). Enforce with a linter after generation; if violations exist, trigger an edit pass.

- Claims: never invent specs or discounts. Require verifiable inputs (price, availability) and insert only via templated variables from your product DB.

- Disclaimers: auto-attach regional or category disclaimers (e.g., health, finance) from a rules engine.

- PII and sensitivity: block requests that ask to generate content using personal data without explicit consent flags.

- Moderation: run toxicity, hate/abuse, and sensitive-topic classifiers. If flagged, either redact or request human review.
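The “trigger an edit pass” step can be a small bounded loop: lint, hand the violations back for a targeted revision, re-lint, and escalate to human review if violations survive. A minimal sketch, assuming `lint` and `revise` are supplied by the caller (in practice `revise` would typically be a second model call that lists the violations); the function name and the two-pass cap are assumptions, the cap being there to bound cost and latency.

```python
from typing import Callable


def enforce_with_edit_pass(
    text: str,
    lint: Callable[[str], list[str]],
    revise: Callable[[str, list[str]], str],
    max_passes: int = 2,
) -> tuple[str, list[str], bool]:
    """Lint, request targeted revisions, and flag for review if violations persist.

    Returns (final_text, remaining_violations, needs_human_review).
    """
    violations = lint(text)
    passes = 0
    while violations and passes < max_passes:
        text = revise(text, violations)  # e.g. a revision prompt listing each violation
        violations = lint(text)
        passes += 1
    return text, violations, bool(violations)
```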

Knowledge integration: RAG for facts

To avoid hallucinations, integrate Retrieval-Augmented Generation (RAG):

- Index product catalogs, feature docs, competitor matrices, and past winners with embeddings.

- At request time, retrieve top-k passages by SKU and channel.

- Insert only retrieved facts into the prompt’s context window.

- Post-check: verify that any factual statements map to a retrieved snippet; if not, downgrade or revise.

Pseudocode:

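```python
# retriever, assemble, llm, verify, and revise are placeholders for your own components.
facts = retriever.query([sku, channel, *keywords], k=6)
prompt = assemble(base_template, inputs, context=facts)
resp = llm.generate(prompt, temperature=0.5)
if not verify(resp, facts):
    # Ask for a revision that drops anything the retrieved facts cannot support.
    resp = revise(resp, rules="remove unverifiable claims")
```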
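The `verify` step can start narrow. The sketch below is one hypothetical implementation that checks only numeric claims (prices, percentages, counts): every number in the copy must also appear in a retrieved fact. It would catch an invented “Save 20%” but not an invented feature; verifying non-numeric claims needs something stronger, such as an entailment or judge model.

```python
import re

# Matches prices like $89.99, percentages like 20%, and bare numbers like 3.
NUMBER = re.compile(r"\$?\d+(?:\.\d+)?%?")


def unsupported_numbers(copy_text: str, facts: list[str]) -> list[str]:
    """Return numeric claims in the copy that appear in no retrieved fact."""
    fact_numbers = {m.group().lstrip("$") for f in facts for m in NUMBER.finditer(f)}
    return [
        m.group()
        for m in NUMBER.finditer(copy_text)
        if m.group().lstrip("$") not in fact_numbers
    ]


def verify(copy_text: str, facts: list[str]) -> bool:
    # Passes only when every number in the copy is grounded in the facts.
    return not unsupported_numbers(copy_text, facts)
```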

Evaluation: offline and online

- Offline
  - Heuristics: length, keyword coverage, reading level, forbidden terms.
  - Model judges: pairwise preference with a rubric (“clarity, benefit-first, on-brand, compliant”).
  - Human panel: small weekly calibration set to catch drift.

- Online
  - A/B or multi-armed bandits by channel and audience.
  - Metrics: CTR, CVR, add-to-cart rate, open/reply rate, revenue per send.
  - Guard for novelty bias: cap exposure per variant until significance.

Store lineage: variant -> prompt template -> model -> context docs -> ruleset -> datasets. This enables root-cause analysis and reproducibility.
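For the bandit option, Thompson sampling is one common choice (the text above does not prescribe an algorithm). The sketch below assumes a binary reward such as click or no click, keeps a Beta posterior per variant, and serves the variant with the highest sampled rate, so traffic shifts toward winners while weaker variants still get occasional exploration. Class and method names are hypothetical, and it does not implement the exposure cap mentioned above.

```python
import random
from typing import Optional


class ThompsonSampler:
    """Beta-Bernoulli Thompson sampling over copy variants."""

    def __init__(self, variant_ids: list[str], seed: Optional[int] = None):
        # Beta(1, 1) prior per variant: [successes + 1, failures + 1].
        self.stats = {v: [1, 1] for v in variant_ids}
        self.rng = random.Random(seed)

    def choose(self) -> str:
        # Draw a plausible click rate for each variant and serve the highest draw.
        draws = {v: self.rng.betavariate(a, b) for v, (a, b) in self.stats.items()}
        return max(draws, key=draws.get)

    def update(self, variant_id: str, clicked: bool) -> None:
        # A click increments alpha; an impression without a click increments beta.
        self.stats[variant_id][0 if clicked else 1] += 1
```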

Cost and performance controls

- Token budgeting: truncate context aggressively; summarize long specs first.

- Caching: memoize identical requests keyed by normalized payload; use embeddings to dedupe near-duplicates.

- Batch generation: request many variants in one call; this is cheaper than N separate calls because the shared prompt and context are sent (and billed) once.

- Streaming: show creatives as they generate to improve authoring UX.

- Asynchronous jobs: queue large batch requests; provide webhooks for completion.

- Idempotency keys: avoid duplicate generations on retries.
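Caching and idempotency both hinge on a stable key. A minimal sketch, assuming payloads are already normalized dicts: the cache key is a SHA-256 hash of canonical JSON, and a separate map replays results for retried requests that carry the same idempotency key. The class name is hypothetical, the in-memory dicts stand in for a shared store with a TTL, and exact-match caching only makes sense when the caller pins a `seed` or accepts reused variants. A production version should also reject an idempotency key reused with a different payload.

```python
import hashlib
import json
from typing import Callable, Optional


def cache_key(payload: dict) -> str:
    """Hash a normalized payload so logically identical requests share a key."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"), ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class GenerationCache:
    def __init__(self, generate: Callable[[dict], dict]):
        self.generate = generate
        self.by_payload: dict[str, dict] = {}
        self.by_idempotency_key: dict[str, dict] = {}

    def get_or_generate(self, payload: dict, idempotency_key: Optional[str] = None) -> dict:
        # A retried request with a known idempotency key replays the stored result.
        if idempotency_key and idempotency_key in self.by_idempotency_key:
            return self.by_idempotency_key[idempotency_key]
        key = cache_key(payload)
        if key not in self.by_payload:
            self.by_payload[key] = self.generate(payload)
        result = self.by_payload[key]
        if idempotency_key:
            self.by_idempotency_key[idempotency_key] = result
        return result
```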

Internationalization and localization

- Prefer transcreation over translation: generate natively in the target language rather than translating English copy.

- Control region-specific rules: measurement units, currencies, prohibited claims, cultural references.

- Offer language and locale fields; attach locale-specific stop words and banned phrases.
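Locale-specific rules are easiest to manage with a fallback chain from the most specific tag to a default. In this sketch (the rule table, its contents, and the function names are hypothetical), scalar settings such as currency come from the most specific locale that defines them, while banned phrases accumulate across every level, so a phrase banned at the language level also applies to each regional variant.

```python
# Hypothetical per-locale rule tables keyed by language tags.
LOCALE_RULES = {
    "default": {"currency": "USD", "banned_phrases": ["best ever"]},
    "fr": {"currency": "EUR", "banned_phrases": ["meilleur de tous les temps"]},
    "fr-CA": {"currency": "CAD"},
}


def locale_chain(locale: str) -> list[str]:
    """'fr-CA' -> ['fr-CA', 'fr', 'default'] (most specific first)."""
    parts = locale.split("-")
    return ["-".join(parts[:i]) for i in range(len(parts), 0, -1)] + ["default"]


def resolve_rules(locale: str) -> dict:
    resolved: dict = {"banned_phrases": []}
    # Walk from least to most specific so specific scalar values win,
    # while banned phrases accumulate across every level.
    for tag in reversed(locale_chain(locale)):
        for key, value in LOCALE_RULES.get(tag, {}).items():
            if key == "banned_phrases":
                resolved["banned_phrases"] += value
            else:
                resolved[key] = value
    return resolved
```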

Observability and governance

- Structured logs: include request hash, template version, model, latency, token counts, moderation flags.

- Metrics: success rate, average spend per 1K tokens, time-to-first-token (TTFT), variant win rate by channel.

- Alerts: sudden spike in moderation flags, brand rule violations, or CTR collapse in a canary.

- Access: per-tenant API keys, scopes (generate, templates:write, evaluate), and rate limits.

- Audits: endpoint to reconstruct any variant’s provenance.
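Per-tenant rate limits are commonly implemented as token buckets. The sketch below keeps one bucket per tenant (class names and parameters are assumptions) and takes an injectable clock so it can be tested deterministically. The `cost` argument lets a request consume more than one unit, for example in proportion to estimated LLM tokens; bucket “tokens” here are rate-limit units, not model tokens. A multi-instance deployment would need the bucket state in a shared store.

```python
import time
from typing import Callable


class TokenBucket:
    """Allows bursts up to `capacity`, refilling at `rate` units per second."""

    def __init__(self, capacity: float, rate: float, clock: Callable[[], float] = time.monotonic):
        self.capacity, self.rate, self.clock = capacity, rate, clock
        self.tokens = capacity
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


class TenantLimiter:
    def __init__(self, capacity: float, rate: float, clock: Callable[[], float] = time.monotonic):
        self.capacity, self.rate, self.clock = capacity, rate, clock
        self.buckets: dict[str, TokenBucket] = {}

    def allow(self, tenant_id: str, cost: float = 1.0) -> bool:
        # Lazily create one bucket per tenant so tenants cannot starve each other.
        if tenant_id not in self.buckets:
            self.buckets[tenant_id] = TokenBucket(self.capacity, self.rate, self.clock)
        return self.buckets[tenant_id].allow(cost)
```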

Sample brand rule DSL

```yaml
id: brand-v4
voice:
  allowed_tones: ["upbeat", "warm", "trustworthy"]
  banned_phrases: ["guaranteed results", "miracle", "best ever"]
style:
  capitalization: sentence
  emoji: disallow
  numerals:
    use_digits_over_ten: true
claims:
  allow_absolute: false
  require_refs: ["price", "materials"]
localization:
  region_overrides:
    EU:
      disclaimers: ["VAT may apply"]
```
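The brand-v4 rules above can drive a simple post-generation linter. This sketch transcribes the relevant fields into a Python dict rather than parsing YAML, to stay dependency-free; the `lint` name is hypothetical. The emoji range, the list of absolute words, and the proper-noun allowlist are heuristic assumptions, and the sentence-case check will flag any capitalized word it does not know is a proper noun, which is why the allowlist exists.

```python
import re

# The relevant brand-v4 fields, transcribed by hand (a YAML loader could produce the same shape).
BRAND_V4 = {
    "voice": {"banned_phrases": ["guaranteed results", "miracle", "best ever"]},
    "style": {"capitalization": "sentence", "emoji": "disallow"},
    "claims": {"allow_absolute": False},
}

# Heuristic patterns; both are assumptions, not part of the DSL.
EMOJI = re.compile("[\U0001F300-\U0001FAFF\u2600-\u27BF]")
ABSOLUTES = re.compile(r"\b(always|never|guaranteed|best|perfect)\b", re.IGNORECASE)


def lint(text: str, rules: dict = BRAND_V4, proper_nouns: frozenset = frozenset()) -> list[str]:
    violations = []
    lowered = text.lower()
    for phrase in rules["voice"]["banned_phrases"]:
        if phrase in lowered:
            violations.append(f"banned phrase: {phrase!r}")
    if rules["style"]["emoji"] == "disallow" and EMOJI.search(text):
        violations.append("emoji not allowed")
    if not rules["claims"]["allow_absolute"]:
        for m in ABSOLUTES.finditer(text):
            violations.append(f"absolute claim: {m.group()!r}")
    if rules["style"]["capitalization"] == "sentence":
        for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
            # Skip each sentence's first word; flag other capitalized non-acronyms.
            for word in sentence.split()[1:]:
                bare = word.strip(".,!?:;\"'")
                if bare[:1].isupper() and bare not in proper_nouns and not bare.isupper():
                    violations.append(f"not sentence case: {bare!r}")
    return violations
```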

Security and privacy

- Secrets: never put API keys in prompts or logs; store them in a secrets manager or KMS with per-tenant keys.

- Data retention: configurable TTL and redaction for PII in stored prompts and outputs.

- Model choice: isolate tenants that require stricter data handling on dedicated model deployments or providers.

- Compliance: document GDPR/CCPA data subject workflows; provide deletion endpoints.
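For the redaction half of data retention, a minimal sketch: replace detected PII with typed placeholders before prompts and outputs are written to storage. The two regexes and the `redact` name are deliberately simple assumptions; the patterns will miss some formats and can false-positive on long digit runs such as order numbers, so treat this as a first line of defense rather than a complete PII detector.

```python
import re

# Deliberately simple patterns (assumptions); real PII detection needs more than regexes.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text: str) -> str:
    """Replace detected PII with typed placeholders before storage."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text
```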

Launch checklist

- Agree on copySpec JSON schema and freeze v1 for 6 months.

- Create high-coverage tests: prompt assembly, rule linting, fact verification.

- Canary the model/template to <5% traffic; watch moderation and CTR deltas.

- Build rollback: previous template+model pair always available.

- Document failure modes and playbooks (timeouts, provider outages, hallucination spikes).
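Canarying works best when assignment is deterministic, so the same tenant or user stays on the same template and model pair across requests and restarts. The sketch below (function names and the salt value are hypothetical) hashes a unit id with a salt into [0, 1) and routes it to the canary when the value falls under the configured share; setting the share to 0 is the rollback path back to the stable pair. Changing the salt reshuffles who lands in the canary.

```python
import hashlib


def bucket(unit_id: str, salt: str) -> float:
    """Map an id to a stable value in [0, 1) that survives retries and restarts."""
    digest = hashlib.sha256(f"{salt}:{unit_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") / 2**64


def pick_release(unit_id: str, stable: dict, canary: dict, canary_share: float,
                 salt: str = "canary-1") -> dict:
    # canary_share=0.0 is an instant rollback: every unit returns to the stable pair.
    return canary if bucket(unit_id, salt) < canary_share else stable
```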

Conclusion

An AI marketing copy generation API succeeds when it is predictable, auditable, and optimized for business outcomes—not just fluent text. By grounding generations in brand rules and product facts, enforcing structured outputs, and continuously evaluating online performance, you can scale high-quality, on-brand creative across channels with confidence.

Related Posts

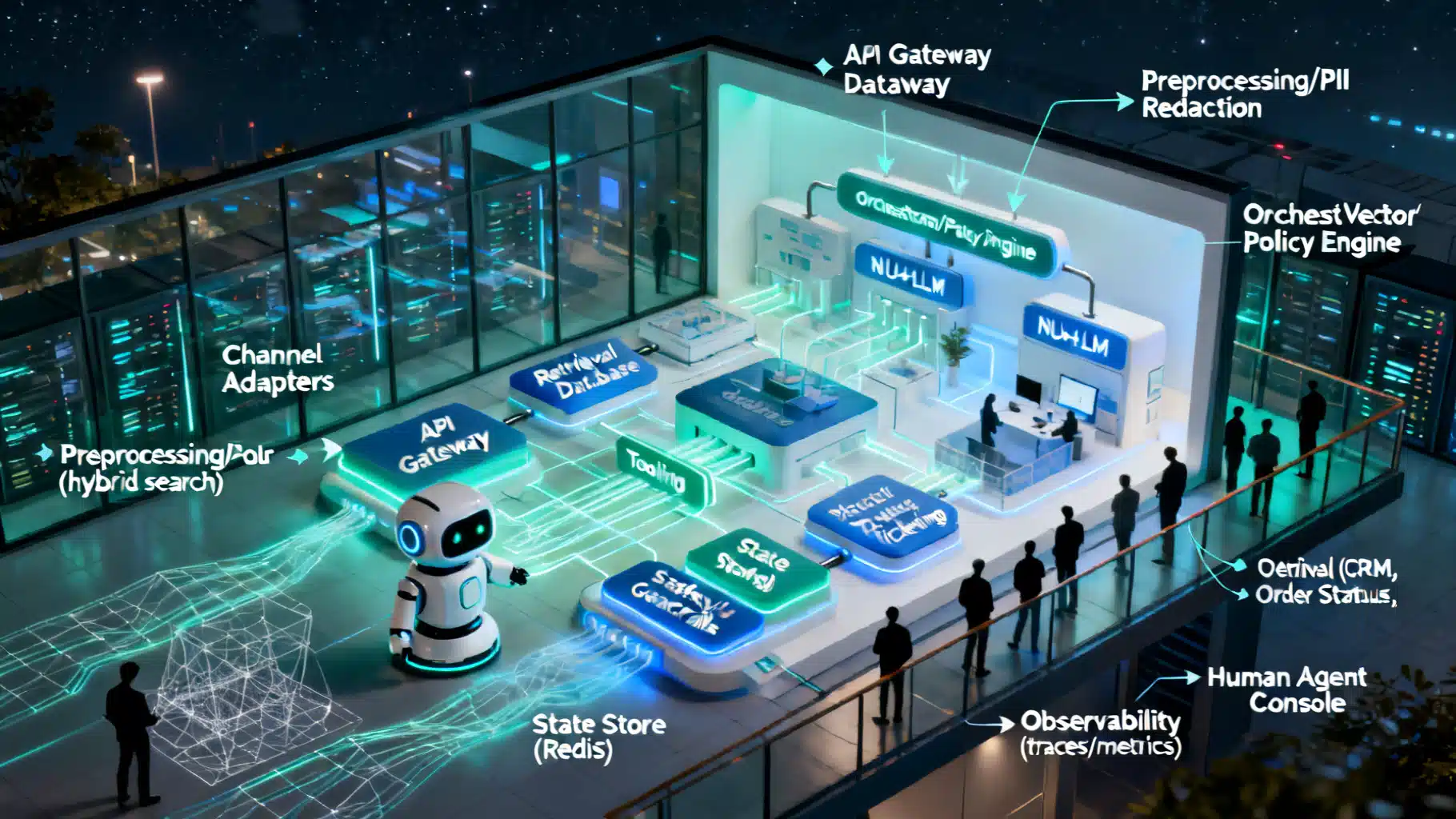

Designing a Robust AI Customer Support Chatbot Architecture

A practical blueprint for building scalable, safe AI support chatbots—from NLU and RAG to orchestration, guardrails, and observability.

The Essential Guide to Evaluating Retrieval‑Augmented Generation: Metrics that Matter

A practical, end-to-end guide to RAG evaluation metrics—from retrieval and grounding to faithfulness, relevance, and online impact.

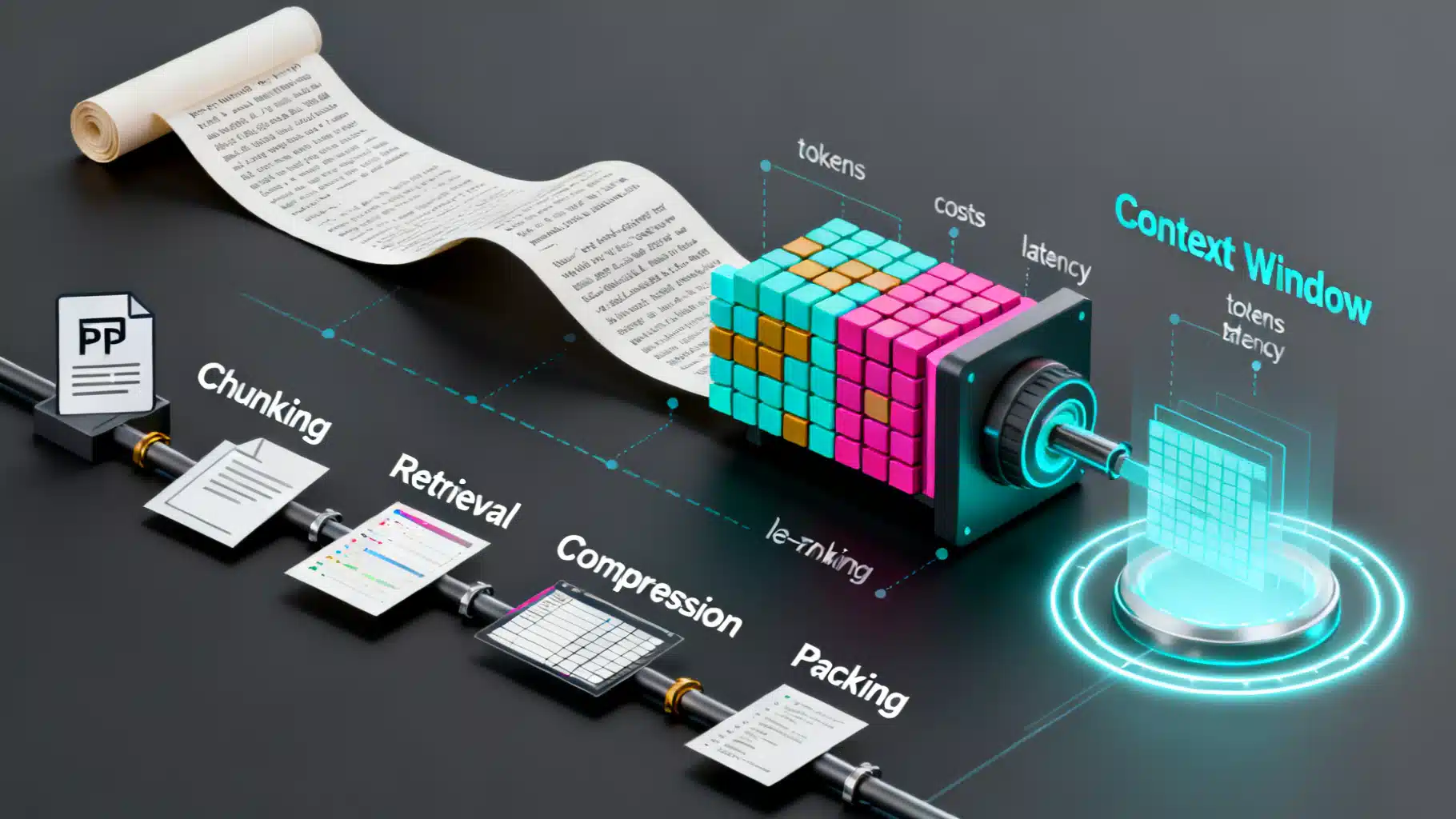

LLM Context Window Optimization: Strategies for Speed, Cost, and Accuracy

Practical strategies to optimize LLM context windows—reduce cost and latency while preserving accuracy with RAG, chunking, compression, caching, and evaluation.