Sliding Window Rate Limiting for APIs: Concepts, Trade-offs, and Production Patterns

Implement API rate limiting with a sliding window: concepts, Redis designs, Lua scripts, headers, and production pitfalls to build fair, scalable APIs.

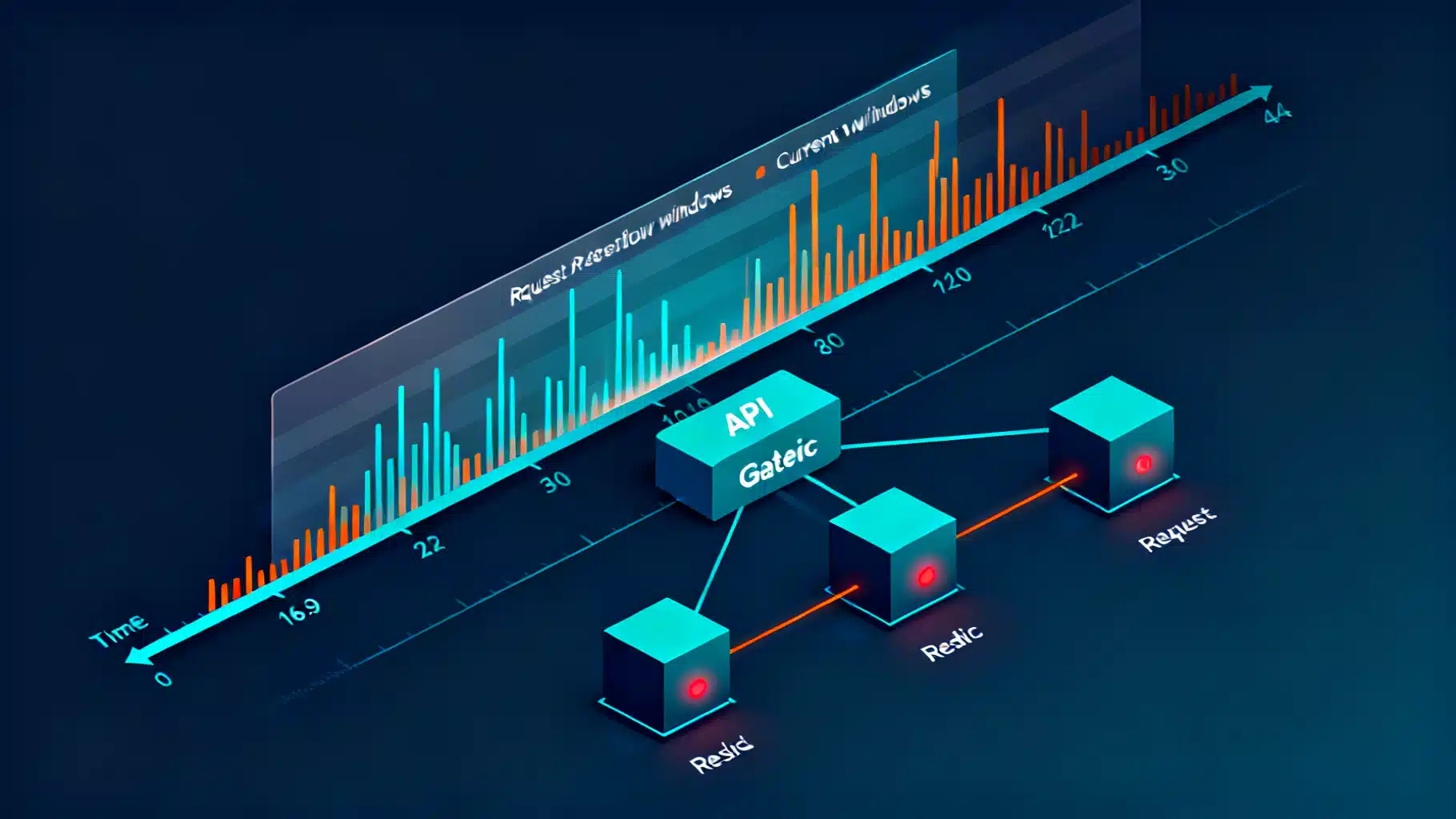

Image used for representation purposes only.

Overview

API rate limiting protects your backend from abuse and smooths traffic bursts so real users get predictable performance. Among common strategies, the sliding window family provides strong fairness with simple math and operationally friendly data structures. This article explains the sliding window approach, shows two production-ready designs in Redis, and shares practical guidance on headers, testing, and pitfalls.

Why not just a fixed window?

A fixed window limit (for example, 100 requests per minute) counts all requests in discrete, whole-minute buckets. It is simple but unfair around boundaries: a client can send 100 requests at 12:00:59 and another 100 at 12:01:01—200 requests within 2 seconds—while still “complying.” The result is burstiness and user-visible latency spikes.

Sliding window algorithms avoid boundary effects by measuring over the last N seconds at any instant, not just within integer-aligned buckets.

Two sliding window variants

There are two widely used variants. Both enforce a limit L per window W seconds.

- Sliding log (exact)

- Keep a time-ordered log (timestamps) of recent requests per key.

- On each request: drop entries older than now − W, count remaining, allow if count < L, then append the new timestamp.

- Pros: exact enforcement; smoothest behavior.

- Cons: memory grows with traffic; requires efficient pruning and atomicity.

- Sliding window counter (approximate)

- Maintain counts for two adjacent fixed buckets: the current and the immediately previous window of length W.

- Effective usage = current_count + previous_count × (1 − fraction_of_window_elapsed).

- Allow if effective_usage < L, then increment the current bucket.

- Pros: O(1) memory; very fast; easy to shard.

- Cons: slight approximation; at small W or very spiky traffic, it can be a bit more permissive.

Both variants work well in practice. Pick sliding log when you need per-request precision for tight limits. Pick sliding counter for high-throughput, large-tenant systems.

Key design and scoping

- Key granularity: choose the identity you want to protect. Common keys: user_id, API key, organization_id, IP (fallback), or route-level key (e.g., org:123:/v1/search).

- Multiple limits per key: you can enforce tiered limits (e.g., per-second and per-minute) by checking multiple windows.

- Shaping vs policing: shaping (token bucket) smooths traffic by queuing; policing (sliding window) rejects excess immediately. Most public APIs police with 429 responses and Retry-After.

Choosing W and L

- Window W: small windows (1–10s) cap bursts; longer windows (1–60m) cap sustained consumption. Many APIs combine both.

- Limit L: derive from backend capacity and fairness goals. Start with conservative values, then adjust using telemetry.

- Headroom: reserve 10–20% system headroom for retried or skewed traffic.

Redis implementation: sliding log with sorted sets

A compact, atomic design uses a Redis Sorted Set per key:

- Member: a unique request ID (e.g., ULID or a counter) or the timestamp to avoid collisions.

- Score: the request timestamp in milliseconds.

- Steps per request:

- ZREMRANGEBYSCORE key -inf (now_ms - W_ms)

- ZCARD key → current_count

- If current_count < L then ZADD key now_ms member and EXPIRE key TTL

To avoid race conditions, wrap in a Lua script so cleanup, count, and append are atomic:

-- KEYS[1] = rate key, ARGV[1] = now_ms, ARGV[2] = window_ms, ARGV[3] = limit, ARGV[4] = ttl_s, ARGV[5] = member

local key = KEYS[1]

local now_ms = tonumber(ARGV[1])

local window_ms = tonumber(ARGV[2])

local limit = tonumber(ARGV[3])

local ttl_s = tonumber(ARGV[4])

local member = ARGV[5]

redis.call('ZREMRANGEBYSCORE', key, '-inf', now_ms - window_ms)

local count = redis.call('ZCARD', key)

if count < limit then

redis.call('ZADD', key, now_ms, member)

redis.call('EXPIRE', key, ttl_s)

return {1, limit - (count + 1)} -- allowed, remaining

else

local oldest = redis.call('ZRANGE', key, 0, 0, 'WITHSCORES')

local reset_ms = 0

if oldest and oldest[2] then

reset_ms = (oldest[2] + window_ms) - now_ms

end

return {0, reset_ms} -- blocked, milliseconds until a slot frees

end

Notes

- Use Redis TIME to source server time and reduce clock skew across app nodes.

- Set EXPIRE to slightly more than W (e.g., W + 10s) so keys disappear when idle.

- Complexity: ZREMRANGEBYSCORE is O(log N + M). With pruning each request, N stays ≤ L, keeping operations fast.

Redis implementation: sliding window counter (two-bucket)

Maintain counts for buckets identified by floor(now / W). Effective usage is the linear interpolation of current and previous buckets.

Pseudocode

window = W seconds

limit = L

bucket_now = floor(now / W)

bucket_prev = bucket_now - 1

count_now = INCRBY key:bucket:bucket_now 0 # read without increment

count_prev = GET key:bucket:bucket_prev # may be nil → 0

elapsed = now - bucket_now * W

weight_prev = 1 - (elapsed / W) # in [0,1)

usage = count_now + (count_prev or 0) * weight_prev

if usage < L then

INCR key:bucket:bucket_now

EXPIRE key:bucket:bucket_now W+10

allow

else

block (compute reset as time until usage < L; usually W - elapsed)

end

In practice you’ll combine reads and writes into a single Lua script or a MULTI/EXEC pipeline for atomicity and speed. Here’s a Lua sketch returning allowed flag, remaining tokens (approx), and seconds to reset:

-- KEYS[1] = key prefix, ARGV[1] = now_s, ARGV[2] = window_s, ARGV[3] = limit, ARGV[4] = incr

local prefix = KEYS[1]

local now = tonumber(ARGV[1])

local W = tonumber(ARGV[2])

local L = tonumber(ARGV[3])

local incr = tonumber(ARGV[4]) or 1

local bucket_now = math.floor(now / W)

local bucket_prev = bucket_now - 1

local key_now = prefix .. ':' .. bucket_now

local key_prev = prefix .. ':' .. bucket_prev

local count_now = tonumber(redis.call('GET', key_now)) or 0

local count_prev = tonumber(redis.call('GET', key_prev)) or 0

local elapsed = now - bucket_now * W

local weight_prev = 1 - (elapsed / W)

local usage = count_now + count_prev * weight_prev

if usage + incr <= L then

local new_now = redis.call('INCRBY', key_now, incr)

redis.call('EXPIRE', key_now, W + 10)

-- remaining is approximate

local remaining = math.floor(L - (new_now + count_prev * weight_prev))

local reset = math.ceil(W - elapsed)

return {1, remaining, reset}

else

local reset = math.ceil(W - elapsed)

return {0, 0, reset}

end

Advantages

- O(1) space per active key.

- Naturally sharded: hash the key prefix across Redis slots/cluster nodes.

- Good enough approximation for most public APIs.

Multi-limit enforcement in one pass

Real APIs typically enforce several windows simultaneously (e.g., 10 r/s and 600 r/m). Evaluate each limit in the same script and deny if any would overflow. Return the smallest reset time and the minimum remaining across limits to inform clients.

Returning helpful response headers

When a request is limited, respond with HTTP 429 Too Many Requests and include guidance headers. Common patterns:

- Retry-After: seconds until the next attempt is likely to succeed.

- RateLimit-Limit, RateLimit-Remaining, RateLimit-Reset: emerging standard names seen across providers.

- X-RateLimit-*: legacy but still widely used.

Example (approximate names and values):

HTTP/1.1 429 Too Many Requests

Content-Type: application/json

Retry-After: 7

RateLimit-Limit: 100;w=60

RateLimit-Remaining: 0

RateLimit-Reset: 1715879652

{"error":"rate_limited","message":"Try again in 7 seconds"}

Handling distributed systems realities

- Clock skew: use Redis TIME or a trusted time source in your gateway; avoid individual app node clocks.

- At-least-once retries: idempotency keys prevent duplicate work when clients retry after backoff.

- Partial failures: decide fail-open vs fail-closed when the limiter store is unreachable. Many public APIs fail-closed for safety, but internal services sometimes fail-open to preserve SLOs.

- Multi-region: either keep per-region limits (documented to clients) or centralize counters with low-latency replication. If centralized, consider read-local, write-remote patterns and the effect on p99s.

- Hot keys: large tenants may create hotspots. Shard by sub-dimension (e.g., org:route) or use probabilistic sampling plus admission control for extreme spikes.

Observability and analytics

- Emit allow/deny metrics labeled by key, route, window, and decision.

- Track near-miss counts (e.g., remaining ≤ 5%) to understand headroom.

- Log denials with reset times to support customer success.

- Build dashboards: top limited tenants, routes with most denials, and correlation with backend latency.

Testing and tuning

- Load tests: replay production-like traffic with bursts and think-time to validate fairness.

- Fuzzing: randomize inter-arrival times; assert that any 1-minute interval never exceeds L for W=60.

- Canary rollout: start with observe-only mode—calculate limits but don’t enforce—then enable enforcement per-tenant.

- Backoff guidance: document exponential backoff with jitter to clients; provide SDK helpers.

When to prefer other algorithms

- Token Bucket: if you want to allow short bursts while capping average rate; better for smoothing uploads or streaming APIs.

- Leaky Bucket: if you need steady outflow shaping and are comfortable queuing.

- Concurrency limits: if the dominant risk is parallel in-flight requests rather than pure arrival rate.

Memory, CPU, and cost considerations

- Sliding log: memory is O(L) per active key; with L=100 and 16 bytes/timestamp, that’s ~1.6 KB per hot key. Keep W and L realistic and expire aggressively.

- Sliding counter: constant memory (two integers per active key). Very cost-efficient at scale.

- CPU: Lua scripts avoid round trips and ensure atomicity; prefer EVALSHA and keep scripts short.

Example: Node.js gateway with ioredis (sliding log)

const Redis = require('ioredis');

const redis = new Redis(process.env.REDIS_URL);

const lua = `\

redis.call('ZREMRANGEBYSCORE', KEYS[1], '-inf', ARGV[1] - ARGV[2])\

local c = redis.call('ZCARD', KEYS[1])\

if c < tonumber(ARGV[3]) then\

redis.call('ZADD', KEYS[1], ARGV[1], ARGV[4])\

redis.call('EXPIRE', KEYS[1], ARGV[5])\

return {1, tonumber(ARGV[3]) - (c + 1)}\

else\

local o = redis.call('ZRANGE', KEYS[1], 0, 0, 'WITHSCORES')\

local reset = 0\

if o and o[2] then reset = (o[2] + ARGV[2]) - ARGV[1] end\

return {0, reset}\

end`;

async function allow(reqKey, limit=100, windowMs=60000) {

const now = Date.now();

const ttl = Math.ceil(windowMs/1000)+10;

const member = `${now}-${Math.random().toString(36).slice(2)}`;

const [allowed, extra] = await redis.eval(lua, 1, `rl:${reqKey}`, now, windowMs, limit, member, ttl);

return {allowed: allowed === 1, extra};

}

Common pitfalls and how to avoid them

- Boundary denial storms: if many clients hit the limit simultaneously, they’ll all retry at the same next-second boundary. Recommend jittered backoff and include Retry-After.

- Missing expirations: without EXPIRE, your keyspace grows forever. Always set TTL ≥ W.

- Per-IP limits only: NATs and mobile networks share IPs. Combine identity-based keys with IP as a fallback.

- Silent throttling: over-restrictive limits without clear headers create support load. Be explicit with limits and resets.

- Ignoring method/route: POST /login may need tighter limits than GET /status. Use route-specific keys where risk differs.

Quick decision checklist

- Need exact fairness and small limits? Sliding log with Redis ZSET + Lua.

- Need massive scale and predictable cost? Sliding window counter with two buckets.

- Enforce multiple windows? Evaluate all in one script; deny if any fails.

- Multi-region? Prefer region-local enforcement and document region scoping, or centralize with a latency budget.

- Client experience? Return 429 with Retry-After and clear RateLimit-* headers.

Conclusion

Sliding window rate limiting offers a balanced, production-proven way to protect APIs without punishing clients at bucket boundaries. With either the exact sliding log or the lightweight two-bucket counter, you can build an efficient limiter that scales, remains fair under bursty traffic, and communicates clearly with your users. Start small, observe, then tune windows and limits to match real workloads. The result is better reliability for everyone who depends on your API.

Related Posts

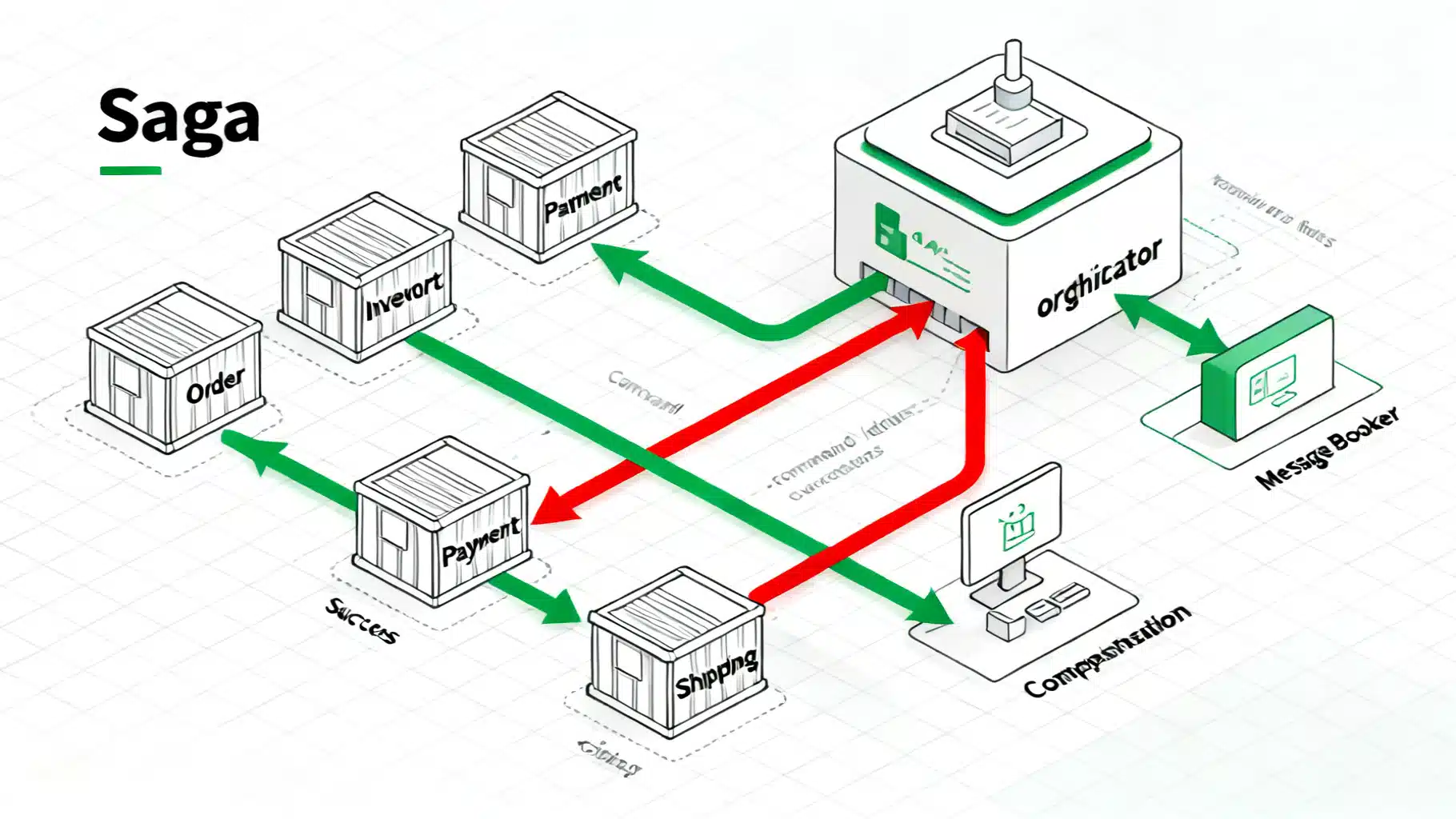

API Saga Pattern: Building Reliable Distributed Transactions at Scale

Designing reliable distributed transactions with the Saga pattern: orchestration vs choreography, API design, idempotency, compensations, and production pitfalls.

Designing API Throttling and User Tiers: A Practical Guide for Scalable Platforms

Design robust API throttling and user tier management with algorithms, policies, headers, and billing integration.

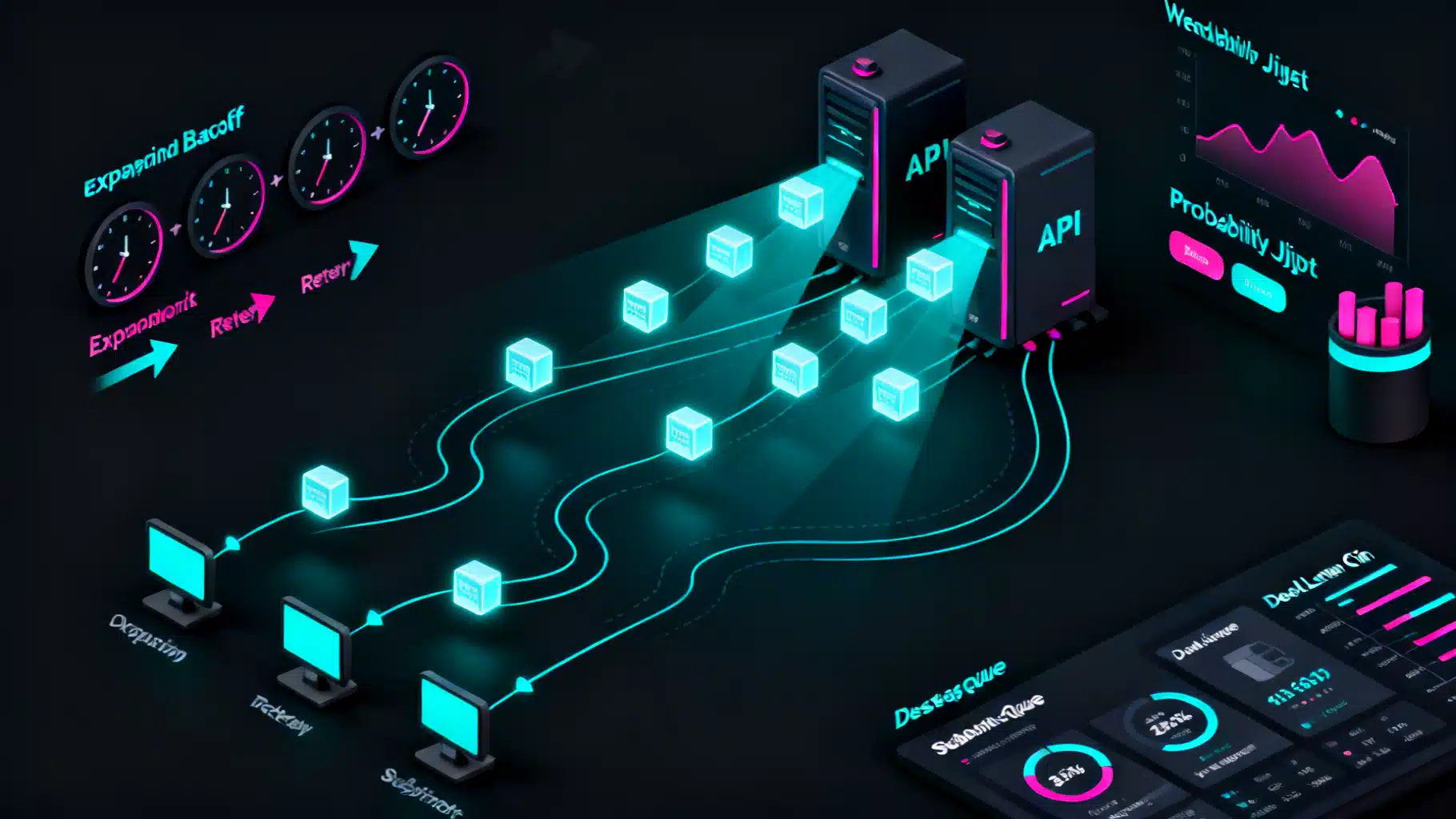

Designing resilient REST API webhook retry mechanisms

Design reliable webhook retries: backoff with jitter, idempotency, Retry-After, DLQs, security, and ops patterns for resilient REST API webhooks.